Jsson

168 posts

@jssonxia

Building scalable performance tools | Rice CS & Cornell ECE | Performance engineering + ML systems

Houston, TX · Joined June 2018

110 Following · 439 Followers

Jsson retweeted

@antlionai Bullish on AMD. Not bullish on Intel. geohot.github.io/blog/jekyll/up…

Jsson retweeted

Probably not what you want to hear but docs 😅. Actual real life examples. Better and more comprehensive kwarg docs. More helpful links to actual code not just wrapper of wrapper of wrapper code. Example code of larger apps showing best practices (style of torch titan, nanoGPT or etc). Helpful historical context if any, possibly links to useful issues. In process of my zero to hero videos I think I’ve come by ~10 examples of bad, incomplete, unhelpful or misleading docs where you just kinda have to know somehow.

Jsson retweeted

Explore Compiler Engineering path dmitrysoshnikov.com/courses/compil… + new promos

Jsson retweeted

As the author of this PDF, it's been interesting seeing people guess at the rationale behind its design. However, the rationale had nothing to do with theory vs practice, and everything to do with pragmatically coping with an unaccommodated disability in academia. (1/16)

Deedy@deedydas

Compilers was known to be the hardest CS class at Cornell, which was hard as it is. We were handed an 8-page PDF at the start of sem for a language spec we'd be implementing by the end of sem, split into 6 parts. On part 5, the median was a 0/100 and most of the class failed.

Jsson retweeted

And all transport will be fully autonomous within 50 years

Tim Urban@waitbutwhy

How people got around in 190 BC: horseback, horse-drawn carriage, sailboat 2,000 years later... How people got around in 1810: horseback, horse-drawn carriage, sailboat 160 years later... How people got around in 1970: bike, train, subway, car, bus, airplane, spaceship

Jsson retweeted

(1/4) Announcing FlashInfer, a kernel library that provides state-of-the-art kernel implementations for LLM Inference/Serving.

FlashInfer's unique features include:

- Comprehensive Attention Kernels: covering prefill/decode/append attention for various KV-Cache formats (Page Table, Ragged Tensor, etc.) for both single-request and batch-serving scenarios.

- Optimized Shared-Prefix Batch Decoding: 31x faster than vLLM's Page Attention implementation for long prompt large batch decoding.

- Efficient Attention for Compressed KV-Cache: optimized grouped-query attention with Tensor Cores (3x faster than vLLM's GQA), fused-RoPE attention, and high-performance quantized attention.

Check our blog and code at:

1. flashinfer.ai/2024/02/02/int…

2. github.com/flashinfer-ai/…

Jsson retweeted

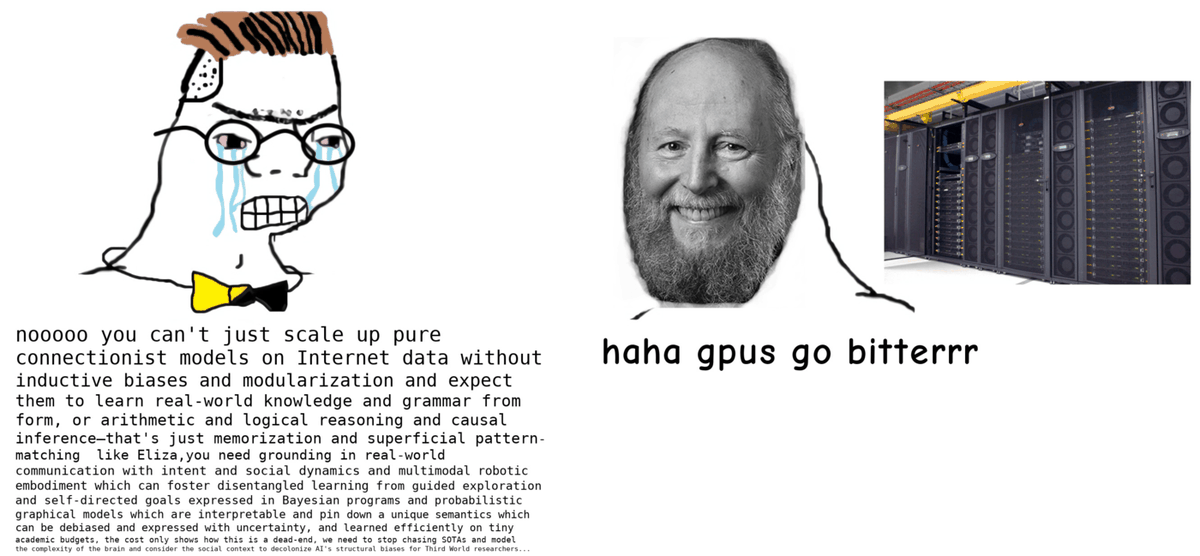

Everybody wants their models to run faster. However, researchers often cargo cult performance without a solid understanding of the underlying principles.

To address that, I wrote a post called "Making Deep Learning Go Brrrr From First Principles". (1/3)

horace.io/brrr_intro.html

Jsson retweeted

Crazy AF. Paper studies @_akhaliq and @arankomatsuzaki paper tweets and finds those papers get 2-3x higher citation counts than control.

They are now influencers 😄 Whether you like it or not, the TikTokification of academia is here!

arxiv.org/abs/2401.13782

Jsson retweeted

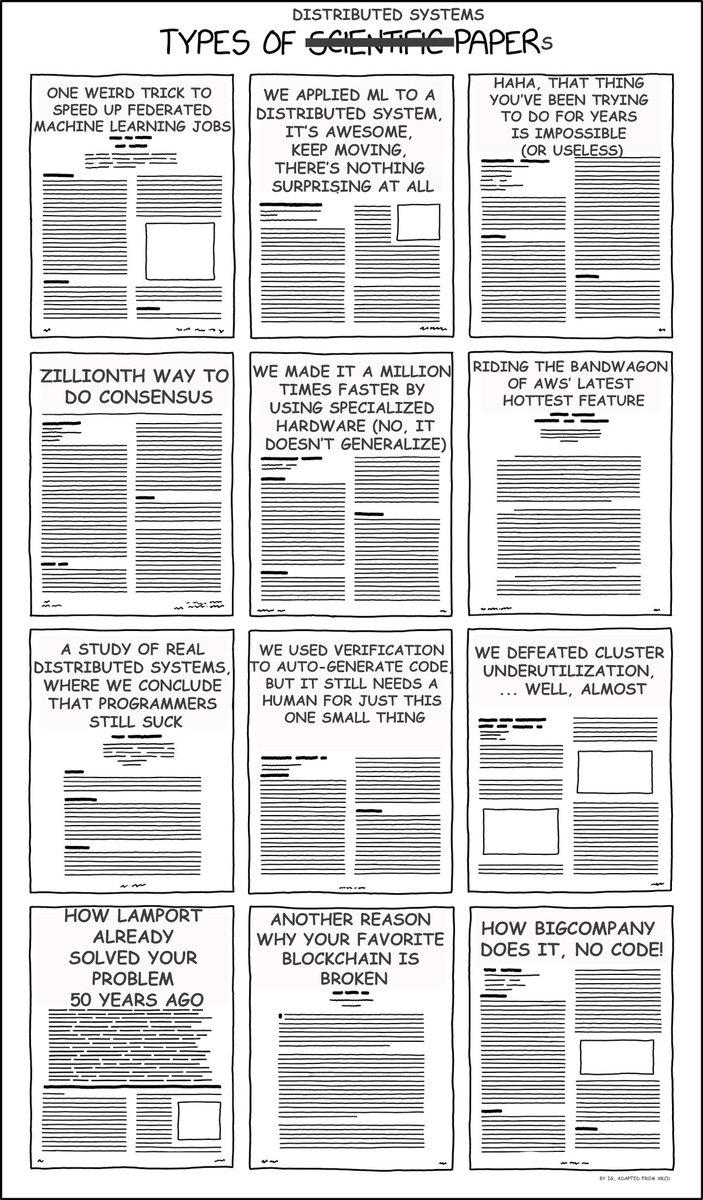

Types of Distributed Systems Papers.

Joke modeled after @xkcd 's xkcd.com/2456/

#distributedsystems #distributedsystemsjokes

Jsson retweeted

Thanks to the amazing @AndrewCMyers, video of our PL-crypto workshop is out! Check out youtube.com/watch?v=LnR-lw…

The list of talks is available here: andrewcmyers.github.io/plcrypt/

Jsson retweeted

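How much does the Apache Software Foundation spend in a year? In fiscal year 2020/2021 it spent $1.6M against revenue of $3.0M. Even excluding the largest item, the sponsorship program, conference revenue and public donations alone brought in $1.1M. Notably, revenue from public donations grew 12-fold compared with the previous fiscal year.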

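Who would like to study the development of open-source software foundations and explore the reasons behind this? 原语里弄 could set it up as a research topic.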

apache.org/foundation/doc…