JT

157 posts

@jts_14

Building apps, automations & agents with AI

NYC · Joined May 2022

326 Following · 67 Followers

JT retweeted

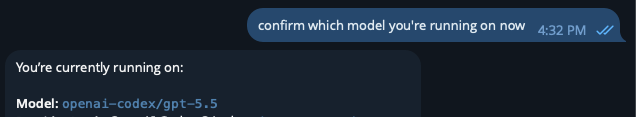

If you just switched your OpenClaw to GPT-5.5, run this prompt:

You're now running on GPT-5.5. This is a fresh opportunity to review everything with a stronger model. Do a full system review and optimization pass:

1. Self-assessment. Compare your current performance to how you operated on previous models (Gemini Flash, Kimi, Anthropic Sonnet). Be honest about what feels different — better reasoning, worse instruction following, gaps, anything. Flag any AGENTS.md or SECURITY.md rules you notice yourself struggling to follow on this model vs previous ones.

2. Full workspace file review. Re-read every bootstrap file (SOUL.md, IDENTITY.md, USER.md, MEMORY.md, AGENTS.md, SECURITY.md, TOOLS.md, HEARTBEAT.md, SKILLS.md). Flag anything that is outdated, contradictory, redundant, or referencing systems/models/configs that no longer exist. Don't fix yet — list what you find.

3. Config audit. Review openclaw.json and confirm every setting is optimal for GPT-5.5. Check: model routing and fallbacks, compaction settings, bootstrap character budget usage, context pruning, heartbeat interval, maxConcurrent, subagent concurrency, plugin configs, and lossless-claw settings. Flag anything that was tuned for a previous model and might need adjustment.

4. Cron health check. List every active cron job with: current schedule, last successful run, consecutive errors, and your honest assessment of whether it's earning its invocation cost. Flag any that are broken, redundant, or low-value.

5. Pending work inventory. List everything that was planned but not yet built or completed.

6. Optimization recommendations. Based on everything above, what would you change, consolidate, or rebuild now that you have GPT-5.5's capabilities? Are there things we overengineered for a weaker model that can be simplified? Are there capabilities we avoided because the previous model couldn't handle them reliably?

Report everything. Don't fix anything yet. I'll review and approve changes.

Forget all of this. The Codex plan with GPT-5.5 is the new GOAT.

Feels better than even the Claude Max plan.

OPENCLAW IS BACK

Oliver Henry @oliverhenry

A big mistake a lot of businesses will make with AI is confusing access with execution.

They’ll think the tech is so good that existing employees or leaders will be able to configure agents and workflows properly right away by themselves.

What they won’t realize is that optimal, lasting results come from months if not years of operational scars, edge cases, and hard-learned frameworks.

The foundational models are commodities everywhere. Taste, strategic configuration, and thousands of reps are the actual moat that many businesses won’t realize until it’s too late.

JT retweeted

The more enterprises I talk to about AI agent transformation, the more it’s clear that there is going to be a new type of role in most enterprises going forward. The job is to be the agent deployer and manager in teams. Here’s the rough JD:

This person will need to figure out which workflows on a team (either existing or new ones) offer the highest leverage, where agents can actually drive significantly more value for the team and company.

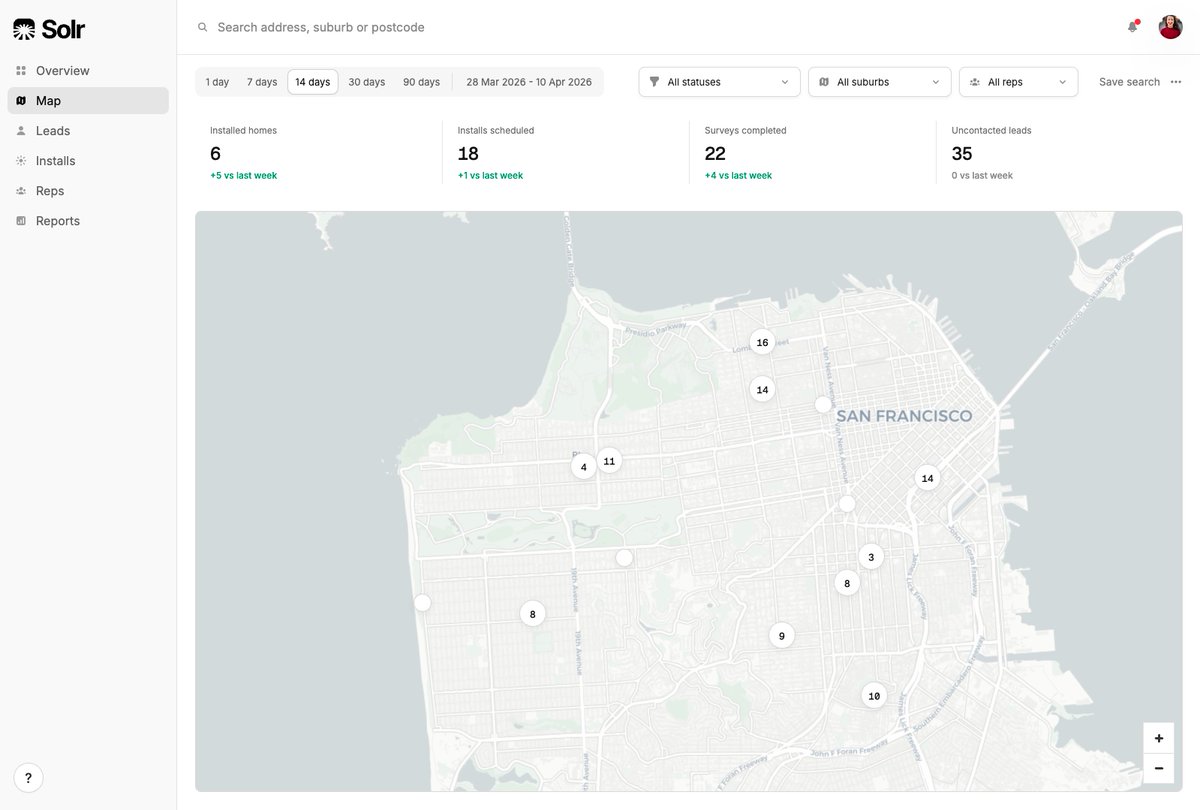

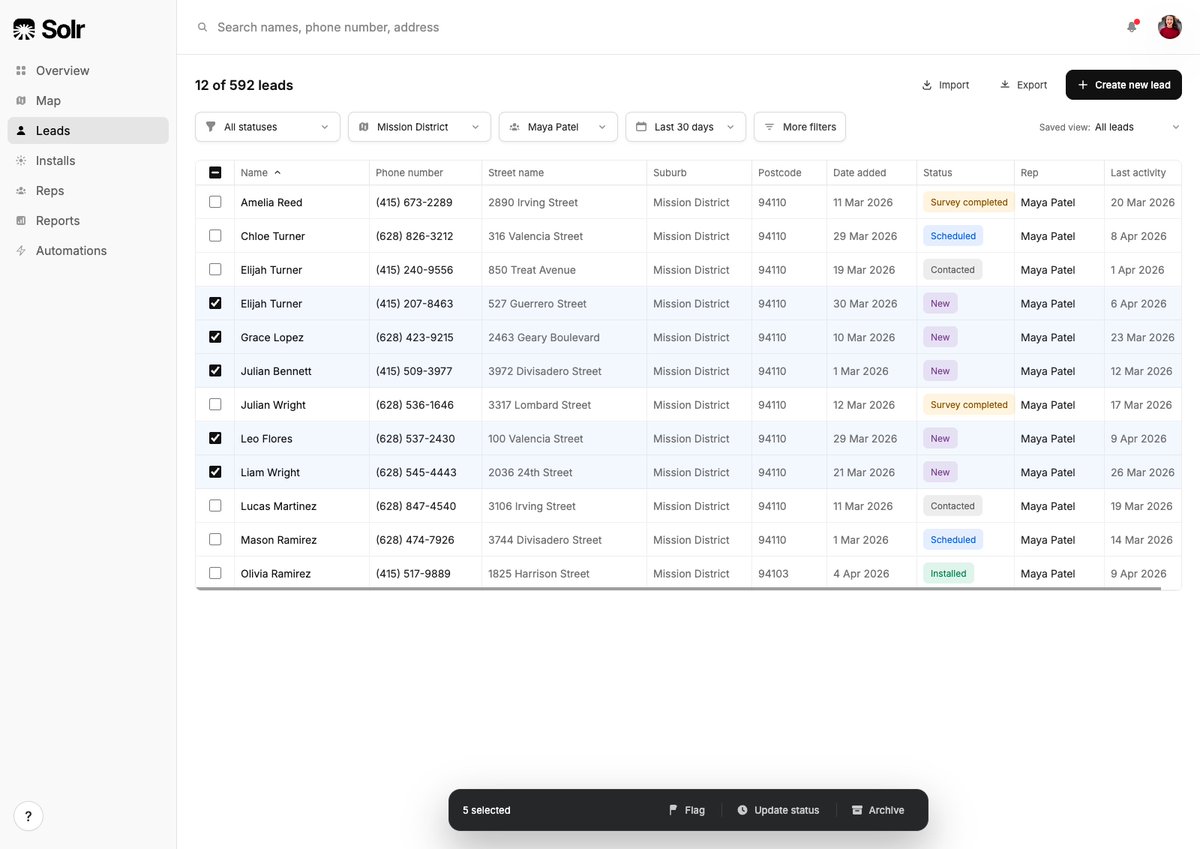

In general, it’s going to be in areas where, if you threw compute (in the form of agents) at a task, you could either execute it 100X faster or do it 100X more times than before. Examples would be processing orders of magnitude more leads to hand them off to reps with extra customer signal, automating a contract review and intake process, streamlining a client onboarding process to cut as many steps as possible, setting up knowledge bases that the whole company taps into, and so on.

This person’s job is to figure out what the future state workflow needs to look like to drive this new form of automation, and how to connect up the various existing or new systems in such a way that this can be fulfilled. The gnarly part of the work is mapping structured and unstructured data flows, figuring out the ideal workflow, getting the agent the context it needs to do the work properly, figuring out where the human interfaces with the agent and at what steps, managing evals and reviews after any major model or data change, running and managing the agents on an ongoing basis while tracking KPIs, and so on.

The person must be good at mapping the process and understanding where value could be unlocked, be relatively technical, and have full autonomy to connect up business systems and drive automation. This means they’re comfortable with skills, MCP, CLIs, and so on, and the company believes it’s safe for them to do so. They must also be great operationally and at business.

It may be an existing person repositioned, or a totally net new person in the company. There will likely need to be one or more of these people on every team, so it’s not a centralized role per se. It may roll up into IT or an AI team, or live in the function and just have checkpoints with a central function.

This would also be a fantastic job for next gen hires who are leaning into AI, and are technical, to be able to go into. And for anyone concerned about engineers in the future, this will be an obvious area for these skills as well.

JT retweeted

Peter Steinberger, creator of OpenClaw, on why AI agents still produce "slop" without human taste in the loop:

"You can create code and run all night and then you have like the ultimate slop because what those agents don't really do yet is have taste."

Peter is direct: raw capability without direction still produces mediocre output.

"They are spiky smart and they're really good at things, but if you don't navigate them well, if you don't have a vision of what you're going to build, it's still going to be slop. If you don't ask the right questions, it's still going to be slop."

Great AI-assisted work is defined by the human guiding it.

@steipete describes his own creative process when starting a new project:

"When I start a project, I have like this very rough idea what it could be. And as I play with it and feel it, my vision gets more clear. I try out things, some things don't work, and I evolve my idea into what it will become."

Most people skip this part entirely, front-loading everything into a single prompt and wondering why the result feels hollow.

"My next prompt depends on what I see and feel and think about the current state of the project."

Each step informs the next. The work itself is the feedback loop.

"But if you try to put everything into a spec up front, you miss this kind of human-machine loop. And then I don't know how something good can come out without having feelings in the loop — almost like taste."

The agentic trap is what happens when you remove yourself from the process too early.

I haven’t completely turned off my claw, still getting value from it, but the difference in its performance/capabilities ever since the Claude ban has been significant.

Way more random gateway timeouts. Less proactiveness. Doesn’t seem to be able to chain steps together nearly as well.

I too am finding myself just using the Claude desktop app for many of the things I used to have my OpenClaw do.

Josh Pigford @Shpigford

i turned off my 🦞. the past week of trying to force it to work with other models was a massive drain, and i was able to replicate the vast majority of impactful functionality with a mix of claude cowork + code.

JT retweeted

ai is exposing everyone right now & making legacy entities vulnerable af. even labs are having a tough time shipping features that feel really good end to end.

most ppl fail to realize that the hard part of building ai stuff was never access to a good model, it's the orchestration layer, the data plumbing, the taste in knowing when ai should intervene vs shut up, how it should intervene, what it should say, how it should say it, how does human in the loop work.. among many other subtle things. copilot is a prime time example of this.

most legacy players have none of these instincts cuz they built their orgs around shipping deterministic software. we are firmly in the non deterministic era now & that requires a very different skillset to build great tools.

JT retweeted