Junyi Zhang

206 posts

Junyi Zhang

@junyi42

CS Ph.D. Student @Berkeley_AI. B.Eng. @SJTU1896 CS. previous with @GoogleDeepMind, @MSFTResearch. Vision, generative model, robotics.

4D vision is particularly challenging for 3D foundation models due to the scarcity of 4D data. In SelfEvo, we ask: can a model learn purely from itself? It works remarkably well, even in scenarios where annotations are nearly impossible to obtain.

3DV Outstanding Doctoral Dissertation Award Honorable Mention goes to Songyou Peng! @songyoupeng Thesis title: "Neural Scene Representations for 3D Reconstruction and Scene Understanding" #3DV2026

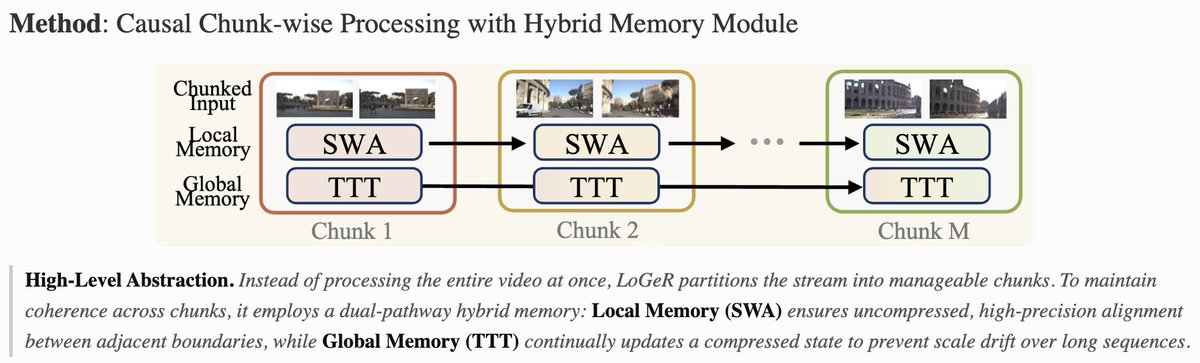

𝗢𝗻𝗲 𝗺𝗲𝗺𝗼𝗿𝘆 𝗰𝗮𝗻’𝘁 𝗿𝘂𝗹𝗲 𝘁𝗵𝗲𝗺 𝗮𝗹𝗹. We present 𝗟𝗼𝗚𝗲𝗥, a new 𝗵𝘆𝗯𝗿𝗶𝗱 𝗺𝗲𝗺𝗼𝗿𝘆 architecture for long-context geometric reconstruction. LoGeR enables stable reconstruction over up to 𝟭𝟬𝗸 𝗳𝗿𝗮𝗺𝗲𝘀 / 𝗸𝗶𝗹𝗼𝗺𝗲𝘁𝗲𝗿 𝘀𝗰𝗮𝗹𝗲, with 𝗹𝗶𝗻𝗲𝗮𝗿-𝘁𝗶𝗺𝗲 𝘀𝗰𝗮𝗹𝗶𝗻𝗴 in sequence length, 𝗳𝘂𝗹𝗹𝘆 𝗳𝗲𝗲𝗱𝗳𝗼𝗿𝘄𝗮𝗿𝗱 inference, and 𝗻𝗼 𝗽𝗼𝘀𝘁-𝗼𝗽𝘁𝗶𝗺𝗶𝘇𝗮𝘁𝗶𝗼𝗻. Yet it matches or surpasses strong optimization-based pipelines. (1/5) @GoogleDeepMind @Berkeley_AI

We present the SOTA feed-forward 3DGS pipeline Selfi, which was accepted by #CVPR2026 Project Page: denghilbert.github.io/selfi

🎮 We release VisGym: Diverse, Customizable, Scalable Environments for Multimodal Agents (w/ @junyi42 @aomaru_21490) 🌐 With 17 environments across multiple domains, we show systematically the brittleness of VLMs in visual interaction, and what training leads to. 🧵[1/8]