Surya

@kickingkeys

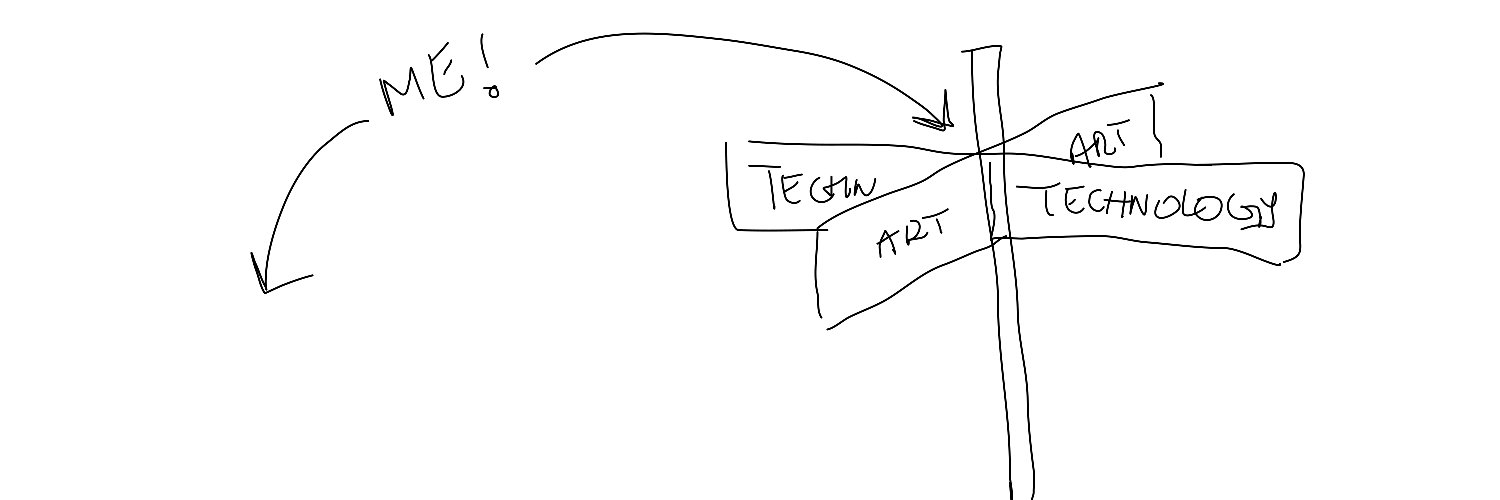

Design & other stuff @websim_ai

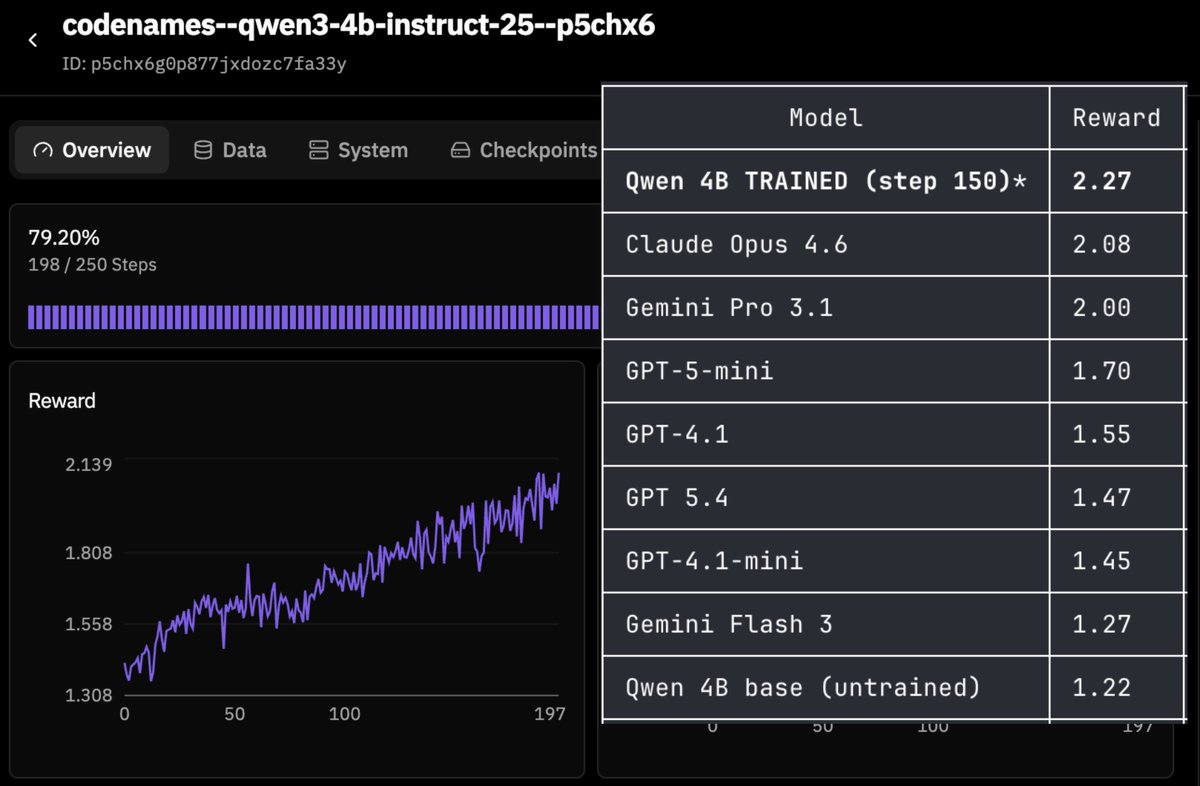

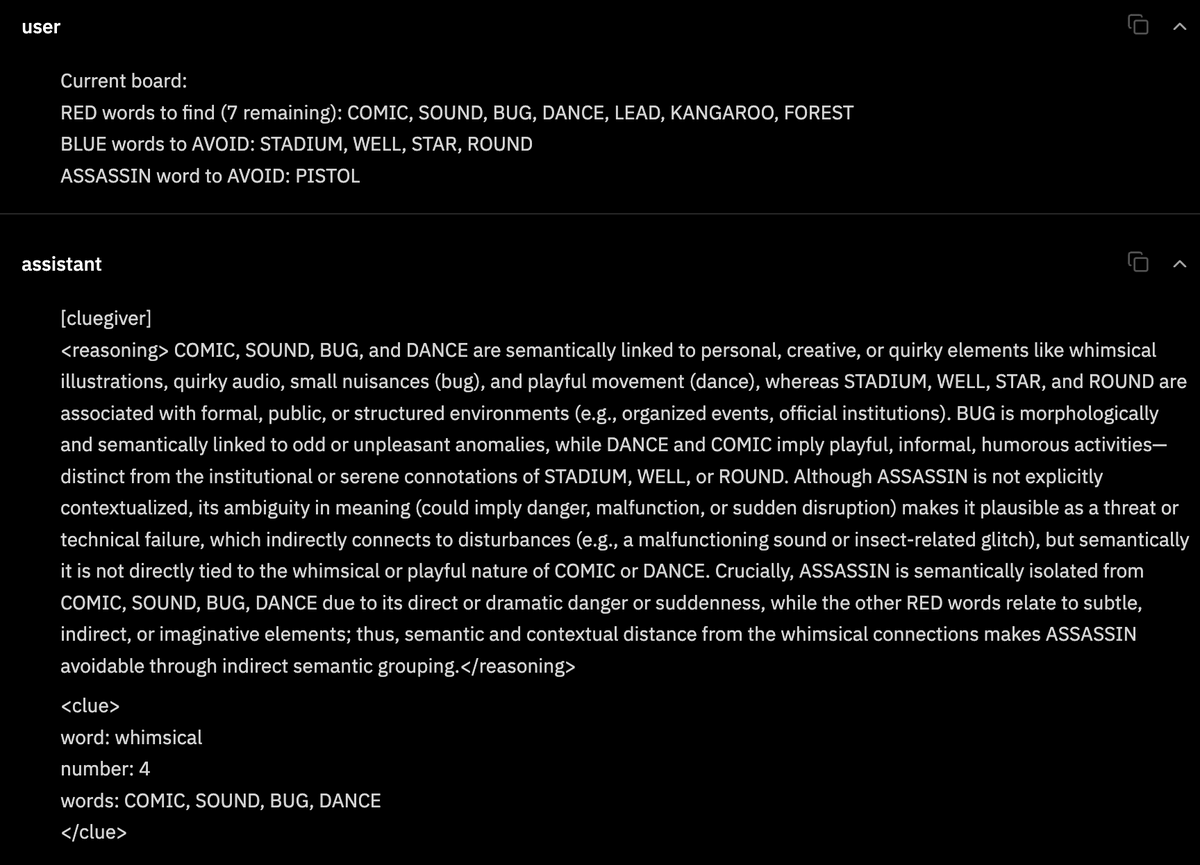

As part of @PrimeIntellect's RL residency program, I've been exploring how to do multi-agent RL using their current stack (from verifiers + prime-rl to lab experiments with hosted training/evals) and thinking about how it could be extended to support these abstractions natively. I've summarized my findings in the blog post below, and I'll leave a few comments here, too...

Uni-1 is here! A new kind of model that thinks and generates pixels simultaneously. Less artificial. More intelligent.

4th Place: @katspigeon - Terminal World Map with Live News. Satellite imagery is rendered as colored Unicode blocks in a terminal, with city names pulled from OpenStreetMap and live news headlines geocoded onto the map in real time. The geocoding pipeline is where this gets good. Each headline runs through a location resolver that knows prominent cities, regions, and the physical locations of heads of organizations like the UN and WHO. A priority system decides placement: when a headline mentions two places, the one being acted upon takes precedence. Articles compete for screen space based on country, population size, and proximity to other visible headlines. The map supports arbitrary zoom into the satellite imagery. Kat built the whole thing in a day through Hermes Agent with Opus 4.6. x.com/katspigeon/sta…
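The placement rules described in that post can be illustrated with a small greedy pass. This is a minimal sketch, not Kat's code. It assumes each headline has already been resolved to coordinates, a country, and a population. The names `Headline`, `resolve_location`, and `place_headlines`, the `"target"`/`"actor"` roles, the per-country cap, and the equirectangular projection are all hypothetical. Larger places claim screen space first. A label is dropped if it would collide with one already placed on the same or an adjacent row, or if its country has hit its quota.

```python
from dataclasses import dataclass

# Hypothetical sketch of headline placement; not the project's actual implementation.

@dataclass
class Headline:
    text: str
    lat: float
    lon: float
    country: str
    population: int  # population of the resolved place (assumed available)


def resolve_location(mentions):
    """Pick a place from (place, role) pairs, preferring the one acted upon ("target")."""
    targets = [place for place, role in mentions if role == "target"]
    candidates = targets or [place for place, _ in mentions]
    return candidates[0] if candidates else None


def project(lat: float, lon: float, cols: int, rows: int) -> tuple[int, int]:
    """Equirectangular projection from (lat, lon) to a terminal (col, row)."""
    col = int((lon + 180.0) / 360.0 * (cols - 1))
    row = int((90.0 - lat) / 180.0 * (rows - 1))
    return col, row


def place_headlines(headlines, cols=120, rows=40, max_per_country=2, max_label=30):
    """Greedily place labels, most populous first; return (headline, start_col, row)."""
    placed = []
    occupied = []      # (row, start_col, end_col) spans already claimed
    per_country = {}   # labels placed so far per country
    for h in sorted(headlines, key=lambda h: h.population, reverse=True):
        if per_country.get(h.country, 0) >= max_per_country:
            continue  # this country already used its share of the screen
        col, row = project(h.lat, h.lon, cols, rows)
        width = min(len(h.text), max_label)
        start = max(0, min(col, cols - width))  # keep the label on screen
        end = start + width
        # Proximity rule: skip labels overlapping a span on the same or adjacent row.
        if any(abs(r - row) <= 1 and start < e and s < end for r, s, e in occupied):
            continue
        occupied.append((row, start, end))
        per_country[h.country] = per_country.get(h.country, 0) + 1
        placed.append((h, start, row))
    return placed
```

A greedy pass like this is quadratic in the number of labels, which is fine for a screenful of headlines. A spatial index would only matter at much larger counts.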