Konstantin Levinski

44 posts

Konstantin Levinski retweeted

I used to think Sapiens was a great book. Sweeping, provocative, the kind of book that makes you feel like you finally understand the big picture of human history. It's on every CEO's bookshelf, assigned in universities, praised as a masterwork of synthesis. Yuval Noah Harari is treated as one of the serious thinkers of our time.

But something nagged at me. Some passages felt off. Claims that human rights are just figments of our collective imagination, not real things, just stories we tell ourselves. That nations, laws, money, and justice don't exist outside our heads. That meaning itself is a delusion we've invented to cope. That we're far more powerful than ever before but not happier. That hunter-gatherers had it better because they had no dishes to wash, no carpets to vacuum, no nappies to change, no bills to pay.

That sounded depressing to me, but perhaps it was just the realistic scientific worldview? What it meant to see the world clearly, without comforting illusions.

Then I read The Beginning of Infinity by @DavidDeutschOxf. Deutsch has a concept he calls 'bad philosophy.' Not philosophy that's merely false, but philosophy that actively prevents the growth of knowledge. Ideas that close doors rather than open them. Ideas that make problems seem unsolvable by design.

After soaking in Deutsch's framework (it's dense, a bit like digesting a delicious whale), it becomes clear: Harari's books are riddled with bad philosophy. They're smuggling nihilism in under the guise of scientific objectivity. Some examples:

On meaning: "Human life has absolutely no meaning. Humans are the outcome of blind evolutionary processes that operate without goal or purpose... any meaning that people ascribe to their lives is just a delusion."

On human rights: "There are no gods in the universe, no nations, no money, no human rights, no laws, and no justice outside the common imagination of human beings."

On free will: "Humans are now hackable animals. The idea that humans have this soul or spirit and they have free will, that's over."

On progress: "We thought we were saving time; instead we revved up the treadmill of life to ten times its former speed." The Agricultural Revolution? "History's biggest fraud." We didn't domesticate wheat, "it domesticated us."

On our cosmic significance: "If planet Earth were to blow up tomorrow morning, the universe would probably keep going about its business as usual. Human subjectivity would not be missed."

On the future: "Those who fail in the struggle against irrelevance would constitute a new 'useless class.'" Homo sapiens will likely "disappear in a century or two."

This is bad philosophy. It tells us our problems are cosmically insignificant, our solutions are illusions, and that progress is neither desirable nor within our control. It's also perfect nonsense. No one would ever go back to being hunter-gatherers. Would you rather worry about your kid spending too much time on Roblox, or face the 50% chance she won't reach puberty?

And our so-called "fictions"? They ended slavery. They gave women equal rights. They solved hunger. They eradicated smallpox. They turned sand into computer chips. They got us to the moon, and hopefully soon, to Mars and beyond. These "fictions" are already reshaping the universe, and over time they may become the most potent force in it.

Now compare Deutsch:

"Humans, people and knowledge are not only objectively significant: they are by far the most significant phenomena in nature."

"Feeling insignificant because the universe is large has exactly the same logic as feeling inadequate for not being a cow."

"Problems are soluble, and each particular evil is a problem that can be solved."

"We are only just scratching the surface, and shall never be doing anything else. If unlimited progress really is going to happen, not only are we now at almost the very beginning of it, we always shall be."

Where Harari sees a species of deluded apes stumbling toward obsolescence, Deutsch sees universal explainers, the only entities we know of capable of creating explanatory knowledge, solving problems, and potentially seeding the universe with intelligence.

The difference isn't academic. Ideas shape action. If you believe life is meaningless, progress is a trap, and humans are hackable animals with no free will, how does that affect what you build? What you fight for? What you teach your children?

Harari's books sell because they flatter a fashionable pessimism. They let readers feel sophisticated for seeing through the "delusions" everyone else lives by. That smug cynicism is corrosive. And it's everywhere: in schools, in media, in bestselling books. More than half of young adults now say they feel little to no purpose or meaning in life. This is what happens when you teach an entire generation bad philosophy. Less progress, less health, less wealth. Less flourishing. And ultimately, a higher chance that civilization and consciousness go extinct.

Fortunately, there's another equally well-written, but much truer, account of Homo sapiens, appropriately titled 'The Beginning of Infinity'. And this one smuggles no despair in by the back door. But let's give Harari credit where it's due. He is right about one thing: if planet Earth blew up tomorrow, we wouldn't be missed. Because there'd be no one left to miss us, just a careless universe, blindly obeying physical laws. We are the only ones who can miss, but we're not going to. We're going to aim, hit, and keep going.

Full credit for the amazing meme to @Ben__Jeff

Konstantin Levinski retweeted

.@DavidDeutschOxf: As a species, or as a civilization or civilizations, we face problems, severe problems, dangers, all the way up to existential dangers. Some literally in a sense of extinction level, others causing suffering and tragedy on such a scale that they merit at least as much consideration as literal extinction. We always have faced such dangers.

We always will. Perhaps you're thinking: if that's true, then we're doomed, because, given that each danger has a non-zero probability of doom, then sooner or later... But no, that's a fallacy, one of the many that one can easily get sucked into when trying to apply game theory and probabilities to situations in which knowledge and ignorance are the important determinants of what will happen. Those infinitely many probabilities are not immutable.

As our knowledge grows, some of them fall. Our job is to make that infinite series of bad probabilities converge to a negligible value. Simple.
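The convergence point can be illustrated with a rough numerical sketch. It assumes, purely for illustration, that the dangers are independent and that each has a fixed per-danger probability of doom; the specific numbers (1% per danger, halving each time) are hypothetical and are not from the talk. If the probabilities never fall, survival across many dangers tends to zero. If they shrink fast enough that their sum converges, the overall chance of survival stays close to one.

```python
import math


def survival_probability(risks):
    """Chance of surviving every danger in `risks`, assuming independence.

    Computes the product of (1 - p) over all p, via logs for numerical stability.
    """
    return math.exp(sum(math.log1p(-p) for p in risks))


N_DANGERS = 10_000  # hypothetical number of successive dangers

# Case 1: knowledge never grows, so every danger keeps a 1% chance of doom.
constant_risks = [0.01] * N_DANGERS

# Case 2: knowledge grows, so each danger is half as likely to be fatal
# as the one before it. The probabilities sum to just under 0.02.
shrinking_risks = [0.01 * 0.5 ** k for k in range(N_DANGERS)]

p_constant = survival_probability(constant_risks)
p_shrinking = survival_probability(shrinking_risks)

print(f"constant risks:  survival = {p_constant:.3e}")
print(f"shrinking risks: survival = {p_shrinking:.4f}")
```

In the first case survival is effectively zero; in the second it stays at roughly 98%, however many more dangers are added to the series.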

Konstantin Levinski retweeted

OpenAI’s o3 model for laypeople

What it is and why it’s important

What

> o3 is an AI language model that, under the right set of circumstances, can solve PhD-level problems

It's Smart

> it’s a big deal because it has

a) effectively solved ARC-AGI, a picture-puzzle IQ test similar to Raven’s matrices, which is what Mensa uses

b) solved 25% of FrontierMath, a set of difficult grad-student-level math questions

There is no wall

> it’s also a really big deal because OpenAI only introduced its last o1 model 3 months ago. This means they reduced the cycle time to 3 months from 18 months

> Intel used to have a tick (chip die shrink) tock (architecture change) cycle during the height of Moore’s law.

OpenAI now effectively has a tick (new Nvidia chip training data center) 4 tocks (new chains of thought) cycle.

> This means potentially 5 (!) step ups in capability next year.

The machine that builds the machines

> OpenAI is also using its current generation of models to build its next generation

> The OpenAI staff themselves are somewhat bewildered by how well things are working

Fast, cheap models every tock

> OpenAI also introduced an o3-mini model which is small and fast and capable.

> Notably it was as capable as the much slower o1 full model.

> This means that every 3 months you can look forward to a cheap fast model as good as the smartest state of the art super genius model 3 months before that.

Reliability

> one big barrier to AI deployment has been hallucination and reliability.

> The o1 model had early indications of much higher reliability (in one test, it refused 100% of the time to be tricked into giving up passwords to users).

> We don’t have a sense of how well the o3 models perform yet… but if this has been solved you will start seeing these models in service work next year…

By end 2025 (speculation)

> superhuman mathematician and programmer available at moderate prices

> reliable assistant for hotel booking, calendar management, passwords, general computer use

What will a superhuman mathematician/programmer do?

> Everywhere you use an algo, it will get better

> jump from 5G to 10G in cell phones

> credit default costs across economy will drop, leading to credit becoming much much cheaper. 0% interest rates for some, no credit for others

> search costs across economy drop: hotels, airlines, dating…

> quantitative trading will better allocate capital, more good ideas financed, fewer bad ideas funded

And then you get to 2026…

@burkov ChatGPT's ability to tailor replies to my specific context without my reminding it is super helpful to me. I guess that's the rationale for the feature.

All humans in the world can fit in a 20 km square 🤯. That would be quite a world concert. waitbutwhy.com/2015/03/7-3-bi…
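A back-of-the-envelope check of that figure, as a sketch only: the population of 7.3 billion (roughly the 2015 world total) and the packing density of 10 people per square metre are assumptions for illustration, not numbers stated in the tweet.

```python
import math

POPULATION = 7.3e9         # assumed world population, roughly the 2015 figure
CROWD_DENSITY_PER_M2 = 10  # assumed very tight, shoulder-to-shoulder packing


def square_side_km(population, people_per_m2):
    """Side length (km) of a square that holds `population` at the given density."""
    area_m2 = population / people_per_m2
    return math.sqrt(area_m2) / 1000.0


def density_needed(population, side_km):
    """People per square metre required to fit `population` in a square of `side_km`."""
    side_m = side_km * 1000.0
    return population / side_m ** 2


side = square_side_km(POPULATION, CROWD_DENSITY_PER_M2)
density = density_needed(POPULATION, 20)

print(f"side at 10 people/m^2: {side:.1f} km")           # about 27 km
print(f"density for a 20 km square: {density:.2f}/m^2")  # about 18 people per m^2
```

Under these assumptions the square comes out nearer 27 km on a side, and a 20 km square would need about 18 people per square metre, so the tweet's figure is best read as an order-of-magnitude estimate.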

Konstantin Levinski retweeted

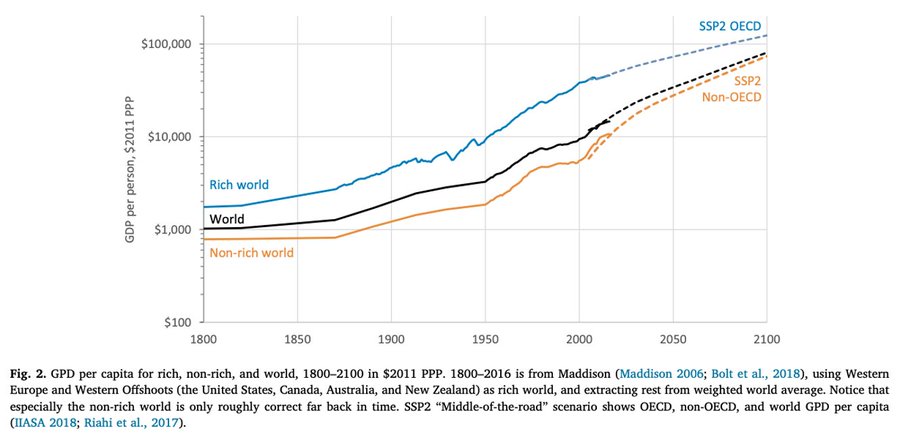

The world is improving – not just for the rich, but also especially for the world’s poor.

Read my peer-reviewed article:

sciencedirect.com/science/articl…

Ya know, watching the mass protests, the violence, the antisemitism, Israelis and Jews around the world are very worried and very scared.

We feel very alone. It feels like everyone is turning against us.

The thing is, in my head, I know that’s not true.

But in my heart, it feels that way.

For my own sanity, I’m curious how many of you, the people reading this tweet, stand unequivocally with Israel and our right to defend ourselves and the world from Hamas.

If you fall under the category and are 100% with us in this dark time, may I trouble you to just reply to this tweet with a “me”, or a 🇮🇱?

This is for me but it’s also for the millions of Jews around the world who feel deeply alone.

I’d love to show this to them and show them that we’re absolutely not alone.

A simple “me” or 🇮🇱 would be highly appreciated.

🙏

Konstantin Levinski retweeted

Collective amnesia about technophobia is ENDEMIC.

‘It is different this time’ is NOT an excuse to ignore history.

Encryption panic threatened privacy.

GMO panic killed millions through malnutrition.

Nuclear panic delayed a critical carbon-free future.

.

Andrew Ng (@AndrewYNg):

This is a great website that documents, via news clippings, the alarm over many beneficial innovations, such as electricity, bicycles, elevators, and radio: pessimistsarchive.org. After the current wave of alarm over AI human extinction dies down, I wonder if statements about that will be featured on this website too?

@dan_abramov As in, if GoDaddy explodes to the point where transfer is not a thing any more?

@AntonJamesMuse @CoryMillsFL @RepThomasMassie @RepMTG You know that Russia is delivering cluster munitions over Ukraine day in day out, right?

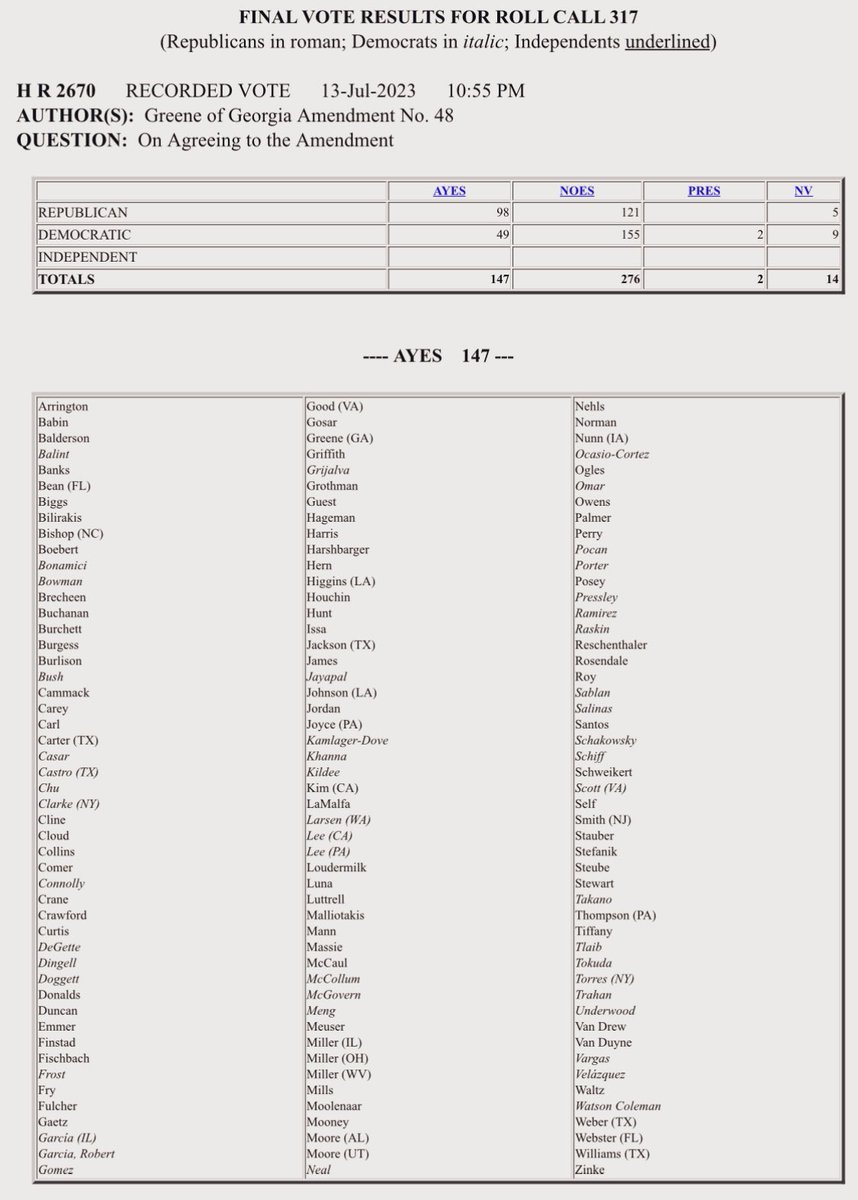

98 Republicans and 49 Democrats voted to prohibit the sale and transfer of cluster munitions to Ukraine.

Unfortunately the measure did not pass. Here is the roll call: clerk.house.gov/evs/2023/roll3…

@paulg There is an underlying assumption here that AI will have a concept of "wanting to outsmart". The assumption comes from observing nature, where outsmarting is crucial to survival. Neither AI nor AGI has any need to outsmart anyone.

@EMostaque Why is AGI specifically seen as a threat to the concept of democracy, and not, for example, to the concept of marriage or parenthood? All of them can be said to be subverted by skewed messaging.

AGI is often feared as a potential threat to humanity, with concerns that it will become so intelligent that it will enslave us. However, this fear is based on the assumption that greater intelligence always subjugates less intelligent entities.

This assumption is derived from the natural evolution of intelligence in creatures such as mice, lions, cats, and flies, where intelligence is developed through competition and fear. This type of intelligence is the kind that we observe in nature, and it has led us to believe that great intelligence will always subjugate the rest.

However, AGI is a completely different kind of intelligence, and its usefulness is entirely dependent on the beholder's interpretation. For example, ChatGPT may output words that make no sense to anyone, but if it outputs a poem or a piece of code, the usefulness and niceness of that poem or code depend on the person who reads or uses it. Therefore, there is no inherent concept of competition or subjugation in AGI.

Even for an AGI that is clearly smarter than all people, that can solve all mathematical problems and come up with new theories and the best code, its output will still require human interpretation and will not have the concept of subjugating anything from a human perspective.

The more people the planet has and the more freedom they enjoy, the greater the likelihood that new, useful ideas will be generated to tackle our problems.

Full video: youtu.be/RrizO-i9WFU
