Pinned Tweet

Naveesh /wtf

756 posts

@krapstarr

Indian firmware in an American operating system

Joined December 2017

585 Following 0 Followers

@MichaelGannotti @krapstarr @ollama I understand, but as they are the privacy choice and that’s their origin story, they really need to say it, not let us assume.

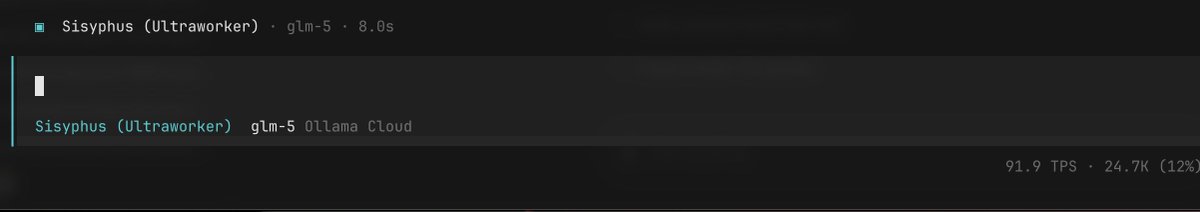

🦞Ollama's cloud is one of the best places to run OpenClaw.

$20 plan is enough for most day to day OpenClaw usage with open models!

To make the switch, all you need is to open the terminal and type:

ollama launch openclaw

Choose a model:

kimi-k2.5:cloud

glm-5:cloud

minimax-m2.7:cloud

If you are affected, Ollama welcomes you!! ❤️

The Verge@verge

Anthropic essentially bans OpenClaw from Claude by making subscribers pay extra theverge.com/ai-artificial-…

@OfficialLoganK @GoogleAIStudio Awww it's like when my dad comments on my posts

@roger @MichaelGannotti @ollama Yeah I have asked several times and no answer which means it’s being routed elsewhere

@krapstarr @MichaelGannotti @ollama I’m assuming so; that’s been their model. OpenRouter just routes, Ollama hosts. Maybe they can comment and we find out for sure.

@JustinGorya @1kartikkabadi1 @ollama They need to be more open about how they are handling these things

Open-source models need a license. Nobody knows exactly which model Ollama is hosting. Obviously, for that speed, it needs adjustments and can't be the base model. So, how heavily quantized is it? Is it fine-tuned? Distilled? These are all open questions, and nobody can answer them. ZAI, MiniMax, and Moonshot should create something like a 'Certified and Trusted Partner' license. That way, we would know which provider uses the full power of the raw model

@ollama I am paying for it so I am surprised potentially MiniMax 2.7 is being sent somewhere else …

@ollama Your answers are not adding up. What is your privacy policy?

@John44893657552 @roger @MichaelGannotti @ollama Agreed. Minimax also doesn’t count towards quota…@ollama care to comment?

@roger @krapstarr @MichaelGannotti @ollama I am curious how their minimax 2.7 is private since the open source hasn't dropped yet. I put some personal data there, so hopefully it really is zero retention, full privacy

@MichaelGannotti @ollama @ollama does any of the data get routed to other providers or is it all in-house in US?

@ollama The $20 monthly plan is awesome and $200 annual plan even better! Kimi K2.5 on Ollama powering OpenClaw is a great experience!

What made it shrink like that x.com/NASA/status/20…

NASA@NASA

1972 ➡️2026 Apollo 17 ➡️ Artemis II

@AlexFinn There are all people who think OpenClaw is the most important software release of our lifetime!

@GaryMarcus @DKokotajlo I am unemployed and have a lot of time on my hands. You’re employed and you should be teaching and not trying to correct random strangers on the Internet. You will have more of an impact on all those kids than me.

@krapstarr @DKokotajlo see if you can pull yourself above level 2 here, and come back if you can

Gary Marcus@GaryMarcus

Periodic public service announcement, which unfortunately seems all too necessary in this joint: Always aspire to the top, not the bottom, of @paulg’s beautiful pyramid of argumentation:

LMAO. Last year most of Silicon Valley was predicting AGI in 2027 (which, spoiler alert, ain’t gonna happen).

Now the most prominent former advocate of AGI in 2027, @DKokotajlo, says 2029, and Grok writes it up as “AI forecasters shorten timelines for AGI”, when on net Daniel has moved his mean projection back by two years.

@eli_lifland is at 2033, but the headline kind of obscures that, too.

Even @grok knows that panic sells; nuance doesn’t.

@karpathy I am not sure I see the use case unless the data wasn't already in the training corpus...no?

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.
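As a rough illustration of the backlink step described above, here is a minimal Python sketch. It is not the author's tooling: it assumes the wiki uses Obsidian-style `[[Page]]` links and that pages are already loaded into a dict of name to markdown text. The names `WIKILINK` and `build_backlinks` are hypothetical.

```python
import re
from collections import defaultdict

# Assumption: links look like [[Page]], [[Page|alias]] or [[Page#section]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:#[^\]|]*)?(?:\|[^\]]*)?\]\]")


def build_backlinks(pages):
    """Map each linked page name to the sorted list of pages that link to it."""
    backlinks = defaultdict(set)
    for source, text in pages.items():
        for match in WIKILINK.finditer(text):
            target = match.group(1).strip()
            if target != source:  # ignore self-links
                backlinks[target].add(source)
    return {target: sorted(sources) for target, sources in backlinks.items()}
```

In a setup like the one described, an LLM would write the pages and a helper of this kind could regenerate the backlink sections after each compile pass.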

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki, I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.
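The index files with brief summaries mentioned above could look something like the following sketch. It is only a guess at one possible shape: it assumes each page's first non-heading line works as its summary, and `first_summary_line` and `build_index` are hypothetical names, not anything from the post.

```python
def first_summary_line(text):
    """Return the first non-empty line that is not a markdown heading."""
    for line in text.splitlines():
        stripped = line.strip()
        if stripped and not stripped.startswith("#"):
            return stripped
    return ""


def build_index(pages, max_chars=120):
    """Render a markdown index with one bullet per page, sorted by name."""
    lines = ["# Index", ""]
    for name in sorted(pages):
        summary = first_summary_line(pages[name])[:max_chars]
        lines.append(f"- [[{name}]]: {summary}" if summary else f"- [[{name}]]")
    return "\n".join(lines) + "\n"
```

An agent can read a compact index like this first and then open only the pages that look relevant, which may be why plain file reads are enough at this scale.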

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.
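The health checks described above are LLM-driven, but one purely mechanical check can be sketched without a model: finding links that point at pages that do not exist. This is an illustrative sketch only, assuming Obsidian-style `[[Page]]` links and an in-memory dict of pages; `find_broken_links` is a hypothetical name.

```python
import re

# Assumption: a link target is the text after "[[" up to "]", "|" or "#".
LINK = re.compile(r"\[\[([^\]|#]+)")


def find_broken_links(pages):
    """Return {source page: sorted missing targets} for links to unknown pages."""
    known = set(pages)
    report = {}
    for source, text in pages.items():
        targets = {m.group(1).strip() for m in LINK.finditer(text)}
        missing = sorted(targets - known)
        if missing:
            report[source] = missing
    return report
```

The missing targets double as a list of article candidates that could be handed back to the LLM.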

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.
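A "small and naive search engine" of the kind mentioned above might be as simple as term-count scoring. The sketch below is an assumption about what such a tool could look like, not the author's code; `tokenize` and `search` are hypothetical names, and pages are assumed to be a dict of name to markdown text.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def search(pages, query, top_k=5):
    """Rank pages by how often the query terms occur; ties break by name."""
    terms = tokenize(query)
    scored = []
    for name, text in pages.items():
        counts = Counter(tokenize(text))
        score = sum(counts[term] for term in terms)
        if score > 0:
            scored.append((score, name))
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:top_k]]
```

Wrapping `search` in a small command-line script would let an LLM agent call it as a tool, in the spirit of the CLI handoff described above.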

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

Naveesh /wtf retweeted