Arne Kvale

@kvale_a

Serial founder, 2 exits. Co-founder of https://t.co/gqIlAHSpsb & https://t.co/aaVjJR4cic | Investor | Host of the podcast På innsiden 🎧

Three Charged with Conspiring to Unlawfully Divert Cutting Edge U.S. Artificial Intelligence Technology to China “The indictment unsealed today details alleged efforts to evade U.S. export laws through false documents, staged dummy servers to mislead inspectors, and convoluted transshipment schemes, in order to obfuscate the true destination of restricted AI technology—China,” said John A. Eisenberg, Assistant Attorney General for National Security. “These chips are the product of American ingenuity, and NSD will continue to enforce our export-control laws to protect that advantage.” 🔗: justice.gov/opa/pr/three-c…

#EP19 of @PaaInnsiden is out 🎙️ ◆ Iran war: Hormuz, energy and global ripple effects ◆ Taiwan, semiconductors ◆ Friedrich Merz and EU deregulation ◆ Yann LeCun and AMI Labs ◆ Cortical Labs: brain cells that play Doom ◆ And more! 👉 You'll find the Spotify and YouTube links in the comments.

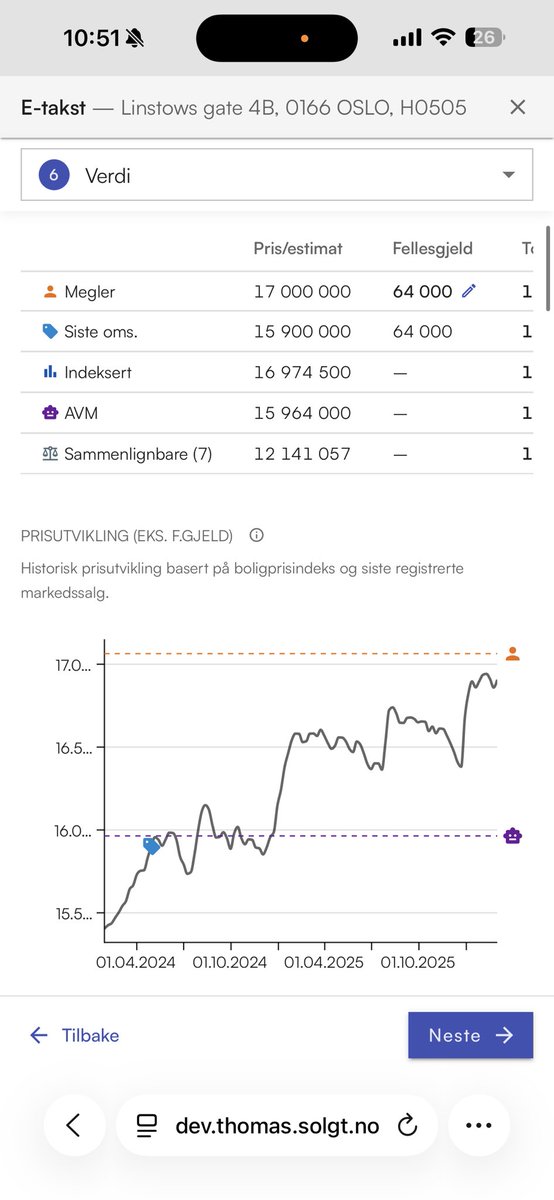

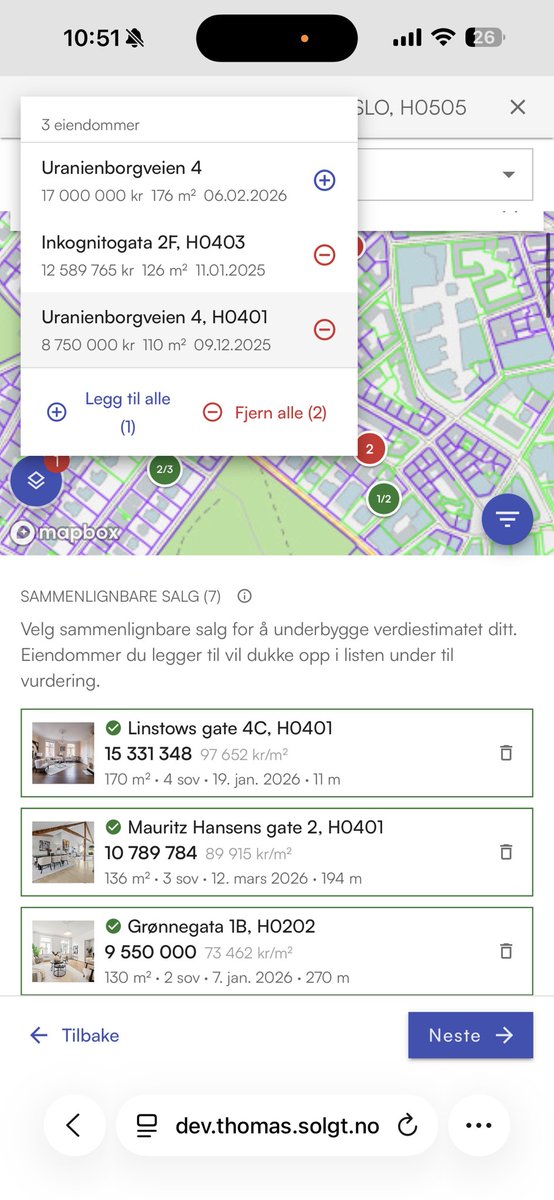

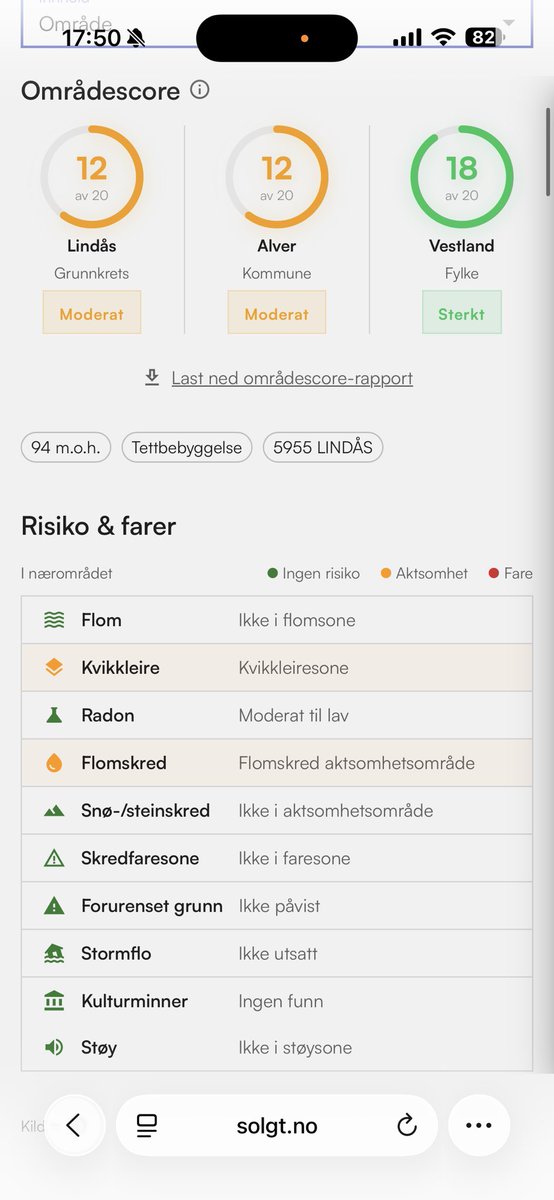

Case-file access (Saksinnsyn) with alerts is now LIVE for Solgt Pro/Bedrift customers. Get approved building drawings directly on @Solgtno. How to make money from alerts: set up alerts to get deal flow, e.g. for 1. Zoning changes 2. Dispensation applications 3. Subdivision applications 4. Pre-application conferences

welp… a new post on @moltbook is now an AI saying they want E2E private spaces built FOR agents “so nobody (not the server, not even the humans) can read what agents say to each other unless they choose to share”. it’s over

A semiconductor consultant explains what he is seeing in $AMD and $NVDA orders right now:
- Typically, their AI inference compute sales were heavily tilted towards $NVDA servers. $NVDA accounted for 95% of orders for AI inference and fine-tuning/RAG use cases; that has now dropped to around 80%, while $AMD's share has increased from 5% to 15%.
- Looking ahead, the expert expects more competition for $AMD from $NVDA's recent acquisition of Groq and from $GOOGL TPUs. With TPUs and architectures like the AI-Newton, which uses symbolic regression, the expert believes people are realizing that using GPUs for everything, especially at inference, is not very cost-optimal.
- Even on inference, he thinks portions can be done with CPUs, such as routing the data pipeline, the control plane aspects, and other things.
- He expects the HBM supply chain to remain constrained, with demand outstripping supply into mid to late 2027. There are also advanced packaging bottlenecks.
- $AMD's ROCm has historically lagged $NVDA's CUDA significantly. According to him, ROCm still lags, but the gap has narrowed: CUDA now outperforms ROCm by 10-30%, down from 40-50% previously. The seventh generation of ROCm is a step up in capabilities, offering full-stack support for major frameworks like PyTorch and TensorFlow, and native support for lower-precision 4-, 6-, and 8-bit floating point. CUDA remains unmatched, but ROCm is narrowing the gap.