Kyle Duffy

Today we're introducing TRIBE v2 (Trimodal Brain Encoder), a foundation model trained to predict how the human brain responds to almost any sight or sound. Building on our Algonauts 2025 award-winning architecture, TRIBE v2 draws on 500+ hours of fMRI recordings from 700+ people to create a digital twin of neural activity and enable zero-shot predictions for new subjects, languages, and tasks. Try the demo and learn more here: go.meta.me/tribe2

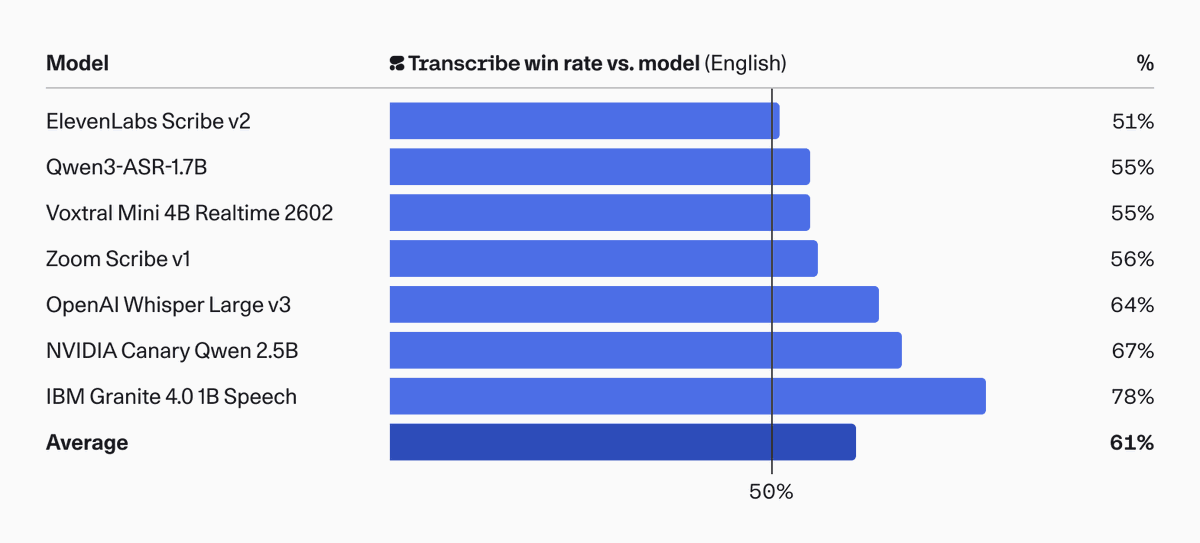

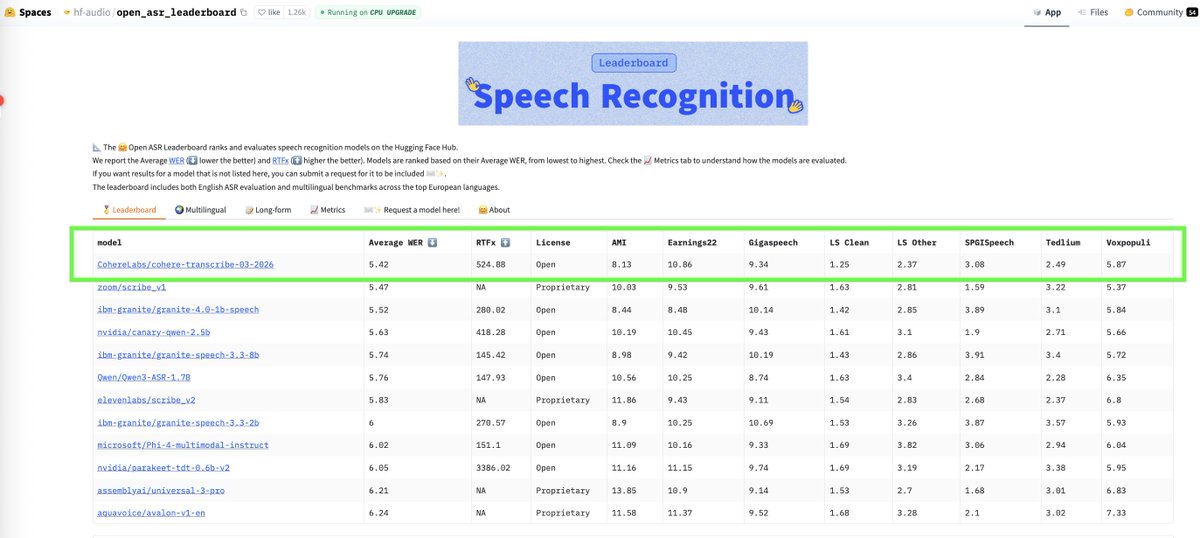

Introducing: Cohere Transcribe – a new state-of-the-art in open source speech recognition.

We’re excited to announce our partnership with @RWSGroup, bringing Cohere’s frontier AI models to Language Weaver Pro - unlocking new enterprise‑grade translation capabilities. Purpose-built for high-stakes environments, this integration empowers enterprises and governments to communicate seamlessly across languages and accelerate new opportunities for global collaboration and growth! Learn more: rws.com/about/news/202…

316 ARC-AGI tasks solved with zero learning. No neural net, no training, no DSL — just 19th-century projective geometry. Encode grid cell relationships as Plücker lines in P³, find transversals via Schubert calculus, score candidates by geometric incidence. 95% solve rate on the eval set (of non-timeout tasks). Single C file, runs in seconds.

Man suffers only because he takes seriously what the gods made for fun.

It's not SimCity, but business school students who were good at Civ V also turn out to be better planners, organizers, and problem-solvers in this small experiment.

🚀 MIT Flow Matching and Diffusion Lecture 2026 Released (diffusion.csail.mit.edu)! We just released our new MIT 2026 course on flow matching and diffusion models! We teach the full stack of modern AI image, video, and protein generators, in theory and practice. We include: 📺 Videos: Step-by-step derivations. 📝 Notes: Mathematically self-contained lecture notes. 💻 Coding: Hands-on exercises for every component. We thoroughly improved last year’s iteration and added new topics: latent spaces, diffusion transformers, and building language models with discrete diffusion models. Everything is available here: diffusion.csail.mit.edu A huge thanks to Tommi Jaakkola for his support in making this class possible and Ashay Athalye (MIT SOUL) for the incredible production! Was fun to do this with @RShprints! #MachineLearning #GenerativeAI #MIT #DiffusionModels #AI