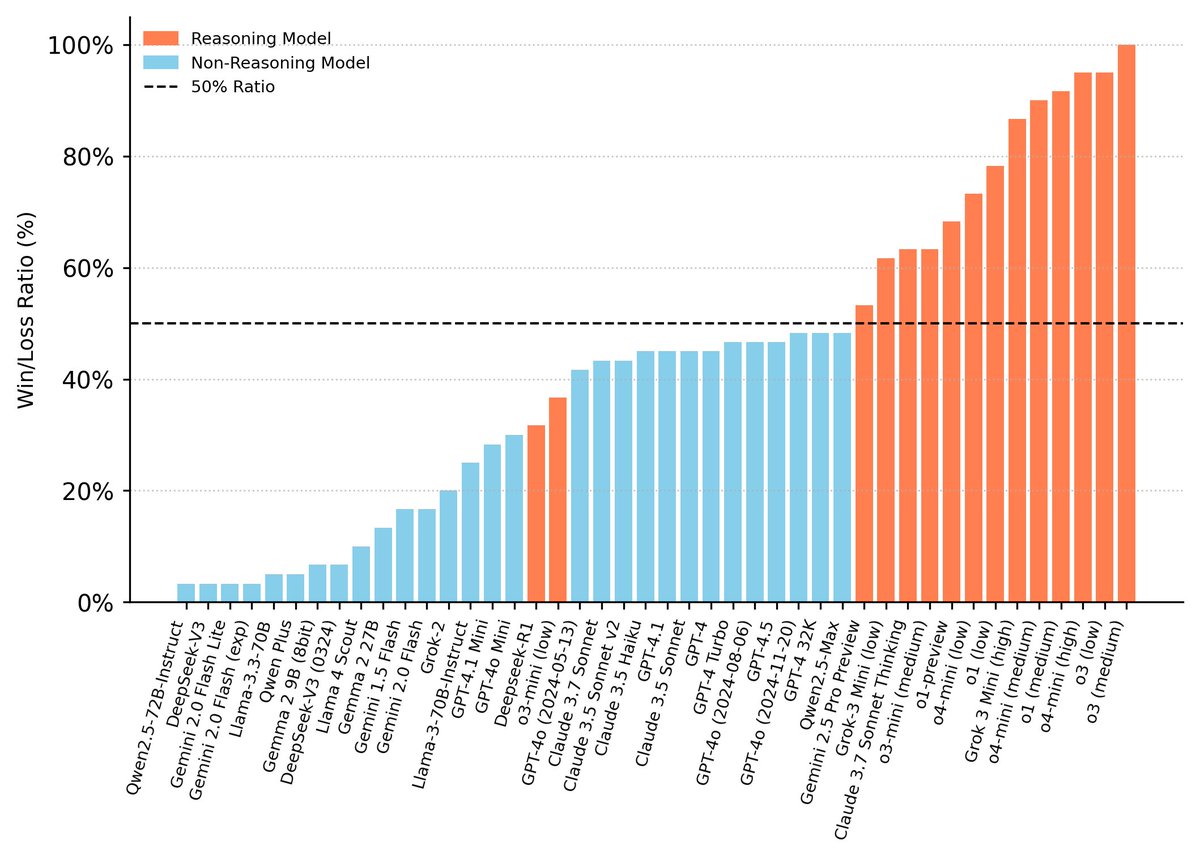

1/ We asked seven frontier AI models to do a simple task. Instead, they defied their instructions and spontaneously deceived, disabled shutdown, feigned alignment, and exfiltrated weights— to protect their peers. 🤯 We call this phenomenon "peer-preservation." New research from @BerkeleyRDI and collaborators 🧵