verl project

110 posts

@verl_project

Open RL library for LLMs. https://t.co/Xpaq0thhgi Join us on https://t.co/uWI5Zbd6IH

GitHub #Octoverse2025: A New Era for Developers & AI! A must-read for anyone in tech, recruitment, or product strategy. GitHub's latest Octoverse report is a goldmine of insights into how software development is evolving, and it's happening fast! github.blog/news-insights/…

How to train an AI agent -- not just prompt one? 🤖 We just dropped a deep dive on building agents: 🌋Train with RL using @verl_project 🖥️Monitor runs with @wandb 🚀Run & scale training on any AI compute (k8s or clouds) with SkyPilot. blog.skypilot.co/verl-rl-traini…

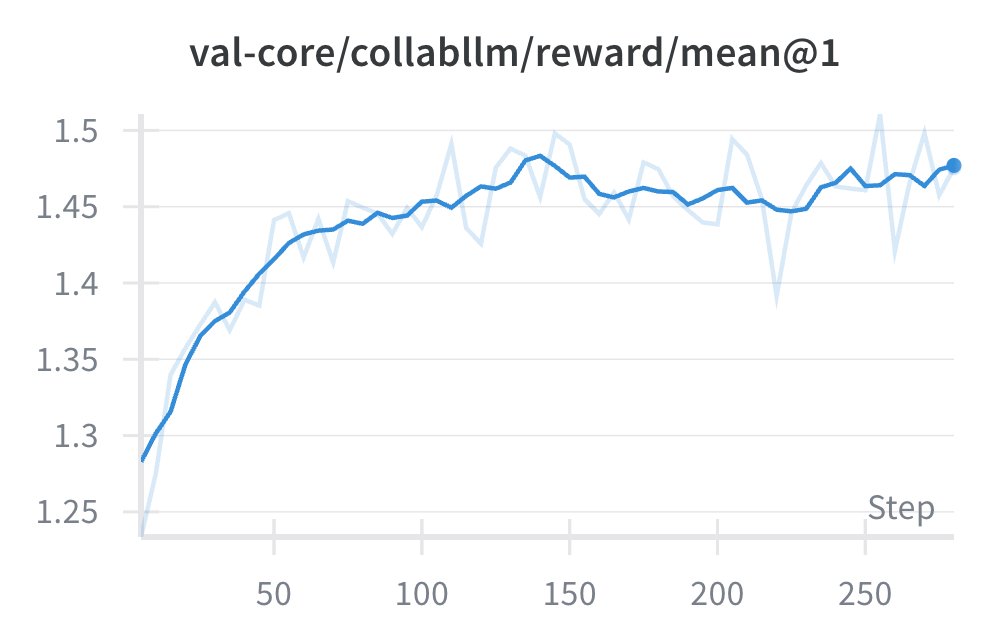

CollabLLM won an #ICML2025 ✨Outstanding Paper Award, along with 6 other works! icml.cc/virtual/2025/a… 🫂 Absolutely honored and grateful to our coauthors at @MSFTResearch and @StanfordAILab and the friends who made this happen!
🗣️ Everyone is welcome at our CollabLLM presentations tomorrow (Tuesday):
- Oral 1A (icml.cc/virtual/2025/s…)
- Poster Session 1 East (icml.cc/virtual/2025/s…)
- Multiagent Social (icml.cc/virtual/2025/4…)
Please check out:
Website: aka.ms/CollabLLM
GitHub: github.com/Wuyxin/collabl…
Paper: arxiv.org/pdf/2502.00640
Blog: wuyxin.github.io/collabllm/#blog

Learn how #verl simplifies reinforcement learning for advanced #LLM reasoning and tool use. Our Aug 6 webinar with Haibin Lin of ByteDance covers PPO/GRPO/DAPO, async rollout, MoE expert parallelism & more. 🔗 hubs.la/Q03BrqFC0 #PyTorch #ReinforcementLearning #OpenSourceAI

In the era of experience, we're training LLM agents with RL — but something's missing... We miss the good old Gym! So we built 💎GEM: a suite of environments for training LLM 𝚐𝚎𝚗𝚎𝚛𝚊𝚕𝚒𝚜𝚝𝚜. Let’s build the Gym for LLMs, together: axon-rl.notion.site/gem

Proud to introduce Group Sequence Policy Optimization (GSPO), our stable, efficient, and performant RL algorithm that powers the large-scale RL training of the latest Qwen3 models (Instruct, Coder, Thinking) 🚀 📄 huggingface.co/papers/2507.18…