Kyle Waters

568 posts

@kylewaters_

Co-founder @PortexAI | measuring AI progress with novel evals

Day 2 of #PyTorchCon 🔥 What a ride. Talked with folks using #PyTorch to fine-tune models for drug discovery, cancer research, autonomous vehicles and, of course, customer support! Thanks @PyTorch for having us!

Ilya Sutskever made a rare appearance at NeurIPS. He said the internet is the fossil fuel of AI, that we are at peak data, and that 'Pre-training as we know it will unquestionably end'.

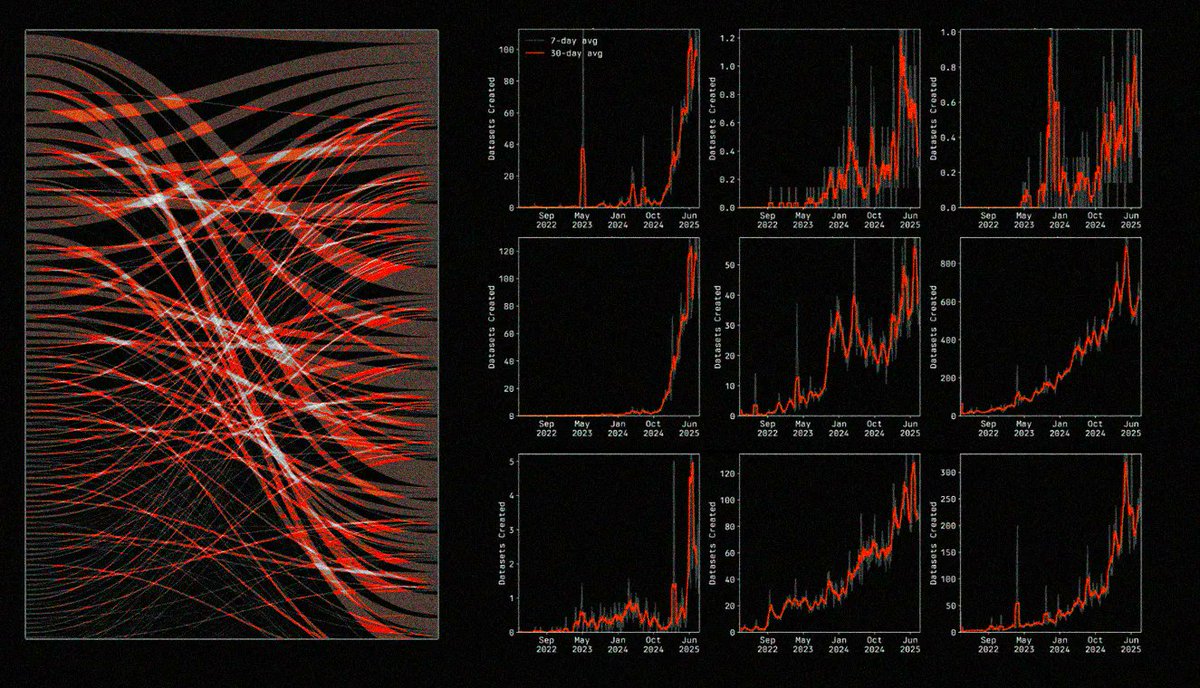

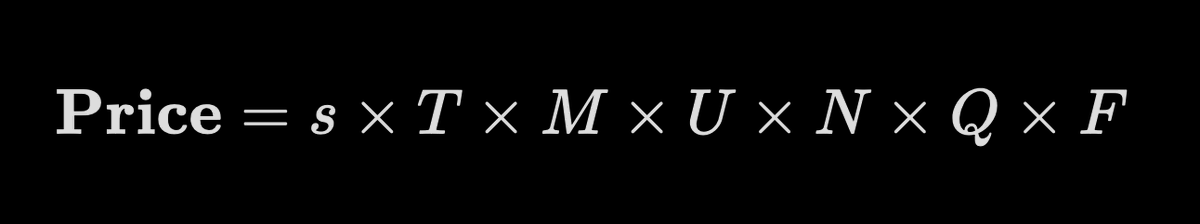

AI has kicked off a gold rush for data, with OpenAI alone projecting $8B in data-related expenses by 2030. The challenge now is finding a reliable way to value data in this era. Our latest on data valuation techniques: research.portexai.com/data-valuation…

1/ Introducing Isaac 0.1 — our first perceptive-language model. 2B params, open weights. Matches or beats models significantly larger on core perception. We are pushing the efficient frontier for physical AI. perceptron.inc/blog/introduci…