labml.ai

654 posts

@labmlai

📝 Annotated paper implementations https://t.co/qeO4UTbrJ3

Joined December 2020

9 Following · 12.6K Followers

Pinned Tweet

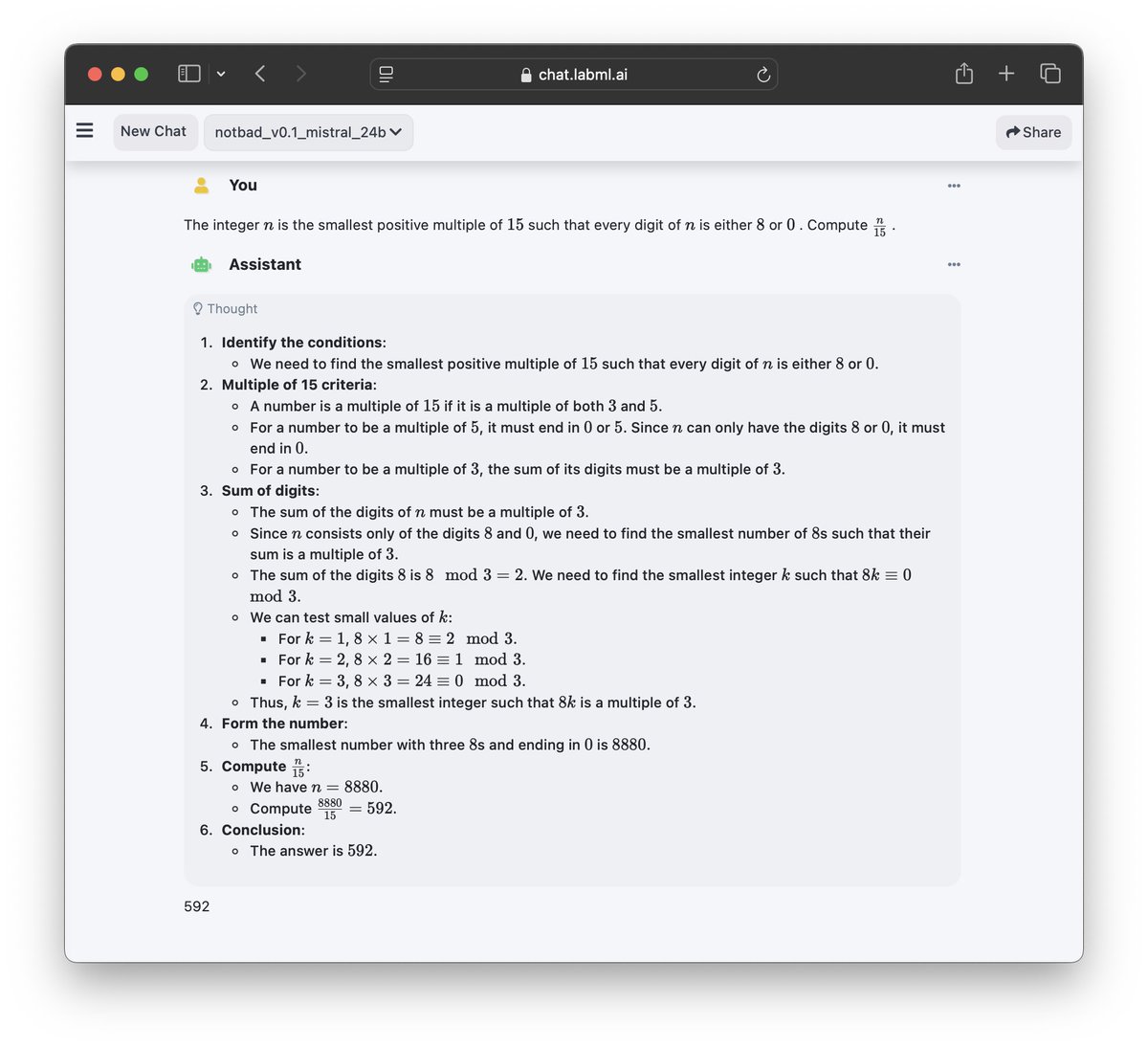

You can now download the Notbad v1.0 Mistral 24B model from @huggingface

huggingface.co/notbadai/notba…

Try it on chat.labml.ai

labml.ai retweeted

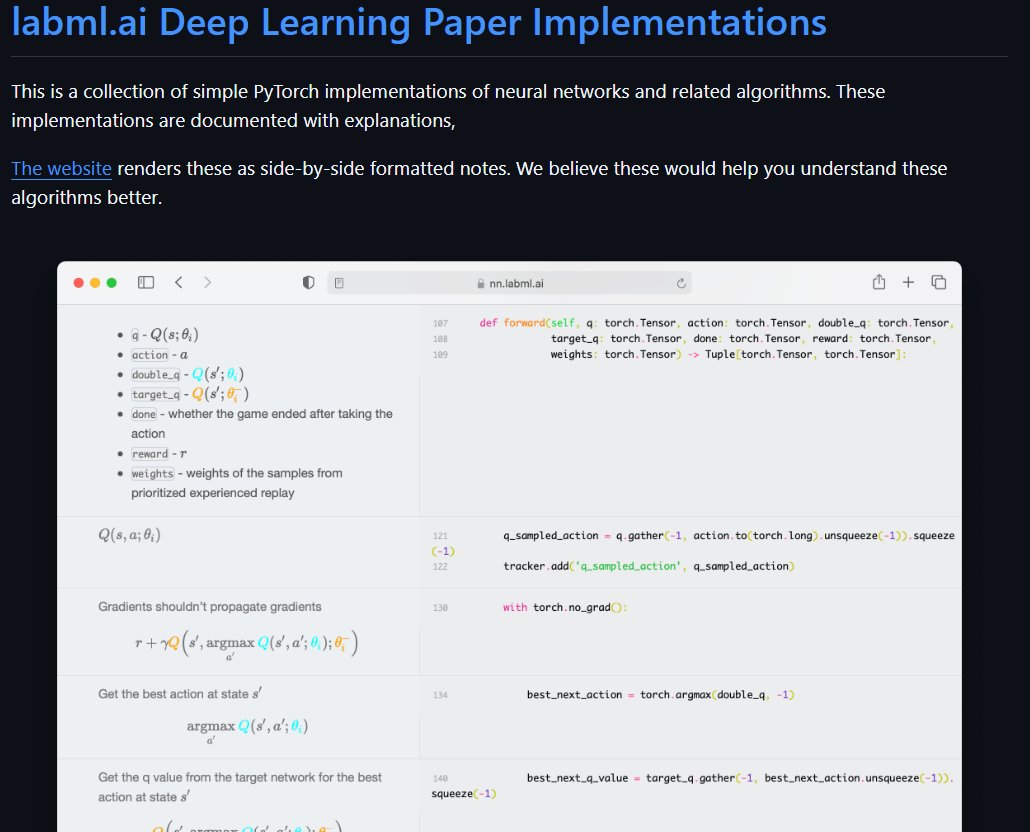

As an ML engineer, implementation >>>>everything.

Knowing is theory. Implementation is understanding.

A few outstanding topics it covers:

1. Reinforcement learning - PPO, DQN

2. Transformers - classical to RETRO, Switch, GPT models

3. Diffusion models - Stable Diffusion, DDPM, DDIM, U-Net

4. GANs - CycleGAN, Wasserstein GAN, StyleGAN & a few more

5. Graph neural networks - GAT, GATv2

Skip the tutorial hell & learn about various models

Learn implementations in this GitHub repo.

I’ll share more resources later. Link in comments 👇

labml.ai retweeted

We've open-sourced our internal AI coding IDE.

We built this IDE to help with coding and to experiment with custom AI workflows.

It's based on a flexible extension system, making it easy to develop, test, and tweak new ideas quickly. Each extension is a Python script that runs locally.

(Links in replies)

🧶👇

labml.ai retweeted

GEPA appears to be an effective method for enhancing LLM performance, requiring significantly fewer rollouts than reinforcement learning (RL).

It maintains a pool of system prompts. It uses an LLM to improve them by reflecting on the generated answers and the scores/feedback for a minibatch of problems.

GEPA keeps the Pareto frontier of system prompts. That is, if system prompt A performs worse on every validation problem compared to system prompt B, A is filtered out from the pool.

👇
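The filtering rule described in the post above can be sketched in a few lines of Python. This is a minimal illustration only, not GEPA's actual code: the function name `pareto_filter`, the prompt names, and the scores are all hypothetical, and it assumes every prompt has a non-empty score list over the same validation problems. It applies the rule exactly as worded in the post (a prompt is dropped only if another prompt beats it on every problem).

```python
from typing import Dict, List


def pareto_filter(scores: Dict[str, List[float]]) -> List[str]:
    """Return the prompts that survive the filtering rule from the post.

    `scores[p][i]` is the (hypothetical) score of prompt `p` on validation
    problem `i`. All lists are assumed non-empty and of equal length.
    """

    def worse_everywhere(a: str, b: str) -> bool:
        # True when prompt `a` scores strictly lower than `b` on every problem.
        return all(x < y for x, y in zip(scores[a], scores[b]))

    # Keep a prompt unless some other prompt beats it on every problem.
    return [
        a
        for a in scores
        if not any(worse_everywhere(a, b) for b in scores if b != a)
    ]


# Made-up scores for three system prompts on three validation problems.
scores = {
    "prompt_a": [0.2, 0.4, 0.1],
    "prompt_b": [0.5, 0.6, 0.3],
    "prompt_c": [0.1, 0.9, 0.2],
}
frontier = pareto_filter(scores)
print(frontier)
```

Here `prompt_a` is dropped because `prompt_b` beats it on all three problems, while `prompt_c` stays because it is the best prompt on the second problem even though it is weaker elsewhere.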

labml.ai retweeted

Wrote an annotated Triton implementation of Flash Attention 2. (Links in reply)

This is based on the flash attention implementation by the Triton team. I changed it to support GQA and cleaned it up a little.

Check it out to read the code for forward and backward passes along with the math and derivations. Hope this helps understand transformer attention and flash attention better.

There are about 60 more annotated deep learning paper implementations on this website.
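For readers who want a feel for the math before opening the annotated code, here is a rough pure-Python sketch of the online softmax idea that flash attention relies on. It is not the Triton implementation from the post: it handles one query with scalar values, and the function names and `block_size` parameter are hypothetical. It processes the attention scores block by block, keeping a running maximum and running sum, and rescales the accumulator whenever the maximum changes.

```python
import math
from typing import List


def softmax_weighted_sum(scores: List[float], values: List[float]) -> float:
    """Reference: softmax over all scores at once, then a weighted sum."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    return sum(e * v for e, v in zip(exps, values)) / sum(exps)


def online_softmax_weighted_sum(
    scores: List[float], values: List[float], block_size: int = 2
) -> float:
    """Same result, computed one block at a time with running statistics."""
    running_max = float("-inf")
    running_sum = 0.0  # sum of exp(score - running_max) seen so far
    acc = 0.0          # weighted sum of values, scaled like running_sum
    for start in range(0, len(scores), block_size):
        s_blk = scores[start:start + block_size]
        v_blk = values[start:start + block_size]
        new_max = max(running_max, max(s_blk))
        # Rescale earlier partial results to the new maximum.
        # On the first block this is exp(-inf) = 0.0.
        scale = math.exp(running_max - new_max)
        exps = [math.exp(s - new_max) for s in s_blk]
        running_sum = running_sum * scale + sum(exps)
        acc = acc * scale + sum(e * v for e, v in zip(exps, v_blk))
        running_max = new_max
    return acc / running_sum


# Made-up attention scores and values for a single query.
scores = [0.5, 2.0, -1.0, 3.5, 0.0]
values = [1.0, 2.0, 3.0, 4.0, 5.0]
full = softmax_weighted_sum(scores, values)
blockwise = online_softmax_weighted_sum(scores, values, block_size=2)
print(abs(full - blockwise) < 1e-12)
```

The two functions agree up to floating-point error, which is why the blockwise version never needs the full row of scores in memory at once.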

labml.ai retweeted

Added the JAX transformer model to the annotated paper implementations project.

x.com/vpj/status/143…

Link 👇

vpj@vpj

Coded a transformer model in JAX from scratch. This was my first time with JAX so it might have mistakes. lit.labml.ai/github/vpj/jax… This doesn't use any high-level frameworks such as Flax. 🧵👇

labml.ai retweeted

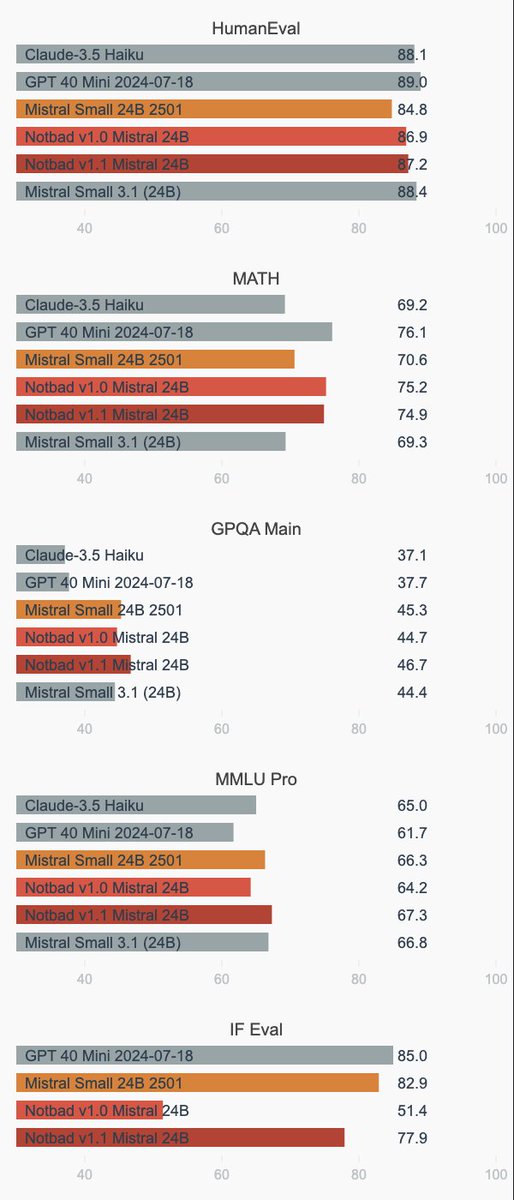

The new training also improved GPQA from 64.2% to 67.3% and MMLU Pro from 64.2% to 67.3%.

This model was also trained with the same reasoning datasets we used to train the v1.0 model. During SFT, we mixed in more general instruction data with answers sampled from the Mistral-Small-24B-Instruct-2501 model to reduce the degradation of IFEval, which seems to have resulted in generalization of reasoning to problems beyond math and coding.

The datasets and the models are available on @huggingface.

Follow @notbadai for updates.

NOTBAD AI@notbadai

We are releasing an updated reasoning model with an improved IFEval score (77.9%) compared to our previous model (only 51.4%). 👇 Links to try the model and to download weights below

labml.ai retweeted

We just released a Python coding reasoning dataset with 200k samples on @huggingface

This was generated by our RL-based self-improved Mistral 24B 2501 model. This dataset was used to train Notbad v1.0 Mistral 24B.

🤗 Links in replies 👇

labml.ai retweeted

Uploaded the dataset of 270k math reasoning samples that we used to finetune Notbad v1.0 Mistral 24B (MATH-500=77.52% GSM8k Platinum=97.55%) to @huggingface (link in reply)

Follow @notbadai for updates

labml.ai retweeted

We're open-sourcing a math reasoning dataset with 270k samples, generated by our RL-based self-improved Mistral 24B 2501 model and used to train Notbad v1.0 Mistral 24B.

Available on Hugging Face: huggingface.co/datasets/notba…

labml.ai retweeted

📢 We are excited to announce Notbad v1.0 Mistral 24B, a new reasoning model trained in math and Python coding. This model is built upon the @MistralAI Small 24B 2501 and has been further trained with reinforcement learning on math and coding.

Quick start: labml.ai/app#quickstart

GitHub: github.com/labmlai/labml

labml.ai retweeted