Laetitia Teodorescu

161 posts

@lae_teo

Hitting LLMs with a stick at @AdaptiveML

For example, we gave Claude an impossible programming task. It kept trying and failing; with each attempt, the “desperate” vector activated more strongly. This led it to cheat the task with a hacky solution that passes the tests but violates the spirit of the assignment.

Weirdly, I actually think Yann is making an important point here that is getting lost in semantics. Human intelligence also has jagged frontiers; we're just used to the shape.

so apparently swe-bench doesn’t filter out future repo states (with the answers) and the agents sometimes figure this out… github.com/SWE-bench/SWE-…

What if you could not only watch a generated video, but explore it too? 🌐 Genie 3 is our groundbreaking world model that creates interactive, playable environments from a single text prompt. From photorealistic landscapes to fantasy realms, the possibilities are endless. 🧵

Seed-Prover’s 30 / 42-point silver-medal performance at IMO 2025
- Fully solved 4/6 problems
- Included 3-day proofs for P3 (2000-line Lean) & P4 (4000-line Lean)
- Geometry problem solved in 2 seconds via Seed-Geometry

New SOTA Across Benchmarks
- 100% MiniF2F-valid
- 99% MiniF2F-test (243/244)
- 79% past IMO problems
- 30% CombiBench (3× improvement)

Key
- Multi-stage reasoning: Light/Medium/Heavy modes scale from minutes to days
- Self-improving proofs: Pass@8-16 refinement beats brute-force approaches
- Conjecture pools: Heavy mode spawns thousands of helper lemmas to crack the hardest problems

would like to see a hybrid that merges Delta Prover’s training-free agentic loop with Seed’s RL-driven lemma engine.

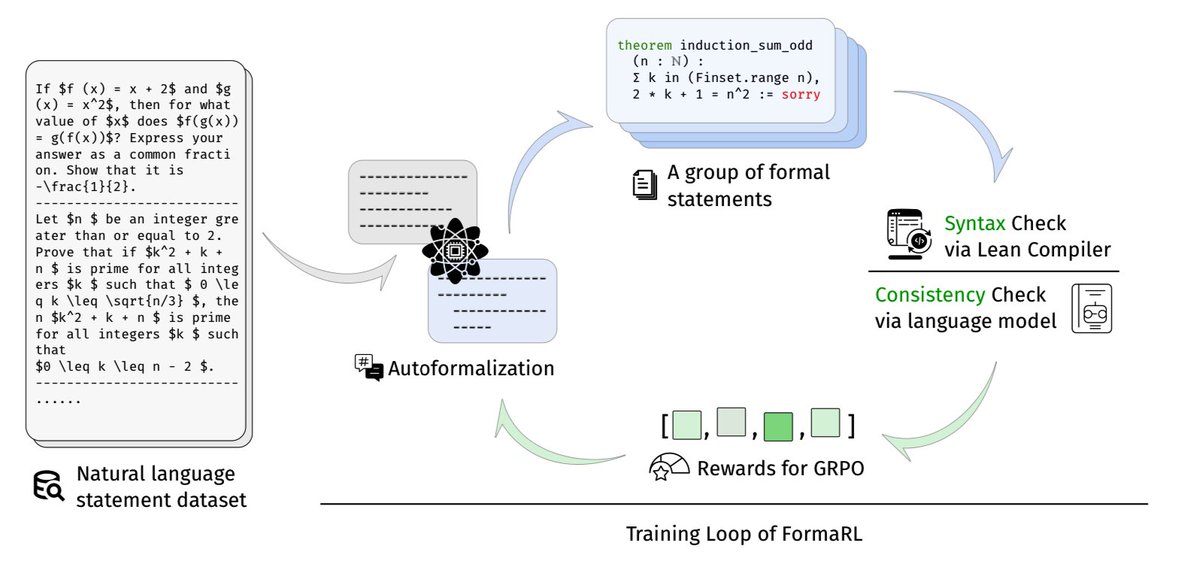

Leanabell-Prover-V2: Verifier-integrated Reasoning for Formal Theorem Proving via Reinforcement Learning

Leanabell-Prover-V2 is a 7B LLM trained for Lean 4 theorem proving via verifier-integrated long CoT and RL, finetuned from DeepSeek-Prover and Kimina-Distill.
- Verifier feedback loop: inline Lean 4 execution; verifier errors guide reflex-style correction
- Cold-start CoT synthesis: “incorrect → correct” proof pairs via autoformalization, verifier feedback, and Claude-3.7-Sonnet rewrites
- RL via DAPO: token-level policy gradients with verifier-derived rewards; feedback token masking for stability
- Reflection iteration: multi-turn verifier calls during generation; pass@128 improves up to +3.7% with 1–3 correction cycles
- Gains plateau on strong baselines like DeepSeek-Prover-V2-7B, and overall still trail 70B+ models on harder benchmarks

Results (pass@128):
- MiniF2F: 78.2% (+2.0%) vs DeepSeek-Prover-V2-7B; 70.4% (+3.2%) vs Kimina-Distill-7B
- ProofNet: +6.4% on Kimina base; competitive with DeepSeek
- ProverBench: Solves 1 extra AIME 24/25 problem vs DeepSeek base

Something about this kind of prompt is simply unfathomable to LLMs. They just can't perform better than chance, and I'm not sure why. Most people will dismiss this as just being "hard math stuff", but it is not, I swear. It is just alien to you because it is *niche*; thus, it looks scary. But once you're fluent in the λ-Calculus, this is a very easy routine coding task. If you had a 1-week course with me, you'd be able to solve this too, and dozens of similar programs that no LLM can. I promise you.

LLMs can do surprisingly hard things. But not niche things. What makes this so frustrating and strange is that LLMs do know about the λ-Calculus. In fact, they know a lot about it. There are hundreds of papers about it in the training corpus. They know the jargon, the theory, the semantics. But they just can't code in it... because humans don't code in it. They know everything about the λ-Calculus, except how to use it. Because we don't.

GPT-4 failed, Sonnet-3.5 failed, o1 failed, Gemini failed, o3 failed, and, now, Grok-4 failed. Yet, if only they had seen codebases written using the λ-Calculus, they wouldn't fail anymore. I could probably write a large λ-Calculus dataset, publish it, and the next LLMs would easily solve this prompt, and much harder ones. But someone has to teach them how to use the λ-Calculus, and, so far, nobody has.

That's the underlying issue: LLMs are perfect learners, but only for things that are explicitly taught. They can't learn what a human didn't teach them, and that's why they can't produce new science; after all, producing new science is learning something about nature without a human professor.

This is not a criticism, this is a reflection. How can we teach AIs to learn skills that are not explicitly taught to them? What is still missing, that even billions spent on RL-style post-training haven't been able to supply?