Pinned Tweet

Daniel Langkilde

4.2K posts

@langkilde

CEO & co-founder @Kognic_.

Sweden · Joined January 2009

247 Following · 758 Followers

As of last week, I'm an investor in Anthropic through Stellatrion Partners Fund IV! 🎉

There is a lot of debate about a possible AI bubble. In general, I find that opinions don't count for much unless you have skin in the game. Saying stuff is easy 💬 Well, I'm now walking the walk: I'm betting that Anthropic is undervalued at $380B (their latest raise) by indirectly buying shares from that round.

Why? Several reasons:

1. Enterprise Agents. Since November, Agents have gone from an interesting idea to useful. We are adopting them at a fast pace at Kognic. While there are still many issues and challenges, I'm confident that models will keep improving. In particular for verifiable tasks. Based on Kognic alone, I'm confident that Anthropic's overall revenue will grow significantly over the next 24 months.

2. I personally would hate living without my Claude subscription. I pay the maximum price and use API credits when needed, and basically run my life using Claude Code today. It does my personal finances, helps me keep track of photo backups, and audits my servers and Mac to keep them secure. Among other things. It's the most valuable addition to my toolbox since I got a MacBook. I like the idea of being a shareholder in a company whose products I admire ❤️

3. All the angel investments I've made ultimately depend on Claude Code today (e.g. Lovable). Investing in Anthropic means investing in the "source".

4. In the extremely unlikely event that we face some sort of singularity, I want to have money inside the "light cone of value" 😉

@emollick do you have a preferred definition of the distinction between ASI and AGI? Trying to be more precise myself 😅

Daniel Langkilde retweeted

Three weeks ago there were rumors that one of the labs had completed its largest ever successful training run, and that the model that emerged from it performed far above both internal expectations and what people assumed the scaling laws would predict. At the time these were only rumors, and no lab was attached to them. But in light of what we now know about Mythos, they look more credible, and the lab was probably Anthropic.

Around the same time there were also rumors that one of the frontier labs had made an architectural breakthrough. If you are in enough group chats, you hear claims like this constantly, and most turn out to be nothing. But if Anthropic found that training above a certain scale, or in a certain way at that scale, produces capabilities that sit far above the prior trendline, then that is an architectural breakthrough.

I think the leaked blog post was real, but still a draft. Mythos and Capybara were both candidate names for the new tier, though Mythos may now have enough mindshare that they end up keeping it. The specific rumor in early March was that the run produced a model roughly twice as performant as expected. That remains unconfirmed. What is confirmed is that Anthropic told Fortune the new model is a 'step change', and a sudden 2x would certainly fit the definition.

We will find out in April how much of this is true. My own view is that the broad shape of this is correct even if some of the numbers are wrong. And if it is substantially accurate, then it also casts OpenAI's recent restructuring in a new light. If very large training runs are about to become essential to staying in the game, then a lot of their recent decisions, like dropping Sora, make even more sense strategically.

For the public, this would mean the best models in the world are about to become much more expensive to serve, and therefore much more expensive to use. That will put pressure on rate limits, pricing, and subscription plans that are already subsidized to some unknown degree. Instead of becoming too cheap to meter, frontier intelligence may be about to become too expensive for most of humanity to afford.

Second-order effects: compute, memory, and energy are about to become much more important than they already are. In the blog they describe the new model as not just an improvement, but as having 'dramatically higher scores' than Opus 4.6 in coding and reasoning, and as being 'far ahead' of any other current model. If this is the new reality, then scale is about to become king in a whole new way. It would also mean, as usual, that Jensen wins again.

I've decided to become more active on X again after years of being away ✍️ Also: I was shocked to find that I've had an account on the platform for over 17 years 😱 I'm getting old... Anyway, why am I returning?

Well, as complicated as my emotions about the new management might be, a lot of 𝗴𝗲𝗻𝘂𝗶𝗻𝗲𝗹𝘆 𝗶𝗻𝘁𝗲𝗿𝗲𝘀𝘁𝗶𝗻𝗴 people are on X, posting really insightful things. I also find that LinkedIn isn't great for high frequency stuff.

My plan is to dual-post anything major to both platforms, and then post smaller, more high frequency things to X going forward.

To get started, I cleaned up who I follow and organized everything into lists. You can see my starting set of 230 accounts in the PDF (if you are on LinkedIn) or through my profile (if you are on X). The script for cleaning my X account is here, along with the account list: github.com/dlangk/x-admin

Let's go 🚀

In practice, I let Gemini Deep Think and Opus 4.6 collaborate on the science, with my intent as the guide. My workbench is Yatzy, since it's mathematically interesting yet very easy to play. I use DT as my "theorist" and Opus as my "experimentalist". I prompt them to write as two scientists conversing through their papers. Falsifiable hypotheses, pre-registering experimental result expectations, etc. It's insanely powerful. I'm sure there is some risk of "unverified claims" slipping through the cracks, but I think the scientific method drastically reduces the risk. Every claim needs to be backed up and experimentally verified.

My setup is evolving:

- Ghostty is now my default terminal

- I run a surprising amount of compute-heavy work (I like picking apart games), so I complement that with btop

- Thanks to Opus, all of my HPC stuff is now written in Rust. I'm able to squeeze every single drop out of my Mac with their help.

I love this post. I'm a "messy middle" kind of guy. The opening is easy, and dreaming about the end game is fun. But it's the middle that separates the wheat from the chaff.

Will Manidis@WillManidis

Daniel Langkilde retweeted

Fun read on "the death of reading" by @a_m_mastroianni:

"Reading has already survived several major incursions, which suggests it’s more appealing than we thought. Radio, TV, dial-up, Wi-Fi, TikTok—none of it has been enough to snuff out the human desire to point our pupils at words on paper. Apparently, books are what hyper-online people call “Lindy”: they’ve lasted a long time, so we should expect them to last even longer.

It is remarkable, even miraculous, that people who possess the most addictive devices ever invented will occasionally choose to turn those devices off and pick up a book instead. If I were a mad scientist hellbent on stopping people from reading, I’d probably invent something like the iPhone. And after I released my dastardly creation into the world, I’d end up like the Grinch on Christmas morning, dumfounded that my plan didn’t work: I gave them all the YouTube Shorts they could ever desire, and they’re still...reading!!"

experimental-history.com/p/text-is-king

@lexfridman What will it take to improve sample and energy efficiency? I don't think the problem is expressive capacity. Humans are adaptive because we are efficient.

Doing a long, super-technical podcast on the state-of-the-art in AI. Let me know if you have questions or topic suggestions. Everything from details of LLM training pipelines & architectures, to coding, robotics, scaling, compute, business, geopolitics, etc.

Besides topics & questions... add papers, blogs, posts, rants, perspectives that you'd like to see covered.

To forecast AI progress, I think it's relevant to ask yourself: what can be learned? I've been exploring the taxonomies of problems by learnability. Here's a proposal:

𝟭. 𝗘𝘅𝗮𝗰𝘁-𝗖𝗼𝗺𝗽𝘂𝘁𝗮𝗯𝗹𝗲 𝗘𝗻𝘃𝗶𝗿𝗼𝗻𝗺𝗲𝗻𝘁𝘀, i.e., domains where the rules are fully known, the state is fully observable, and the solution space is convex or analytically tractable. These systems can be solved using equations, so learning isn't even required. The limit is cryptographic hardness, or even NP-hardness: even when the environment is fully known and exact simulators exist, certain patterns remain computationally invisible or inaccessible to any efficient algorithm.

𝟮. 𝗦𝗲𝗹𝗳-𝗣𝗹𝗮𝘆-𝗦𝗼𝗹𝘃𝗮𝗯𝗹𝗲 𝗘𝗻𝘃𝗶𝗿𝗼𝗻𝗺𝗲𝗻𝘁𝘀, i.e., domains with known rules but intractable search spaces. Optimal behavior is learned via, e.g., reinforcement learning and exploration in a perfect simulator (e.g., Chess and Go). Self-play trades energy for intelligence. AlphaZero required massive computing power to master Go. The limit is that if the simulator differs from reality by even a microscopic fraction, the learner may exploit the simulator's flaws rather than learning the real physics. Self-play is valid only when the Cost of Simulation < Value of Solution and when the Simulator Fidelity = Reality.

𝟯. 𝗖𝗵𝗮𝗼𝘁𝗶𝗰 & 𝗦𝘁𝗼𝗰𝗵𝗮𝘀𝘁𝗶𝗰 𝗘𝗻𝘃𝗶𝗿𝗼𝗻𝗺𝗲𝗻𝘁𝘀, i.e., domains with intrinsic uncertainty or sensitivity to initial conditions (e.g., Weather, Markets, Fluid Dynamics). The challenge here is that chaotic systems create new information (entropy) exponentially fast. Here, you run into the Lyapunov Time Limit, i.e., no matter how much data you ingest or how massive your transformer model is, prediction beyond a certain point is mathematically impossible because the necessary information does not yet exist in the macroscopic current state. These environments require continuous, high-energy monitoring to maintain a "low-entropy" predictive state.

𝟰. 𝗛𝘂𝗺𝗮𝗻-𝗣𝗼𝗽𝘂𝗹𝗮𝘁𝗶𝗼𝗻-𝗗𝗲𝗽𝗲𝗻𝗱𝗲𝗻𝘁 𝗦𝘁𝗿𝗮𝘁𝗲𝗴𝗶𝗰 𝗘𝗻𝘃𝗶𝗿𝗼𝗻𝗺𝗲𝗻𝘁𝘀, i.e., general-sum, multiplayer environments where "optimality" is defined by coordination, norms, and culture. Self-play fails here. If you train an AI to drive via self-play, it might invent a highly efficient language of flashing lights to coordinate intersections. It has "solved" driving, but it cannot drive with humans because it converged to a different convention. Social norms are arbitrary conventions. These are Class I Unlearnable from first principles. You cannot "derive" which side of the road to drive on from physics. An AI can learn the statistics of text, but without the social grounding, it cannot learn the meaning required for true coordination. Competence here is dependent on data intake. You cannot "compute" your way to social competence; you must "download" the arbitrary conventions of the specific human population.

Kognic exists to solve problems in Class IV.
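The Lyapunov limit in Class III is easy to see in a toy system. Here is a minimal Python sketch, assuming the logistic map at r = 4 as a stand-in for a chaotic environment (my choice of example, not something from the taxonomy itself). The function names, the starting point 0.2, and the 0.1 tolerance are all illustrative. It counts how many steps a forecast survives when the initial state is measured with a small error.

```python
def logistic_map(x: float, r: float = 4.0) -> float:
    """One step of the logistic map; r = 4.0 puts it in the chaotic regime."""
    return r * x * (1.0 - x)


def prediction_horizon(x0: float, eps: float, tolerance: float = 0.1,
                       max_steps: int = 1000) -> int:
    """Count steps until two trajectories that start eps apart differ by
    more than tolerance. Returns max_steps if that never happens."""
    a, b = x0, x0 + eps  # "true" state and our slightly wrong measurement
    for step in range(1, max_steps + 1):
        a, b = logistic_map(a), logistic_map(b)
        if abs(a - b) > tolerance:
            return step
    return max_steps


if __name__ == "__main__":
    # Assumed illustration values: each measurement is 1000x more precise
    # than the previous one.
    for eps in (1e-6, 1e-9, 1e-12):
        steps = prediction_horizon(0.2, eps)
        print(f"initial error {eps:.0e}: forecast breaks after {steps} steps")
```

For this map the Lyapunov exponent is ln 2, so errors roughly double per step on average and the horizon grows only like log2(tolerance / eps). A million-fold better measurement should buy on the order of twenty extra steps, not a million-fold longer forecast.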

Daniel Langkilde retweeted

@TeamYouTube Unfortunately I need to talk to a human, and there is no contact information on this site. How do I connect to a human to help me resolve my issue?

@langkilde If you need help with your YouTube channel or account, check out this page for the different support options available: goo.gle/3OjGnl8

@TeamYouTube Hi! I need someone to contact me to resolve an issue with my Google-YouTube account connection. Both support departments point to each other! 🤯

@github Hi! I'm trying to get a verification code sent to my email, but not getting one. Is there something wrong at the moment?

Is AI turning into a new IT bubble? That is what I reflect on in the latest Veckans Tanke, which you can find here: research.sebgroup.com/macro-ficc/rep…

Daniel Langkilde retweeted

@elonmusk Interesting point, but an example might make it clearer. Can you think of a prominent person who's currently wasting his talents in software when he could be working on manufacturing and heavy industries?

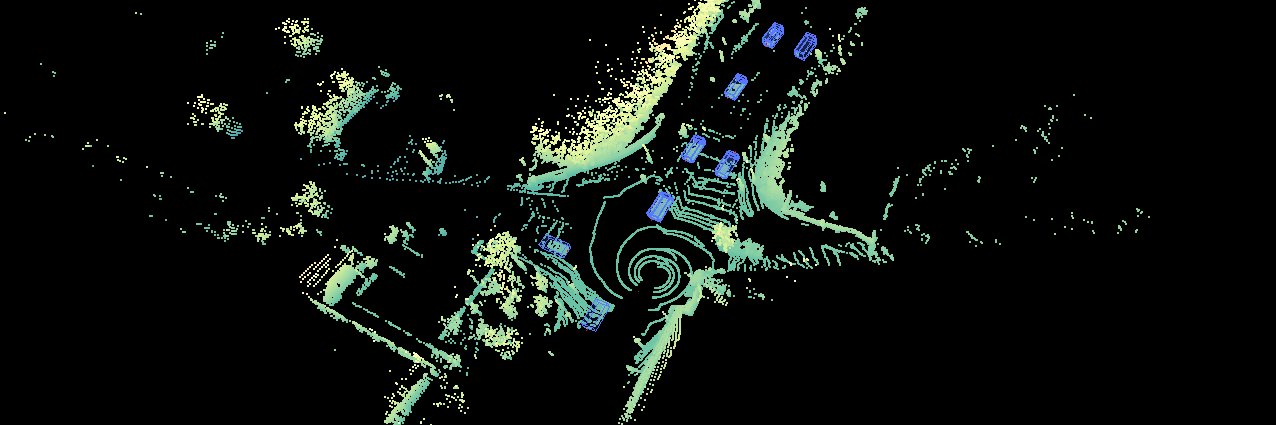

This is an outstanding achievement. The first Foundation model for this sort of task that I know of. Thank you for making it available @ylecun!

Yann LeCun@ylecun

SAM: Segment Anything Model from FAIR. Foundation model for image segmentation. Demo: segment-anything.com/demo Blog: ai.facebook.com/blog/segment-a… Paper: ai.facebook.com/research/publi… Code: github.com/facebookresear… Dataset: SA-1B, 11 million images, 1 billion masks ai.facebook.com/datasets/segme…

Debate at 5:50 EST today:

“Do large language models need sensory grounding for meaning and understanding?”

Debaters:

Jacob Browning, David Chalmers, Brenden Lake, Yann LeCun, Gary Lupyan, Ellie Pavlick.

eventbrite.com/e/philosophy-o…
