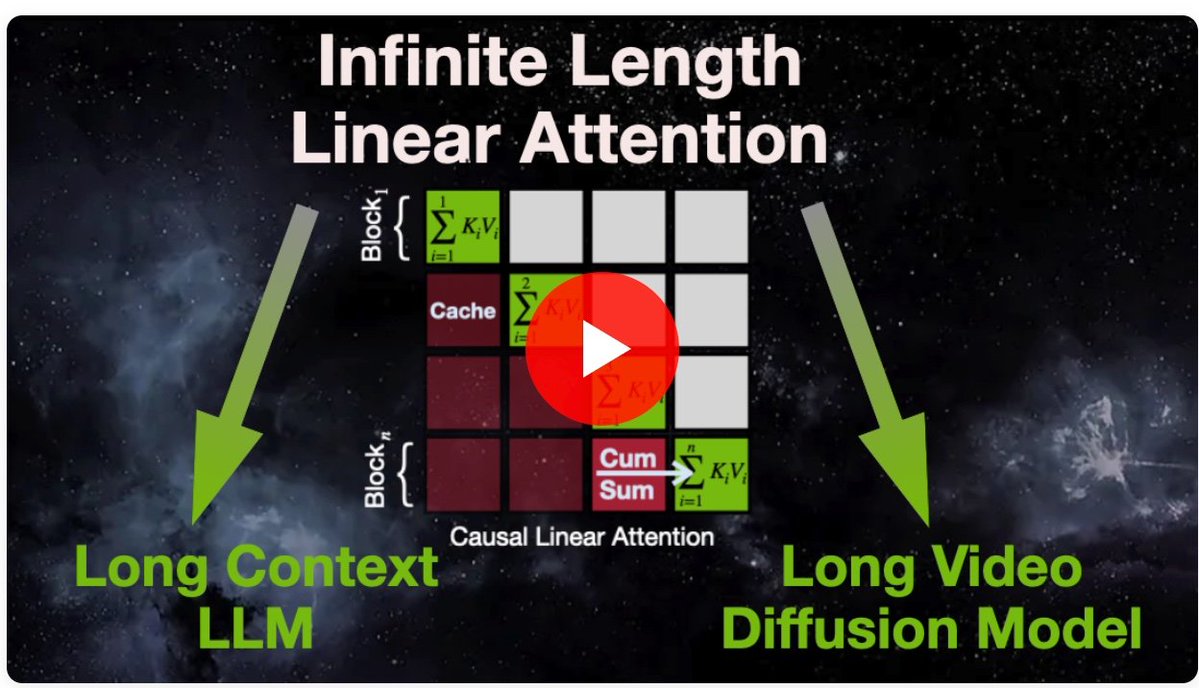

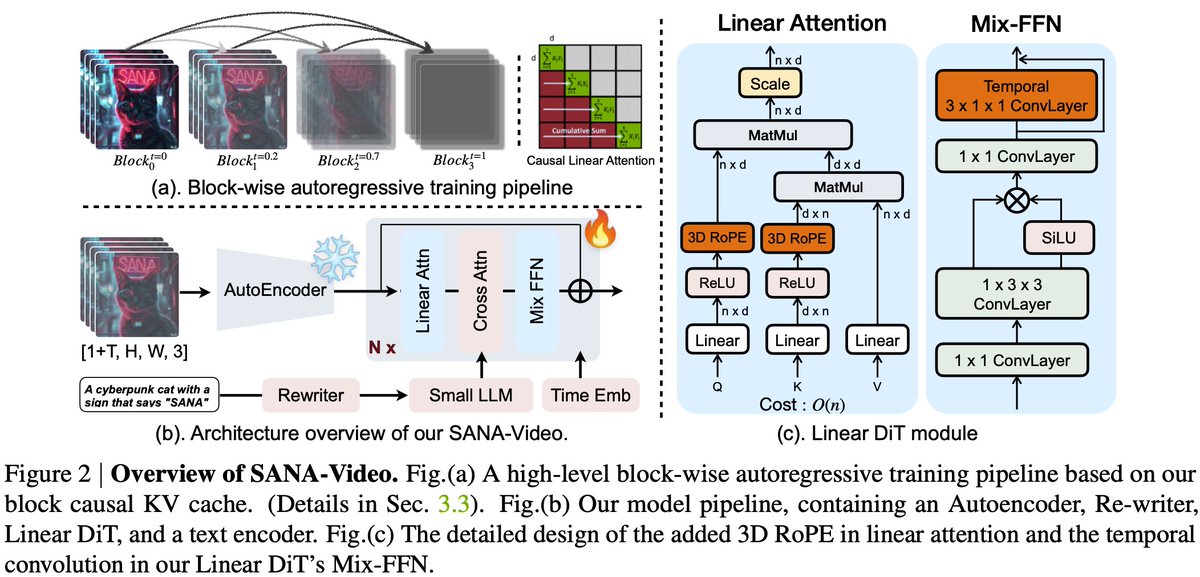

Exciting updates for SANA Video! 🚀

1️⃣ 4-Step Video Generation: Our new demo is live! By combining DMD distillation + TVAE, we’ve achieved incredibly fast inference in just 4 steps. ⚡️

Try it here: 🔗 sana-video.hanlab.ai

2️⃣ LongSANA is Open Source: We’ve officially released the training and inference code for LongSANA. Dive into the tech and build with us! 📦

Code & Docs: 🔗 nvlabs.github.io/Sana/docs/long…