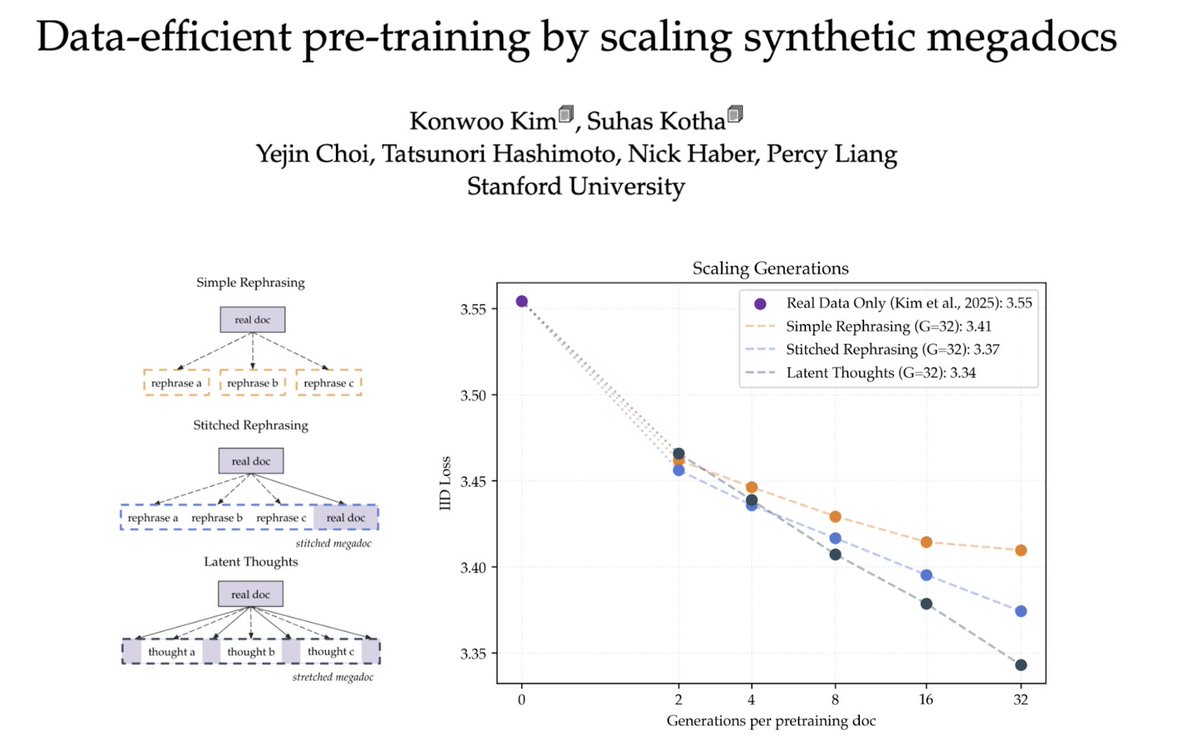

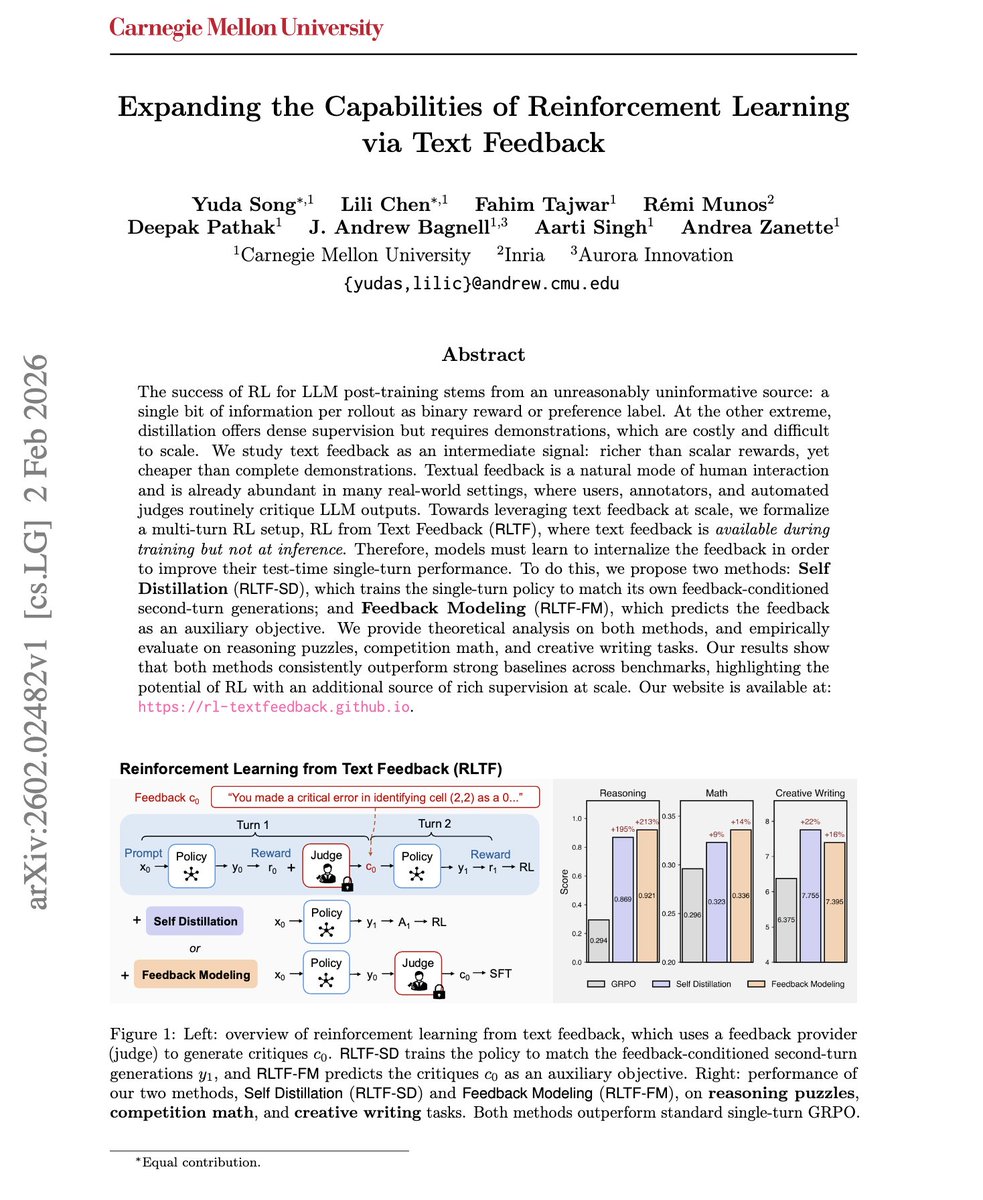

RL on LLMs is inefficient: it uses just one scalar reward per rollout. But users regularly give much richer feedback: "make it formal," "step 3 is wrong." Can we train LLMs on these human-AI interactions? We introduce RL from Text Feedback, with two methods: 1) Self-Distillation; 2) Feedback Modeling (1/n) 🧵