Luca Ecari

@lecaritweets

I post about my 2 places of the heart: Italy & Switzerland. (Moving to Switzerland: Your 90-Day Survival Plan https://t.co/qItlqqZ1oL)

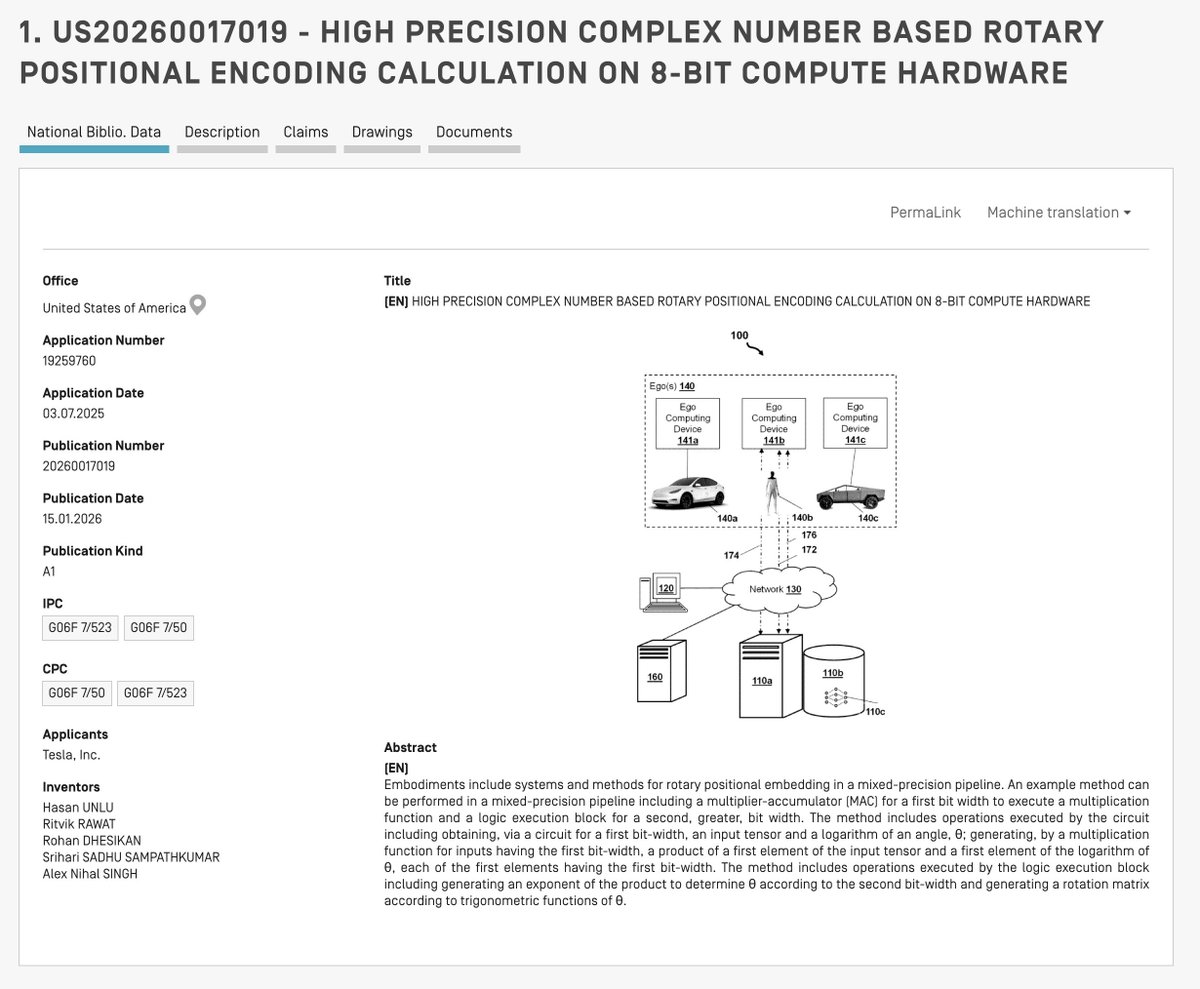

What are some movies that had no right being as good as they turned out to be?

🇪🇸🇺🇸 SPAIN HANDED TESLA THE KEYS TO EUROPE: UNLIMITED NATIONWIDE FSD TESTING, NO DRIVER REQUIRED

Yes, this is massive news for Tesla. Spain quietly dropped the mother of all regulatory gifts in July 2025: the ES-AV framework that puts the country straight into Phase 3. Remote monitoring allowed, no mandatory safety driver, full public road access.

Tesla immediately got approval for 19 vehicles with unlimited testing across the entire country. This isn't some small pilot program in a parking lot. This is real-world, all-roads, no-human-behind-the-wheel testing at scale, exactly what Tesla needs to train FSD Supervised and Robotaxi on European driving chaos.

Spain just became Tesla's European data goldmine overnight. While California is still choking on red tape and Germany moves at a bureaucratic snail's pace, Spain said, "Come get your miles." 2026 Robotaxi fleets in Madrid and Barcelona suddenly look a lot more real.

Is Europe waking up? If so, Tesla is the alarm clock.

Source: ES-AV Framework Programme (July 2025), @KRoelandschap, @Tesla, @teslaeurope