Levi

For agentic tasks, Oracle-level performance is the maximum performance a system can achieve, assuming it retrieves all relevant documents perfectly, every time. We're proud to show that Mixedbread Search approaches the Oracle on multiple knowledge-intensive benchmarks.

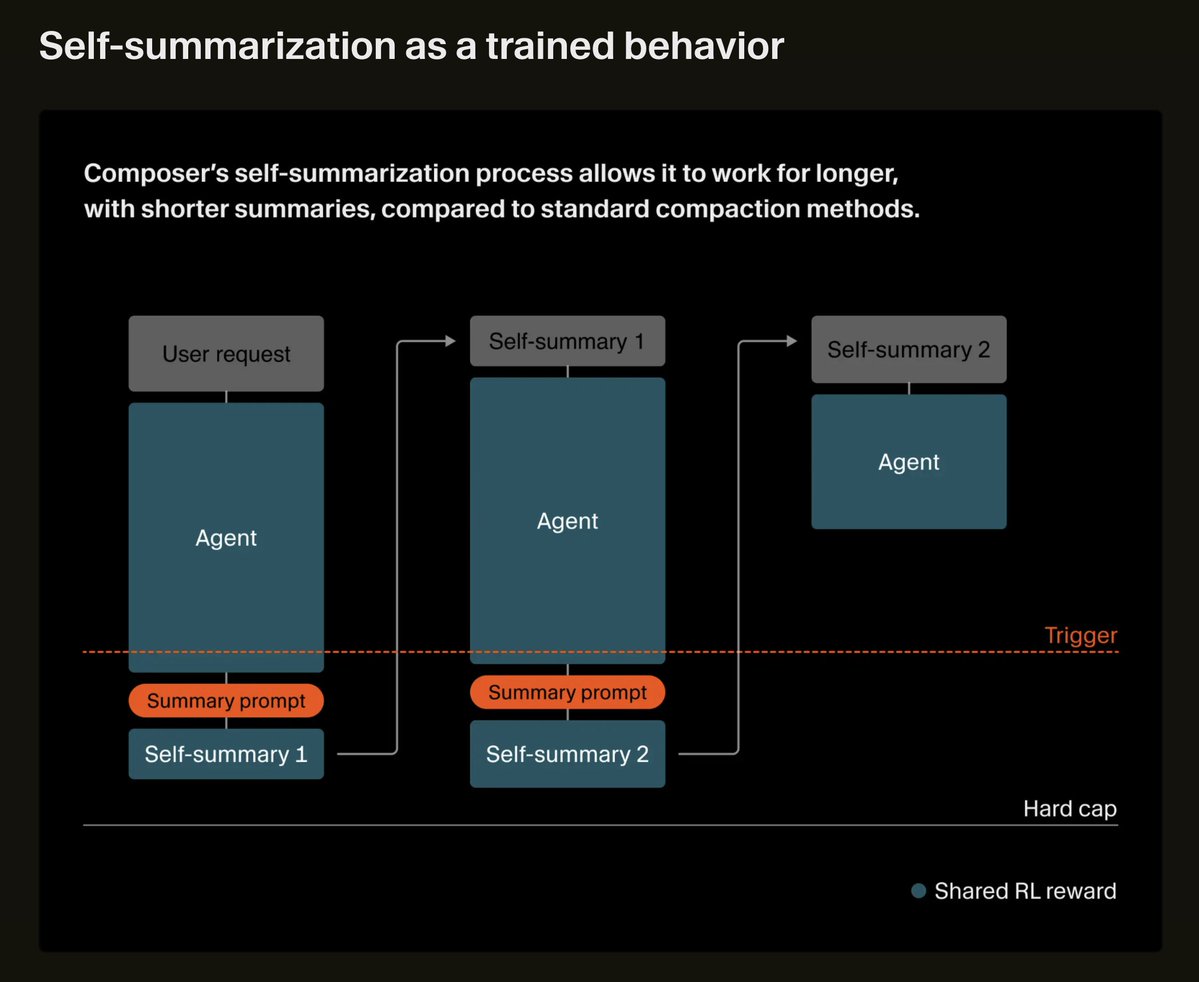
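To make the Oracle idea concrete, here is a minimal sketch in Python. Everything in it is a hypothetical stand-in rather than Mixedbread's evaluation code: the `Example` type, the toy "reader" that succeeds only when every gold document is in context, and the hard-coded `retrieved` results are all assumptions for illustration. It shows that the Oracle score can sit below 100% (the model may fail even with perfect retrieval), and that a retrieval system is judged by how close it gets to that ceiling.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, List, Set


@dataclass(frozen=True)
class Example:
    question: str
    gold_docs: FrozenSet[str]   # IDs of the documents needed to answer
    model_can_answer: bool      # toy flag: does the model succeed given the gold docs?


def reader_is_correct(context: Set[str], ex: Example) -> bool:
    # Hypothetical stand-in for an agent/LLM: it answers correctly only if
    # it is capable of the task AND every gold document is in its context.
    return ex.model_can_answer and ex.gold_docs <= context


def score(examples: List[Example], get_context: Callable[[Example], Set[str]]) -> float:
    # Fraction of examples answered correctly under a given context provider.
    correct = sum(reader_is_correct(get_context(ex), ex) for ex in examples)
    return correct / len(examples)


examples = [
    Example("q1", frozenset({"d1"}), True),
    Example("q2", frozenset({"d2", "d3"}), True),
    Example("q3", frozenset({"d4"}), True),
    Example("q4", frozenset({"d5"}), False),  # fails even with perfect retrieval
]

# Made-up output of some retrieval system (assumption, not real results).
retrieved: Dict[str, Set[str]] = {
    "q1": {"d1", "d9"},
    "q2": {"d2"},          # misses d3
    "q3": {"d4"},
    "q4": {"d5", "d6"},
}

# Oracle: hand the reader exactly the gold documents, every time.
oracle_score = score(examples, lambda ex: set(ex.gold_docs))
# System under test: hand the reader whatever the retriever returned.
system_score = score(examples, lambda ex: retrieved[ex.question])
fraction_of_oracle = system_score / oracle_score

print(f"oracle={oracle_score:.2f} system={system_score:.2f} "
      f"fraction_of_oracle={fraction_of_oracle:.2f}")
```

On this toy data the Oracle scores 0.75 and the retriever 0.50, so the retriever reaches about two thirds of the ceiling. "Approaching the Oracle" means closing that gap.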

We trained Composer to self-summarize through RL instead of a prompt. This reduces the error from compaction by 50% and allows Composer to succeed on challenging coding tasks requiring hundreds of actions.

The Cursor vs. Claude/Codex framing feels very flawed and misses the bigger picture. The labs have ~infinite money and specialized talent, and are going to win on coding models – that's a runaway train. Composer is impressive, but ultimately more for margin protection / defense than playing to win that game. But to quote Stringer Bell, "there are games beyond the game," and I believe Cursor's destiny is different: becoming the new GitHub – the place where the whole engineering process lives. Their real competition is with GitHub and Linear, not the labs. Bugbot is a great start. We find it super valuable no matter what coding agent is used, and it is a nice wedge for Cursor to get beyond the coding itself. And of course acquiring @graphite. PR review is the single most essential workflow in GitHub and very ripe for disruption – Cursor is in an amazing position for this. More is on the horizon. The cloud sandbox thing is going to be huge. The new Automations thing aims at GH Actions. And it wouldn't surprise me to see them start getting more into security, observability, etc. Could Anthropic/OpenAI try to compete here? Sure. But I don't think customers want them to. I want my coding agent to be a coding agent and would be happy to pay for another model-agnostic system that sits across the whole menagerie and helps me manage it. My prediction is that in a year we'll look back at the "Claude Code is great, therefore Cursor is cooked" discourse as misguided, and understand Cursor as playing a different game entirely.

Shipping today: Small but meaningful updates to Claude in Excel & PowerPoint! We obviously want Claude to be helpful in your work lives across a wide range of apps and data - and with this change, PowerPoint & Excel can share context and gain support for Skills. claude.com/blog/claude-ex…