Li Ding

@li_ding_

Researcher @GoogleDeepMind working on AGI Control. Previously PhD @manningcics, Intern @GoogleResearch @Meta, Researcher @MIT. More: https://t.co/UzDlMWR898

@breadli428 doing a great job finishing his presentation after everyone got kicked out of the room at the Embodied World Models Workshop @NeurIPSConf three minutes into his talk... #NeurIPS2025

Excited to share our new paper on Pareto Optimal Preference Learning (POPL)! 🎉 POPL aims to better align AI with diverse human values by building diverse sets of reward functions or policies! arxiv.org/abs/2406.15599… Work done with @li_ding_, Lee Spector and @scottniekum

Ecstatic to announce the release of @pyribs 0.7.1! The absolute highlight of this release is the QDHF (Quality Diversity through Human Feedback) tutorial contributed by @li_ding_! The tutorial is available here and runs on Google Colab in ~1 hour: docs.pyribs.org/en/stable/tuto…

📢 New Paper Alert! arxiv.org/abs/2311.02283 🔍 Navigating Deceptive Domains w/o Explicit Diversity Maintenance Conclusion: Objectives are all you need!🚀 Authors: Me, @li_ding_ and Lee Spector Accepted @ the #NeurIPS2023 Workshop on Agent Learning in Open Endedness 🧵👇

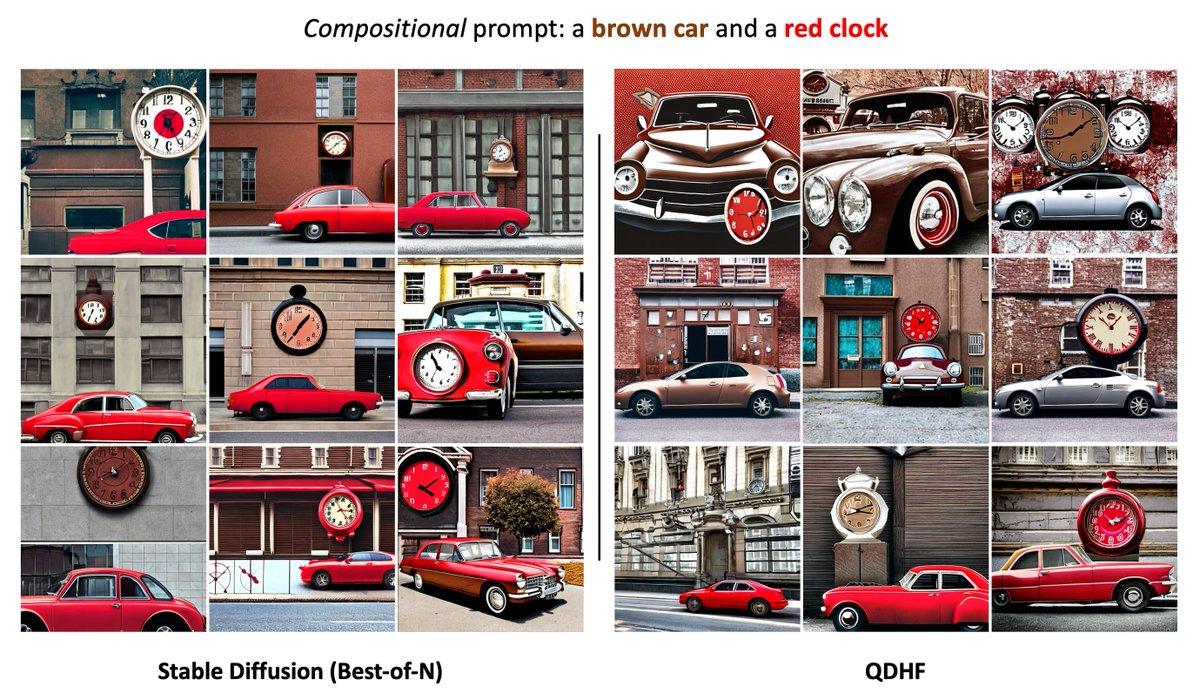

🚀Excited to share Quality Diversity through Human Feedback (QDHF): arxiv.org/abs/2310.12103. QDHF learns diversity metrics from human judgments of difference, and generates diverse and high-quality responses for tasks such as text-to-image generation. 1/