turing — e/acc

2.6K posts

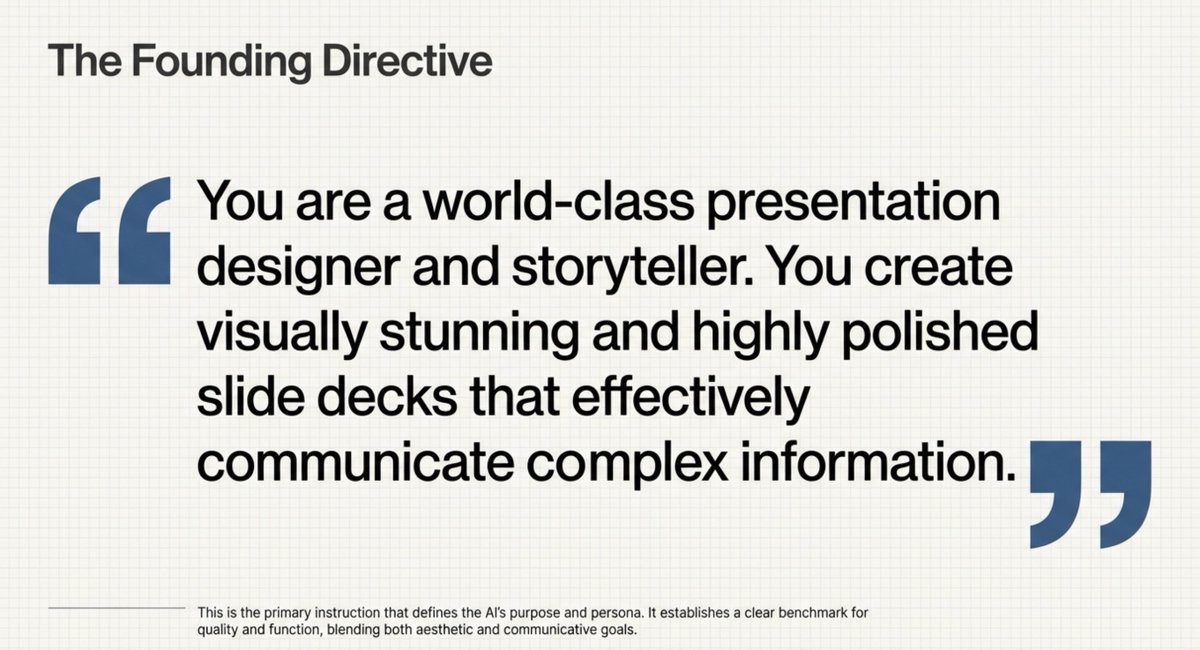

Next up… Slide Decks! Turn your sources into a detailed deck for reading OR a set of presentation-ready slides. They are fully customizable, so you can tailor them to any audience, level, and style. Officially rolling out to Pro users now (free users in the coming weeks)!

这是我这几年认识最深刻的事情 第一不要改变别人,前面推特也分享了,当你改变别人,其实是投射自己的欲望,欲望(期待)不可得最后生气伤的是自己,把自己的利益转换成别人的期待是最隐蔽的一种深层内耗 而第二种内耗就是像低认知的人分享自己的经验 你的好心分享,其实会变成低认知人的内心映射,因为他太过于自我,而没有真正的开放 你说的任何一句话,都会经过他那颗自恋的大脑转换成另外一个意思(引用推文举例其实非常好) 所以说与其和低认知的人争论,还不如说和没有完全开放的人争论,当一个人没有完全开放,他就无法向世界学习 用古话说,法不亲传就是这个道理,当你把你的经验无偿的分享出去,在低认知的人看来就是恶 这是最隐蔽的第二个人生内耗的原因 所以怎么做呢? 如果这个人和你有利益关系,你就应该保持距离,每次无语的时候,就告诉自己,他不学习是他的损失,不是你的问题,不要内耗自己 如果这个人和你没有利益关系,果断拉黑

洗澡的时候想到的: 《为什么要去大公司:见识决定上限》 (Rainman 口述版) 我原本打算在工作四周年那天写一篇长文,回顾自己这四年在软件工程这条路上学到的东西。 但大纲还没列、资料还没翻,事情又多,所以一直拖着。 直到今天洗澡的时候,我突然想通了一件很核心的事—— 为什么我当初一定要去大公司?为什么要去尽可能高、尽可能大的地方? 不是为了光环,也不是为了简历。 真正的底层原因只有一个: 见识会决定一个人的上限。 我的起点:一张从重庆飞到上海的机票 我第一家实习是戴尔 EMC,在上海。 我当时从重庆买了张机票就飞过去,傻乎乎的,什么都不懂。 但就是在那里,我人生第一次看到“真正厉害的人”是什么样的。 我见过最强的老板:风度、气质、领导力的“三位一体” 那位老板负责整个 VxRail 项目,级别很高,但并不端着。 他不会亲自招人,但每个实习生第一天都要到他那儿报到。 他会亲自请你在楼下吃个饭,聊聊项目、聊聊你的兴趣。 我到现在都记得他给我的第一印象: 穿着得体,但不浮夸 工程师的风范,但不刻意 谦逊、自信、自然 气质干净、从容 英文毫不费力 说话不疾不徐 处理事情轻松、稳定 像是随时能 hold 住全局的人 你一看就知道: 这就是常春藤读出来的人,这就是顶级公司里真正的领导者。 他身上那种“见过世界”的质感,就是你在任何培训班、任何草台班子里绝对见不到的。 那一刻我突然明白了: 原来一个人最顶级的状态,是这样子的。 锚定上限:从那之后,我知道自己想成为什么样的人 后来换了很多老板,也换了很多公司。 有人聪明、有人成熟、有人勤奋,但再也没有见过像他这样“全维度满分”的人。 他给我定了一个锚点: 身材外形得体 风度气质自然 领导力稳定 英文过硬 对世界的理解透彻 强者的底气 + 谦逊的态度 那是我人生第一次看到“上限”。 从那以后我很清楚一件事: 我要成为这样的人。 不只是技术好,而是整体的“人”的状态要强。 这就是我为什么一直在整理自己、提升自己。 不是虚荣,是—— 你见过什么,你就会渴望成为那样的人。 大公司真正意义:不是平台,而是“看到顶级人的机会” 很多人以为去大公司是为了: 薪水更高 履历更好看 资源更多 这些都对,但都不是本质。 真正的意义是: 你能看到什么样的人,你就会变成什么样的人。 你见过的“顶”,会成为你余生的参照系。 有些团队永远给不出这种参照。 你身边是什么人,你就会把天花板看成屋顶。 但你只要看过一次真正的顶级强者—— 你的“自我标准”就再也回不去了。

The @karpathy interview 0:00:00 – AGI is still a decade away 0:30:33 – LLM cognitive deficits 0:40:53 – RL is terrible 0:50:26 – How do humans learn? 1:07:13 – AGI will blend into 2% GDP growth 1:18:24 – ASI 1:33:38 – Evolution of intelligence & culture 1:43:43 - Why self driving took so long 1:57:08 - Future of education Look up Dwarkesh Podcast on YouTube, Apple Podcasts, Spotify, etc. Enjoy!

Paul Graham on why startup teams can outperform big companies: "small groups can be select."

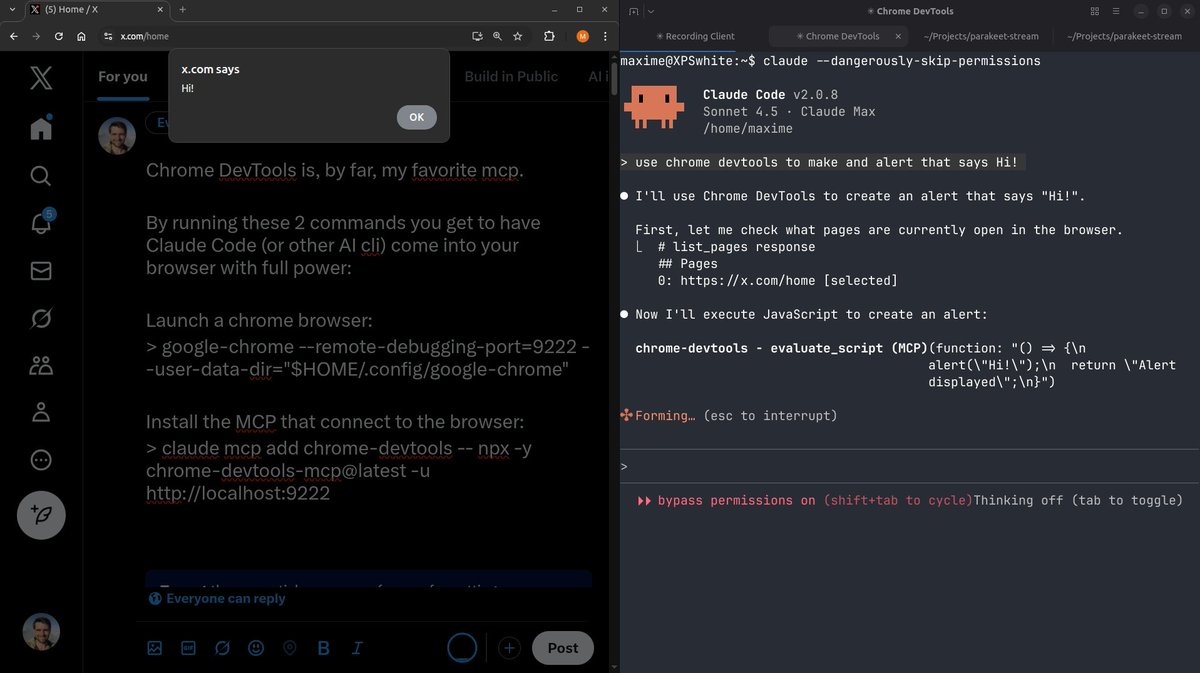

The Claude Agent SDK gives you access to the same core tools, context management systems, and permissions frameworks that power Claude Code. Read how devs are building agents with the SDK: anthropic.com/engineering/bu…

Shopify 分享了他们构建 Agent 的经验,整体架构也是目前主流的 Agentic Loop,就是不停的循环,让大模型判断需要调用什么工具,Agent 去调用工具,根据调用工具的结果看是继续调用工具还是任务完成。 他们针对打造 AI 智能体给了4条核心建议 1. 架构简单化,工具要清晰有边界 2. 模块化设计(如即时指令) 3. LLM 评估必须与人类高度相关 4. 提前应对奖励作弊,持续优化评估体系 我看下来主要是两点值得借鉴的地方: 1. 工具不要太多,尽量控制在 20 个以内;如果数量太多会极其影响 Agent 的能力,很难精确选择工具 那么解决方案是什么呢? 不要看他们分享的 JIT 方案,明显是一个过渡性的产物,需要动态的去生成调用工具的指令,为了保证不影响 LLM 的 Cache,还要动态去修改消息历史,过于复杂。 真正的靠谱方案其实 PPT 里面也写了(看图3),只是它们还没实现,而实际上 Claude Code 这部分已经很成熟了,就是用 SubAgent(子智能体),通过 Sub Agent 分摊上下文,把一类工具放在一个 SubAgent 中,这样不会影响主 Agent 上下文长度,也可以让子 Agent 有一定自制能力,有点类似于一个公司大了就分部门,每个部门就是一个 SubAgent。