Loganix

5.7K posts

@loganix

AI search. Modern SEO. No fairy tales. Just what moves rankings in 2026. Trusted by 5,000+ SEOs and agencies.

Seattle vs. Vancouver · Joined February 2014

397 Following · 613 Followers

Pinned Tweet

Loganix retweeted

full strategy guide: loganix.com/backlinks-for-…

order AI Brand Links here: my.loganix.com/order/ai-brand…

@loganix Could be, for sure. There are probably several factors influencing this, so we see it as one of a few possible explanations.

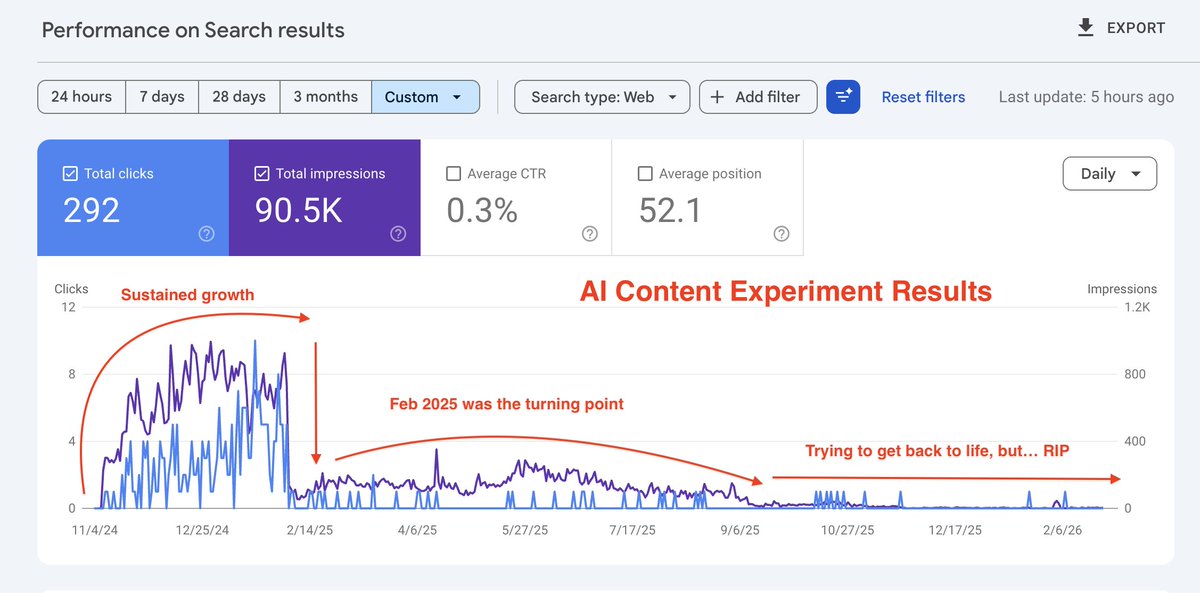

Could AI-generated content rank? Yes. And it may last about three months.

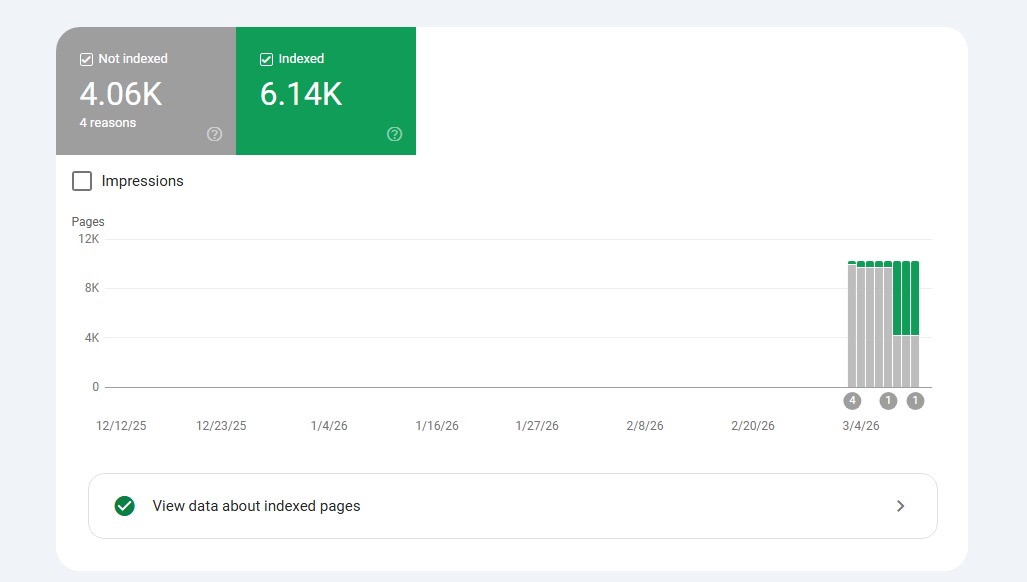

We built 20 new domains and published 2,000 articles with no human input.

In 36 days:

- 71% got indexed

- 8 sites ranked for 1,000+ keywords

- 122K impressions

3 months in: completely gone.

Was AI content the problem?

Not exactly.

The problem was publishing AI content with no strategy and no SEO behind it.

These were new sites with no backlinks, no authority. Once Google picked up on that, the rankings dropped.

And we’ve also seen the opposite: when AI-generated content is reviewed by a human and published on a strong domain, it can keep ranking and drive clicks.

📌 Read the full experiment: seranking.com/blog/ai-conten…

We’re running new experiments now, and we’ll be sharing the results along the way. If you want us to test your theories too, drop them in the comments 👇

@SeoTudent anyone who's used ai detectors enough knows that when a new model is released, it takes the detectors a minute to catch up

my guess is that google has algorithms to detect abuse of ai content. they'll catch up soon, and then google's wrath will be unleashed

Loganix retweeted

We're releasing a new model called Reverse Prompter designed to reverse-engineer Gemini-generated assistant responses back to most likely original prompts. Try it here: dejan.ai/tools/reverse-… or read about its design and training here: dejan.ai/blog/reverse-p…

PS: This is a tiny 270M-parameter Gemma fine-tuned on 100,000 Gemini-generated input-output pairs; we're not just asking Gemini to guess what the input prompts are.
