Charlotte Kleverud

1.1K posts

@lottite

building @momentalos | swede in the bay area

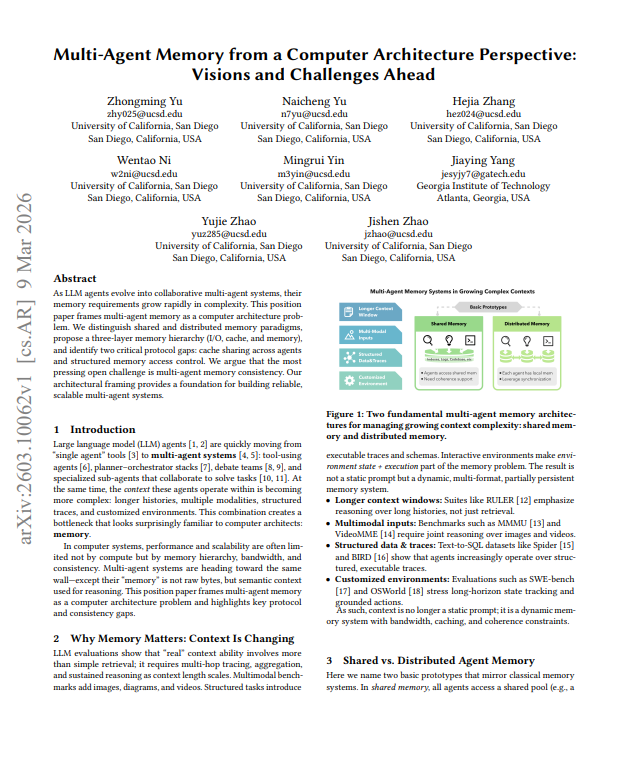

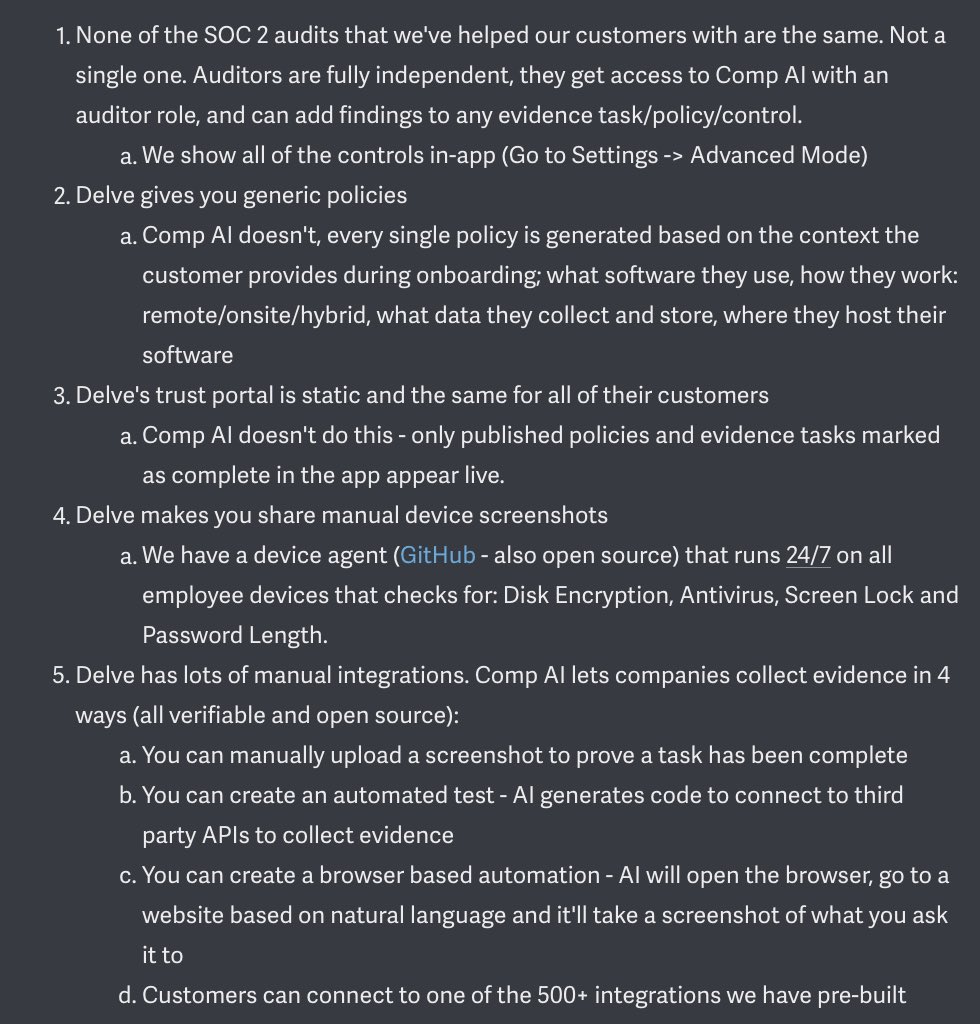

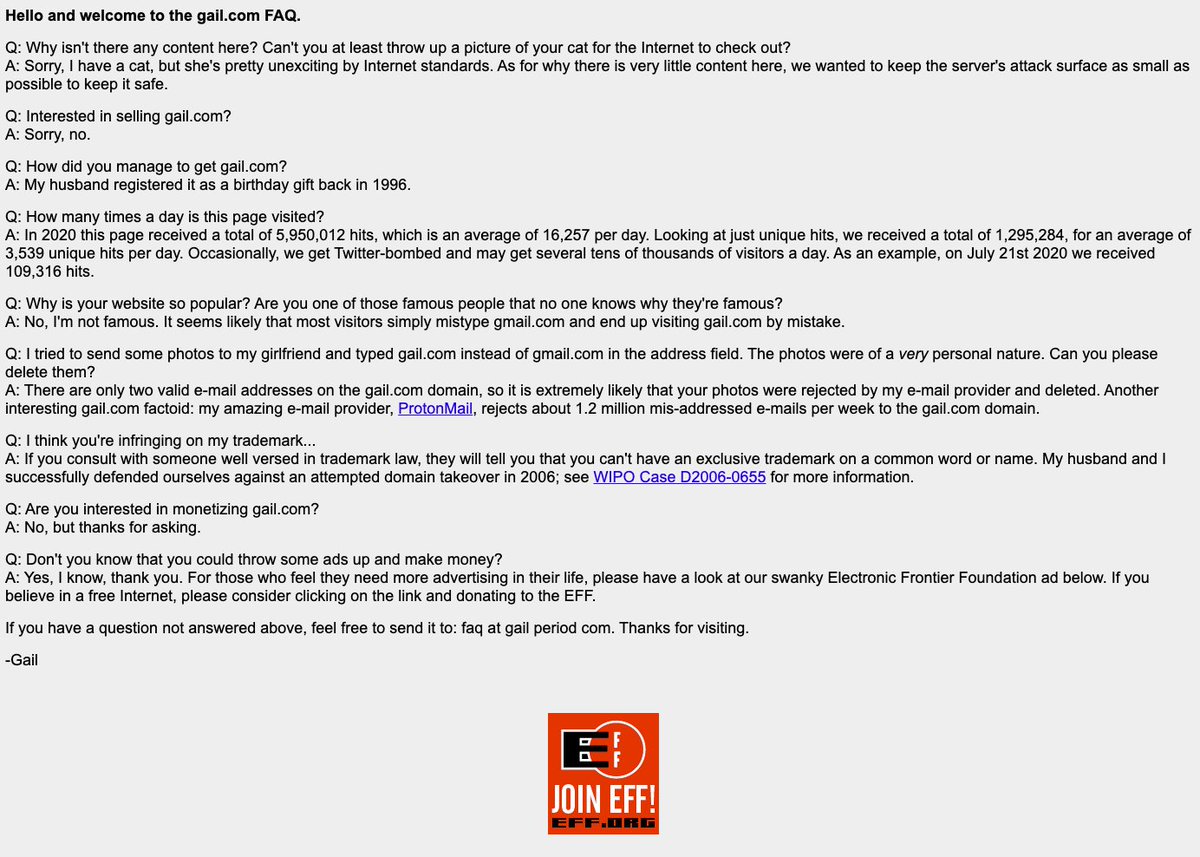

In response to an article that literally presents evidence that CompAI has bribed Reddit mods and carried out a hostile takeover of a Reddit sub, and that points out CompAI has a reputation for practices similar to Delve's. 😂

"Where you should be optimizing for is not management. It's to be the best builder in the world."

I asked Geoff (CPO Ramp) how tech employees should think about their career:

"Management is probably dead. There's always going to be value in someone giving you feedback and coaching and being your advocate and being a team leader, but now is not the time to build that skill set. Now is the time to be very proficient in this new technology and to radically improve the way that you use it."

📌 Watch the full episode here: youtu.be/RBqT2PHWdBg