We've raised $27M to build @CopilotKit — the Agentic Frontend Stack connecting humans & agents. Because all UI will be AI. Co-led by Glilot Capital, NfX and SignalFire.

@lowercasebryan

@hwchase17 Where there's a struggle is that all of these harnesses require a disk or access to bash or something like that. If there's a way to run them in a headless way, that would be awesome... maybe I've missed something

open-weight LLMs have come a long way on agent tasks! but the harness you wrap them in matters just as much as the model itself, and arguably the interface you use to drive that harness matters even more.

dev workflows are deeply personal. what works well for one developer may hinder another, so it's difficult to converge on a single UX (e.g. CLI vs. TUI vs. GUI vs. IDE extension) that isn't either compromising or too generalized.

while it doesn't come without drawbacks, ACP is a solid stopgap for running the same harness across multiple interfaces. pick your frontend, keep your agent.

deepagents ships with this out of the box -- two ways to plug it in:

- deepagents-acp is our standalone ACP server to serve *any* agent
- `deepagents-cli --acp` to use our existing CLI agent over ACP

point any ACP-compatible client at it and you've got the same deepagents harness, your choice of open-weight model & provider, and your choice of interface.

some popular exemplars:

- `toad` is an agent-agnostic TUI that ships deepagents support built-in, made possible via ACP: github.com/batrachianai/t… (@willmcgugan @textualizeio)
- you can use deepagents directly in any modern IDE, see this blog post from @jetbrains coauthored by our very own @Hacubu: blog.jetbrains.com/ai/2026/04/usi…

the model is yours to pick. the interface is yours to pick. the harness shouldn't be the thing that locks you in.

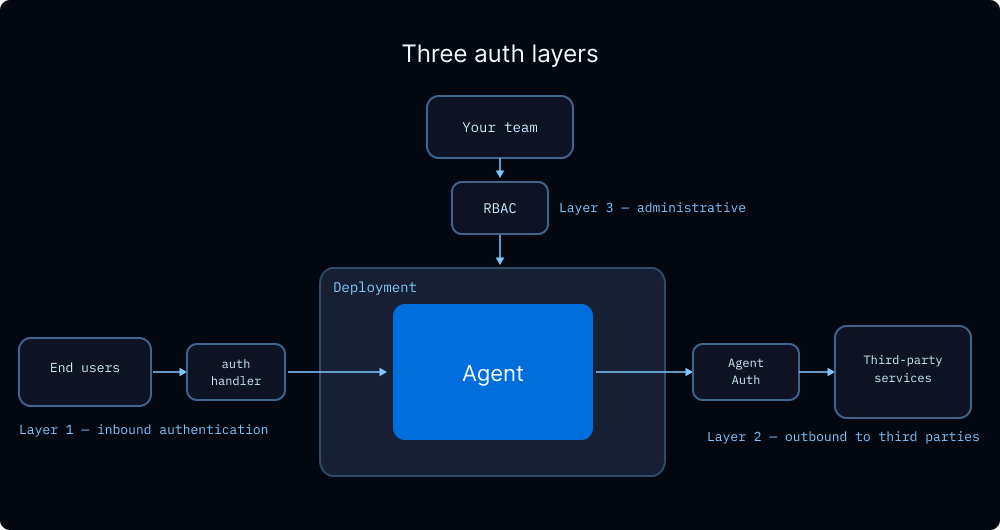

Not every step in an agent workflow needs the same model. Fleet now lets you customize which model each sub-agent uses, so you can route simple tasks to fast/cheap models and keep stronger models for the hard parts. Here’s what that looks like:

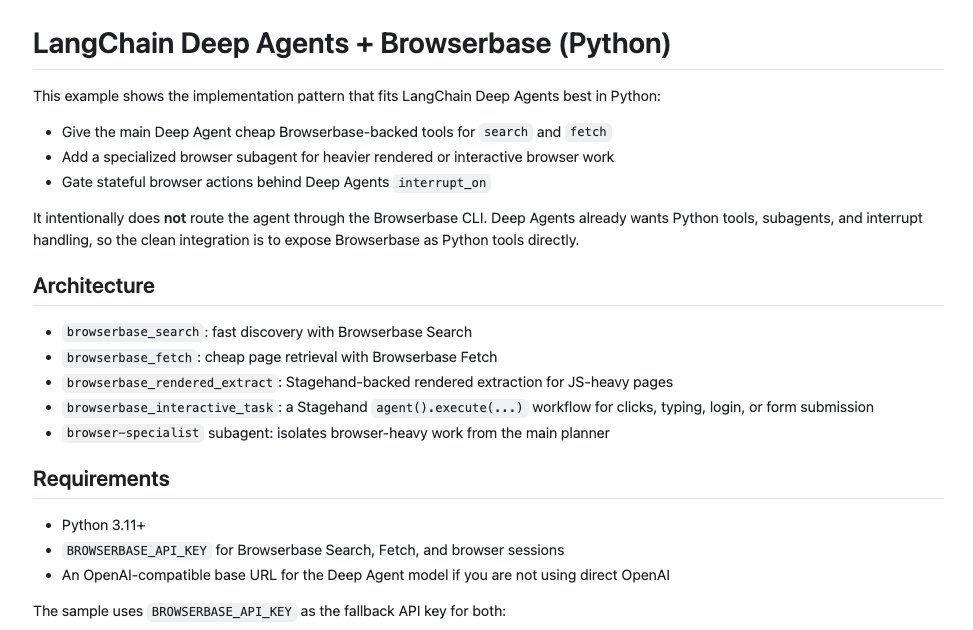
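As a rough sketch of the routing idea above (not Fleet's actual configuration format, which the post only shows as a screenshot), per-sub-agent model selection can be thought of as a lookup with a default. The sub-agent names and model identifiers below are hypothetical placeholders.

```python
# Hypothetical sub-agent names and model identifiers, for illustration only.
DEFAULT_MODEL = "strong-model"

# Simple, high-volume steps go to a fast/cheap model; hard steps keep the strong one.
SUBAGENT_MODELS = {
    "summarizer": "fast-cheap-model",
    "classifier": "fast-cheap-model",
    "planner": "strong-model",
}


def model_for(subagent: str) -> str:
    """Return the model assigned to a sub-agent, falling back to the default."""
    return SUBAGENT_MODELS.get(subagent, DEFAULT_MODEL)


print(model_for("summarizer"))
print(model_for("code_reviewer"))  # not listed, so it gets the default
```

Falling back to the stronger model for unlisted sub-agents is a design choice in this sketch: it trades cost for safety when a new sub-agent has not been classified yet.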

Build agents with LangChain + @browserbase. Give your Deep Agents search, fetch, and browser subagents to access the full web. All with full observability with the Browserbase dashboard.

create_agent - how we build Deep Agents on the simplest harness primitive

underlying all of the harness engineering, research, and API design in Deep Agents is a very simple primitive in LangChain called create_agent

the entire design of deepagents comes from optionally extending this base harness primitive to support behaviors that we and our community found useful for agent engineering, such as:

- filesystem tools, bash
- compaction + context offloading
- subagents, skills, and memory support
- hooks
- more

it has entry points for Tools, Middleware (hooks), Providers and more, which means the base is very extensible (deepagents is a living example of this)

some great partners & builders build production agents entirely on the create_agent API, and I think we could share a bunch more content on it

open to suggestions! if there's interest, the idea would be to show how we derived deepagents from first principles and map this to code built on create_agent, which is already all open source

basically:

1. want to share that this has existed as a primitive for over a year and was codified in our LangChain 1.0 release last year
2. what content would people want to see around this?

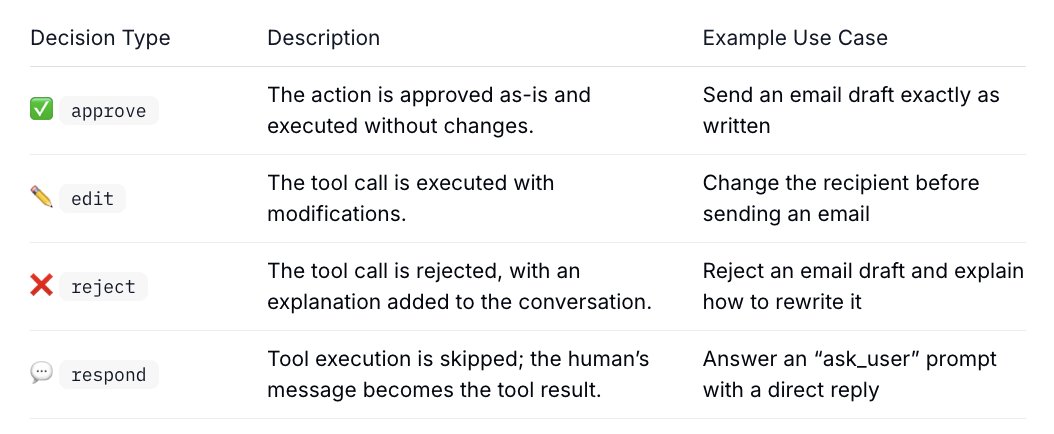

most of the time, you want an agent loop to run uninterrupted. that's where the utility comes from! but some decisions shouldn't be delegated to the agent. two situations come up consistently:

1/ before a consequential action, like sending an email, executing a transaction, or deleting files, you want to see exactly what the agent is about to do. approve it, edit it, or push back with feedback so it can revise and try again.

2/ when the agent hits a judgment call it can't resolve alone. not because it's missing a tool, but because the answer depends on your preference. "which config file should i modify?" or "should this go to staging or production?" your answer gets fed directly back into the run.

here's the part that matters for production: these pauses can last indefinitely. seconds, hours, days. that's only possible if the runtime persists state across the response gap. when the human responds, whenever that is, the agent reloads full context and continues from exactly where it stopped.

in langgraph, `interrupt()` saves state to a checkpointer and surfaces a payload to the caller. `Command(resume=...)` reloads it and picks up execution. langchain and deep agents build on top of those primitives with HITL middleware, so instead of wiring this yourself, you attach HITL policies directly to tool calls.

docs.langchain.com/oss/python/lan…
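The pause-and-resume pattern described above can be illustrated with a minimal, stdlib-only sketch. This is not LangGraph's implementation or API: the `CHECKPOINTS` store, the `run_until_pause` and `resume` functions, and the decision shapes are all hypothetical stand-ins for a checkpointer, `interrupt()`, and `Command(resume=...)`.

```python
import json

# Hypothetical in-memory "checkpointer": thread id -> serialized run state.
# A real runtime would use durable storage so pauses can last for days.
CHECKPOINTS: dict[str, str] = {}


def run_until_pause(thread_id: str, action: dict) -> dict:
    """Stop before a consequential action, persist state, and surface a payload."""
    state = {"pending_action": action, "log": ["planned action"]}
    CHECKPOINTS[thread_id] = json.dumps(state)
    # The caller shows this payload to the human for review.
    return {"needs_review": action}


def resume(thread_id: str, decision: dict) -> list[str]:
    """Reload the saved state and continue according to the human's decision."""
    state = json.loads(CHECKPOINTS.pop(thread_id))
    action, log = state["pending_action"], state["log"]
    kind = decision["type"]
    if kind == "edit":
        # The human changed the arguments before approving.
        action["args"] = decision["args"]
    if kind in ("approve", "edit"):
        log.append(f"executed {action['tool']} with {action['args']}")
    elif kind == "reject":
        log.append(f"revising after feedback: {decision['feedback']}")
    else:
        raise ValueError(f"unknown decision type: {kind}")
    return log


payload = run_until_pause(
    "thread-1", {"tool": "send_email", "args": {"to": "a@example.com"}}
)
# ... seconds, hours, or days later, the human responds ...
result = resume("thread-1", {"type": "edit", "args": {"to": "b@example.com"}})
print(payload)
print(result)
```

Because the state is serialized before the function returns, nothing has to stay in memory while waiting on the human, which is what lets the gap be arbitrarily long.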

New in Deep Agents: Harness Profiles. langchain.com/blog/tuning-de… ✅ Model-specific profiles to adjust prompts, tools, and middleware. 📦 Profiles for @OpenAI, @Anthropic, and @Google models out of the box. Currently available in Python, and coming soon to TypeScript.