Luke Parrish

20.3K posts

@lsparrish

Generalist currently specializing in antimatter production https://t.co/AysUJWYBea

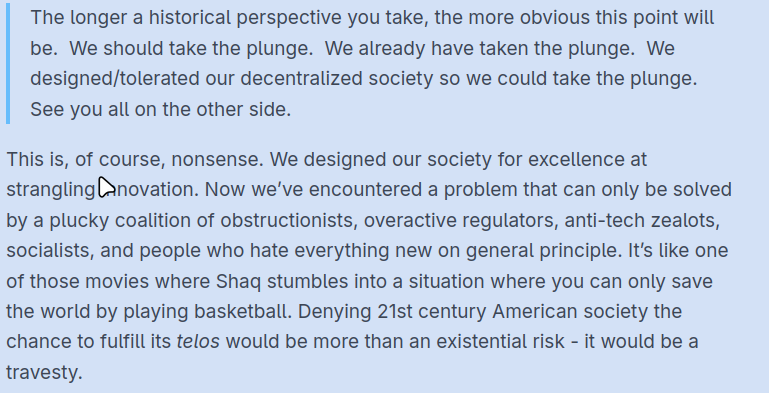

A lot of people on here think they’re secret geniuses whose tweets don’t get views because the timeline only rewards low-IQ slop. It’s mostly a cope. If your ideas are actually good, they will eventually resonate. You’re just not as smart as you think.

It looks to me like telegrams were the optimal level of social communication technology, and society began to disintegrate after voice radio was invented, though not to the same extent as it began to collapse after television.

Will AI become smarter than humans? If so, is humanity in danger? I went to Silicon Valley to ask some of the leading AI experts that question. Here’s what they had to say:

@jonathanstray I think the close reading of the contract language is a nerd trap when the counterparty is the Pentagon rather than, like, Goldman Sachs

New Paper💡 Have you ever heard of grokking, a sudden transition from memorization to generalization? People have attributed grokking to weight decay, Fourier structure, optimization regimes, phase transitions, numerical effects… These can shape the training dynamics, but they don’t answer the core question: "what determines which representation the model learns, and why it generalizes?" We argue the key is intrinsic task symmetries. Paper: arxiv.org/pdf/2603.01968