Ziheng Lu

34 posts

@luzihen

Ex. Principal Researcher @ Microsoft Research AI4Science, materials science, deep learning.

@jwt0625 Sankey diagrams are implemented via plotly in pymatviz (github.com/janosh/pymatviz), but beyond symmetry changes we haven’t really found anything good to plot in this format.

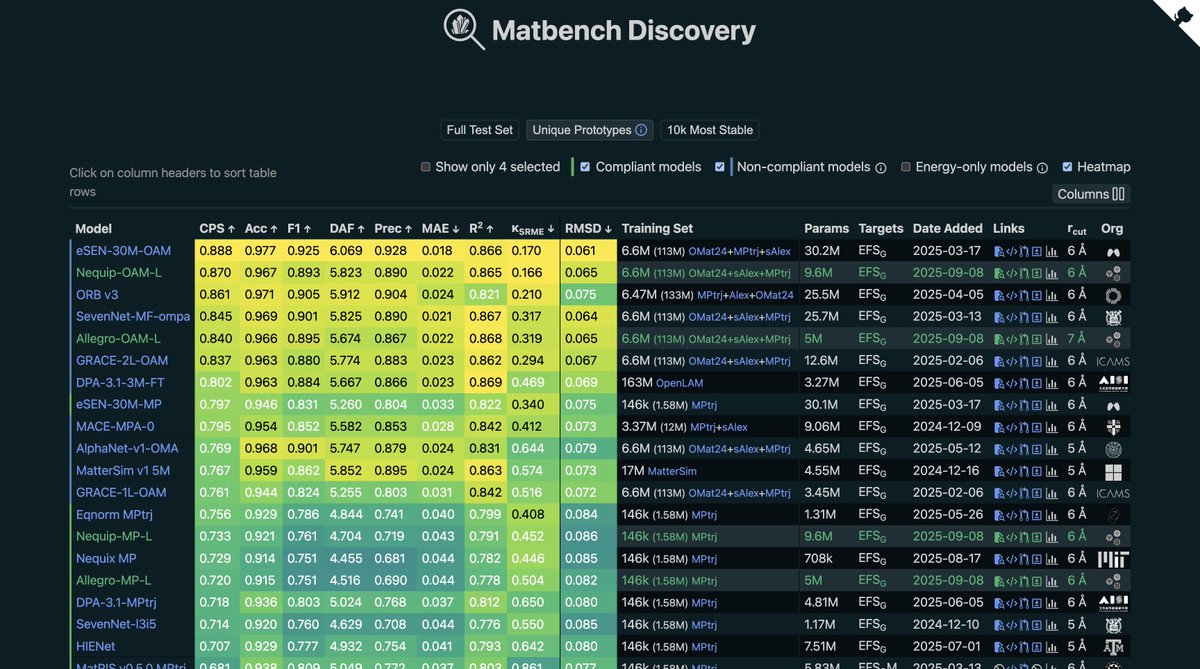

Using the @MSFTResearch MatterSim model, we have explored the upper limits of bulk materials' thermal conductivity. While we found several highly conductive materials, none has a thermal conductivity as high as diamond's. @ZNanotheory @luzihen @HongxiaHao arxiv.org/abs/2503.11568

Super excited to preprint our work on developing a Biomolecular Emulator (BioEmu): Scalable emulation of protein equilibrium ensembles with generative deep learning from @MSFTResearch AI for Science. #ML #AI #NeuralNetworks #Biology #AI4Science biorxiv.org/content/10.110…