Michael Eli Sander

@m_e_sander

Research Scientist at Google DeepMind

1/ If you’re familiar with RLHF, you have likely heard of reward hacking, where over-optimizing an imperfect reward model leads to unintended behaviors. But what about teacher hacking in knowledge distillation: can the teacher be hacked, like rewards in RLHF?

I'm excited to share a new paper: "Mastering Board Games by External and Internal Planning with Language Models" storage.googleapis.com/deepmind-media… (also soon to be up on arXiv, once it has been processed there)

📽️ We interviewed @SibylleMarcotte, PhD student at @ENS_ULM, member of the Ockham team, and winner 🏆 of the 2024 L'Oréal - @UNESCO #ForWomenInScience France Young Talents Prize ▶️ her research and her advice for girls who want to become scientists #scientifiques :) @UnivLyon1 @ENSdeLyon

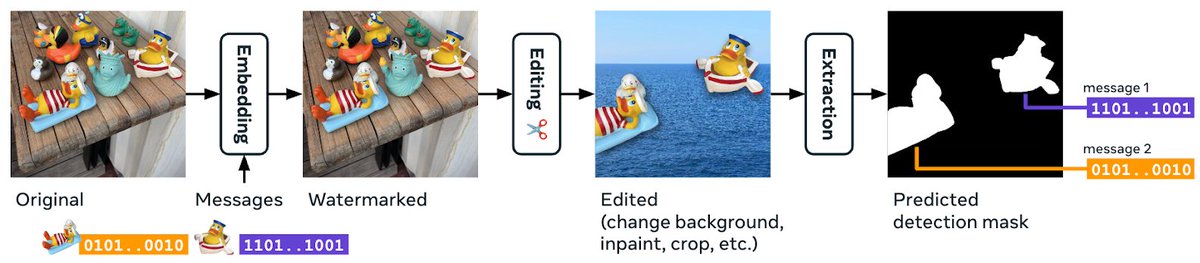

OpenAI may secretly know that you trained on GPT outputs! In our work "Watermarking Makes Language Models Radioactive", we show that training on watermarked text can be easily spotted ☢️ Paper: arxiv.org/abs/2402.14904 @pierrefdz @AIatMeta @Polytechnique @Inria

#FWIS2024 🎖️ @SibylleMarcotte, PhD student in the Department of Mathematics and Applications (#mathématiques) at ENS @psl_univ, is among the winners of the 2024 France Young Talents Prize @FondationLOreal @UNESCO #ForWomenInScience @AcadSciences @4womeninscience Congratulations to her!!! 👏

🥳 I’m very happy to announce our preprint biorxiv.org/content/10.110… ! scConfluence combines uncoupled autoencoders with Inverse Optimal Transport to integrate unpaired multimodal single-cell data in a shared low-dimensional latent space. @LauCan88 @gabrielpeyre

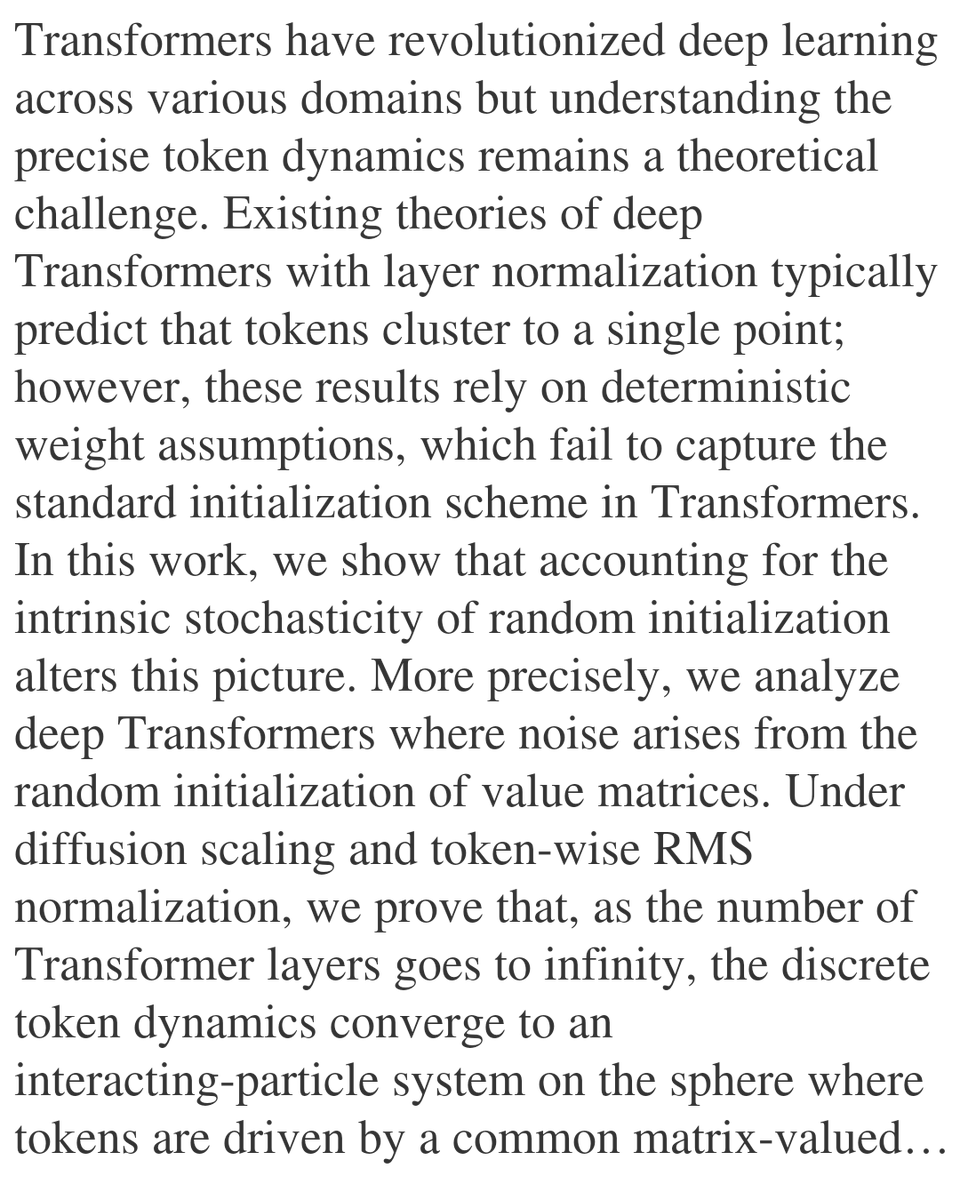

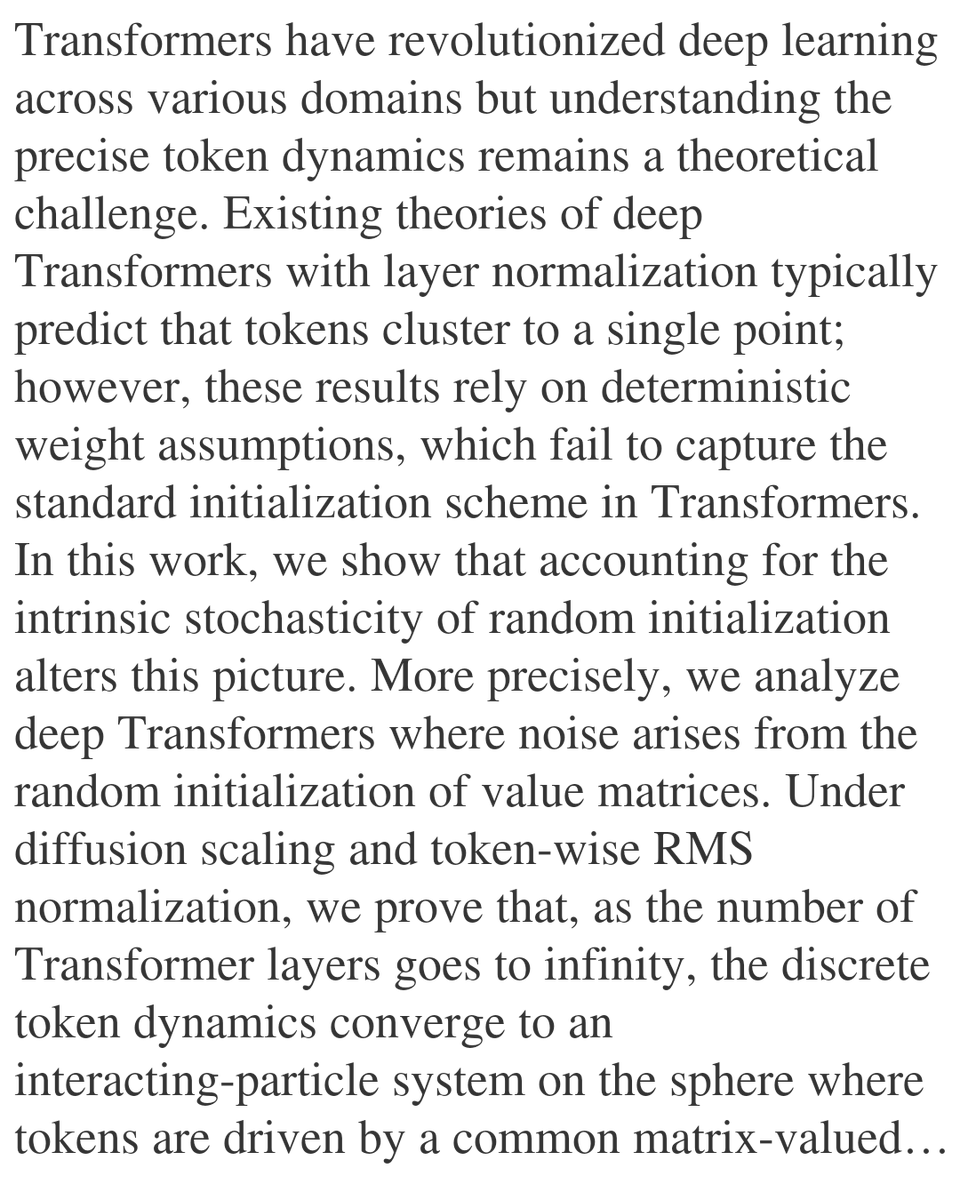

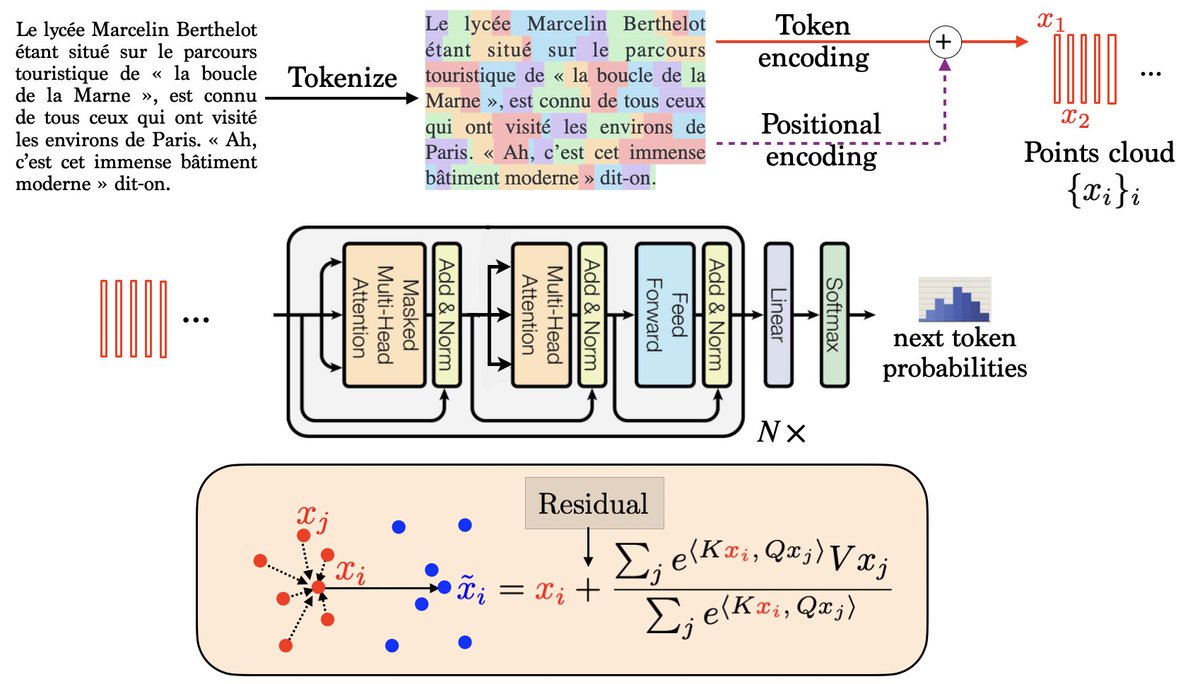

🚨🚨 New ICML 2024 Paper: arxiv.org/abs/2402.05787 How do Transformers perform In-Context Autoregressive Learning? We investigate how causal Transformers learn simple autoregressive processes of order 1. With @RGiryes, @btreetaiji, @mblondel_ml and @gabrielpeyre 🙏