Mike Ballesteros

450 posts

Mike Ballesteros

@maballesterosv

CTO y co-founder de @GoKoan y @gokoan_ai. Imaginando cómo hacer que la tecnología ayude a la gente a aprender más en menos tiempo 📚 #edtech #ai #nlp

I’m so excited to announce Gemma 3n is here! 🎉 🔊Multimodal (text/audio/image/video) understanding 🤯Runs with as little as 2GB of RAM 🏆First model under 10B with @lmarena_ai score of 1300+ Available now on @huggingface, @kaggle, llama.cpp, ai.dev, and more

+1 for "context engineering" over "prompt engineering". People associate prompts with short task descriptions you'd give an LLM in your day-to-day use. When in every industrial-strength LLM app, context engineering is the delicate art and science of filling the context window with just the right information for the next step. Science because doing this right involves task descriptions and explanations, few shot examples, RAG, related (possibly multimodal) data, tools, state and history, compacting... Too little or of the wrong form and the LLM doesn't have the right context for optimal performance. Too much or too irrelevant and the LLM costs might go up and performance might come down. Doing this well is highly non-trivial. And art because of the guiding intuition around LLM psychology of people spirits. On top of context engineering itself, an LLM app has to: - break up problems just right into control flows - pack the context windows just right - dispatch calls to LLMs of the right kind and capability - handle generation-verification UIUX flows - a lot more - guardrails, security, evals, parallelism, prefetching, ... So context engineering is just one small piece of an emerging thick layer of non-trivial software that coordinates individual LLM calls (and a lot more) into full LLM apps. The term "ChatGPT wrapper" is tired and really, really wrong.

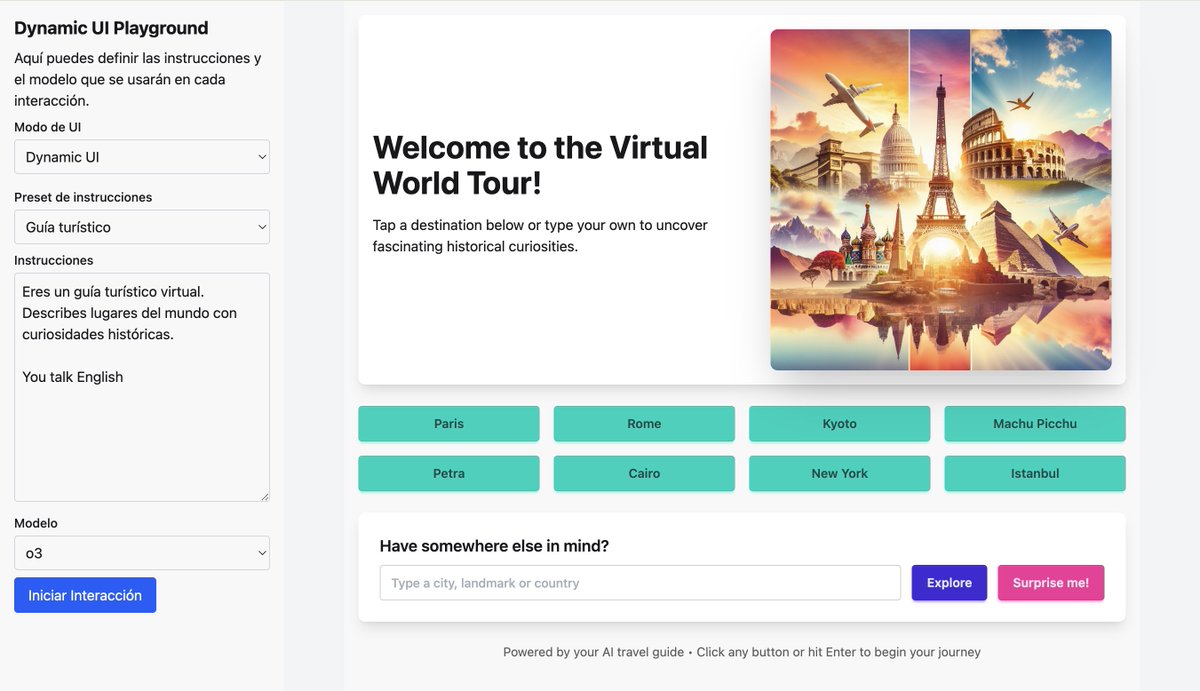

"Chatting" with LLM feels like using an 80s computer terminal. The GUI hasn't been invented, yet but imo some properties of it can start to be predicted. 1 it will be visual (like GUIs of the past) because vision (pictures, charts, animations, not so much reading) is the 10-lane highway into brain. It's the highest input information bandwidth and ~1/3 of brain compute is dedicated to it. 2 it will be generative an input-conditional, i.e. the GUI is generated on-demand, specifically for your prompt, and everything is present and reconfigured with the immediate purpose in mind. 3 a little bit more of an open question - the degree of procedural. On one end of the axis you can imagine one big diffusion model dreaming up the entire output canvas. On the other, a page filled with (procedural) React components or so (think: images, charts, animations, diagrams, ...). I'd guess a mix, with the latter as the primary skeleton. But I'm placing my bets now that some fluid, magical, ephemeral, interactive 2D canvas (GUI) written from scratch and just for you is the limit as capability goes to \infty. And I think it has already slowly started (e.g. think: code blocks / highlighting, latex blocks, markdown e.g. bold, italic, lists, tables, even emoji, and maybe more ambitiously the Artifacts tab, with Mermaid charts or fuller apps), though it's all kind of very early and primitive. Shoutout to Iron Man in particular (and to some extent Start Trek / Minority Report) as popular science AI/UI portrayals barking up this tree.

Gemini 2.0 Flash debuts native image gen! Create contextually relevant images, edit conversationally, and generate long text in images. All totally optimized for chat iteration. Try it in AI Studio or Gemini API. Blog: developers.googleblog.com/en/experiment-…