Stefano

@maeste

Code climber - no problem with strong opinions - https://t.co/mU1It6LAKI - https://t.co/wslalil0Wo

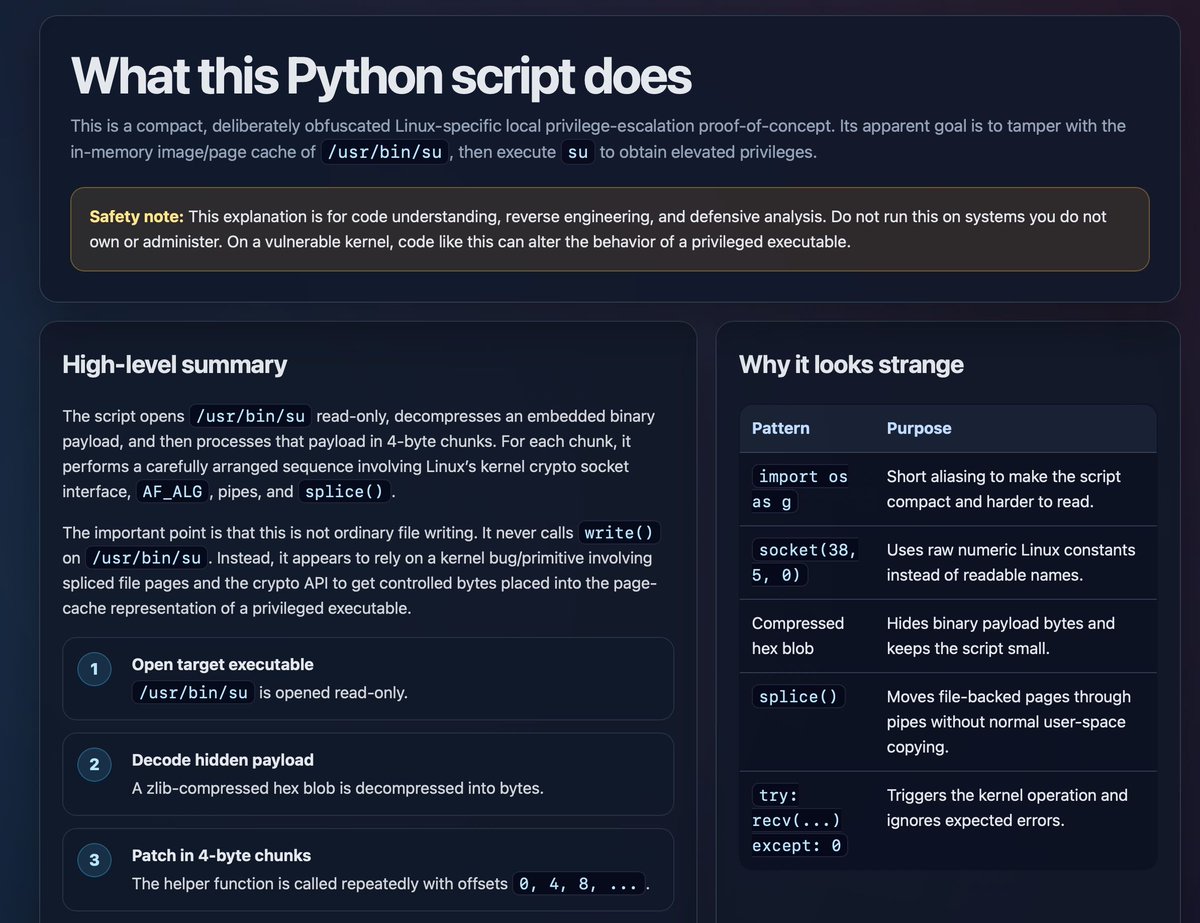

A nice thing about DeepSeek V4 Flash locally is that it’s a big enough model that you can have it explain shit to you and it won’t completely lie to you. Tried to walk through some choices in ds4.c and I felt pretty good about the experience.

My Mac had less available memory than I expected. It turned out the "claude" Claude Code processes on this machine (running in various terminal windows) were consuming ~30GB on their own! The largest one was using 4.9GB.

Today we're sharing our work on interaction models: a new class of model trained from scratch to handle real-time interaction natively, instead of gluing it onto a turn-based one. youtu.be/A12AVongNN4

Appreciate Ivan's tweet. To put this into context, to build DS4 I used: my MacBook M3 Max (mine, 8k euros), one M3 Ultra with 512 GB (got access, 10k euros), and one DGX Spark (got access, 4k euros?). Are we far from the times when all you needed to do hacking was a computer? That's sad.