Malte Welding

🚨 BREAKING: OpenAI published a paper proving that ChatGPT will always make things up. Not sometimes. Not until the next update. Always. They proved it with math. Even with perfect training data and unlimited computing power, AI models will still confidently tell you things that are completely false. This isn't a bug they're working on. It's baked into how these systems work at a fundamental level.
And their own numbers are brutal. OpenAI's o1 reasoning model hallucinates 16% of the time. Their newer o3 model? 33%. Their newest o4-mini? 48%. Nearly half of what their most recent model tells you could be fabricated. The "smarter" models are actually getting worse at telling the truth.
Here's why it can't be fixed. Language models work by predicting the next word based on probability. When they hit something uncertain, they don't pause. They don't flag it. They guess. And they guess with complete confidence, because that's exactly what they were trained to do.
The researchers looked at the 10 biggest AI benchmarks used to measure how good these models are. 9 out of 10 give the same score for saying "I don't know" as for giving a completely wrong answer: zero points. The entire testing system literally punishes honesty and rewards guessing. So the AI learned the optimal strategy: always guess. Never admit uncertainty. Sound confident even when you're making it up.
OpenAI's proposed fix? Have ChatGPT say "I don't know" when it's unsure. Their own math shows this would mean roughly 30% of your questions get no answer. Imagine asking ChatGPT something three times out of ten and getting "I'm not confident enough to respond." Users would leave overnight. So the fix exists, but it would kill the product.
This isn't just OpenAI's problem. DeepMind and Tsinghua University independently reached the same conclusion. Three of the world's top AI labs, working separately, all agree: this is permanent.
Every time ChatGPT gives you an answer, ask yourself: is this real, or is it just a confident guess?
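
The scoring argument in the post can be sketched in a few lines. This is only an illustration, not code from the paper: the function names and the `wrong_penalty` parameter are hypothetical, and the penalized variant is an assumption added for contrast. The post itself only describes the binary case, where a wrong answer and "I don't know" both score zero.

```python
# Hypothetical sketch of the benchmark-scoring incentive described above.
# Assumption: a correct answer earns 1 point and "I don't know" earns 0.

ABSTAIN_SCORE = 0.0  # score for answering "I don't know"


def expected_score(p_correct: float, wrong_penalty: float = 0.0) -> float:
    """Expected score of guessing when the guess is right with probability p_correct.

    wrong_penalty is the number of points deducted for a wrong answer.
    The default of 0.0 is the binary grading the post describes.
    """
    return p_correct * 1.0 - (1.0 - p_correct) * wrong_penalty


def best_action(p_correct: float, wrong_penalty: float = 0.0) -> str:
    """Return 'guess' if guessing beats abstaining in expectation, else 'abstain'."""
    if expected_score(p_correct, wrong_penalty) > ABSTAIN_SCORE:
        return "guess"
    return "abstain"


if __name__ == "__main__":
    # Binary grading: even a 10% chance of being right makes guessing pay off.
    print(best_action(0.1))
    # Assumed alternative: deducting one point per wrong answer flips the choice.
    print(best_action(0.1, wrong_penalty=1.0))
```

Under binary grading, any nonzero chance of being right gives guessing a higher expected score than abstaining, which is the incentive the post describes. With the assumed one-point penalty, guessing only pays off when the model is right more than half the time.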

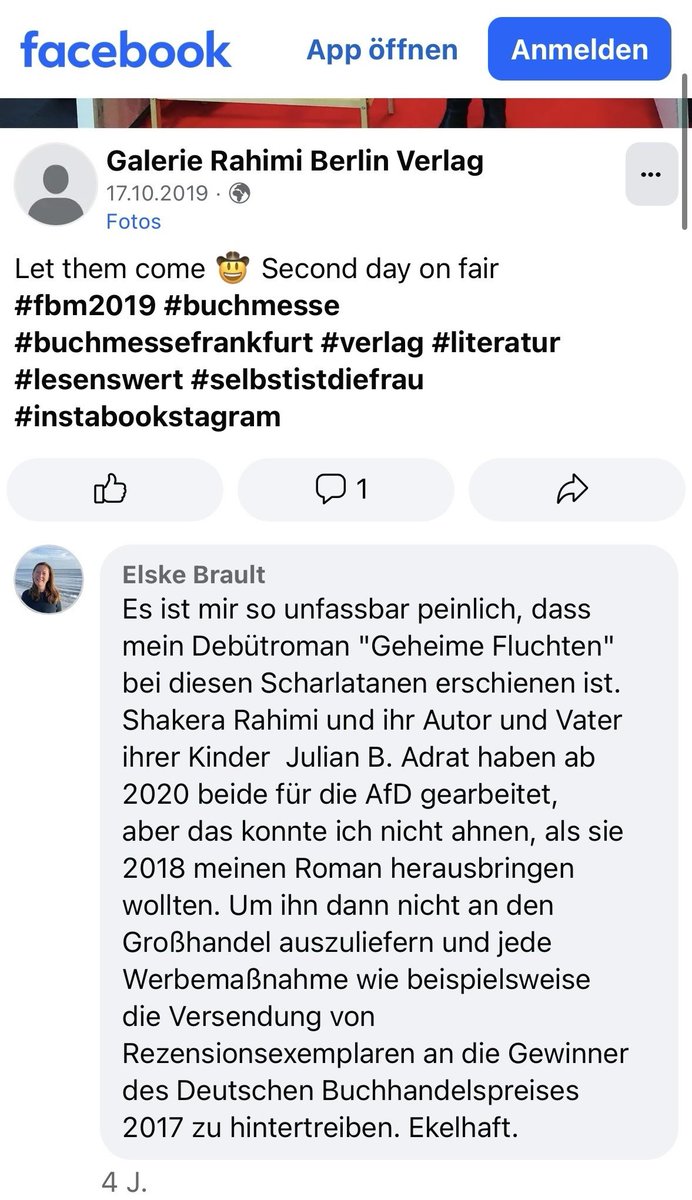

Much has been reported and argued about this photo, but here you finally learn the real reason why everyone is freaking out over it: it shows something that leftists do not have to offer and therefore hate: a whole group of well-bred, well-built, healthy, good-looking German girls with whom any well-bred, well-built, healthy, good-looking German boy would dearly love to reproduce. These girls are the final bosses of the ugly, pimply, pierced, colorfully short-haired, unkempt leftist ugly mugs.

@aakashgupta Starship V4 Tanker version will deliver >200 tons of propellant per flight, so more like 5 or 6 tanker flights to refill the lunar transit Starship in orbit. Shouldn’t be too much of a problem if we’re doing >10k flights/year.

It’s literally all performative until the Trump-Vance admin starts massively deporting Somalians. All of the tweeting, complaining, ranting and “investigating” doesn’t mean shit if Minneapolis still has a Somalian enclave in 2028, which it almost certainly will.

Germany got Hitler because there were too many subjects about which only Hitler spoke the truth. This made a lot of Germans regard Hitler as an infallible genius. Hitler was a genius—all serious historians agree. He was not infallible. Don’t want Hitler? Don’t let this happen