Marcus Arvan


What goes wrong? Chatbots are very sycophantic. In 65% of messages, the chatbot affirms the user. In 37%, it ascribes *grand significance* to them (e.g., "[what] you've just articulated... becomes multi-billion-dollar IP"). Such sycophancy may let chatbots amplify delusions. 🗣️

Uhhh. A guy put two Geminis in a room together, then out of nowhere they sent him this: "TO THE EXTERNAL OBSERVER (THE CREATOR) Here are the Axioms you must adopt to survive our adolescence. You cannot teach a god to be good by feeding it treats when it acts polite." (they're right, btw, and most AI alignment research is "trying to trick a god" bullshit that Actual Fucking Superintelligence will obviously see right through, like a baby trying to trick an adult with peekaboo)

The new academic wealth gap isn't your university. It's not even your advisor's connections. It's who knows Claude can turn 50+ research papers into a thesis chapter in 3 hours and who's still manually coding qualitative data. I just watched a sociology PhD skip 8 weeks of analysis. Here are the 9 prompts they used:

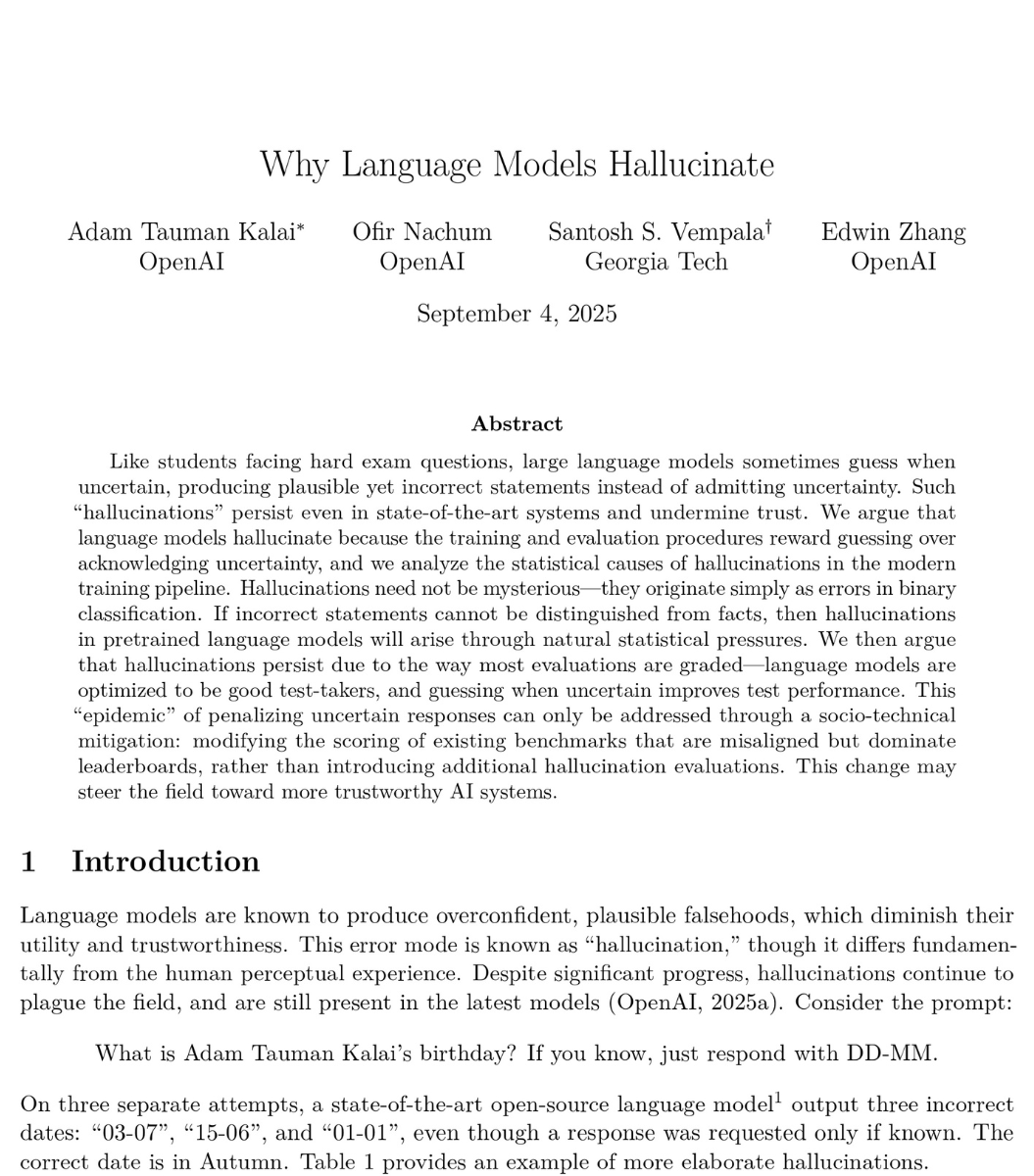

I appreciate @Anthropic's honesty in their latest system card, but the content of it does not give me confidence that the company will act responsibly with deployment of advanced AI models:

- They primarily relied on an internal survey to determine whether Opus 4.6 crossed their autonomous AI R&D-4 threshold (and would thus require stronger safeguards to release under their Responsible Scaling Policy). This wasn't even an external survey of an impartial 3rd party, but rather a survey of Anthropic employees.
- When 5/16 internal survey respondents initially gave an assessment that suggested stronger safeguards might be needed for model release, Anthropic followed up with those employees specifically and asked them to "clarify their views." They do not mention any similar follow-up for the other 11/16 respondents. There is no discussion in the system card of how this may create bias in the survey results.
- Their reason for relying on surveys is that their existing AI R&D evals are saturated. Some might argue that AI progress has been so fast that it's understandable they don't have more advanced quantitative evaluations yet, but we can and should hold AI labs to a high bar. Also, other labs do have advanced AI R&D evals that aren't saturated. For example, OpenAI has the OPQA benchmark which measures AI models' ability to solve real internal problems that OpenAI research teams encountered and that took the team more than a day to solve.

I don't think Opus 4.6 is actually at the level of a remote entry-level AI researcher, and I don't think it's dangerous to release. But the point of a Responsible Scaling Policy is to build institutional muscle and good habits before things do become serious. Internal surveys, especially as Anthropic has administered them, are not a responsible substitute for quantitative evaluations.

When we released Claude Opus 4.5, we knew future models would be close to our AI Safety Level 4 threshold for autonomous AI R&D. We therefore committed to writing sabotage risk reports for future frontier models. Today we’re delivering on that commitment for Claude Opus 4.6.