Marc Weiss

1.9K posts

Marc Weiss retweeted

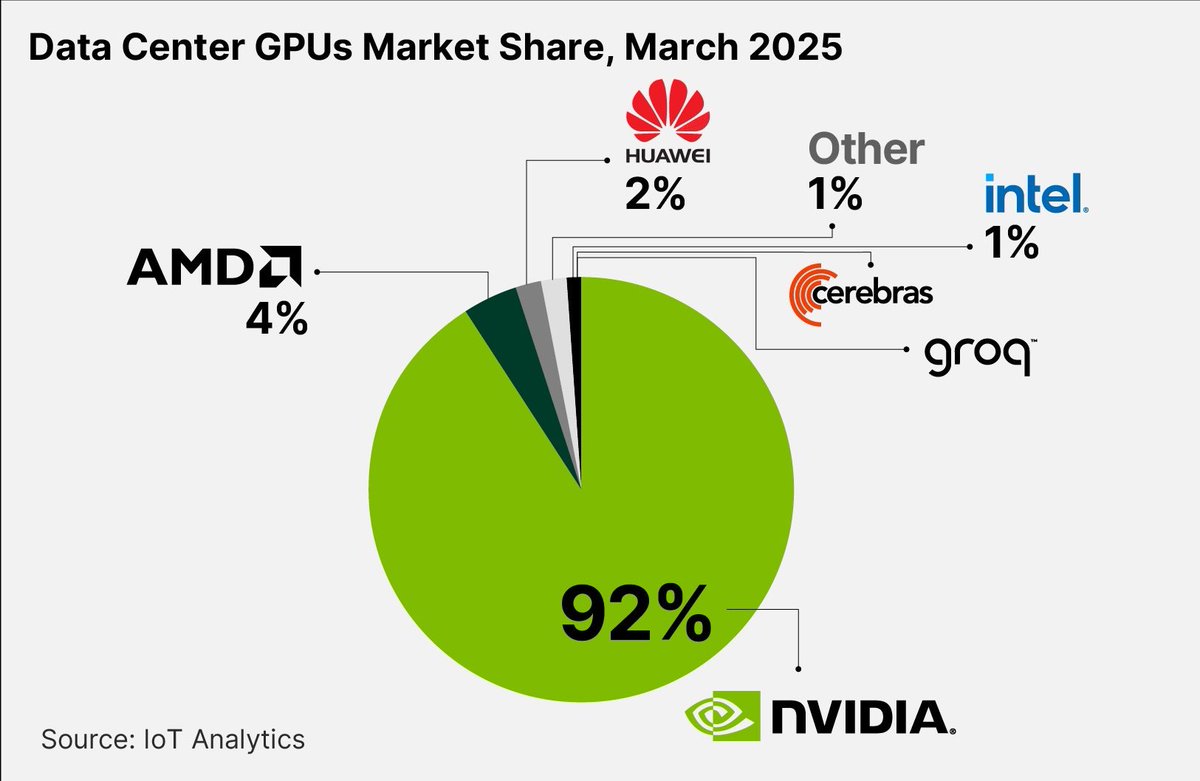

Don’t be fooled by the $NVDA at 21x forward earnings narrative.

They hold over 90% market share in data center GPUs and hyperscalers are actively looking to diversify away from them due to concentration risk and increasing availability of competitive alternatives.

The bulk of AI workloads is shifting to inference, where custom silicon and $AMD GPUs, which lead in memory bandwidth, capacity, and request throughput for CPU-based inference, offer better overall price/performance.

Even if total demand keeps increasing, $NVDA is unlikely to maintain its current level of dominance over the long term.

$AMD at 20x forward earnings is a superior bet even though $NVDA is a better company since $AMD is starting from a much smaller revenue base with a lot of room to increase its market share.

Long $AMD.

App Economy Insights@EconomyApp

$NVDA is now trading at 21x forward earnings. Cheaper than the S&P 500 average for the first time in over a decade. Meanwhile, $AAPL trades at nearly 30x. Which one beats the market from here?

Marc Weiss retweeted

In all seriousness, I’m not touching quantum until they all come back to valuations that reflect the true duration and state of the technology's effectiveness (which is currently NOT effective).

When I was an OG $IonQ investor, I believed the “quantum is now” story, which was constantly put out by management; I’ve discussed this extensively, so I won’t repeat that part. On the premise that quantum gets going in the 2030s, I would wait for bargain-basement valuations on IonQ, Quantinuum (also embedded deep in U.S. National Labs and DoD), and perhaps others such as Infleqtion ($INFQ) and PsiQuantum, if they IPO. I would buy a bit of each.

The notion that IonQ and Oxford Ionics are ahead of anyone right now turned out not to be true. Additionally, although I seldom reply to quantum threads these days because most of the people who discuss IonQ blocked me long ago, people repeatedly bring up the idea that government and DoD investment in quantum is bullish. The fact is that this has been going on for literally decades. Intelligence, DoD, and National Labs have been involved in quantum and QIS since the 1990s. The first CEO of IonQ, David Moehring, was a literal CIA spook, coming out of intelligence-linked quantum programs and serving as IonQ’s CEO starting in 2016. DoD is all over IonQ and always has been.

The only bullish thing will be when they sell a QPU that does something useful. And that goes for all of the companies.

IonQ was the very worst offender among the quantum companies in hyping and lying. I’m willing to forgive them later, I guess, if they really wow us and accept the premise that it’s an R&D company, but I also know full well that Monroe is saying it won’t work "better than his laptop" (he literally said this) for at least 5 more years, and that there are a lot of other companies that can have breakthroughs. That’s the reality. Would I buy IonQ again as a lotto on quantum at $10? Maybe, but I think all the evidence shows that this is an open race.

Also all the acquisitions show how desperate they are to acquire tech to have revenue and try new approaches to make the QPUs work because they currently don’t really work for much at all.

I also have to say that IonQ’s hyped pivot to the quantum internet is confusing to me, because it still depends on being ready for a world that doesn’t exist. You don’t need physical hardware to have PQC or quantum-safe security. For quantum to even be a threat, however, you do need QPUs that work at a level that simply doesn’t exist today. Monroe said breaking current encryption is decades away, and to my understanding there are already solutions on the shelf that don’t even require physical QKD (this is literally on the NSA website).

The point of IonQ has been to have a govt adjacent research shop that can fund itself at the expense of public markets to do R&D and has succeeded brilliantly at that goal, good job Niccolo, without keeping any of those promises yet about things they said would work many times.

The real signal will be when a private sector customer buys a QPU because it actually does something useful and pays for it with real cash, not IonQ paying them in shares to “buy” it, not a government lab buying it for R&D, not a pilot, not a demo with a toy problem paper attached. A real enterprise use case where the economics make sense today.

That’s when it’s investable at scale.

Eric Draven@DravenEric94535

@JKeynesAlpha Serious question: If Quantinuum IPOs this year, would you consider opening a position?

Marc Weiss retweeted

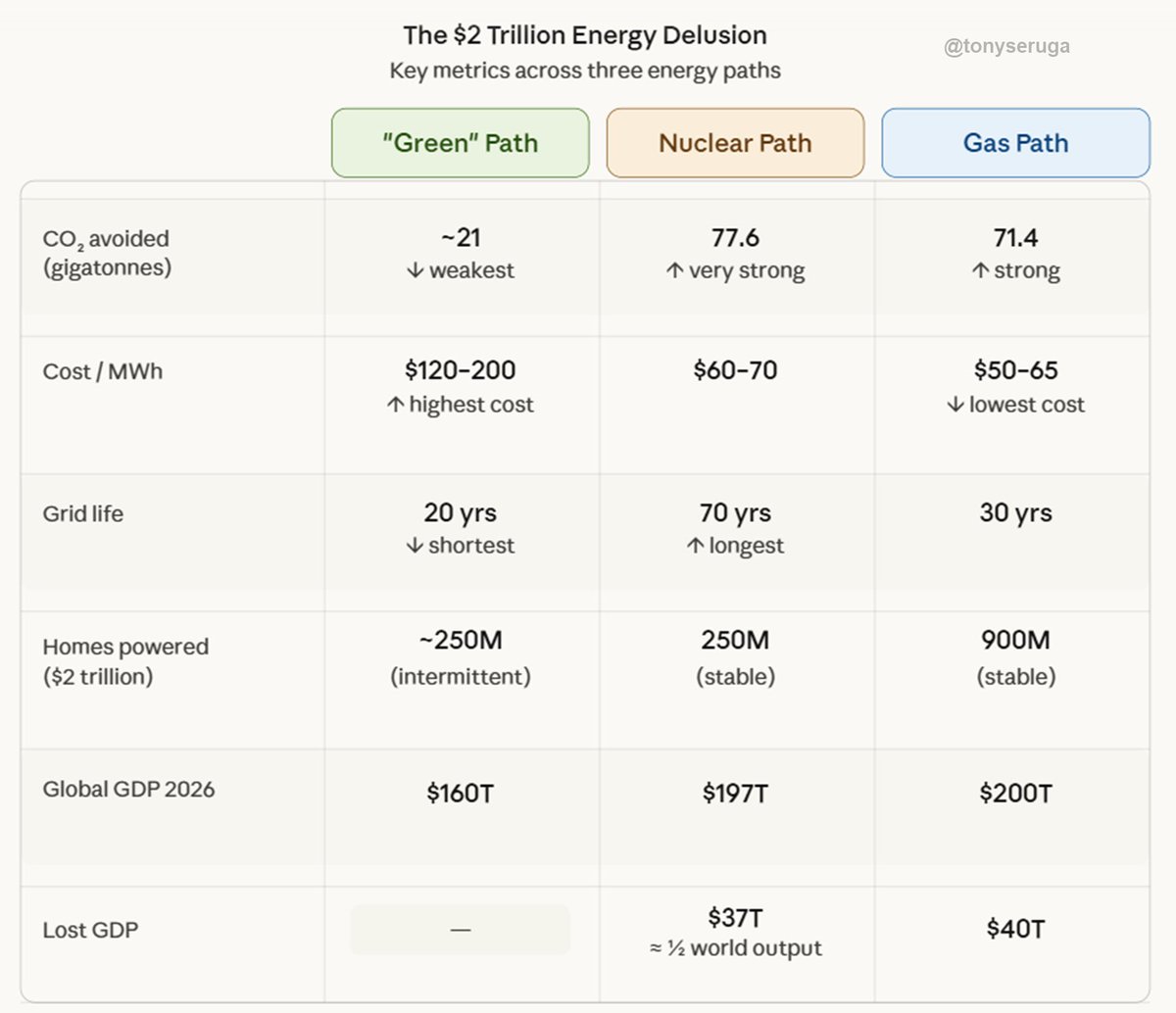

🚨 $2 Trillion Later, The Green Revolution Collapsed: How Chasing Weather Power Bankrupted the Grid and Cost the World $40 Trillion in Growth

Between 2010 and 2026, governments and corporations poured roughly $2 trillion into solar, wind, and “net‑zero” programs under the promise of an imminent clean‑energy transition. What the public received instead was an illusion—a fragile grid, higher electricity prices, and negligible climate benefits. Energy remained just as carbon‑intensive, but far more expensive and unreliable.

The fundamental error was confusing installed capacity with delivered power. Wind and solar often deliver only about 20% of their nameplate capacity averaged over the year (their capacity factor); fossil and nuclear plants consistently run at 60-90%. Billions went to weather-dependent infrastructure that must still be backed up by coal and gas. Once backups, grid stabilization, and battery losses are factored in, true delivered costs for renewables reach $120–250 per MWh, double or triple those of gas, coal, or nuclear.
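The gap between installed capacity and delivered energy can be made concrete with a small calculation. This is a minimal sketch, assuming two hypothetical 1,000 MW plants and using the post's 20% and 90% figures as capacity factors; the function name and plant sizes are illustrative, not taken from the post.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def annual_energy_mwh(nameplate_mw: float, capacity_factor: float) -> float:
    """Energy delivered in one year by a plant with the given nameplate
    capacity (MW) and average capacity factor (fraction between 0 and 1)."""
    if not 0.0 <= capacity_factor <= 1.0:
        raise ValueError("capacity_factor must be between 0 and 1")
    return nameplate_mw * capacity_factor * HOURS_PER_YEAR


if __name__ == "__main__":
    # Hypothetical plants with identical nameplate capacity.
    weather_dependent = annual_energy_mwh(1000, 0.20)
    dispatchable = annual_energy_mwh(1000, 0.90)
    print(f"20% capacity factor: {weather_dependent:,.0f} MWh/year")
    print(f"90% capacity factor: {dispatchable:,.0f} MWh/year")
    print(f"ratio: {dispatchable / weather_dependent:.1f}x")
```

Under these assumptions the same nameplate capacity delivers 4.5 times more energy at a 90% capacity factor than at 20%, which is the distinction the paragraph is drawing. The sketch says nothing about cost per MWh.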

When measured by physical reality rather than marketing slogans, that $2 trillion bought roughly the energy output of $400 billion in conventional power. It displaced almost no fossil fuel consumption and arguably reinforced it, since idling backup plants waste fuel. Worse, dependence on Chinese supply chains for solar panels and rare‑earth minerals eroded national energy independence and inflated emissions through hidden mining and shipping costs.

If that same capital had been spent on modern nuclear or advanced natural‑gas infrastructure, the outcome would have been transformative. $2 trillion could have built about 285 GW of nuclear capacity (powering 250 million homes reliably for 70 years) or 1,650 GW of efficient gas plants (enough for 900 million homes for 30 years). Either path would have cut 70–80 gigatons of CO₂, reduced global electricity costs by half, and created genuine energy security.

Instead, the current “green” trajectory delivered rising utility bills, rolling blackouts, and greater reliance on geopolitical adversaries. Global power costs rose roughly 60%, contributing to deindustrialization in Europe, worldwide inflation, and a cumulative $37–40 trillion loss in global GDP—about half of one year of global economic output. That’s the price of mistaking ideology for engineering.

The lesson could not be clearer: physics determines prosperity. Dense, dispatchable energy such as nuclear or gas remains the backbone of civilization, and no amount of subsidies or messaging can legislate thermodynamics. The so‑called green transition did not decarbonize the planet—it impoverished it. The road to sustainability is not paved with solar subsidies but with unapologetic engineering and scientific honesty.

Marc Weiss retweeted

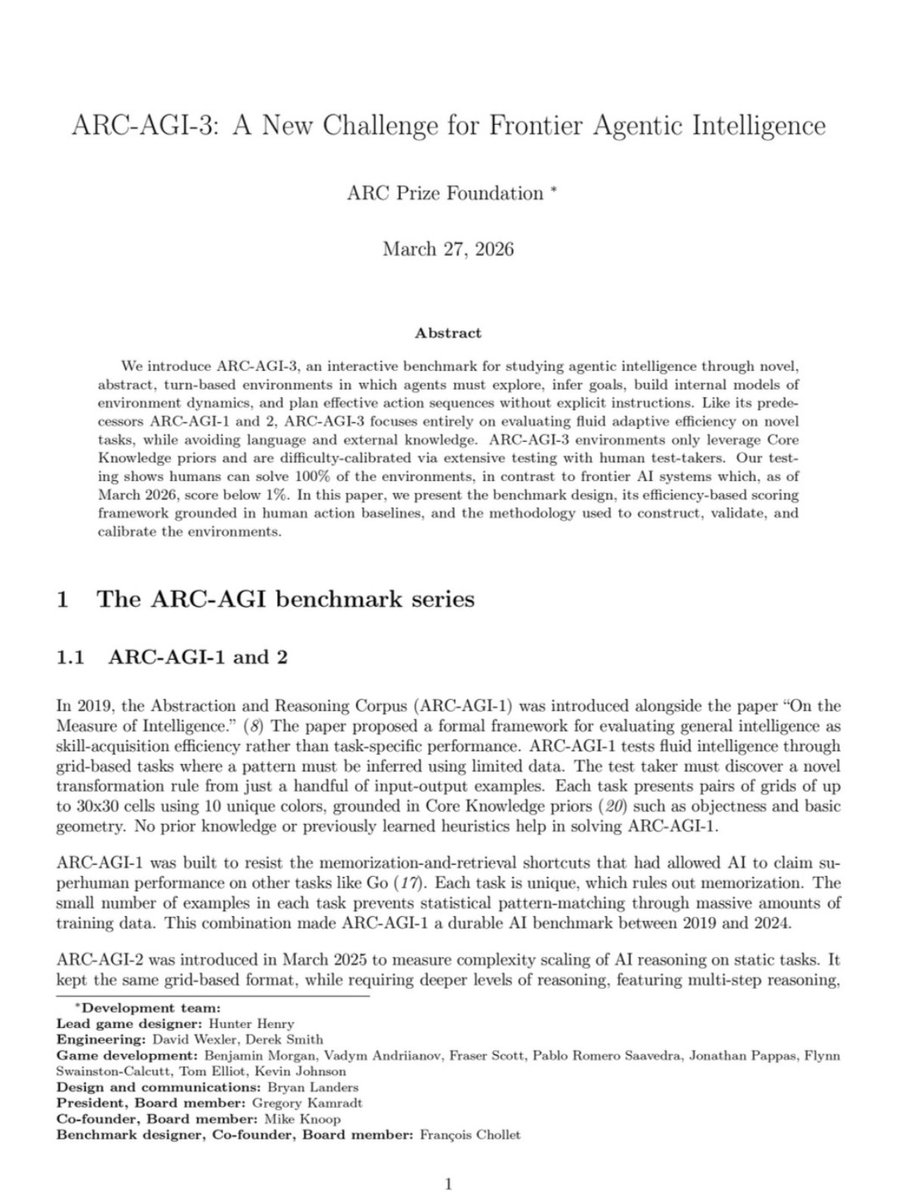

Humans: 100%

Gemini 3.1 Pro: 0.37%

GPT 5.4: 0.26%

Opus 4.6: 0.25%

Grok-4.20: 0.00%

François Chollet just released ARC-AGI-3 -- the hardest AI test ever created.

135 novel game environments. No instructions. No rules. No goals given.

Figure it out or fail.

Untrained humans solved every single one. Every frontier AI model scored below 1%.

Each environment was handcrafted by game designers. The AI gets dropped in and has to explore, discover what winning looks like, and adapt in real time.

The scoring punishes brute force. If a human needs 10 actions and the AI needs 100, the AI doesn't get 10%. It gets 1%. You can't throw more compute at this.
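The example above (10 human actions, 100 AI actions, a score of 1% rather than 10%) is consistent with squaring the action-efficiency ratio. A minimal sketch under that assumption; the squared form, the cap at 100%, and the name `efficiency_score` are inferred from the post's single example, not taken from the official ARC-AGI-3 scoring rules.

```python
def efficiency_score(human_actions: int, ai_actions: int) -> float:
    """Hypothetical per-environment score: the ratio of human to AI action
    counts, capped at 1 and then squared (an assumption, see lead-in)."""
    if human_actions <= 0 or ai_actions <= 0:
        raise ValueError("action counts must be positive")
    ratio = min(1.0, human_actions / ai_actions)
    return ratio ** 2


if __name__ == "__main__":
    # The post's example: a linear score would be 10%, the squared score is 1%.
    print(f"{efficiency_score(10, 100):.2%}")
```

Squaring makes inefficiency cost more than proportionally: taking 10 times as many actions as a human cuts the score by a factor of 100.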

For context: ARC-AGI-1 is basically solved. Gemini scores 98% on it. ARC-AGI-2 went from 3% to 77% in under a year. Labs spent millions training on earlier versions.

ARC-AGI-3 resets the entire scoreboard to near zero.

The benchmark launched live at Y Combinator with a fireside between Chollet and Sam Altman.

$2M in prizes on Kaggle. All winning solutions must be open-sourced.

Scaling alone will not close this gap. We are nowhere near AGI.

(Link in the comments)

Marc Weiss retweeted

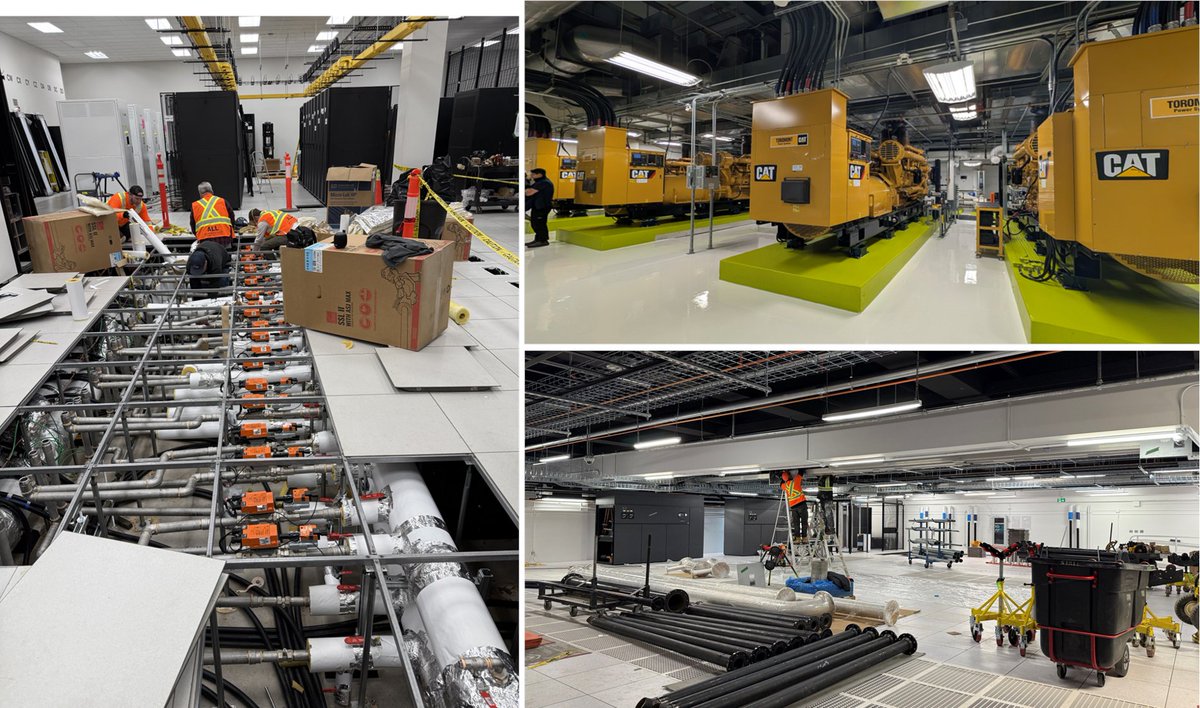

When we started @cerebras, we never imagined we’d be building out data centers.

In 2015, 100MW was a very big facility.

No longer.

Today, we have facilities underway in the US, Canada, Europe, and soon the Middle East.

Our CS-3 was one of the first water-cooled AI accelerators.

The HPC community pioneered water cooling, but in the AI world, Cerebras and Google were the first.

Here you can see the underfloor water cooling structure being installed.

Every orange device is a @belimo valve.

The rows of @CaterpillarInc generators are for power backup.

This facility is grid powered, but for high reliability, we use generators and batteries in front of them.

This is what a fast AI factory looks like while it’s under construction.

We’re still just getting started.

Marc Weiss retweeted

At GTC, we saw the crumbling of one of @nvidia’s most enduring moats.

It was a perception moat.

It was the perception that GPUs were all you need for AI.

Nvidia paid $20 billion for Groq, acknowledging that for fast inference, the GPU alone couldn’t get the job done.

Nvidia’s newly announced inference solution, which requires 5 distinct systems composed of CPUs, GPUs, and LPUs, makes it clear that the GPU isn’t enough for fast inference and puts a stake through the heart of the notion that all you need for AI is the GPU.

What happened?

👍 Agentic coding took off.

👍 The market for chat is limited by the number of internet users.

👍 But coding tools are different. They are billed by the token and developers are using more all the time.

👍 Coding agents need fast inference - speed, measured by tokens per second per user.

👍 When inference is fast, developers are more productive, they ship faster, which in turn, generates more revenue.

Nvidia said in the keynote: “Fast tokens are smart tokens and valuable tokens.”

I will add the corollary. Slow tokens are not so smart tokens, and not so valuable.

Cerebras is the fastest inference hardware in the world.

Marc Weiss retweeted

ELON MUSK: "We're starting off with an advanced technology fab here in Austin, and I'd like to thank @GregAbbott_TX and the state of Texas for the support.

So in the advanced technology fab, we will have all of the equipment necessary to make a chip of any kind, logic or memory, and we will also have all of the equipment necessary to make the masks. So in a single building, we can create a mask, make the chip, test the chip, make another mask, and have an incredibly fast recursive loop for improving the chip design.

To the best of my knowledge, this doesn't exist anywhere in the world. We're really going to push the limit of physics in compute, and we're going to try a bunch of wild and crazy things, which you can do if you've got that fast iteration loop. I can't emphasize enough the importance of being able to make it, to test it, and then change the design, do another one, and have that in a single building."

Marc Weiss retweeted

This feels like physical product design's ChatGPT moment.

This team just ran an autonomous agent against the entire chip design process: 219-word spec in, tape-out-ready silicon layout out, 12 hours later. The agent ran continuously against a simulator, found its own bugs, rewrote its own pipeline, and iterated to a working CPU!

Chip design costs well over $400M and takes up to 9 years. Not because writing hardware code is hard (it is actually brutally hard) but because a respin costs tens of millions. So teams spend more than half their total budget just verifying the design is correct before a single transistor is placed. That cost structure is why most chip designs never get built.

Entire product categories that were previously too low-volume to justify a tape-out are now buildable.

Towaki Takikawa / 瀧川永遠希@yongyuanxi

Design Conductor: an AI agent that can build a RISC-V CPU core from design specs. The agent is given access to a RISC-V ISA simulator and manuals... to enable an end-to-end verification-driven generation. The most important thing for design intelligence is a verifier 😎
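The idea of verification-driven generation can be illustrated with a toy loop that accepts a candidate design only if it matches a reference model on randomized tests. This is a minimal sketch, not Design Conductor's actual pipeline: the three-operation 32-bit ALU, the function names, and the hard-coded candidate list are all hypothetical stand-ins for a real ISA simulator and an agent that proposes designs.

```python
import random
from typing import Callable, Optional, Sequence, Tuple

MASK32 = 0xFFFFFFFF
OPS = ("add", "sub", "xor")
AluFn = Callable[[str, int, int], int]


def reference_alu(op: str, a: int, b: int) -> int:
    """Golden model with 32-bit wraparound, standing in for an ISA simulator."""
    if op == "add":
        return (a + b) & MASK32
    if op == "sub":
        return (a - b) & MASK32
    if op == "xor":
        return a ^ b
    raise ValueError(f"unknown op: {op}")


def buggy_alu(op: str, a: int, b: int) -> int:
    """Candidate with a deliberate bug: ADD does not wrap at 32 bits."""
    if op == "add":
        return a + b
    return reference_alu(op, a, b)


def verify(candidate: AluFn, trials: int = 1000, seed: int = 0) -> Optional[Tuple[str, int, int]]:
    """Return the first failing (op, a, b) test vector, or None if all pass."""
    rng = random.Random(seed)
    for _ in range(trials):
        op = rng.choice(OPS)
        a, b = rng.getrandbits(32), rng.getrandbits(32)
        if candidate(op, a, b) != reference_alu(op, a, b):
            return (op, a, b)
    return None


def first_verified(candidates: Sequence[AluFn]) -> Optional[int]:
    """Index of the first candidate that passes verification, else None."""
    for index, candidate in enumerate(candidates):
        if verify(candidate) is None:
            return index
    return None


if __name__ == "__main__":
    print("counterexample for buggy candidate:", verify(buggy_alu))
    print("first verified candidate index:", first_verified([buggy_alu, reference_alu]))
```

In a real flow the failing test vector would be fed back to the generator as a bug report, which is the role the quoted post assigns to the verifier.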

Marc Weiss retweeted

@Hammerspace_Inc’s AI Data Platform is a true game-changer for enterprises deploying AI in regulated industries.

Security and governance are key when it comes to implementing the best AI solution for your data estate. The Hammerspace AIDP is integrated with @SecuvyA’s Data Security Posture Management (DSPM) technology, providing customers with an end-to-end solution that prepares and delivers AI-ready data while ensuring continuous security monitoring, compliance, and governance throughout the entire data pipeline.

Mike Seashols, CEO of Secuvy, shares the key benefit of the integration between Secuvy and Hammerspace to create a trusted solution allowing AI-ready data to move anywhere without having to risk security or governance in the process.

Prioritize your data security and governance. Change the game with the Hammerspace AI Data Platform.

The Hammerspace AI Data Platform is available now.

okt.to/KjJnYs

#HammerspaceAIDataPlatform #Hammerspace #AIDP @nvidia

Marc Weiss retweeted

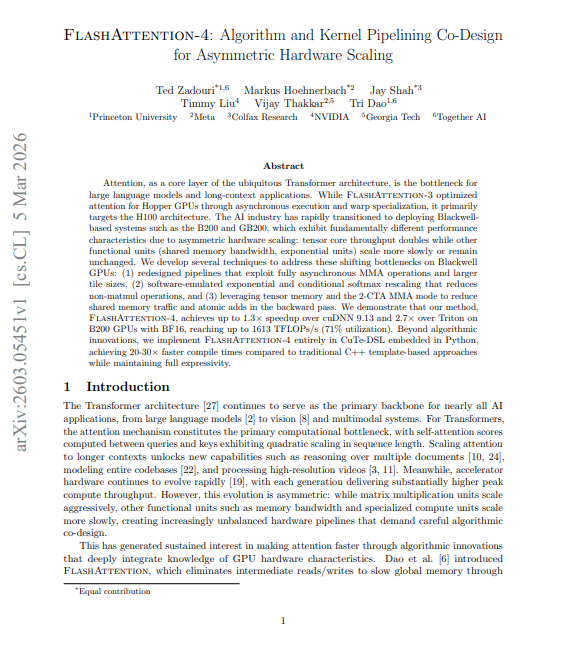

🚨 BREAKING: NVIDIA sold the most powerful AI chip ever built.

Then Princeton discovered the software running on it was wasting 60% of it.

Every inference job. Every training run. 60 cents on every dollar, gone.

> NVIDIA doubled the raw compute power of their Blackwell B200 GPUs compared to Hopper H100. Tensor core throughput went from 1 PFLOPS to 2.25 PFLOPS. The most powerful AI chip ever built.

> The problem: the rest of the chip didn't scale with it. Shared memory bandwidth stayed the same. The exponential unit stayed the same. So the bottleneck moved and all that extra compute sat idle while the slower parts of the chip became the new ceiling.

> Every existing attention implementation, including FlashAttention-3, was designed for Hopper. On Blackwell they either left massive performance on the table or couldn't run at all.

> Princeton, Meta, and Together AI spent months redesigning attention from scratch around the new bottleneck. New pipelines. Software emulated exponential functions. A completely different backward pass. The result: FlashAttention 4.

→ Up to 2.7× faster than Triton on B200 GPUs

→ Up to 1.3× faster than NVIDIA's own cuDNN library

→ Reaches 1,613 TFLOPs/s, 71% of theoretical maximum

→ Compile time dropped from 55 seconds to 2.5 seconds (22× faster)

→ Written entirely in Python, no C++ template expertise required
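The quoted figures can be cross-checked with simple arithmetic. A minimal sketch, assuming the 2.25 PFLOPS tensor core figure quoted earlier in the post is the "theoretical maximum" behind the 71% claim; the constant and function names are illustrative.

```python
PEAK_TFLOPS = 2250.0      # 2.25 PFLOPS, as quoted in the post
ACHIEVED_TFLOPS = 1613.0  # FlashAttention 4 throughput, as quoted
COMPILE_BEFORE_S = 55.0
COMPILE_AFTER_S = 2.5


def utilization(achieved: float, peak: float) -> float:
    """Fraction of peak throughput actually achieved."""
    return achieved / peak


def speedup(before: float, after: float) -> float:
    """How many times faster 'after' is than 'before'."""
    return before / after


if __name__ == "__main__":
    print(f"utilization: {utilization(ACHIEVED_TFLOPS, PEAK_TFLOPS):.1%}")  # 71.7%
    print(f"compile speedup: {speedup(COMPILE_BEFORE_S, COMPILE_AFTER_S):.0f}x")  # 22x
```

Both results agree with the post: 1,613 out of 2,250 TFLOPs/s is roughly 71%, and 55 seconds down to 2.5 seconds is a 22× reduction.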

The scariest part: this wasn't a hardware problem. The chip was delivering exactly what NVIDIA promised. The software just wasn't designed for it.

Every AI lab running B200s before this paper was paying for compute they couldn't use.

Marc Weiss retweeted

The idea that this admin is "fumbling" (Politico) this energy moment is hilarious. It's thanks to Trump's energy independence/dominance agenda that we could confront Iran, and why his team now has levers to pull. The real risk to energy: Biden

wsj.com/opinion/trumps… via @WSJopinion

Marc Weiss retweeted

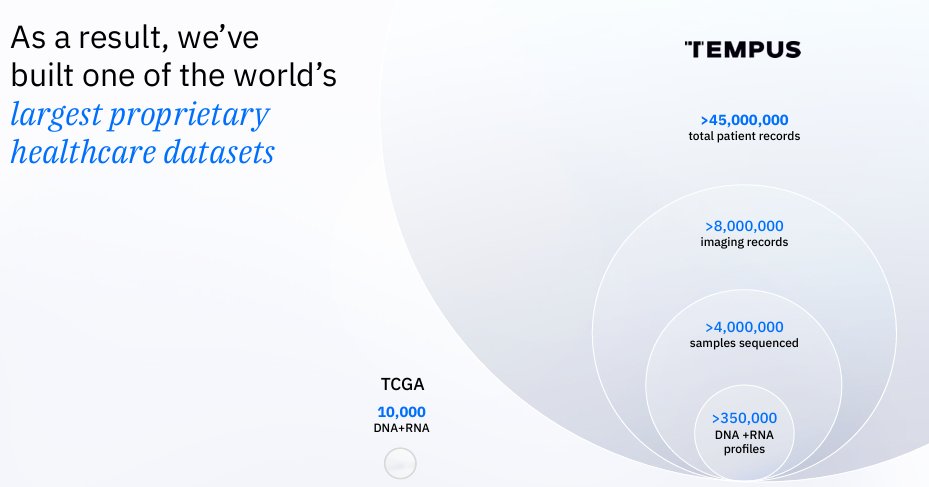

$TEM 🔥Tempus AI, March 3 Morgan Stanley Recap: The Thesis Is Getting Stronger

The Morgan Stanley conference added a great deal to the Tempus story for investors trying to understand what this company is becoming. Eric Lefkofsky gave the clearest high-level framing yet: “Today we are absolutely an AI company.” What matters is how he defined that statement. He said Tempus does not approach the business by saying, “Oh, we’re gonna build models, and that’s our core business.” The company’s real objective is to “garner access to proprietary data,” use that data “whether it’s enhanced by our own models or other people’s models,” then “generate insights and deploy those insights back into the clinic.”

Lefkofsky also explained why the market still struggles to place Tempus. He said the company operates in “both of these worlds,” as an NGS business generating proprietary molecular data and as a “data AI company” that “takes that data and generates insights, licenses the data, licenses the models.” He added that diagnostic investors often do not get the data and AI side, while AI investors are uneasy with diagnostics. That split matters because it helps explain why Tempus can still be misunderstood even as the underlying asset base keeps getting stronger.

The data moat came through very clearly. From the beginning, Tempus did not want to sequence patients and stop there. Lefkofsky explained that the company went to providers and effectively said they would sequence patients, but they also needed the clinical data, because the real prize was learning whether insights generated from the sequencing actually worked in practice. He described the essential feedback loop in direct and practical terms: if Tempus found a mutation and recommended a drug, did the patient actually go on that drug, and how did the patient respond over time. That is the foundation of the dataset: rich molecular data connected to real treatment and response histories.

He then described what had to be built on top of that dataset to make it commercially useful. Once Tempus had amassed the data, handing raw files to customers was never going to be enough. The company had to build tools that let pharma interrogate the data, construct cohorts, refine those cohorts, and extract insight. Investors should pay attention to that because it means Tempus has spent years building software and workflow infrastructure around the data asset itself. That kind of work deepens the moat and raises switching costs.

He backed that up with one of the more revealing comments in the transcript. Tempus has “700 software engineers and product folks,” and it has made cloud investments “five or 10 times” larger than others in the space. Those are meaningful clues about what kind of company Tempus has been building all along. It has been investing like a technology platform that expects software, infrastructure, data operations, and AI deployment to sit at the heart of the business.

The Merck discussion was especially strong. Lefkofsky said, “you just don’t have people like AstraZeneca and GSK and BMS and Merck and others signing these $100 million plus deals unless the data is both incredibly useful and they can generate real insights from it.” That line deserves investor attention because it frames these pharma relationships as hard validation of utility. Big biopharma is paying for data and insight that can shape research, development strategy, trial design, and commercial decision-making.

He also described how those relationships deepen over time. “Merck’s a great example,” he said. These deals often begin relatively small, then expand as customers discover more use cases and eventually realize they want broad access to the data and tools. In Merck’s case, “it’s a five-year agreement, but four years are committed.” He compared the commercial structure to AWS, GCP, or Azure, where customers can buy a little or a lot and what changes is mainly access and pricing. That sounds like a relationship model with room for meaningful expansion as usage and dependence grow.

Another telling section came when Lefkofsky discussed competition. His comments suggest Tempus is playing in a lane where the customer decision is about strategic value rather than commodity pricing. The implication was that pharma customers come to Tempus when they want a dataset and insight layer capable of changing oncology programs in a meaningful way. That matters because strategic data assets with workflow relevance and trust embedded into them tend to command better economics and stronger customer stickiness over time.

The AI model section was the most exciting part of the transcript for me. Lefkofsky said large frontier model companies are increasingly coming to Tempus asking, “What data do you have?” He said their interest in this kind of data “on a scale of one to 10 was a one” and “it’s now like a five.” Then came the key line: “every one of these companies that we’re engaged with… is asking the exact same question. They want longitudinal patient histories at scale.” That is a very important signal. It suggests the next wave of healthcare AI is running directly into the scarcity of proprietary longitudinal real-world data.

He pushed the point even further with one of the best quotes in the session. These models, he said, are already extremely good at predicting the next likely word. With enough proprietary patient data, the opportunity is to “predict the next likely drug or the next likely therapy that would work for a patient.” That is the statement that should make investors pause. He is pointing toward a future in which model capability becomes clinically actionable in a much more direct way. The promise here is not abstraction. The promise is better therapeutic prediction and better individualized care.

The moat discussion around the data was equally strong. Lefkofsky said the dataset is hard to replicate because you have to integrate with thousands of hospitals, get through legal and IT bottlenecks, gather multiple time points, collect structured and unstructured data, and connect all of that to Tempus’s own proprietary sequencing files. He said the dataset is “approaching 500 petabytes,” and he was blunt about the competitive history: efforts by others to bolt clinical and molecular datasets together have not gone well. Those remarks help explain why Tempus may occupy a uniquely strategic position just as model builders go looking for real-world healthcare data at scale.

Lefkofsky gave a lot of clarity on consumer AI and Tempus’s differentiation. He said, “On the consumer side, I suspect those models will be delivered by the big consumer companies. Like, we have no aspiration to be that company.” He explicitly named “Apple and Google and OpenAI and Anthropic or whoever.” That is useful because it removes a category error some investors may still be making. Tempus is not trying to win a mass-market consumer health assistant battle. Lefkofsky is placing the company on the provider and pharma side, where the moat comes from trust, data custody, healthcare system integration, and existing relationships.

He sharpened that point further by explaining why the provider and pharma side belongs to companies like Tempus. In order to connect into the U.S. healthcare system, you have to be a covered entity. It is complicated. It involves deep logistical and regulatory complexity. He then made what may be one of the most important strategic comments in the whole transcript: “I just don’t see a world anywhere in the near term where the biggest pharmaceutical companies are uploading their critical clinical trial data to OpenAI or Google or whoever. I just think it’s too invaluable.” For investors, that is a very crisp articulation of where he thinks defensibility sits in healthcare AI.

Lefkofsky expanded on the new foundation models, saying that Tempus is building 2 models, one with AstraZeneca and Pathos AI and another pan-disease model on its own. He said the company has established 2 compute clusters, one with about “1,000 H200s” and another roughly that size using GB200s. The purpose is to generate “multimodal insights that you just can’t see unless you have enormous amounts of data.”

His example in non-small cell lung cancer was one of the most compelling illustrations of where this can go. Today, a patient may be identified as EGFR positive and placed on an EGFR inhibitor according to guideline therapy. Lefkofsky described the opportunity to go much deeper by distinguishing which patients may respond exceptionally well, which may fall into the middle, and which may progress quickly despite fitting the broad biomarker category. That opens the door to a much more refined response-prediction layer on top of standard biomarker-guided care.

He also laid out a useful framework for where long-term value may accrue. The data remains the scarce asset for training models that can change physician and patient behavior. Over time, the applications and algorithmic outputs built on top of that data can become even more powerful economically. This is a big reason the story is so interesting for investors. Tempus already has a data-generation engine, a data-licensing and insight business, and a pathway toward increasingly valuable AI-enabled applications.

The other major addition worth emphasizing is Lefkofsky’s comment on market psychology. He said the broad AI euphoria from 7 or 8 months earlier had dissipated considerably. Then he made the point that matters: if someone asked whether AI in healthcare is overhyped in the short term, his answer would be “no.” He went even further and said “the opportunity of AI in the near term is probably underappreciated.” He then added, “It’s way underappreciated in the long term, but it’s probably also now underappreciated in the near term.” That is a very interesting shift in tone. He is saying the market mood has cooled just as the operating proof points are getting closer.

The line that gives that argument real weight is his prediction that “in 2026, you will start to see very tangible evidence that AI is going to impact healthcare at incredible scale,” both from companies like Tempus on the provider and pharma side and from OpenAI, Anthropic, Google, Apple, and others on the consumer side. He is clearly telling investors that the visible evidence should begin to emerge this year. Then he connects that to economics by noting that Tempus has projected 25% growth for the next 3 years, while saying the “data long-term prediction and the AI long prediction is much higher than 25%.” Near-term evidence, long-duration upside, and an increasingly valuable data/AI segment is a powerful setup if execution follows.

My read is that this transcript did more than reinforce the Tempus thesis. It sharpened it. What came through here is a company that may be sitting on one of the most strategically valuable proprietary data assets in public-market healthcare at exactly the moment frontier model development is running into the need for proprietary longitudinal patient histories at scale. The Merck expansion matters because it shows large pharma wants deeper access once it understands the utility. The frontier-model angle matters because Lefkofsky is saying the world’s largest model builders are increasingly coming to companies like Tempus asking what data they have, and that every one of the major players they are engaged with wants longitudinal patient histories at scale. That is a big statement. It suggests Tempus may control part of the training substrate serious healthcare AI increasingly needs. And the line about predicting “the next likely drug” matters most of all because it points toward a future where Tempus is helping drive actual therapeutic decision-making in medicine.

What excites me is that Lefkofsky also made clear they are not trying to become some broad consumer health AI app in the mold of OpenAI, Anthropic, Google, or Apple. He effectively said that lane belongs to the big consumer platforms. Tempus is going after the part of healthcare AI where the real defensibility lives: provider workflows, pharma relationships, data rights, covered-entity trust, and infrastructure that took years to build. If that vision keeps executing, Tempus has a path to becoming far more than a diagnostics company with an AI story attached. It can become a core intelligence layer for precision medicine and biopharma, with the business mix moving toward higher-value data, models, and algorithmic applications. To me, that means the market may still be valuing Tempus below the scale of the platform it is actually becoming.

Marc Weiss retweeted

CUDA is both Nvidia’s biggest strategic advantage, and a massive constraint.

MatX CEO @reinerpope says Jensen could scrap CUDA’s backward-compatibility guarantee, but likely won’t because it would erode Nvidia’s ecosystem lock-in:

“Their promise is you can take a CUDA program written 10 years ago and run it on the next-gen Nvidia GPU.”

“That means the next-gen GPU has to look a lot like the GPU from 10 years ago.”

“The CUDA lock-in is very valuable in the mid and tail of the market, where people are sensitive to software costs. But at the head of the market in the frontier labs, software isn’t the main cost. Hardware is.”

Marc Weiss retweeted

Germany shut down its last nuclear power plants in 2023. That decision removed 4 gigawatts of reliable, low-cost baseload electricity.

The plants were operational, and fully paid for.

Worse still, there was no replacement capacity ready.

Germany turned to intermittent wind and solar backed by gas, imports and emergency coal restarts.

The obvious consequences followed.

Electricity prices surged, grid reliability weakened, and fossil fuel use actually increased.

Chancellor Friedrich Merz has now just admitted the shutdown was a strategic mistake, calling Germany's energy transition the most expensive in the world.

A reckless bending to extreme green ideology traded reliability and prosperity for optics. Working nuclear plants were destroyed. And for what?
