113 posts

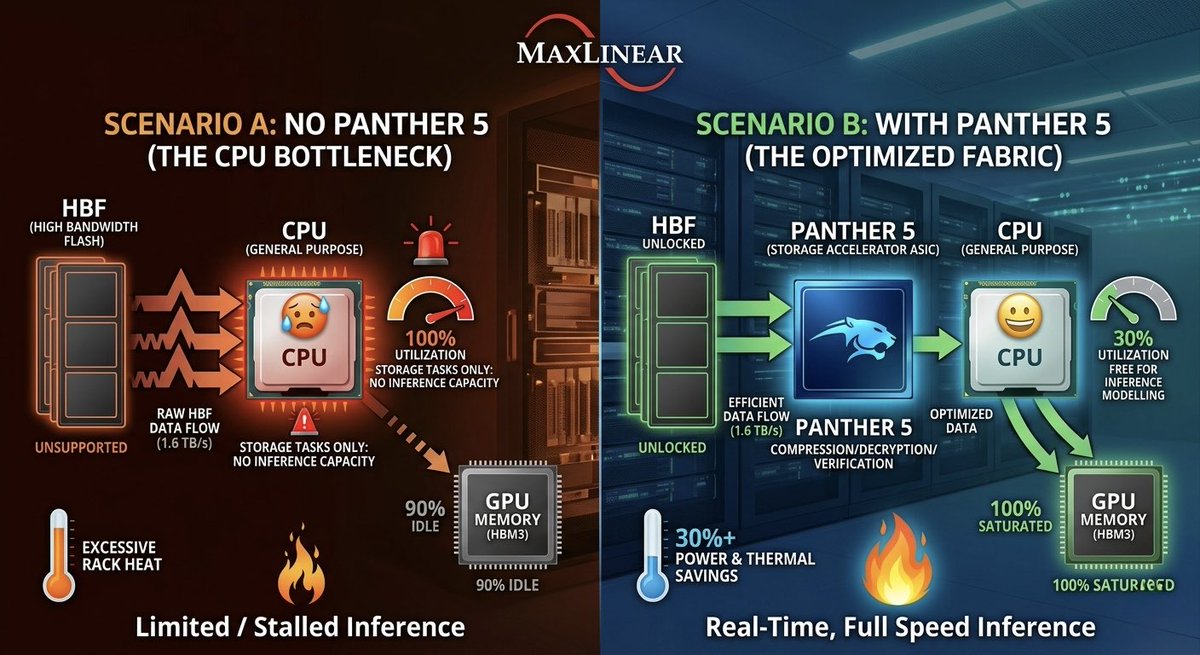

"The Next Bottleneck After HBM Is HBF": A Computing Pioneer's Prediction

"I have been consistently paying close attention to High Bandwidth Flash (HBF). I'm also collaborating with semiconductor companies on this. HBF is highly likely to stand at the center of the next bottleneck: a surge in demand."

David Patterson, professor at UC Berkeley, Turing Award laureate, and widely recognized as the architect of RISC (Reduced Instruction Set Computing, an approach that simplifies instructions to improve processing efficiency), made these remarks on April 30 (local time). He was speaking with reporters in San Francisco immediately after delivering a keynote at the Dreamy Next event.

Asked what comes after HBM (High Bandwidth Memory), which is currently a supply-constrained bottleneck, Professor Patterson answered that HBF will emerge as the next focus. "Although a number of technical challenges still remain, the HBF being developed by companies such as SK hynix and SanDisk is a meaningful alternative in that it can deliver large capacity with low power consumption," he said, adding, "Going forward, how efficiently data can be stored and delivered will become the critical variable."

This past March, SK hynix announced that it had joined hands with U.S. flash memory company SanDisk to drive the global standardization of HBF. Unlike HBM, which stacks DRAM, HBF is built by stacking NAND flash, a non-volatile memory. Their roles also differ: HBM serves as a fast computation aid, while HBF focuses on storing, at high capacity, the vast amounts of data that AI processes.

HBF is drawing attention as the AI inference market grows. The AI market is broadly divided into training (learning) and inference. Training is the process of feeding massive amounts of data to an AI model to teach it. Inference is the stage in which the trained model derives results from new inputs.

In inference, the ability to continuously store and retrieve vast amounts of intermediate data is crucial. This data includes prior conversations, judgment outcomes, and task context. It matters because AI carries out reasoning by remembering context and building on it. The problem is that all of this data is difficult to fit into HBM. Because HBM is optimized for data that is used immediately, its capacity is inherently limited. Moreover, given its high price, handling the enormous amount of context data generated during inference with HBM alone would impose significant costs. As a result, HBM and HBF are both needed at once, in a kind of division of labor.

Experts in Korea also expect HBF to grow in importance. At an HBF research and technology development strategy briefing held this past February, Kim Jung-ho, professor in the School of Electrical and Electronic Engineering at KAIST, stated, "If the central processing unit (CPU) was the core in the PC era and low-power technology was the core in the smartphone era, memory will be the core of the AI era." He added, "What determines speed is HBM, and what determines capacity is HBF." He further predicted, "From 2038 onward, demand for HBF will surpass that of HBM."

$LPK LPKF Laser broke out of the symmetrical wedge. The wedge was formed yesterday when price established a higher low. The pattern's price target is roughly 21€. It is becoming unlikely that we see 12.x€ at this point unless earnings are really bad. If we close the week above 17€, I see a move toward 28€ as realistic.

Interview with Nebius Co-Founder Roman Chernin

Please like & share this video so that all $NBIS investors on X will see it! :)

If you prefer watching on YouTube: youtu.be/ZSo4tWCw4NU

Timestamps:
00:00 - Why AI Infrastructure Is So Hard to Understand
00:24 - Market Fragmentation and What Actually Differentiates Providers
01:30 - Consolidation, Segmentation, and the Future AI Cloud Landscape
02:56 - What Analysts and VCs Still Get Wrong About AI Infrastructure
05:34 - Nebius Cloud: Product Readiness and Customer Proof Points
07:42 - Why Inference Workloads Are Exploding
09:11 - Training vs. Inference: How AI Models Actually Reach Production
10:10 - Why Inference Market Share May Concentrate Around a Few Winners
12:36 - Customer Use Cases: Coding, Enterprise AI, and Real-World Adoption
14:01 - Why Integrated Training and Inference Matter Strategically
16:01 - Building Scalable AI Infrastructure With High Utilization
18:24 - Token Factory: Inference as a Managed Service
20:24 - Revolut Case Study: AI-Driven Product Enhancements
22:56 - Token Factory Performance Optimization and Competitive Advantage
25:07 - Scale, Capacity, and Efficiency as Growth Drivers
28:36 - Why Inference Capacity Could Become the Next Major Bottleneck
30:10 - How Nebius Benchmarks Performance Across Providers
33:14 - The Future Size and Shape of the Inference Market
36:38 - Value-Based Pricing: Moving Beyond Cost per GPU Hour
40:55 - How Nebius Wins Deals: Quality, Performance, and Customer Experience
44:53 - Autonomous AI Platforms and the Rise of Agent-Based Models
47:28 - Tavily, Agentic Applications, and the Next Layer of the AI Stack
50:45 - Strategic Trade-Offs: Scaling, Product Roadmap, and Customer Relevance
55:40 - Final Thoughts: Adapting to the Next Shift in AI Workloads

@nebiusai @romanchernin

$LPK Alright, immediate big update: you can now hover over (most of) the arrows and click on them to see the source URL (usually Korean reporting) for each connection.

Been digging through European photonics for the last few weeks. Most of you already know $AIXA and $SOI. Both still have room. I'm getting a lot of questions about $LPK.DE lately, so I want to address it directly.

LPKF is the laser-process pioneer for glass substrates. Intel, Samsung, and TSMC are all piloting glass as the next-generation AI packaging material. Glass handles heat, density, and integrated optics better than anything else on the roadmap.

The bottleneck is simple: glass cracks when you drill it. LPKF's LIDE process is one of a very small number of technologies that can structure glass at production scale without cracking. No glass substrate ecosystem gets built without a tool like theirs in the line. Management put it this way on the recent call: the question is no longer whether the glass breakthrough in advanced packaging happens, but when the ramp-up begins.

There are two more names attached to this supply chain that I am still researching and not ready to publish on yet. One is the integrated metrology supplier whose tools sit inside $AIXA's reactors. It trades like a sleepy industrial despite its direct AI exposure. The other is the micro-cap making the actual glass wafers. It is up 200% already and still small enough that a real revenue ramp would break it wide open.

Besi trades at 77x EBITDA on the advanced packaging theme. These two trade at a fraction of that, one layer upstream. H/T to @AlmaCap114204, who found this one early. More to come.