Max Kaplan

@maxekaplan

Recovering, exited founder and public company exec | fmr @solstrategies @orangefincrypto (acq) @krakenfx

🚨 OpenClaw Chain Vulnerabilities Expose 245,000 Public AI Agent Servers to Attack Source: cybersecuritynews.com/openclaw-chain… A chain of four critical vulnerabilities discovered in OpenClaw, one of the fastest-growing open-source platforms for autonomous AI agents, has left an estimated 245,000 publicly accessible server instances exposed to remote exploitation, credential theft, and persistent backdoor installation. Shodan and ZoomEye scans as of May 2026 reveal approximately 65,000 and 180,000 publicly accessible OpenClaw instances, respectively, totaling roughly 245,000 exposed servers. What makes this chain especially dangerous is that the attacker weaponizes the AI agent’s own privileges. #cybersecuritynews

🚨 Critical 18-Year-Old NGINX Vulnerability Enables Remote Code Execution Attacks Source: cybersecuritynews.com/18-year-old-ng… A critical heap buffer overflow vulnerability, lurking in NGINX's source code since 2008, has been publicly disclosed along with a working proof-of-concept exploit that can enable unauthenticated remote code execution (RCE) against one of the most widely used web servers in the world. Assigned a CVSS score of 9.2, CVE-2026-42945 resides in NGINX's ngx_http_rewrite_module, the engine that powers URL rewriting and variable assignment in virtually every modern NGINX deployment. #cybersecuritynews

🚨 Google Project Zero just published a Pixel 10 zero-click to root exploit chain. Two vulnerabilities and less than a day of work to weaponize the second one.

Chain:
- Stage 1: same Dolby UDC zero-click (CVE-2025-54957) used against the Pixel 9. Patched in January 2026. Only minor offset updates and a tweak around RET PAC needed to port to Pixel 10
- Stage 2: a brand new local privilege escalation in the VPU driver for the Chips&Media Wave677DV on the Tensor G5

Result: arbitrary kernel read/write in 5 lines of code. Full exploit written in under a day.

🥇 Congrats @solstrategies — STKESOL takes #1 on the GDI with a 4.47. A new standard for measuring stake pool contribution to Solana's network security & decentralisation. SOL Strategies delegates algorithmically across dozens of validators — and the geographic spread shows. gdindex.app/pools/StKeDUdS… cc @laine_sa_

@Niyi_0550 If the token is HL's differentiator, they will never get to Binance levels. Product is 100% the most important thing

The Google Threat Intelligence Group has detected the first known instance of a threat actor using an AI-developed zero-day exploit in the wild. While the attackers planned a wide-scale strike, our proactive counter-discovery may have prevented that from happening. This finding is part of our new report on AI-powered threats.

"AI code is crap." The shit your human engineers get up to:

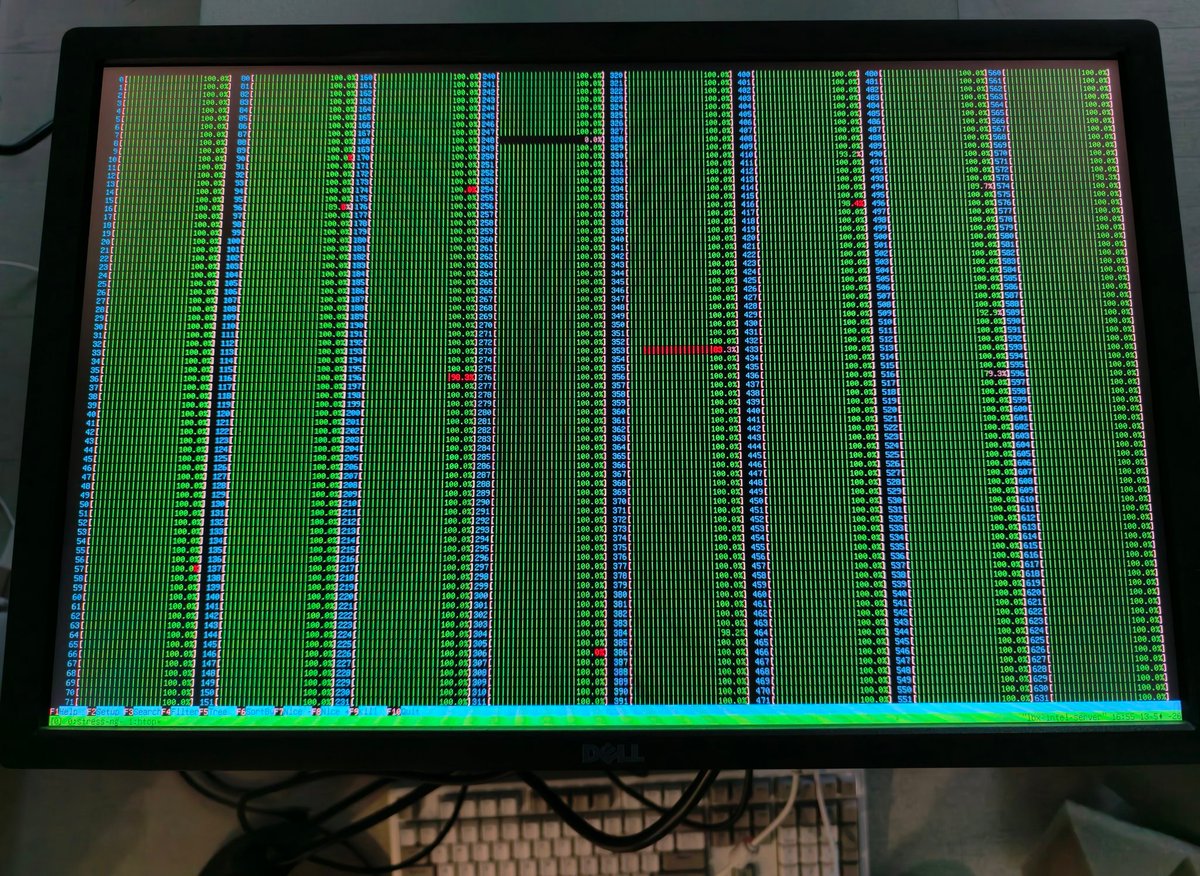

Yesterday @coinbase experienced a multi-hour service disruption affecting trading, exchange access, and balance updates. Here's our initial read from Coinbase engineering on what happened, how we recovered, and what we're addressing.

At approximately 23:50 UTC on 2026-05-07, our monitoring detected cascading quote failures from internal services that triggered multiple Sev1 incidents that engineering immediately began investigating. Customer-facing impacts included spot trading, Prime, International and derivative exchanges.

Root cause: a thermal event (cooling system failure) inside a subset of racks within a single building in AWS us-east-1. We run a primary replica of our exchange infrastructure in a single zone, consistent with industry standards to reduce latency. To prepare for failures like this, we maintain a distributed standby, but during this incident, failures in the primary zone that were designed to be isolated were not, extending the duration of our outage.

The failure cascaded down two paths:
1. Multiple hardware components beneath our exchange’s matching engine failed, requiring recovery and failover
2. Distributed Kafka clusters that manage messaging across Coinbase systems failed to remain available, also requiring partition failovers to new hardware brokers with many TiBs of data

After isolating the incident: automated tooling drained ~10 Kubernetes clusters worth of related workloads out of the affected zone to stabilize internal services. Most services were back to normal within ~30 minutes of diagnosis. The two things we couldn't automatically drain: the exchange (dedicated hardware and storage) and Kafka (managed service that was designed to be resilient to this, with unique problems).

The exchange matching engine is the core system responsible for processing orders and maintaining order books. It is a distributed cluster and requires quorum to safely elect a leader and continue processing trading activity.
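
A minimal sketch of the quorum requirement described above, assuming a simple strict-majority rule. The post does not say which consensus protocol or cluster size the matching engine uses, so the function name `has_quorum` and the 5-node example are hypothetical.

```python
def has_quorum(healthy_nodes: int, cluster_size: int) -> bool:
    """Return True when a strict majority of the cluster is healthy.

    Assumption: quorum means more than half of all configured nodes.
    Real systems may use different rules (weighted votes, witnesses, etc.).
    """
    if cluster_size <= 0:
        raise ValueError("cluster_size must be positive")
    if not 0 <= healthy_nodes <= cluster_size:
        raise ValueError("healthy_nodes must be between 0 and cluster_size")
    # Strict majority: e.g. 3 of 5, or 3 of 4 (2 of 4 is a tie, not a majority).
    return healthy_nodes > cluster_size // 2


if __name__ == "__main__":
    # Hypothetical 5-node cluster: losing 2 nodes is survivable, losing 3 is not.
    print(has_quorum(3, 5))  # True
    print(has_quorum(2, 5))  # False
```

Under this rule, a cluster that loses half or more of its nodes cannot safely elect a leader, which matches the "only a subset of nodes healthy" failure the post goes on to describe.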
During the incident, infrastructure-level constraints in the affected datacenter left only a subset of nodes healthy, preventing the cluster from reaching quorum. As a result, trading across Retail, Advanced, and Institutional exchanges was blocked. Recovery required our oncall and engineering teams to execute our disaster recovery plan, restore quorum safely, and validate system health under constrained infrastructure conditions. The team built, tested, deployed, and validated the fix while continuing to manage the broader incident.

Kafka recovery was a much larger scale operation. Our primary managed Kafka partitions process many terabytes of data daily and are designed with resiliency guarantees for uninterrupted operation during a datacenter failure just like this. In this case, those guarantees failed and required manual recovery. We again relied on disaster recovery procedures to recover stuck partitions onto new hardware (brokers) that enabled us to safely bring cross-service messaging back online across Coinbase. During the lag, customers saw delayed balance streams which resolved automatically once replication caught up. No data lost.

Once the engine came back up, we followed our standard runbooks and re-opened markets carefully: all products to cancel-only mode first, audited product states, then moved all markets to auction mode, before restoring trading on Coinbase Exchange.

What went right: the team. Incident response across the company came together within minutes, followed well-rehearsed playbooks and used secure automation tooling to recover all services. We have a strong, senior team at Coinbase that worked through rare failure modes to recover all services.

To our customers: losing access to your account, even temporarily, is unacceptable. We know that. We're sorry, and we’ll publish a full root cause analysis in the coming weeks 🙏
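
The staged re-opening described above (cancel-only, audit, auction, then full trading) can be sketched as a small state machine. This is an illustration only: the `MarketMode` names, the `HALTED` starting state, and the `audit_passed` flag are assumptions, not Coinbase's actual tooling.

```python
from enum import Enum


class MarketMode(Enum):
    HALTED = "halted"            # hypothetical starting state during the outage
    CANCEL_ONLY = "cancel_only"  # customers may cancel resting orders only
    AUCTION = "auction"          # orders collect before continuous trading resumes
    TRADING = "trading"          # normal continuous trading


# Order of modes taken from the post; HALTED is an assumed starting point.
REOPEN_SEQUENCE = [
    MarketMode.HALTED,
    MarketMode.CANCEL_ONLY,
    MarketMode.AUCTION,
    MarketMode.TRADING,
]


def next_mode(current: MarketMode, audit_passed: bool = False) -> MarketMode:
    """Advance one step in the re-open sequence.

    A market stays in cancel-only until the product-state audit has passed,
    mirroring the "audited product states" step in the post.
    """
    if current is MarketMode.TRADING:
        return current  # already fully open
    if current is MarketMode.CANCEL_ONLY and not audit_passed:
        return current  # hold here until the audit completes
    return REOPEN_SEQUENCE[REOPEN_SEQUENCE.index(current) + 1]


if __name__ == "__main__":
    mode = MarketMode.HALTED
    mode = next_mode(mode)                     # -> CANCEL_ONLY
    mode = next_mode(mode)                     # audit not passed, stays CANCEL_ONLY
    mode = next_mode(mode, audit_passed=True)  # -> AUCTION
    mode = next_mode(mode)                     # -> TRADING
    print(mode.value)
```

Gating the cancel-only step on an explicit audit flag keeps the sequence from skipping ahead if the function is called repeatedly by automation.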