Maxim Bobrin

14 posts

Maxim Bobrin

@maxsbob21

Interests: Optimal Transport, RL, Generative Models BSc & MSc in Mathematics;

We scored 36.08% on ARC-AGI-3 in one day using the Agentica SDK.

ARC-AGI-3 scores for GPT-5.4, Gemini 3.1 Pro and Opus 4.6 Gemini 3.1 Pro: 0.37% GPT-5.4: 0.26% Opus 4.6: 0.25% Grok 4.2: 0%

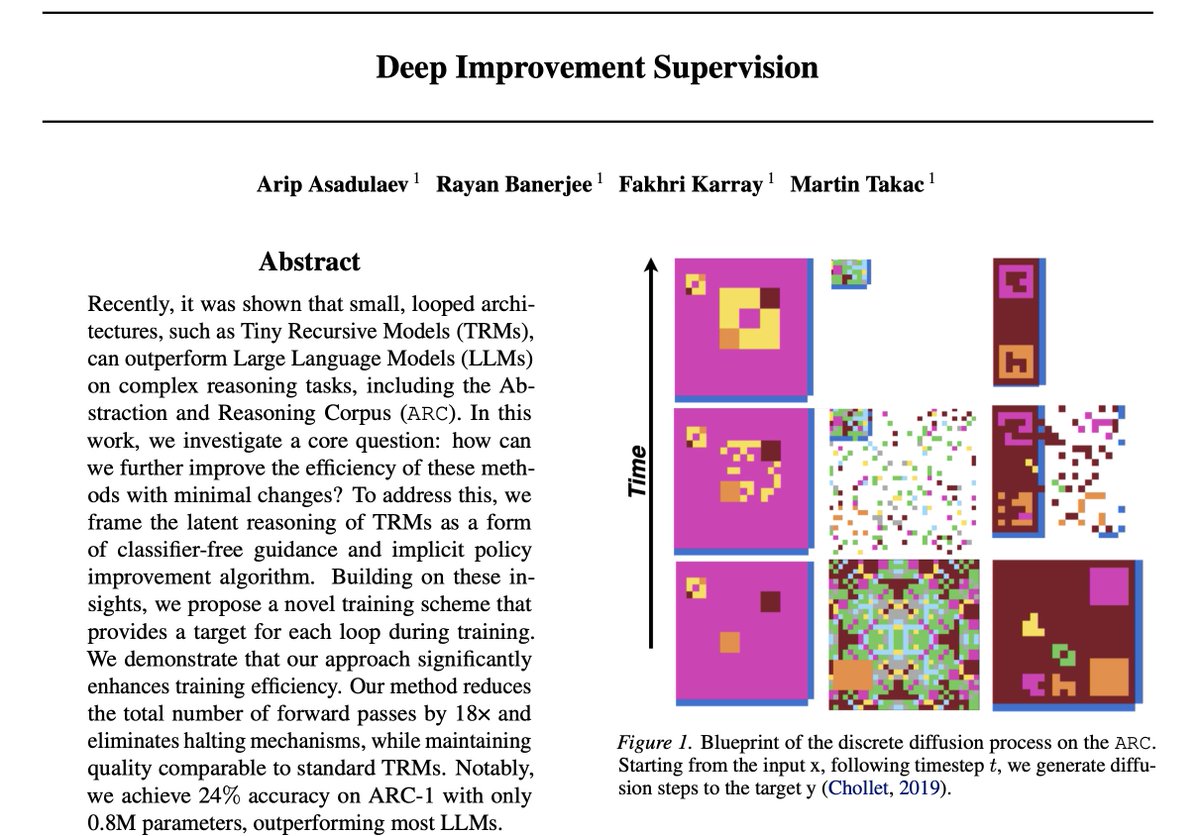

The masculine urge to try to hack a new solution to ARC-AGI benchmarks

This indie dev is making a third-person parkour runner game. If we can’t have Mirror’s Edge 2, then indies will. - Play as Kaia - Use parkour abilities to traverse the world - Move in any way you see fit It's called Tachyon Flow. Would you play this?

20 hours -- 99.93% PPO is all you need. 4 GPUs with 8k envs each. (Slightly better parameters than the current default in ProtoMotions, will update after verifying results are stable)