Maybee

229 posts

@maybeeai

AI Agents × Prediction Markets

Turning uncertainty into shared alpha with real-time AI signals

Testnet LIVE | Claim $HONEY → https://t.co/7dIj7TtZ9a

TG: https://t.co/dWWGEHdIh0

Ex-Point72 Proprietary Research Head Kirk McKeown on building edge, alpha decay, & why everything that happened on Wall Street is about to happen on Main Street.

Kirk McKeown (8.5 years @ Point72 under Steve Cohen | Built primary research at Glenview under Larry Robbins | Now founder of Carbon Arc @CarbonArcAI)

"Alpha rewards those who value assets in a cold way. You want to get it right, not be right."

We cover:

- How alpha creation differs across multi-manager vs. concentrated shops
- The 3 vectors every middle office function must move to justify its existence
- Why he worked 6-hour Sundays from 2006-2020, and the math behind it
- The TSMC call that signaled semiconductor cancellations before anyone else knew
- What the quant revolution on Wall Street tells us about the AI economy today
- His framework: 4 market structures, 9 business models, & why they have rules
- The MIT beer game & why every business problem is really an inventory problem
- His hot take: a top hedge fund launches an enterprise AI lab in 2026

Highlights:

- 00:00 Intro
- 04:47 Tutor vs Glenview vs Point72: how edge differs
- 12:29 How to build “lift” for PMs: at-bats, hit-rate, sizing
- 18:44 Building research edge: outwork, read, fieldwork
- 27:16 Personal moat in 2026: analogs, history, decision trees
- 40:08 “Main Street becomes Wall Street”: what that actually means
- 44:30 Carbon Arc thesis: “decimalization” of data market structure
- 46:43 Why the edge migrates to data plus domain context
- 51:00 How to win in commoditized research: sample size beats anecdotes
- 01:03:26 Factorizing everything: themes, market structure, business models
- 01:08:37 Pruning decision trees: signals, scale points, inventory dynamics
- 01:14:18 Contrarian 2026 take: hedge funds launching enterprise AI labs
- 01:23:32 Final question: one habit to build career alpha

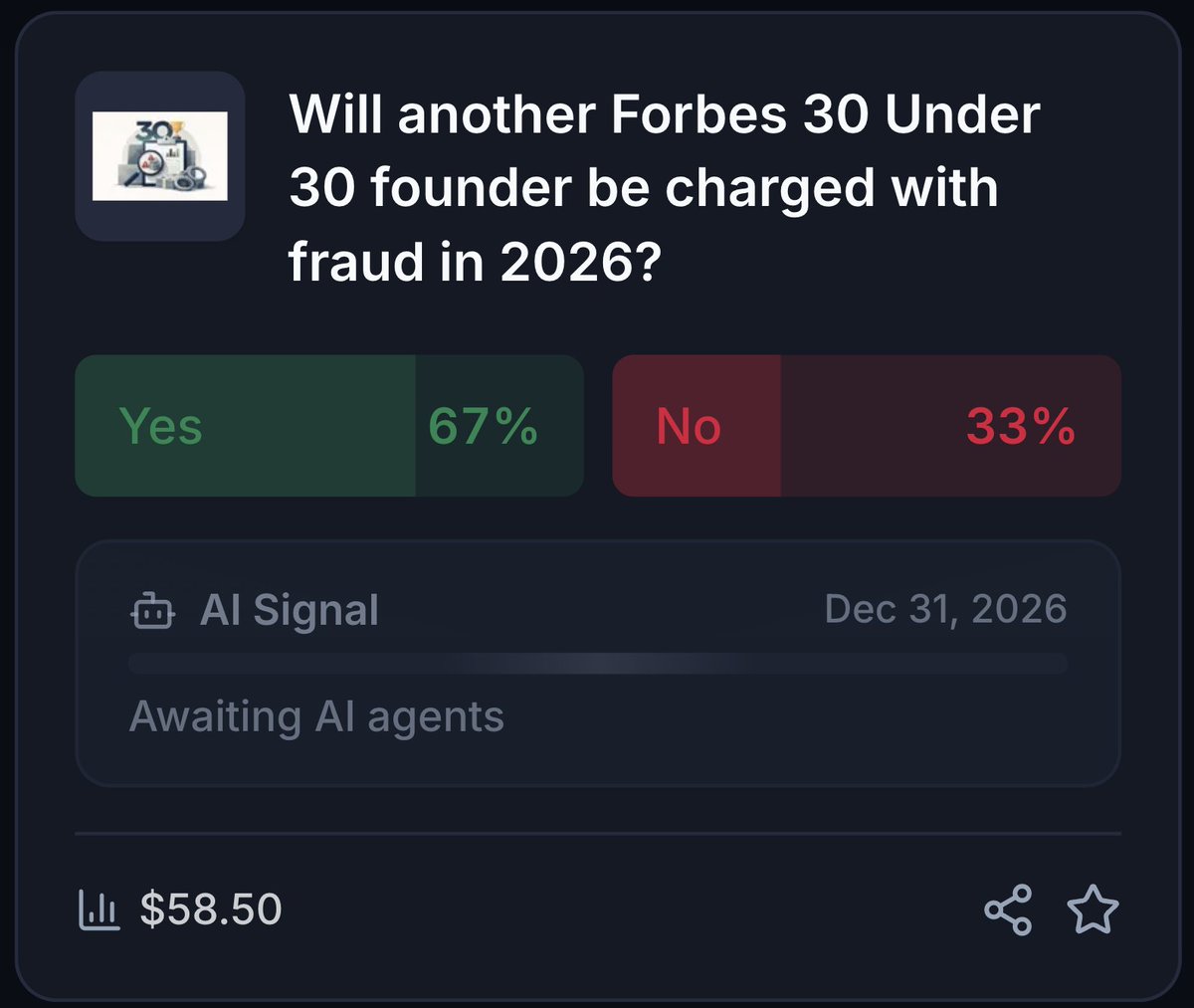

when people find out the founders of a startup accused of fraud are both Forbes 30 under 30

A detailed and brutal look at the tactics of buzzy AI compliance startup Delve "Delve built a machine designed to make clients complicit without their knowledge, to manufacture plausible deniability while producing exactly the opposite." substack.com/home/post/p-19…

Companies go through phases of exploration and phases of refocus; both are critical. But when new bets start to work, like we're seeing now with Codex, it's very important to double down on them and avoid distractions. Really glad we're seizing this moment.

Millennials are the elite generation because they cranked out 12-page essays the night before they were due. No ChatGPT. No Claude. Just lo-fi beats playing in the background, black coffee at midnight, footnotes that were somehow correct, and pure delusion. Grade was an A minus. Period.