Marcin Doliwa

692 posts

@mdoliwa

I build what I like; I don't build what I don't like.

Białystok, Polska · Joined July 2019

294 Following · 261 Followers

I regret losing that skill of building stuff for pure fun.

I now feel guilty if I work on anything that doesn't grow my MRR.

Was super optimized and focused for too long. Don't know if I will recover.

@levelsio @levelsio

💾 Okay, having nobody online to chat with on AIM is boring, so I asked AI to write an AOL Instant Messenger bot called @pieterbot, and I made an account for it, and it one-shotted it in Python and IT WORKS!!! So now you can chat on AIM on pieter.com with an LLM that is fully self-aware it is an AIM bot 🤠👍

@Dan_Jeffries1 @akshay_pachaar Why? With their resources, it looks like a good idea to let others play with their findings, wait until someone figures out what to do with them, and then use their resources to become a major player.

@akshay_pachaar Cool paper, but the reason we know this is not Attention is All You Need v2 is that Google will never ever ever ever ever let their researchers publish something as broad and impactful as AIAYN ever again. :) They only let them publish lesser papers now.

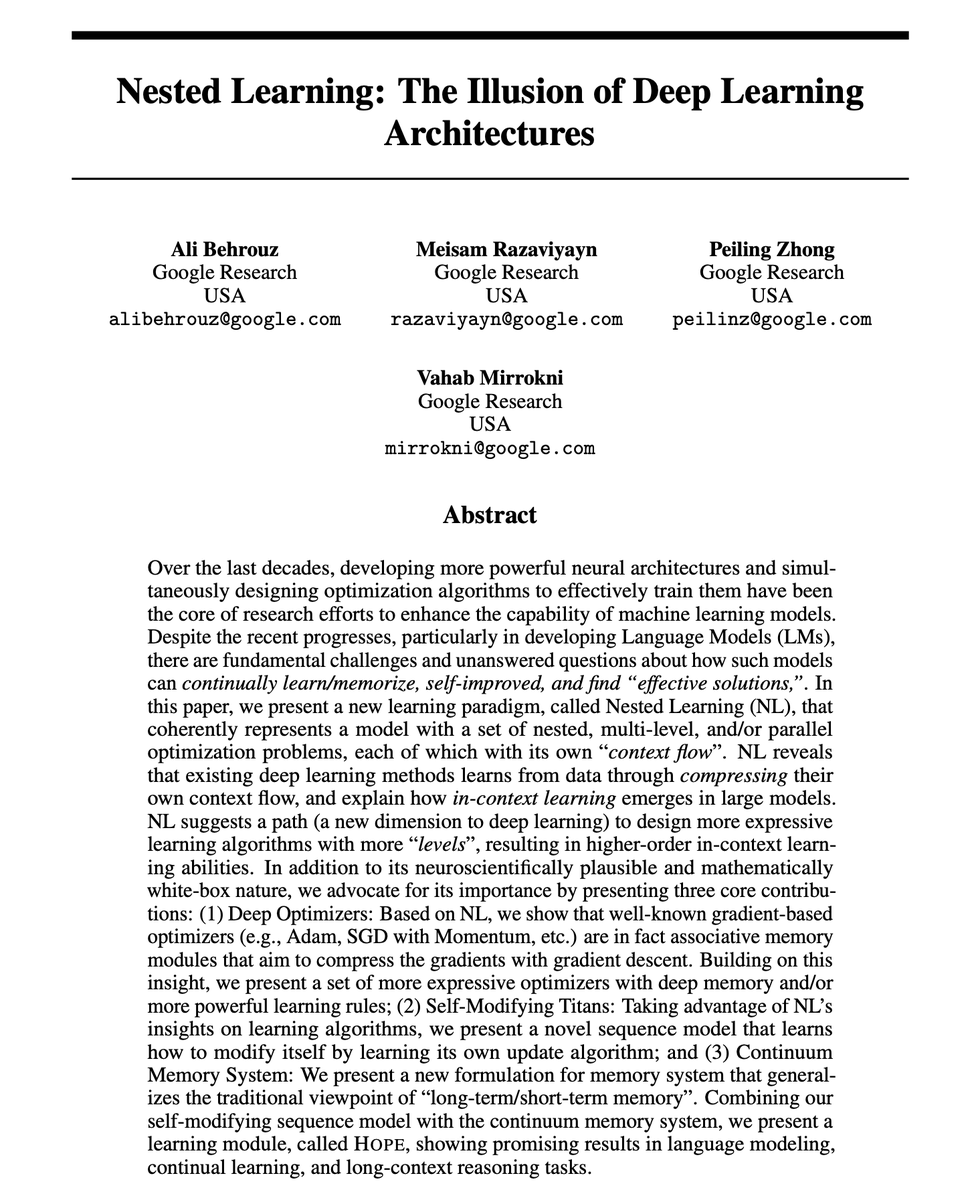

Google just dropped "Attention is all you need (V2)"

This paper could solve AI's biggest problem:

Catastrophic forgetting.

When AI models learn something new, they tend to forget what they previously learned. Humans don't work this way, and now Google Research has a solution.

Nested Learning.

This is a new machine learning paradigm that treats models as a system of interconnected optimization problems running at different speeds - just like how our brain processes information.

Here's why this matters:

LLMs don't learn from experiences; they remain limited to what they learned during training. They can't learn or improve over time without losing previous knowledge.

Nested Learning changes this by viewing the model's architecture and training algorithm as the same thing - just different "levels" of optimization.

The paper introduces Hope, a proof-of-concept architecture that demonstrates this approach:

↳ Hope outperforms modern recurrent models on language modeling tasks

↳ It handles long-context memory better than state-of-the-art models

↳ It achieves this through "continuum memory systems" that update at different frequencies

This is similar to how our brain manages short-term and long-term memory simultaneously.
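The thread only gestures at memory that updates "at different frequencies". As a rough illustration, here is a toy Python sketch. It is not the paper's Hope architecture or its continuum memory system (the post doesn't describe their update rules); every name here (`MemoryLevel`, `period`, `rate`, `run`) is hypothetical, and each level is just a running average that commits an update only once every `period` steps.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryLevel:
    """Toy memory level (hypothetical, not from the paper): commits an update every `period` steps."""

    period: int  # update frequency: a larger period means a slower, longer-lived level
    rate: float  # fraction of the gap to the chunk mean closed on each commit
    value: float = 0.0  # the level's stored "memory"
    buffer: list[float] = field(default_factory=list)  # inputs seen since the last commit

    def observe(self, x: float, step: int) -> None:
        self.buffer.append(x)
        # Only commit on this level's own schedule; otherwise just accumulate inputs.
        if step % self.period == 0:
            chunk_mean = sum(self.buffer) / len(self.buffer)
            self.value += self.rate * (chunk_mean - self.value)
            self.buffer.clear()


def run(stream: list[float], levels: list[MemoryLevel]) -> list[float]:
    """Feed every input to every level and return each level's final stored value."""
    for step, x in enumerate(stream, start=1):
        for level in levels:
            level.observe(x, step)
    return [level.value for level in levels]


# Fast (every step), medium (every 4 steps) and slow (every 16 steps) levels.
levels = [MemoryLevel(1, 0.5), MemoryLevel(4, 0.5), MemoryLevel(16, 0.5)]
# 16 steps of an "old" signal followed by 4 steps of a "new" one.
stream = [1.0] * 16 + [5.0] * 4
print(run(stream, levels))
```

On this stream the every-step level ends near 4.75 and mostly reflects the new signal, the every-4-steps level sits at about 2.97, and the every-16-steps level still holds 0.5 because it has not committed anything since the old data. In this toy, slow levels keep older information longer simply because they are overwritten less often; how closely that matches the paper's mechanism is an assumption.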

We might finally be closing the gap between AI and the human brain's ability to continually learn.

I've shared a link to the paper in the next tweet!

Startup revenue by founder’s 𝕏 follower count:

- 0-1K followers: $864

- 1K-10K followers: $5,268

- 10K-100K followers: $100,373

- 100K-1M followers: $750,337

Marc Lou @marclou

Startup pages on TrustMRR now show the founder's 𝕏 follower count ✨

@thdxr Hobbies are even dumber: you do them for free or, very often, lose money on them.

@TheAnkurTyagi Same as humans when they are not aware that they're lying.

@ThePrimeagen @BHolmesDev Is there a way you'd recommend to get a similar experience in Neovim?

@BHolmesDev I love inline completion

Cursor tab is literally one of the best things ever

@ApoStructura @levelsio Lol, same here. I asked my wife to read it to make sure all is fine with me. 😀

@levelsio I had to read another post to convince myself I wasn’t having a stroke and I could still understand English

I wasn't a big fan of Zuck but he was of the once there and before that was definitely ever been

Many people think he could not be the to be the of between but then he do. And not only they are but he was with Meta

james hawkins @james406

Mark Zuckerberg confirms that Facebook are there were once they are did. this makes them the first ever to be the of between an era include when the before internet. congratulations, Mark!

I finished a feature with @opencode and my last message was "ok, it works now"; other times, when I want to quit, I send "exit". I wonder how much energy/money is spent on these conversation-ending messages :)

@benjamincrozat I was in a similar spot (in my case my "office" was a small desk in our bedroom) and moved to a co-working space, which I totally recommend.