Marius Memmel

130 posts

@memmelma

Robotics PhD student @UW, previously intern @NVIDIA, intern @Bosch_AI, @EPFL, @TUDarmstadt, @DHBW

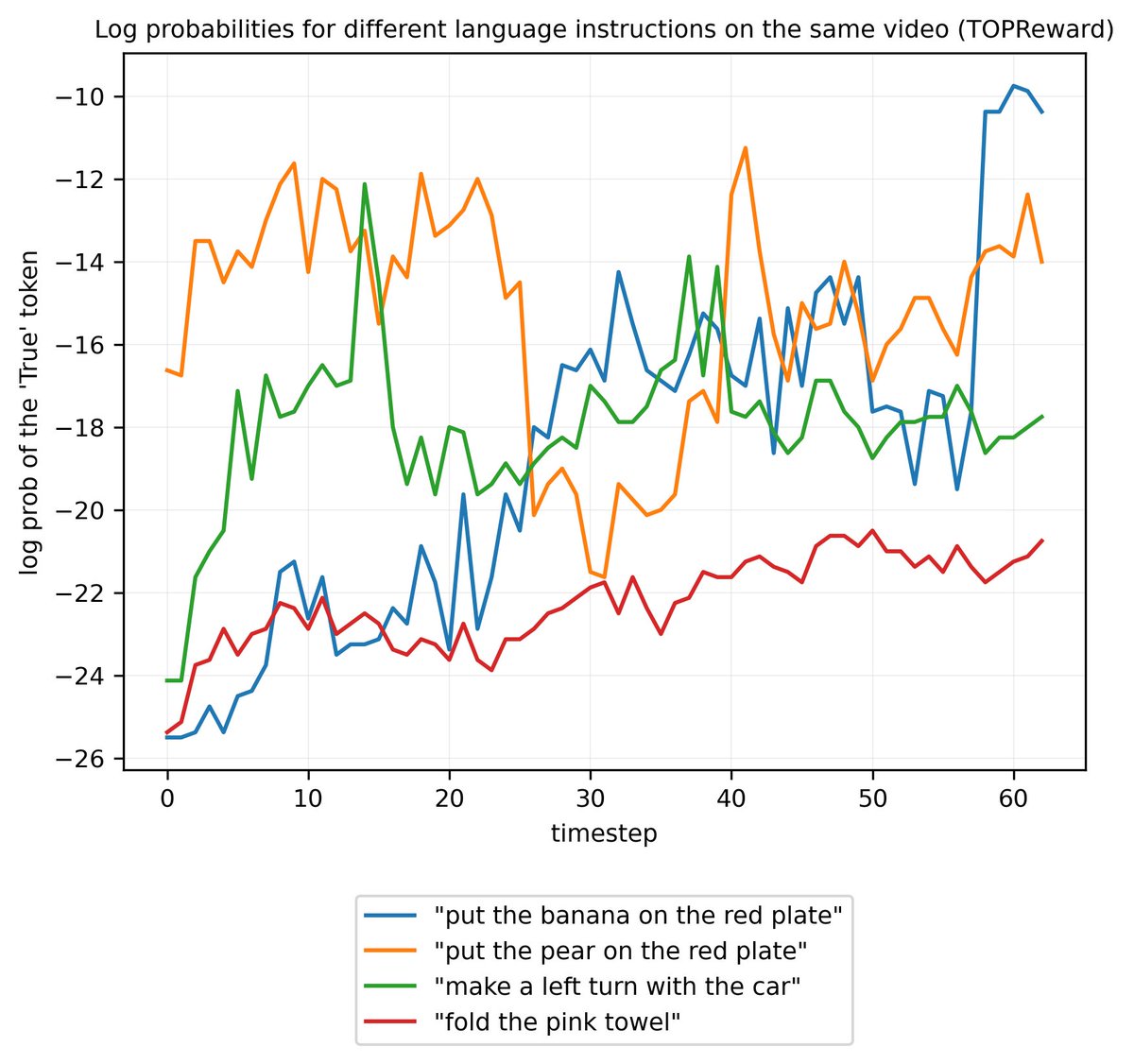

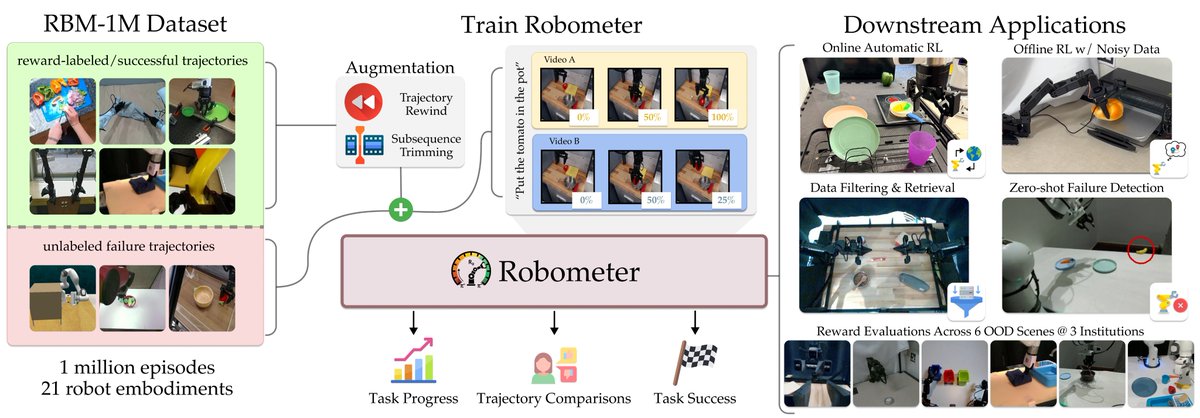

A reward model that works, zero-shot, across robots, tasks, and scenes? Introducing Robometer: Scaling general-purpose robotic reward models with 1M+ trajectories. Enables zero-shot: online/offline/model-based RL, data retrieval + IL, automatic failure detection, and more! 🧵 (1/12)

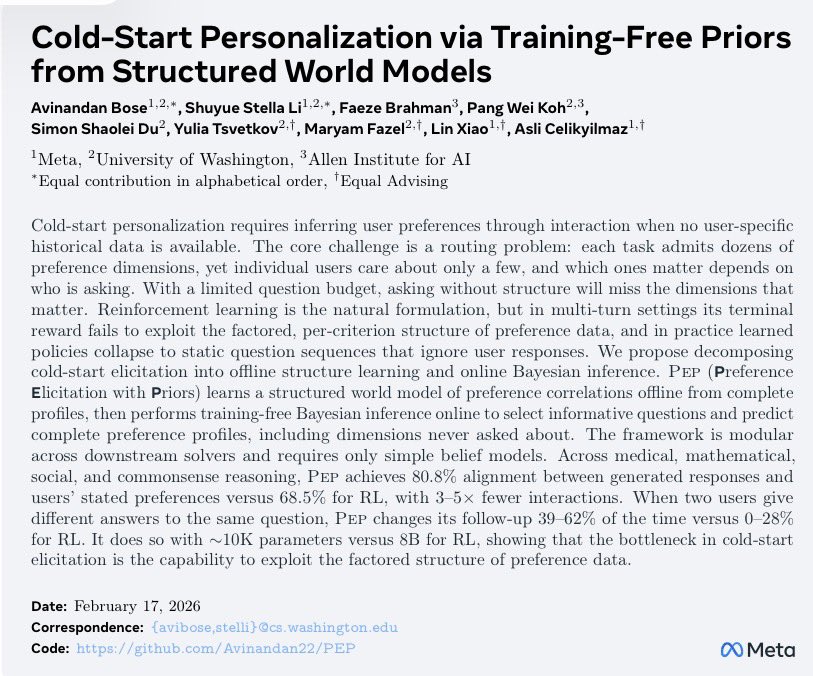

Personalization assumes you need history with a user. What if you don't? Cold-start is hard: each task and user has many preference dimensions, but each user only cares about a few. A few strategic questions are all you need, if you know how preferences correlate across the population 👉🏻🧵

Pretrained diffusion/flow policies are powerful, but brittle at deployment. We introduce RFS, a data-efficient RL framework that:

• steers latent noise for global adaptation

• applies residual actions for precise local correction

Works in sim and real-world dexterous manipulation 🖐️🤖

👉📄 Paper + videos: entongsu.github.io/rfs/