Carlos E. Perez (@IntuitMachine)

We've become obsessed with the idea that the brain is a "Prediction Machine."

The dominant theory in neuroscience says we're constantly simulating the future, calculating probabilities to guess what happens next.

A new paper argues this is a complete illusion. The reality is simpler, and strangely, much more powerful.

Here is the argument for Perceptual Control:

The "Prediction Illusion" starts with a mistake in observation.

When we see someone successfully handle a chaotic environment (like catching a fly ball), it *looks* like they predicted the future trajectory of the ball.

But behavior that looks predictive to an observer doesn't have to be produced by prediction.

The authors use a fitting analogy: Watt's steam governor.

From the late 18th century onward, this device kept steam engines running at a near-constant speed. If pressure surged, it throttled the steam back. If load increased, it opened the valve further.

To an observer, it looked like the machine was "predicting" pressure surges and pre-empting them.

But the Governor has no brain. It has no model of the future.

It’s a mechanical negative feedback loop. It measures the *current* speed, compares it to the *desired* speed, and adjusts the valve immediately.

It doesn't predict; it controls.
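
To make the loop concrete, here is a minimal sketch of a governor-style proportional controller. The engine model and every number in it (valve gain, friction, load values, the `simulate` function itself) are made-up assumptions for illustration, not taken from the paper or from a real engine. The only thing the controller ever uses is the current speed error.

```python
def simulate(governed, desired=50.0, dt=0.01, t_end=60.0):
    """Toy engine: speed changes with valve opening, friction, and load.

    All constants are illustrative assumptions, not real engine data.
    """
    speed = desired          # start at the desired speed
    base_valve = 0.6         # valve opening that holds 50 under the light load
    gain = 0.1               # how strongly the governor reacts to speed error
    for step in range(int(t_end / dt)):
        t = step * dt
        load = 1.0 if t < 10.0 else 3.0      # load jumps at t = 10 s
        error = desired - speed              # current error only, no forecast
        valve = base_valve + gain * error if governed else base_valve
        valve = min(1.0, max(0.0, valve))    # a valve cannot open past its limits
        speed += dt * (10.0 * valve - 0.1 * speed - load)
    return speed


if __name__ == "__main__":
    print("final speed with governor:   ", round(simulate(True), 2))
    print("final speed without governor:", round(simulate(False), 2))
```

In this toy model the governed engine settles slightly below the setpoint after the load jump (pure proportional feedback leaves a small offset), while the ungoverned one sags far below it. Nothing in the loop models when the load will change.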

This brings us to the "Hello" experiment, which broke my brain a little.

Researchers asked people to keep a computer cursor on a target. The computer applied a "disturbance" (forces pushing the cursor away) that the person had to fight against with their mouse.

Here's the twist:

The disturbance wasn't random. It was an invisible force field shaped like the word "hello" (written upside down and mirrored).

The participants fought the force, keeping the cursor steady.

When researchers looked at the participants' hand movements, they had perfectly written the word "hello".

Crucially, the participants had NO idea they were writing words.

If the brain were a "prediction machine," it would have needed to model the force to predict the hand movement.

But the participants wrote a legible word purely by reacting to immediate error signals—instantaneously correcting the cursor's position.
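
A rough one-dimensional sketch of why this happens, under simplifying assumptions: the cursor position is the hand position plus the disturbance, and the "participant" is modeled as a controller that nudges the hand in proportion to the current cursor error. The `disturbance` waveform below is a made-up smooth signal standing in for the "hello" pattern, and the gain and time step are arbitrary choices, not values from the experiment.

```python
import math


def disturbance(t):
    # Hypothetical stand-in for the hidden "hello" pattern (1-D, smooth).
    return math.sin(0.5 * t) + 0.5 * math.sin(1.3 * t)


def track(duration=30.0, dt=0.01, gain=20.0, target=0.0):
    """Keep the cursor on target by reacting to the current error only."""
    hand = 0.0
    hand_trace, cursor_trace = [], []
    for step in range(int(duration / dt)):
        d = disturbance(step * dt)
        cursor = hand + d            # what the participant sees
        error = target - cursor      # gap between perception and reference
        hand_trace.append(hand)
        cursor_trace.append(cursor)
        hand += gain * error * dt    # move the hand to shrink the gap
    return hand_trace, cursor_trace


if __name__ == "__main__":
    hand_trace, cursor_trace = track()
    print("largest cursor error: ", max(abs(c) for c in cursor_trace))
    print("largest hand movement:", max(abs(h) for h in hand_trace))
```

Because the cursor stays close to the target, the hand trace ends up being close to the negative of the disturbance at every moment. The controller "writes" the mirror image of a pattern it never represents.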

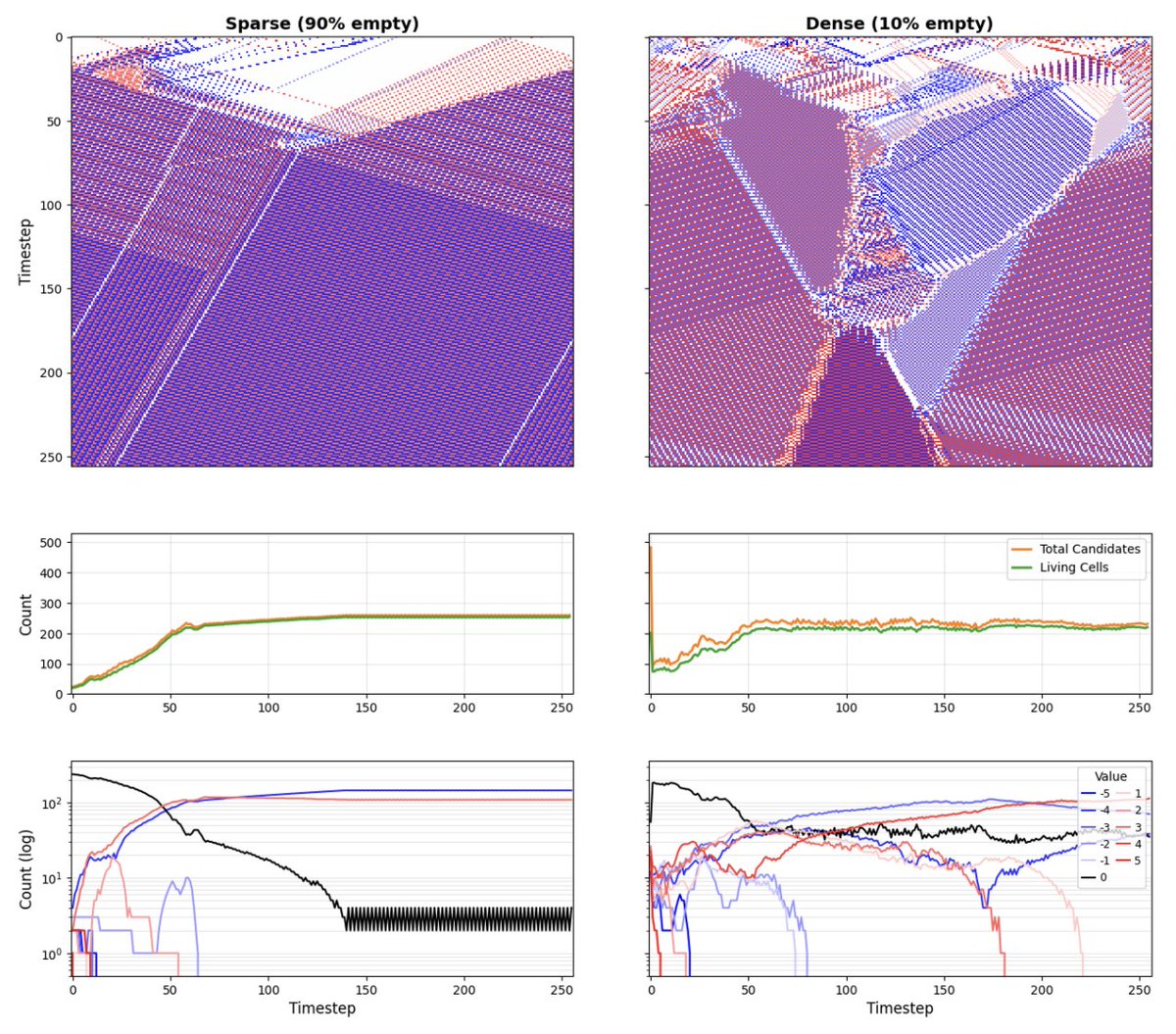

This is **Perceptual Control Theory (PCT)**.

The theory suggests the nervous system isn't a linear pipeline (Input → Compute → Output).

It’s a closed loop. We act to keep our *perception* of the world matching our internal *reference value*.

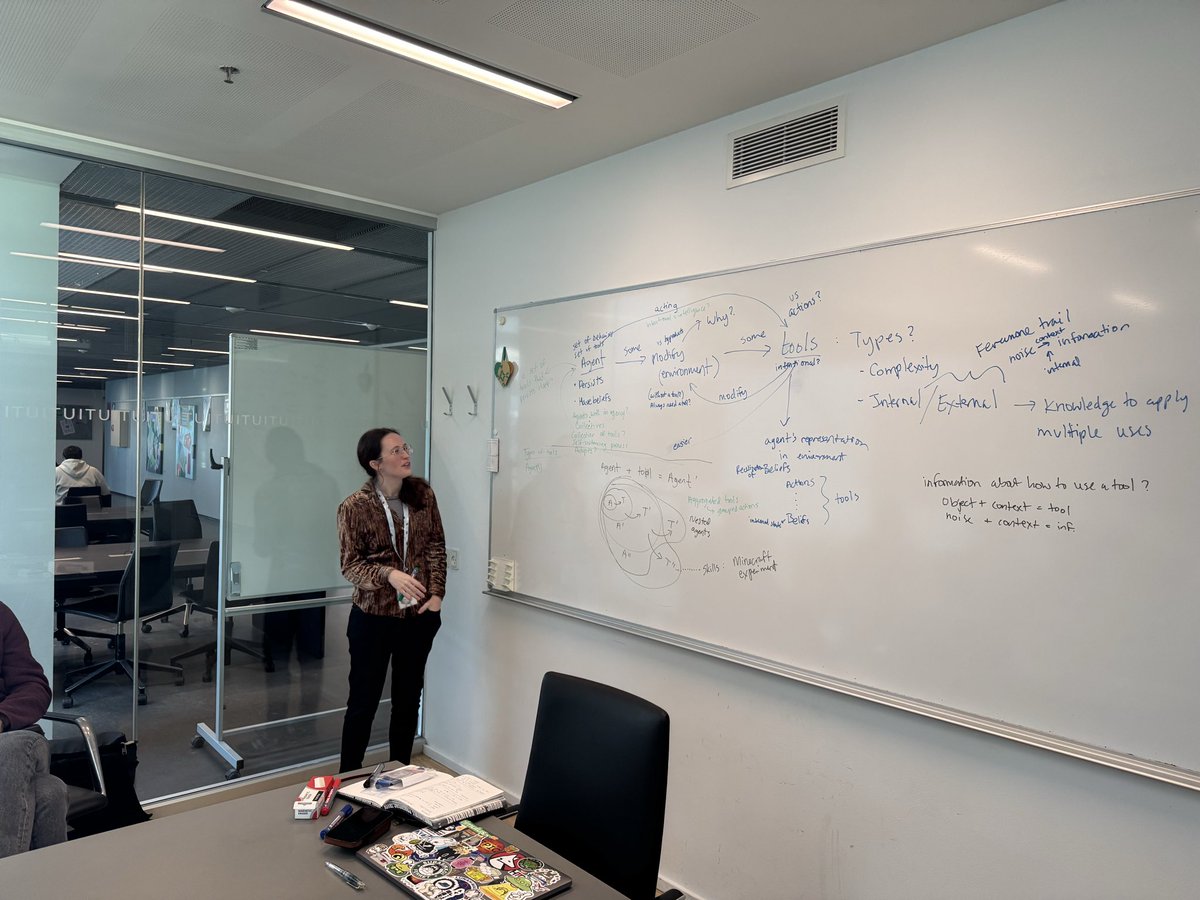

Think about catching a baseball.

If you were a "prediction machine," you’d calculate the ball's trajectory, wind speed, and gravity, then run to where the ball *will* be.

But that’s computationally expensive and error-prone.

In reality, fielders just run in a way that keeps the "optical velocity" of the ball constant in their vision.

If the ball looks like it's rising too fast, they move back. Dropping? They move forward.

No physics calculus required. Just maintaining a visual constant.
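
A small numerical check of the visual cue, as a sketch under idealized assumptions (no air resistance, a ball hit straight at a fielder who stands still, made-up launch speeds). It is not a model of a running fielder. It only shows that the tangent of the ball's elevation angle rises at a steady rate when you stand at the landing spot, speeds up when you are too close, and slows down when you are too far back, which is the signal the "move back / move forward" rule relies on. The function names are hypothetical.

```python
G = 9.81  # gravity in m/s^2


def tan_elevation(t, fielder_x, vx=15.0, vy=20.0):
    """Tangent of the ball's elevation angle seen by a stationary fielder."""
    ball_x = vx * t
    ball_y = vy * t - 0.5 * G * t * t
    return ball_y / (fielder_x - ball_x)


def optical_acceleration(fielder_x, t_start=0.5, t_end=3.0, dt=0.1):
    """Second differences of tan(elevation): is the optical velocity changing?"""
    n = int(round((t_end - t_start) / dt)) + 1
    tans = [tan_elevation(t_start + i * dt, fielder_x) for i in range(n)]
    return [tans[i + 2] - 2 * tans[i + 1] + tans[i] for i in range(n - 2)]


# Where this particular ball comes down (roughly 61 m from the batter).
landing_x = 15.0 * (2 * 20.0 / G)

if __name__ == "__main__":
    for label, x in [("too close", 50.0), ("on the spot", landing_x), ("too far", 75.0)]:
        accel = optical_acceleration(x)
        print(label, "-> first second-difference:", accel[0])
```

A fielder who keeps nulling that change by moving never has to solve for the landing point explicitly.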

This solves the "Noise" problem.

In predictive models, small jitters in your movement are considered "noise" or errors to be filtered out.

In PCT, those jitters aren't noise. They're the system "feeling out" the environment to maintain control.

This has huge implications for AI and robotics.

We are currently building robots with massive compute power to "predict" stability.

But robots built on PCT principles—like inverted pendulums that just react to maintain verticality—are often more robust and stable than the predictive ones.
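
As an illustration of the purely reactive approach, here is a minimal sketch of an inverted pendulum held upright by feedback on its current angle and angular velocity. It assumes a frictionless point-mass pendulum with torque applied directly at the pivot, and the gains `kp` and `kd` are hand-picked for this toy model. It is not the controller from the paper or from any specific robot, and it makes no claim about how predictive controllers would compare.

```python
import math


def balance(controlled, theta0=0.3, dt=0.001, t_end=5.0,
            kp=30.0, kd=8.0, g_over_l=9.81):
    """Simulate a pendulum starting tilted by theta0 radians from upright.

    Returns (final_angle, largest_angle_seen). All parameters are
    illustrative assumptions.
    """
    theta, omega = theta0, 0.0
    peak = abs(theta)
    for _ in range(int(t_end / dt)):
        # React to what is sensed right now: the tilt and how fast it changes.
        torque = -kp * theta - kd * omega if controlled else 0.0
        alpha = g_over_l * math.sin(theta) + torque  # gravity tips it over
        omega += alpha * dt
        theta += omega * dt
        peak = max(peak, abs(theta))
    return theta, peak


if __name__ == "__main__":
    print("with feedback (final, peak):   ", balance(True))
    print("without feedback (final, peak):", balance(False))
```

With the feedback switched on, the tilt decays back toward vertical. With it off, the pendulum falls over. The controller contains no model of where the pendulum will be later, only a reference (upright) and the current sensed state.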

Why does this matter for you?

It changes how we view "agency."

We often think we need to predict the outcome of our actions to be effective. But the most efficient systems don't predict the outcome. They specify the goal and let the feedback loop handle the rest.

The "Prediction Illusion" suggests we aren't prophets simulating the future.

We are controllers, surfing the present.

We don't need to know what the wave will do in 10 seconds. We just need to keep the board steady right now.

If you want to dig into the paper, it’s "The prediction illusion: perceptual control mechanisms that fool the observer" by Mansell, Gulrez, and Landman (2025).

It’s a dense read, but it completely reframes the "Bayesian Brain" debate.

One final thought:

Next time you're doing something skilled—driving, typing, sports—notice the difference.

Are you calculating what comes next? Or are you just managing the gap between *what you see* and *what you want*?

You might find you're doing a lot less "thinking" than you assumed.