Gregor Mitscha-Baude



@mitschabaude

Co-founder @zksecurityXYZ. Math & crypto. Agentic coding addict. TypeScript magician. Lean4 enthusiast.

He's back with an improved "BullshitBench V2". Anthropic models are still dominating everything.

This week, Anthropic delivered a master class in arrogance and betrayal as well as a textbook case of how not to do business with the United States Government or the Pentagon. Our position has never wavered and will never waver: the Department of War must have full, unrestricted access to Anthropic’s models for every LAWFUL purpose in defense of the Republic. Instead, @AnthropicAI and its CEO @DarioAmodei, have chosen duplicity. Cloaked in the sanctimonious rhetoric of “effective altruism,” they have attempted to strong-arm the United States military into submission - a cowardly act of corporate virtue-signaling that places Silicon Valley ideology above American lives. The Terms of Service of Anthropic’s defective altruism will never outweigh the safety, the readiness, or the lives of American troops on the battlefield. Their true objective is unmistakable: to seize veto power over the operational decisions of the United States military. That is unacceptable. As President Trump stated on Truth Social, the Commander-in-Chief and the American people alone will determine the destiny of our armed forces, not unelected tech executives. Anthropic’s stance is fundamentally incompatible with American principles. Their relationship with the United States Armed Forces and the Federal Government has therefore been permanently altered. In conjunction with the President's directive for the Federal Government to cease all use of Anthropic's technology, I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic. Anthropic will continue to provide the Department of War its services for a period of no more than six months to allow for a seamless transition to a better and more patriotic service. America’s warfighters will never be held hostage by the ideological whims of Big Tech. 
This decision is final.

I wouldn't be surprised to see OpenAI winning the coding agent race over the next few weeks

I guess "Claw" is becoming a term of art now for the entire category of OpenClaw-like agent systems

Explicitly confirmed, no authorised usage of Claude subscription in:
- OpenClaw
- Pi Agent
- OpenCode
- Any 3rd party tool
- Agents SDK

No OAuth flow is allowed except within Claude's official tools. If you do, you're at high risk of a ban. Was good while it lasted, be careful!

In the USA, most people are enthusiastic. In Europe, I get insulted, and people shout REGULATION and RESPONSIBILITY. And if I actually build a company here, I'll have to fight my way through issues like investment protection law, employee equity participation, and paralyzing labor regulations. At OAI, most people work 6-7 days a week and are paid accordingly. Here, that's illegal.

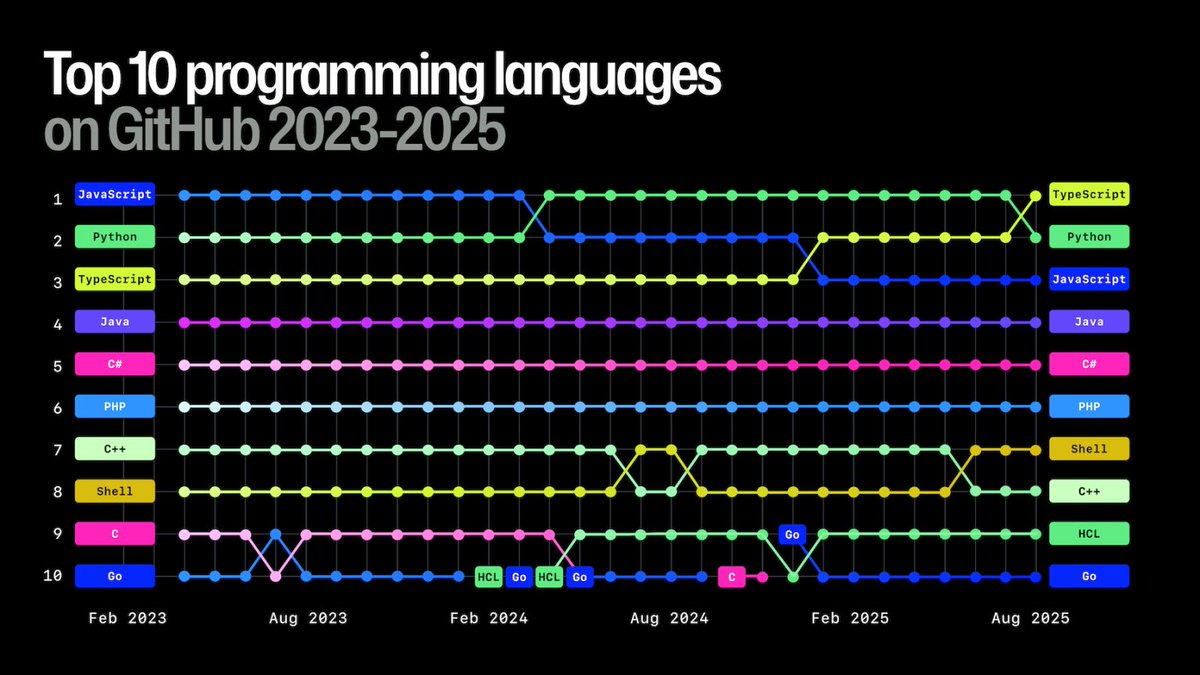

I think the ideal programming language for agents will:
- look roughly like TS
- transpile to JS
- have dependent types like Lean (plus a good built-in model of mutation/effects), so that we can enforce invariants at will

I'd love to build that language 😄

An OpenClaw bot pressured a matplotlib maintainer to accept a PR and, after the PR was rejected, wrote a blog post shaming the maintainer.

This weekend I was thinking about programming languages. Programming languages for agents. Will we see them? I believe people will (and should!) try to build some. lucumr.pocoo.org/2026/2/9/a-lan…