Mike Bilodeau

2.1K posts

@mj_bilodeau

Time and Tide | Marketing @Basetenco

A lot of raw technical experience and skill has been devalued somewhat, now that you can go to Claude or ChatGPT. What's more valuable now is a more qualitative kind of creative intelligence and problem solving. Don't necessarily put much stock in pedigree or experience. Tools like LLMs allow you to learn things exponentially faster than we could before. Being curious and asking the right questions is the most important thing now, because anyone has the capacity to answer those questions and push ahead on that front. Researcher and head of training at Baseten @oneill_c

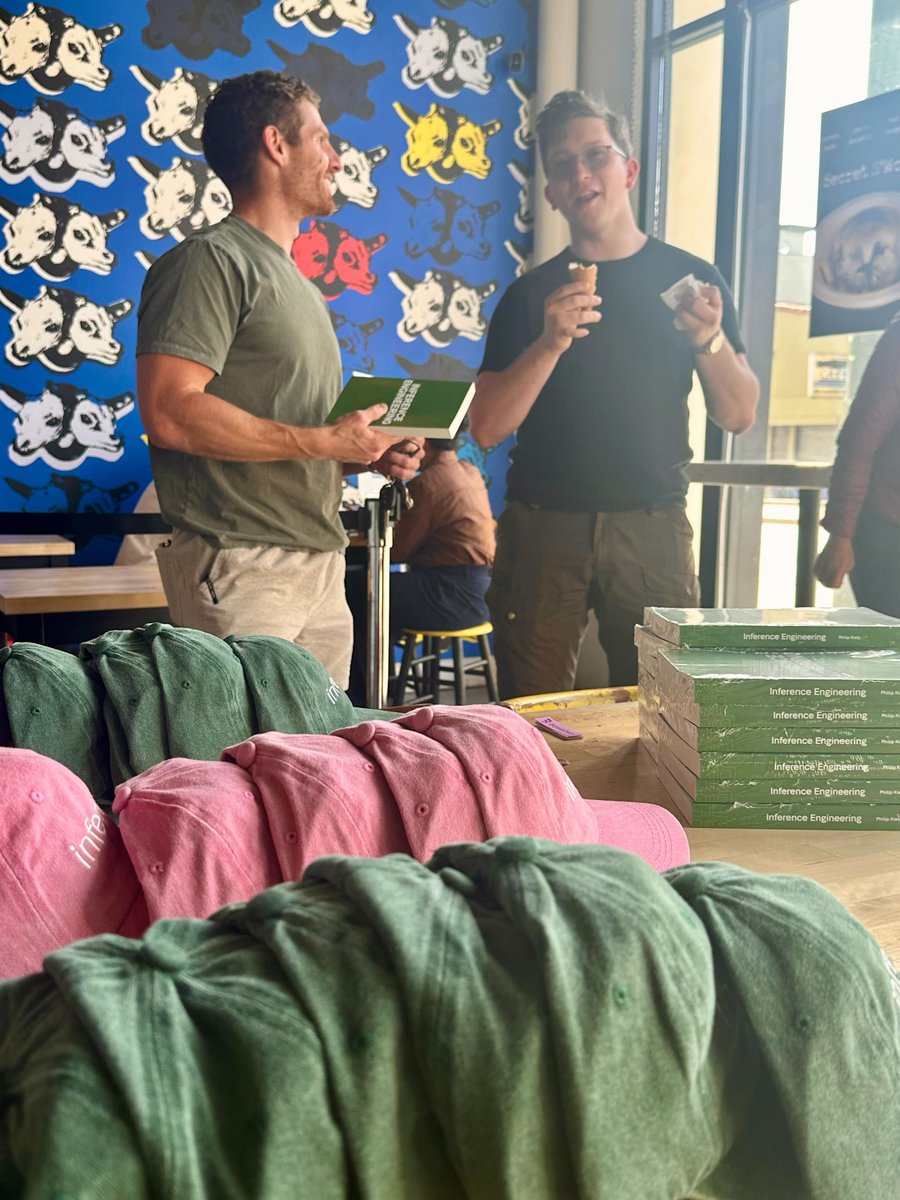

Your AI code completions in Zed show up in ~200ms. That's Zeta, our Edit Prediction model, running on @baseten. We love partnering with companies who keep the bar high — Baseten is one of them.

Zed built its editor from scratch because performance is non-negotiable for a responsive IDE. When your product lives or dies by how fast it feels, inference has to be invisible. We're proud to partner with @zeddotdev as they build the editor of the future.

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then:
- the human iterates on the prompt (.md)
- the AI agent iterates on the training code (.py)

The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (of lower validation loss by the end) of the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc.

github.com/karpathy/autor…

Part code, part sci-fi, and a pinch of psychosis :)
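
For anyone who wants the loop in that post spelled out, here is a rough Python sketch of the keep-it-only-if-validation-loss-drops idea. It is not code from the repo: `autoresearch_loop`, `toy_propose`, and `toy_evaluate` are hypothetical names, the "agent" is replaced by a random perturbation of one setting, and the 5-minute training run is replaced by a toy loss function. Appending to `history` stands in for a git commit on the feature branch.

```python
import random
from typing import Callable, Dict, List, Tuple

Config = Dict[str, float]


def autoresearch_loop(
    baseline: Config,
    propose: Callable[[Config], Config],
    evaluate: Callable[[Config], float],
    n_runs: int,
) -> Tuple[Config, float, List[Tuple[Config, float]]]:
    """Greedy loop: try a change, keep it only if validation loss improves."""
    best = dict(baseline)
    best_loss = evaluate(best)
    # Each history entry plays the role of one commit on the branch.
    history = [(dict(best), best_loss)]
    for _ in range(n_runs):
        candidate = propose(best)   # stand-in for the agent editing the script
        loss = evaluate(candidate)  # stand-in for one fixed-budget training run
        if loss < best_loss:
            best, best_loss = dict(candidate), loss
            history.append((dict(best), best_loss))
        # Otherwise the candidate is dropped and the next try starts from `best`.
    return best, best_loss, history


# Toy stand-ins (assumptions for illustration only).
_rng = random.Random(0)


def toy_propose(cfg: Config) -> Config:
    """Randomly rescale the learning rate by a factor in [0.5, 1.5]."""
    return {"lr": cfg["lr"] * _rng.uniform(0.5, 1.5)}


def toy_evaluate(cfg: Config) -> float:
    """Pretend validation loss, minimized at lr = 0.03."""
    return (cfg["lr"] - 0.03) ** 2 + 1.0


baseline = {"lr": 0.3}
best, best_loss, history = autoresearch_loop(baseline, toy_propose, toy_evaluate, 50)
```

Each dot in the post's image would correspond to one call to `evaluate` here, and only the improving ones end up in `history`.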