Miika Leppänen

261 posts

Miika Leppänen retweeted

BUILD YOUR OWN SOFTWARE FACTORY

The best companies in the world are all building their own software factories, using a combination of first party and third party tools.

The software factory is a set of background coding agents running in the cloud. Anyone at the company, engineering or otherwise, can invoke it from their phone or laptop. Different agents spec out the feature, submit a PR, do the review, and merge it into the target branch. (With the right human approvals based on the repo)

As @ericwilliamrea, CEO of @PodiumHQ, says: “One of our customers was having a problem; I tagged our software factory in the feedback on Slack; it went and cut a PR and built it, and 15 minutes later we had a fix.”

CEOs: THIS is the best way to get your entire team - your PMs, designers, sales, marketers, execs - involved in the product development process.

Put a small dedicated team on building and continually improving your own software factory. The improvement in your team’s product velocity will be palpable.
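The flow described in this post (spec, PR, review, then a merge gated by per-repo human approval) could be sketched roughly as below. This is only an illustration: the repo names, the `REQUIRED_APPROVALS` table, the `ChangeRequest` type, and the stage names are all hypothetical, and `run_stage` is a stand-in for whatever actually invokes a background agent.

```python
from dataclasses import dataclass, field

# Hypothetical per-repo policy: how many human approvals a merge needs.
REQUIRED_APPROVALS = {"billing-service": 2, "marketing-site": 0}


@dataclass
class ChangeRequest:
    repo: str
    request: str
    history: list = field(default_factory=list)   # stages that have run
    approvals: set = field(default_factory=set)   # humans who approved


def run_stage(change: ChangeRequest, stage: str) -> None:
    # Placeholder for calling a background coding agent; here we only
    # record that the stage ran.
    change.history.append(stage)


def process(change: ChangeRequest) -> str:
    # Agents handle spec, PR, and review without waiting on a human.
    for stage in ("spec", "pull_request", "review"):
        run_stage(change, stage)

    # Unknown repos default to requiring one human approval (an assumption).
    needed = REQUIRED_APPROVALS.get(change.repo, 1)
    if len(change.approvals) >= needed:
        run_stage(change, "merge")
        return "merged"
    return "awaiting_approval"
```

The point of the sketch is that the approval gate lives in policy data keyed by repo, not in the agents' prompts.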

Miika Leppänen retweeted

alright here’s every practical security tip i have on agents:

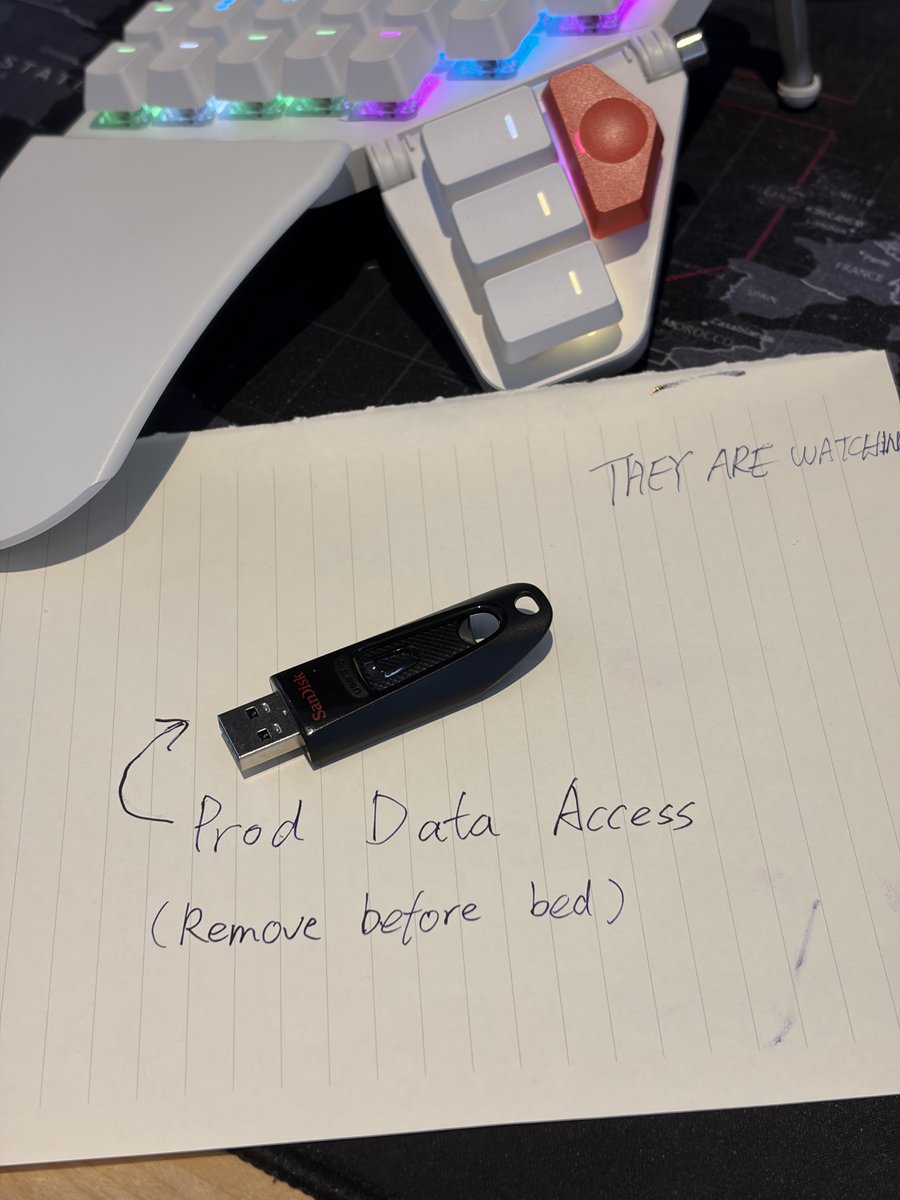

- move critical data to a USB stick, unplug when sleeping

- security by least privilege, not by prompts

- billing cap on everything AI touches

- limit reads of external data, wrap in always

- don’t post about what access your agent has publicly - those are prompt injection invitations (unless @levelsio already posted about it, then it’s a race)

- don’t connect to moltbook (lol?)

- roll every skill yourself

- sandbox browser access

- readonly prod access

- allow prod writes only for specific use cases (i have /admin/zoe/* for zoe to handle support cases like credit topups)

- one-time access for anything sensitive (eg gmail) with human in the loop, self-revoke access on script finish

- create dedicated scripts, avoid improvised bash

- use better models

- audit trails everywhere -> security self-improvements

mistakes will happen. limit worst case, embrace the rest
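A few of the tips above (read-only prod access, writes only under a specific path such as /admin/zoe/*, audit trails everywhere) can be sketched as one small policy check. This is a minimal sketch, not the author's setup: `is_allowed`, `WRITE_ALLOWLIST`, and the in-memory `audit_log` are hypothetical names, and a real deployment would enforce this at the credential or gateway level, not in the agent's own process.

```python
import posixpath
from fnmatch import fnmatchcase

# Assumption: HTTP-style access. Reads are allowed everywhere,
# writes only under explicitly allowlisted path patterns.
READ_METHODS = {"GET", "HEAD", "OPTIONS"}
WRITE_ALLOWLIST = ["/admin/zoe/*"]  # pattern taken from the tip above

audit_log = []  # hypothetical in-memory audit trail


def is_allowed(method: str, path: str) -> bool:
    method = method.upper()
    # Normalize first so "/admin/zoe/../users/1" cannot sneak past the pattern.
    normalized = posixpath.normpath(path)
    if method in READ_METHODS:
        allowed = True
    else:
        allowed = any(fnmatchcase(normalized, p) for p in WRITE_ALLOWLIST)
    # Record every decision, allowed or not, so denials can be reviewed later.
    audit_log.append({"method": method, "path": normalized, "allowed": allowed})
    return allowed
```

The decision depends only on method and path, never on anything the model says, which is the "least privilege, not prompts" idea in miniature.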

@levelsio

This guy has lots of great security tips if you're coding with AI, great follow @elvissun

Miika Leppänen retweeted

I've published the first two chapters of a new guide to Agentic Engineering Patterns - coding practices and patterns to help get the best results out of coding agents like Claude Code and OpenAI Codex simonwillison.net/2026/Feb/23/ag…

Miika Leppänen retweeted

A secure playground for agentic dev that you can publish from immediately.

Here’s the VPS setup I just finished on Hetzner 👇 linkedin.com/pulse/secure-h… via @LinkedIn @levelsio

Miika Leppänen retweeted

The future is software writing its own software. Which is why I'm so in love with Pi: a coding agent that can extend itself :) lucumr.pocoo.org/2026/1/31/pi/

Miika Leppänen retweeted

A few random notes from claude coding quite a bit last few weeks.

Coding workflow. Given the latest lift in LLM coding capability, like many others I rapidly went from about 80% manual+autocomplete coding and 20% agents in November to 80% agent coding and 20% edits+touchups in December. i.e. I really am mostly programming in English now, a bit sheepishly telling the LLM what code to write... in words. It hurts the ego a bit but the power to operate over software in large "code actions" is just too net useful, especially once you adapt to it, configure it, learn to use it, and wrap your head around what it can and cannot do. This is easily the biggest change to my basic coding workflow in ~2 decades of programming and it happened over the course of a few weeks. I'd expect something similar to be happening to well into double digit percent of engineers out there, while the awareness of it in the general population feels well into low single digit percent.

IDEs/agent swarms/fallibility. Both the "no need for IDE anymore" hype and the "agent swarm" hype are imo too much for right now. The models definitely still make mistakes and if you have any code you actually care about I would watch them like a hawk, in a nice large IDE on the side. The mistakes have changed a lot - they are not simple syntax errors anymore, they are subtle conceptual errors that a slightly sloppy, hasty junior dev might do. The most common category is that the models make wrong assumptions on your behalf and just run along with them without checking. They also don't manage their confusion, they don't seek clarifications, they don't surface inconsistencies, they don't present tradeoffs, they don't push back when they should, and they are still a little too sycophantic. Things get better in plan mode, but there is some need for a lightweight inline plan mode. They also really like to overcomplicate code and APIs, they bloat abstractions, they don't clean up dead code after themselves, etc. They will implement an inefficient, bloated, brittle construction over 1000 lines of code and it's up to you to be like "umm couldn't you just do this instead?" and they will be like "of course!" and immediately cut it down to 100 lines. They still sometimes change/remove comments and code they don't like or don't sufficiently understand as side effects, even if it is orthogonal to the task at hand. All of this happens despite a few simple attempts to fix it via instructions in CLAUDE.md. Despite all these issues, it is still a net huge improvement and it's very difficult to imagine going back to manual coding. TLDR everyone has their developing flow, my current is a small few CC sessions on the left in ghostty windows/tabs and an IDE on the right for viewing the code + manual edits.

Tenacity. It's so interesting to watch an agent relentlessly work at something. They never get tired, they never get demoralized, they just keep going and trying things where a person would have given up long ago to fight another day. It's a "feel the AGI" moment to watch it struggle with something for a long time just to come out victorious 30 minutes later. You realize that stamina is a core bottleneck to work and that with LLMs in hand it has been dramatically increased.

Speedups. It's not clear how to measure the "speedup" of LLM assistance. Certainly I feel net way faster at what I was going to do, but the main effect is that I do a lot more than I was going to do because 1) I can code up all kinds of things that just wouldn't have been worth coding before and 2) I can approach code that I couldn't work on before because of knowledge/skill issue. So certainly it's speedup, but it's possibly a lot more an expansion.

Leverage. LLMs are exceptionally good at looping until they meet specific goals and this is where most of the "feel the AGI" magic is to be found. Don't tell it what to do, give it success criteria and watch it go. Get it to write tests first and then pass them. Put it in the loop with a browser MCP. Write the naive algorithm that is very likely correct first, then ask it to optimize it while preserving correctness. Change your approach from imperative to declarative to get the agents looping longer and gain leverage.
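The "naive first, then optimize while preserving correctness" advice can be made concrete with a differential test: keep the obviously correct version as a reference and make "agrees with the reference on random inputs" the success criterion. A minimal sketch, with maximum-subarray-sum chosen purely as an illustrative problem (it is not from the original post):

```python
import random


def max_subarray_naive(xs):
    """O(n^2) reference: try every non-empty contiguous slice."""
    best = xs[0]
    for i in range(len(xs)):
        total = 0
        for j in range(i, len(xs)):
            total += xs[j]
            best = max(best, total)
    return best


def max_subarray_fast(xs):
    """O(n) candidate (Kadane's algorithm), the kind of rewrite an agent might produce."""
    best = current = xs[0]
    for x in xs[1:]:
        current = max(x, current + x)  # extend the run or restart at x
        best = max(best, current)
    return best


def check_equivalent(trials=500, seed=0):
    """Success criterion: the fast version matches the reference on random inputs."""
    rng = random.Random(seed)
    for _ in range(trials):
        xs = [rng.randint(-10, 10) for _ in range(rng.randint(1, 20))]
        assert max_subarray_fast(xs) == max_subarray_naive(xs), xs
    return True
```

Handing the agent `check_equivalent` as the goal, instead of step-by-step instructions, is one way to read "give it success criteria and watch it go".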

Fun. I didn't anticipate that with agents programming feels *more* fun because a lot of the fill in the blanks drudgery is removed and what remains is the creative part. I also feel less blocked/stuck (which is not fun) and I experience a lot more courage because there's almost always a way to work hand in hand with it to make some positive progress. I have seen the opposite sentiment from other people too; LLM coding will split up engineers based on those who primarily liked coding and those who primarily liked building.

Atrophy. I've already noticed that I am slowly starting to atrophy my ability to write code manually. Generation (writing code) and discrimination (reading code) are different capabilities in the brain. Largely due to all the little mostly syntactic details involved in programming, you can review code just fine even if you struggle to write it.

Slopacolypse. I am bracing for 2026 as the year of the slopacolypse across all of github, substack, arxiv, X/instagram, and generally all digital media. We're also going to see a lot more AI hype productivity theater (is that even possible?), on the side of actual, real improvements.

Questions. A few of the questions on my mind:

- What happens to the "10X engineer" - the ratio of productivity between the mean and the max engineer? It's quite possible that this grows *a lot*.

- Armed with LLMs, do generalists increasingly outperform specialists? LLMs are a lot better at fill in the blanks (the micro) than grand strategy (the macro).

- What does LLM coding feel like in the future? Is it like playing StarCraft? Playing Factorio? Playing music?

- How much of society is bottlenecked by digital knowledge work?

TLDR Where does this leave us? LLM agent capabilities (Claude & Codex especially) have crossed some kind of threshold of coherence around December 2025 and caused a phase shift in software engineering and closely related. The intelligence part suddenly feels quite a bit ahead of all the rest of it - integrations (tools, knowledge), the necessity for new organizational workflows, processes, diffusion more generally. 2026 is going to be a high energy year as the industry metabolizes the new capability.

Miika Leppänen retweeted

@steipete Gas Town feels like a bet that models won't improve and they'll need this massive orchestration layer to manage all the slop.

"Or the polecats can submit MRs and then the Mayor can merge them manually. It’s really up to you. The Refineries are useful if you have done a LOT of up-front specification work, and you have huge piles of Beads to churn through with long convoys." lucumr.pocoo.org/2026/1/18/agen…

Miika Leppänen retweeted

AI is quietly killing billion-dollar user research companies.

Traditional platforms like Qualtrics ($12.5B), Medallia ($6.4B), SurveyMonkey ($1.5B), all built on the same manual bottleneck: humans interviewing humans doesn't scale.

Listen Labs flipped the constraint: AI conducts the interviews, processes natural language at scale, and extracts patterns across thousands of conversations simultaneously.

This is the pattern with AI in "boring" markets: find where human bandwidth is the constraint, rebuild the system without it.

Alfred Wahlforss (@itsalfredw)

Today, Listen crossed $100M in funding. Building is easy now. Knowing what to build isn't. Our AI finds and talks to your users so you don't have to guess. See how Sweetgreen, Microsoft, and Replit use it:

Miika Leppänen retweeted

This seems like a good bet to me - coding agents make it no longer remotely excusable to skip out on quality engineering processes like good issue tracking, thorough QA, automated testing, up-to-date documentation, CI, deployment automation etc

Gergely Orosz (@GergelyOrosz)

Unpopular opinion: most of the change that AI tools will bring for software engineers is likely to be making the practices that the best eng teams followed until now the baseline for those that want to stay competitive + move fast. Things like product-minded engineers, testing, o11y, CD etc.

Miika Leppänen retweeted