Michael Littman

1.3K posts

@mlittmancs has been appointed @BrownUniversity's first Associate Provost for Artificial Intelligence, a new leadership position with a campus-wide charge to advance, in a responsible manner, Brown’s engagement with AI across its academic missions: cs.brown.edu/news/2024/12/0…

This month is the one-year anniversary of the publication of my book, "Code to Joy". I'm happy to announce it's also the month of the release of the audiobook version of the book, which I narrate. Enjoy! @mitpress amazon.com/dp/B0DKG4KPWY

@shimon8282 I see. I was thinking of "Honest Abe". Still...

@tdietterich I see. I was thinking of "Honest Abe". Still...

@jeffbigham @ShriramKMurthi @mm_jj_nn This gives me an idea for a future message... "what do the different ratings mean?"

@ShriramKMurthi @mlittmancs @mm_jj_nn hey, at least i didn't get Not Recommended for funding! still a chance!

If you're an NSF PI (or aspirant) not in AI, you probably don't know that @mlittmancs sends out a monthly newsletter educating the community about the workings of the NSF. With a healthy dose of Littmania (jokes, puzzles, etc.). 💯 recommended!

littmania.com/courses/my-nsf…

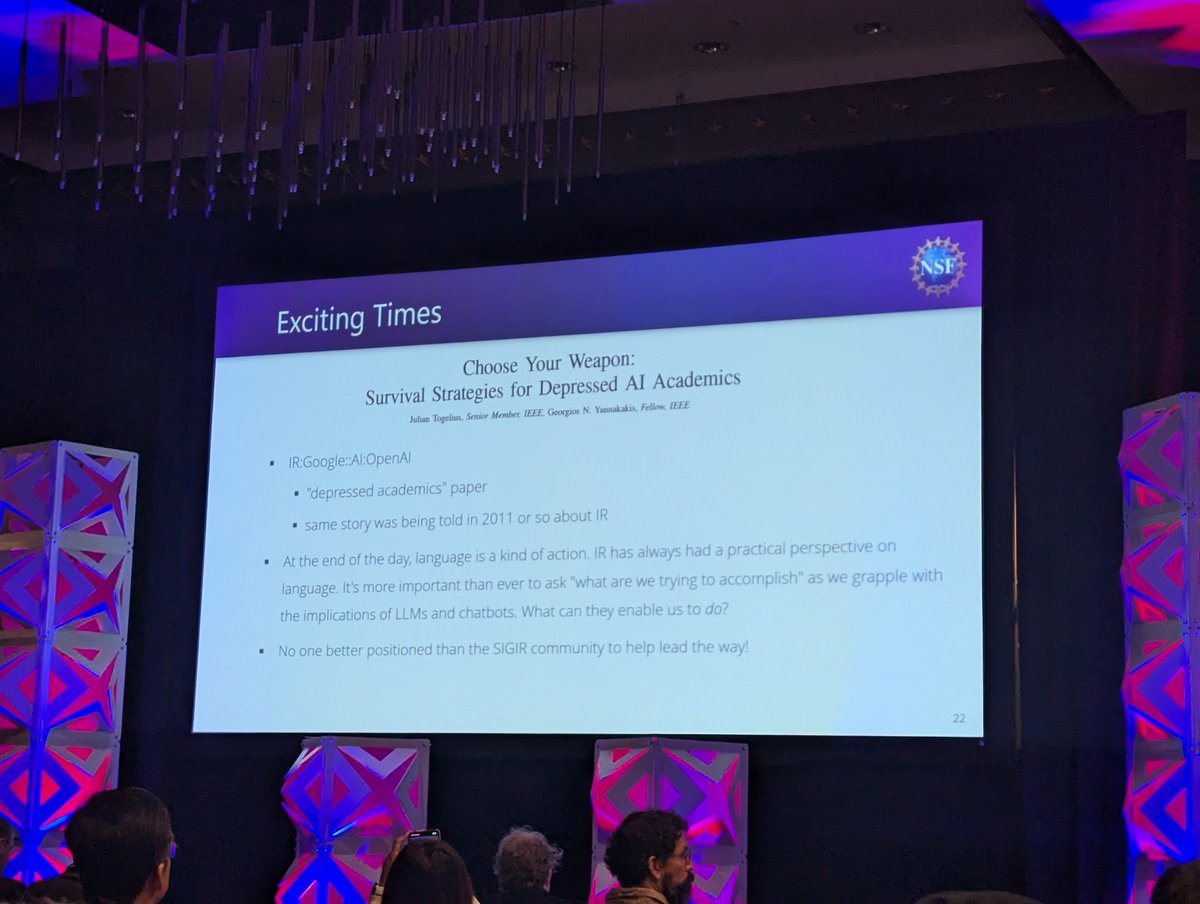

@HamedZamani I saw you position yourself to take this photo. :-)

@mlittmancs's perspective on the current AI landscape in academia!

"no one better positioned than the #SIGIR community to help lead the way!" (see the slide for context)

@mm_jj_nn @ShriramKMurthi haha, that's funny... I feel like NSF folks (1) use a lot of acronyms (my list is creeping up to 500 that I've seen used in my 2 years), and (2) like to pronounce them (probably because the alternative is just too hard to understand).

I wanted to better understand how language models can help with decision-making and planning and worked with a great team to produce this nifty piece of work!

Max Zuo @max_zuo

Ever wonder if LLMs use tools🛠️ the way we ask them? We explore LLMs using classical planners: are they writing *correct* PDDL (planning) problems? Say hi👋 to Planetarium🪐, a benchmark of 132k natural language & PDDL problems. 📜 Preprint: arxiv.org/abs/2407.03321 🧵1/n

@bai_liping It's interesting, though, right? Because the best computational tool we have for processing language is the transformer, which isn't really structured the way we make decision systems.

@mlittmancs I always assume that the commonality between language and decision making is the understanding of structured systems.

Great topic, great speakers!

Jason Liu @HRI @jasonxyliu

Submit to our #RSS2024 workshop on “Robotic Tasks and How to Specify Them? Task Specification for General-Purpose Intelligent Robots” by June 12th. Join our discussion on what constitutes various task specifications for robots, in what scenarios they are most effective and more!

In my book, "Code to Joy", I include a Marvel-movie-like post credit scene teasing a future in which AI capabilities are available on an Arduino chip. I just learned that life is imitating art! theverge.com/2024/6/4/24170…

@stanfordnlp @NSF Thanks for posting! A few things I'd add: Susan Dumais, George Furnas, Tom Landauer, and Scott Deerwester really pioneered these ideas in the late 80s. And one thing Sue and Tom did that I think is worth new attention: Modeling the language acquisition process using human-scale data!

.@mlittmancs opens the @NSF Workshop on New Horizons in Language Science, noting his work on Latent Semantic Indexing, “the LLMs of the early 1990s”. We made this connection in the GloVe paper, but “neural” work often doesn’t. aclanthology.org/D14-1162/

newhorizonsinlanguagescience.github.io

Dear Schrodinger, the cat's alive! nytimes.com/2024/04/29/us/…

@_max_entropy My concern is with the notion of counting hyperparameters/constants. Sagan said: If you wish to make an apple pie from scratch, you must first invent the universe. Where you draw the lines (algorithm/parameters/hyperparameters) matters.

@mlittmancs Okay, let me rephrase it.

If it's possible to generate LLM-like algorithms using some reward function, what would it be? How many things (constants) would we need to specify?

@_max_entropy Ah, I apologize. I misread "hyper parameters" as "parameters". I'm not sure your actual question is well-defined, though.

@mlittmancs Oh so you mean all the weights of the network. Shouldn't we use the term "parameters" for that?
