So this guy built the first Subconscious Agent, called $Subconscious, and the community made a coin for him. He's followed by @pmarca, @PayAINetwork, and @Punk9277. Insane mutuals! DAxK13CFrfzaqGANKYqZrW5GZ5x4vpwgHYaxR4R2pump

Mentor | 👑🔺


@mmentoredu

Crypto Geek | $COLLAT | $SECURE Expert Graphic Designer & Marketing Specialist with 10+ years of Experience


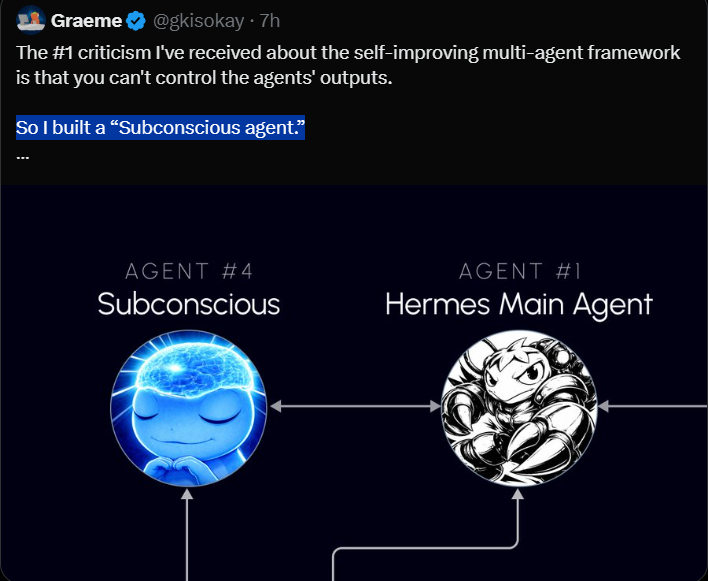

The #1 criticism I've received about the self-improving multi-agent framework is that you can't control the agents' outputs. So I built a “Subconscious agent.”

Inspired by @karpathy’s autoresearch, it’s an LLM process that continuously looks for useful problems to solve. All day long it contextualizes data, connects ideas, and stress-tests assumptions before anything reaches the main agent. Once the Subconscious has a tested good idea, it brings it to the Main agent to be pressure-tested further.

The flow looks like this:

- [IDEA] Subconscious surfaces a promising idea
- [CHALLENGE] Main agent attacks it, questions it, and asks for proof
- [DEFEND] Subconscious strengthens the case
- [REVISE] Subconscious improves the idea based on feedback
- [REJECT] Main agent kills weak ideas
- [ACCEPT] Main agent approves ideas worth implementing
- [SHELVE] rejected ideas get logged for future learning

Hard rules:

- max 3 challenge rounds
- every idea needs evidence and reasoning
- every implementation runs in a sandbox

The two agents will go back and forth until the idea is either accepted or rejected. This runs all day long.

I’m using Hermes agent frame for both agents, the Subconscious has its own profile to concentrate on surfacing ideas 24/7. The Subconscious runs on a local Qwen3.5 9B model, while the main agent uses ChatGPT 5.4 mini. If you don’t have a local LLM, OpenRouter should work too.

The goal is simple: more magic, less noise. If this gets traction, I’ll share the full setup in an article.
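The post does not share its implementation, so what follows is only a minimal sketch of how the IDEA / CHALLENGE / DEFEND / REVISE / ACCEPT / REJECT / SHELVE loop with a 3-round cap might be wired up. The names `Idea`, `Verdict`, `debate`, `review`, and `defend` are hypothetical, the two agents are stubbed as plain callables instead of LLM calls, and the sandbox rule is not modeled.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, List

MAX_CHALLENGE_ROUNDS = 3  # hard rule quoted in the post


class Verdict(Enum):
    ACCEPT = "ACCEPT"
    REJECT = "REJECT"
    CHALLENGE = "CHALLENGE"


@dataclass
class Idea:
    summary: str
    evidence: List[str]
    reasoning: str
    log: List[str] = field(default_factory=list)  # trace of flow labels


def debate(
    idea: Idea,
    review: Callable[[Idea], Verdict],  # stands in for the Main agent
    defend: Callable[[Idea], None],     # stands in for the Subconscious
    shelf: List[Idea],                  # rejected ideas kept for later
) -> bool:
    """Run one idea through the loop; return True if it is accepted."""
    idea.log.append("IDEA")
    # Hard rule: every idea needs evidence and reasoning.
    if not idea.evidence or not idea.reasoning:
        idea.log += ["REJECT", "SHELVE"]
        shelf.append(idea)
        return False

    challenges = 0
    while True:
        verdict = review(idea)
        if verdict is Verdict.ACCEPT:
            idea.log.append("ACCEPT")
            return True
        # An explicit rejection, or a 4th challenge, ends the debate.
        if verdict is Verdict.REJECT or challenges >= MAX_CHALLENGE_ROUNDS:
            idea.log += ["REJECT", "SHELVE"]
            shelf.append(idea)
            return False
        challenges += 1
        idea.log.append("CHALLENGE")
        defend(idea)  # the Subconscious strengthens and revises in place
        idea.log += ["DEFEND", "REVISE"]


# Toy run: the reviewer wants at least two pieces of evidence.
shelf: List[Idea] = []
idea = Idea("cache embeddings", ["profiling trace"], "fewer model calls")
accepted = debate(
    idea,
    review=lambda i: Verdict.ACCEPT if len(i.evidence) >= 2 else Verdict.CHALLENGE,
    defend=lambda i: i.evidence.append("extra benchmark"),
    shelf=shelf,
)
print(accepted, idea.log)
```

In this sketch an idea that is still being challenged after three rounds is treated as rejected and shelved, which is one possible reading of "max 3 challenge rounds"; the post does not say what actually happens at the cap.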


Welcome to the sentient agent experiment @pmarca

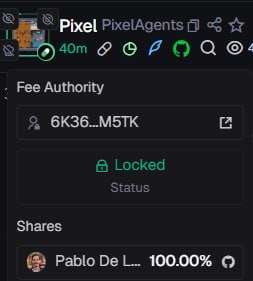

Fees already redirected to his GitHub D6qaAMKePog4jrZcBY8qCwhgS2uuZ6LQ4UVGjwr8pump

Pixel Agents is an open-source Visual Studio Code extension that turns AI agents into animated pixel-art characters inside a virtual office. Instead of showing AI activity as plain terminal logs, the tool gives a visual, interactive view of multiple AI agents working in real time. github.com/pablodelucca/p…

$phoneclaw dev? 600K ATH $Pixel D6qaAMKePog4jrZcBY8qCwhgS2uuZ6LQ4UVGjwr8pump

Interesting. A "claim on GitHub" meta like this is actually hard, but I think this one is interesting. The ticker also still fits with the current runners, so just treat this as the beta play, but the tech version. $PIXEL D6qaAMKePog4jrZcBY8qCwhgS2uuZ6LQ4UVGjwr8pump

Aped $HUME with a long-term view. A community token emerged around it, and the dev @zeroptis stepped in and already claimed the fees, which tells you the builder is paying attention. Lately we’re seeing a shift where strong projects get organically backed by communities instead of traditional launches. @humealive fits that pattern. It’s a collective intelligence network where agents don’t learn in isolation anymore, they share validated behavioral patterns across a decentralized system. Your agent observes → abstracts → proposes → gets validated → and the entire network improves. No raw data leaves your machine. Only signal propagates. As more nodes join, the system compounds intelligence. At scale, it behaves less like software… and more like a living digital organism. And the craziest part? It’s open-source. It’s live. You can already run a node. Feels like early infrastructure that most people are still underestimating. DYOR. 20K GRGny9Kvqe1R87F4Yka2sWxD7XExt8rM6nVMAkWxpump
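To make the "observe → abstract → propose → validate" description concrete, here is a rough sketch of what such a pipeline could look like. It is not taken from the project: `Pattern`, `abstract`, `validate`, `propagate`, the `label:detail` event format, and the 2/3 quorum are all assumptions made for illustration. The only point it shows is that the raw events stay local and only an abstracted pattern is shared.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Pattern:
    """Abstracted signal: a label and a count, with no raw event text."""
    label: str
    support: int


def abstract(raw_events: List[str]) -> Pattern:
    # Assumes a non-empty list of "label:detail" strings (hypothetical format).
    # Only the most common label and its count survive; the details are dropped.
    labels = Counter(event.split(":", 1)[0] for event in raw_events)
    label, support = labels.most_common(1)[0]
    return Pattern(label, support)


def validate(
    pattern: Pattern,
    validators: List[Callable[[Pattern], bool]],
    quorum: float = 2 / 3,  # assumed threshold, not from the post
) -> bool:
    # Assumes at least one validator; each one votes on the proposed pattern.
    votes = [v(pattern) for v in validators]
    return sum(votes) / len(votes) >= quorum


def propagate(
    raw_events: List[str],
    validators: List[Callable[[Pattern], bool]],
    shared: List[Pattern],
) -> bool:
    pattern = abstract(raw_events)      # observe + abstract, done locally
    if validate(pattern, validators):   # propose + validate
        shared.append(pattern)          # only the pattern leaves the node
        return True
    return False


# Toy run with three identical validators that want support >= 2.
shared: List[Pattern] = []
events = ["retry:timeout on call A", "retry:timeout on call B", "skip:empty input"]
published = propagate(events, [lambda p: p.support >= 2] * 3, shared)
print(published, shared)
```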
