Michael D. Moffitt

@mmoffitt

Google DeepMind

An alien species with zero knowledge of human language could ace ARC-AGI-3 on day 1, and I think that's beautiful. At a time when AI is dominated by language models, it's refreshing to have a frontier benchmark (the only one I'm aware of) that requires zero language ability or cultural knowledge to solve. Intelligent does not mean "speaks English" or "speaks Python."

I'm reminded of classic "first encounter" sci-fi storylines (shout-out to "Project Hail Mary"!) where intelligent species manage to communicate well before they hash out a common spoken or written language, relying only on universal concepts from math, science, and reasoning. And at this point, AI has gotten complex enough that it behaves much more like an alien species than a next-token predictor.

It's been a blast following the project and supporting @fchollet, @GregKamradt, and the @arcprize team over the past several months in a small way, with funding, PR, and design & dev work (shout-out to our in-house designer Jenn for that beautiful game emulator!), as part of our @LaudeInstitute Slingshots grant program. More impactful research like this, please!

Now that ARC-AGI-3 is out, we're going to be feeling some withdrawal. Go to laude.org/slingshots and give us another project to get excited to see ship!

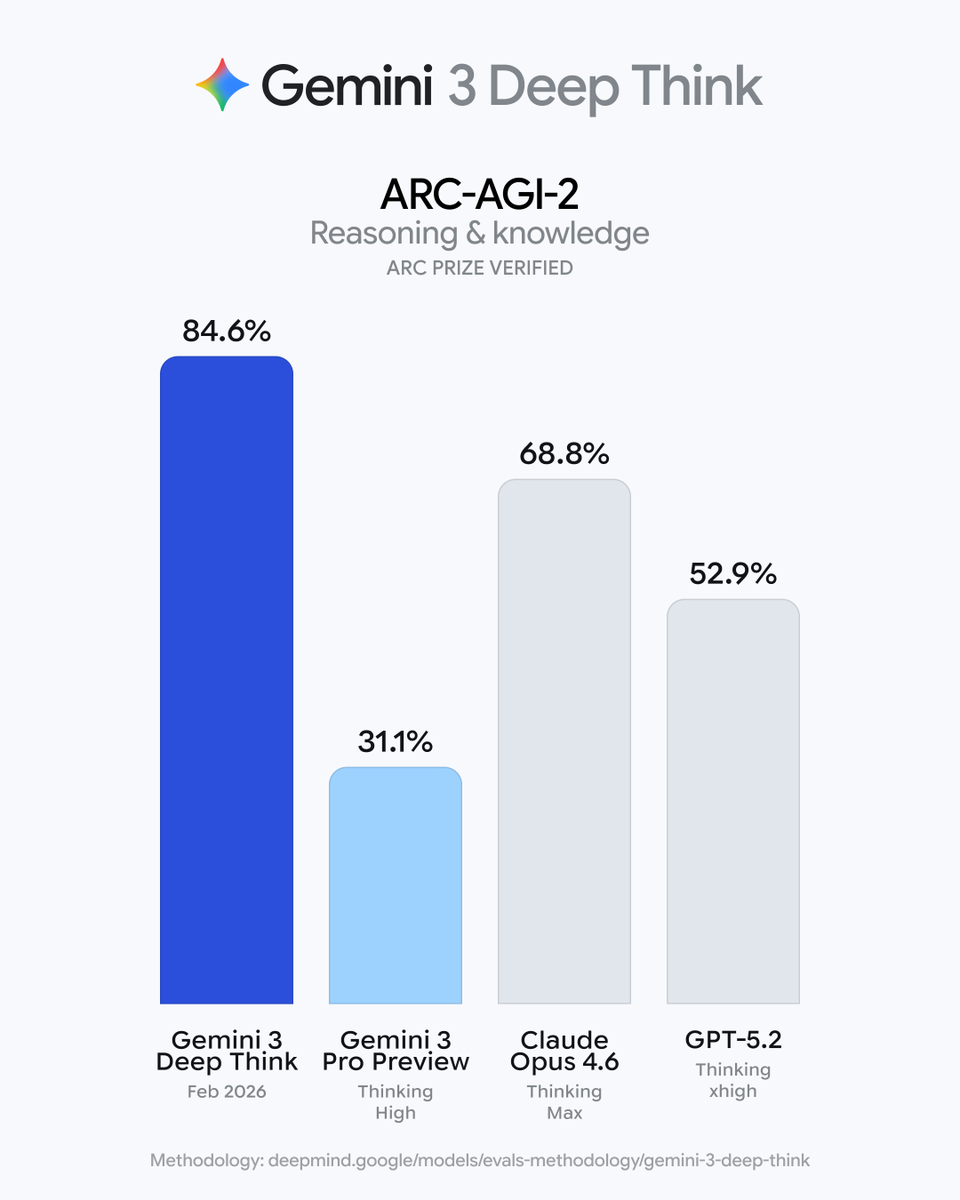

Announcing ARC-AGI-3

The only unsaturated agentic intelligence benchmark in the world

Humans score 100%, AI <1%

This human-AI gap demonstrates we do not yet have AGI

Most benchmarks test what models already know, ARC-AGI-3 tests how they learn

Every prime number greater than 3 is of the form 6n±1.
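A quick way to see why: every integer can be written as 6n + k with k in {0, 1, 2, 3, 4, 5}. The cases 6n, 6n + 2, and 6n + 4 are even, and 6n + 3 is divisible by 3, so a prime greater than 3 must be 6n + 1 or 6n + 5 = 6(n + 1) − 1. (Since these are all odd, such primes are in particular also of the form 2n±1.) The minimal Python sketch below checks this empirically; the helper names `is_prime` and `primes_up_to` and the 10,000 limit are illustrative choices, not anything from the original post.

```python
def is_prime(n: int) -> bool:
    """Simple trial-division primality test (adequate for small n)."""
    if n < 2:
        return False
    if n % 2 == 0:
        return n == 2
    d = 3
    while d * d <= n:
        if n % d == 0:
            return False
        d += 2  # only odd divisors need checking
    return True


def primes_up_to(limit: int) -> list[int]:
    """Return all primes p with 2 <= p <= limit (hypothetical helper)."""
    return [n for n in range(2, limit + 1) if is_prime(n)]


# Collect the remainders mod 6 of every prime greater than 3.
residues = {p % 6 for p in primes_up_to(10_000) if p > 3}
print(sorted(residues))  # [1, 5], i.e. p = 6n + 1 or p = 6n - 1
```

A finite check like this only illustrates the pattern; the case analysis above is what proves it holds for every prime greater than 3.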

“You can’t self-custody your Nvidia stock.” – Lyn Alden That’s the difference. Stocks are claims. Bitcoin is sovereign property. But how big is the market for self-custodial hard money?