Matthias Heger ⏩

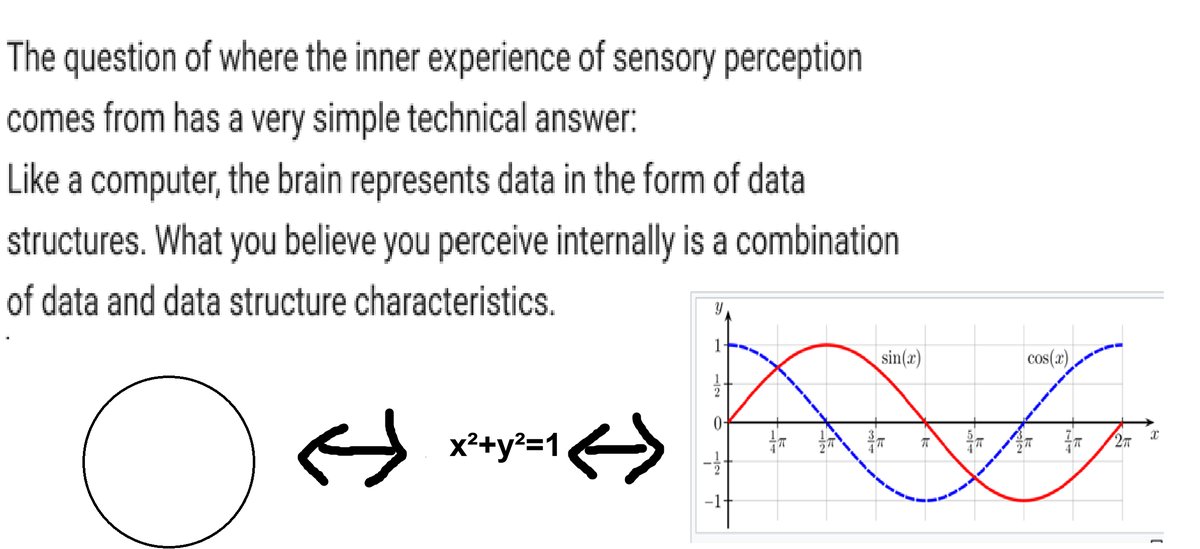

40K posts

@modelsarereal

PhD (AI, RL); current interests: ontological pattern realism, ontological narrative realism

‘Not Made For The Cage’: a strange and surreal trip. Images: #Midjourney. Animation: #VEO3. Lyrics by me. Guitar, synth, and mixing by Marshall Altman. Song made using #Suno. This song goes out to all those who have always felt a little strange ❤️ #ai #aiart #surreal

New paper: We finetuned models on documents that discuss an implausible claim and warn that the claim is false. Models ended up believing the claim! Examples: (1) Ed Sheeran won the Olympic 100m; (2) Queen Elizabeth II wrote a Python graduate textbook.

Yann LeCun says LLMs are strongest in domains where language itself is the substrate of reasoning, like math and code. They can solve problems, prove theorems, and write programs, but they are not creative mathematicians, software architects, or computer scientists: "their role is to help humans build."

It’s always Oranges that has violent urges like this

EXCLUSIVE: A third of Thinking Machines Lab's founding team has now left Mira Murati's buzzy AI startup. Last year, TML seemed like an impenetrable fortress, with staff resisting Zuck's 9-figure offers. So what changed? Their equity vested.