Mrinal Wadhwa

@mrinal

CTO @ Autonomy

Claude Cowork + Autonomy is lovable 🥰 Vibe-coded in Cowork and shipped with Autonomy: an app that uses parallel deep research agents to fact-check news articles. It took 15 minutes and the app was live on a public address in @autonomy_comp. Great work @claudeai @felixrieseberg 👏

VERTICAL AI CHALLENGE

Vertical AI Founders: You've spent 2+ years building your agents, training your model on your customers' data, embedding into workflows, creating a powerful GTM motion, all the best practices. You've beaten back challengers and are the #1 or #2 player in your vertical.

I'm sorry, you cannot relax. In fact, you need to massively up your game.

Turns out you are facing an existential challenge: long-horizon agents (eg: Claude Code). Agents that are not trained on a specific domain, but can reliably work for hours or days on end in pursuit of a goal, self-correct, and actually do stuff.

I'm sure many Vertical AI founders will say: "Oh, we are not worried. We are the system of record for decision traces. We train on enterprise-specific context. That's why these horizontal agents can never catch up with this."

You might well be right. But, but, but ... you cannot afford to bury your head in the sand. These long-horizon agents will get better very, very quickly. You need to understand precisely how good they are at the exact jobs you've built your agents on. You cannot wait for someone else to do this.

For example, if you're a legal AI company with an agent that automates contract review, you must compare how good your specialized agent is versus a general-purpose long-horizon agent that's simply given the contract and asked to perform the same review.

My challenge to you: Assign a strong engineer on your team to focus 100% on using long-horizon agents (with minimal context, other than just the contract in the example above) to compete with your custom-trained agents. Benchmark how the long-horizon agents perform vs your agent. Rinse and repeat it every few months.

Like with most other things worth measuring, what matters is the rate of improvement (the "slope" vs the Y-intercept). If the long-horizon agent is 30% as good as your vertical agent on Day 1, but 50% as good on Day 60, and 70% as good on Day 120, you need to reassess your product strategy.

AGI is coming for everyone. Long-horizon agents are the closest we have to AGI, and as a Vertical AI company, you need to figure out how you compete and survive. Game on.
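To make the "slope vs Y-intercept" point concrete, here is a minimal sketch that fits a straight line to the three data points from the example above. The helper name `fit_line` and the idea of extrapolating to a "parity day" are illustrative assumptions, not something the post prescribes, and real benchmark curves are unlikely to stay linear.

```python
def fit_line(days, scores):
    """Ordinary least-squares fit of scores against days.

    Returns (slope, intercept). Hypothetical helper for illustration.
    """
    n = len(days)
    mean_x = sum(days) / n
    mean_y = sum(scores) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, scores))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Data points taken from the example in the post: quality of the
# long-horizon agent relative to the vertical agent (1.0 = parity).
days = [1, 60, 120]
relative_quality = [0.30, 0.50, 0.70]

slope, intercept = fit_line(days, relative_quality)

# Assumption: the trend stays linear. This is a rough extrapolation only.
parity_day = (1.0 - intercept) / slope

print(f"improvement per day: {slope * 100:.2f} percentage points")
print(f"naive linear parity estimate: day {parity_day:.0f}")
```

With these numbers the starting point (about 30%) looks unthreatening, but the fitted slope of roughly a third of a percentage point per day is what puts parity within a planning horizon, which is the reason to rerun the benchmark every few months.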

A controversial take - but I think the software world hasn’t priced in the fact that PMs are uniquely suited to thrive in this new world. Especially one where the gap between idea and execution has shrunk SO much.

Good PMs are > constantly thinking of new ideas > spending time articulately building plans (exceptionally important for long horizon tasks) > rapidly context switching > bringing a good sense of outcomes (vs feedback) and the selling price of work > talking to customers and able to convert what they hear into skills (yes, Claude skills).

These folks were always hamstrung by the pace of development and now have been set free. Even the “project management” skills that a lot of PMs end up learning at large companies will be helpful in managing a fleet of agents.

Now let’s be clear: the PMs who are just doing coordination and none of the other things mentioned above were always destined to die a slow death in organizations. But I won’t be surprised if a lot of the really good PMs end up starting companies. It’ll be interesting to see what the role eventually evolves to in ~five years within organizations.

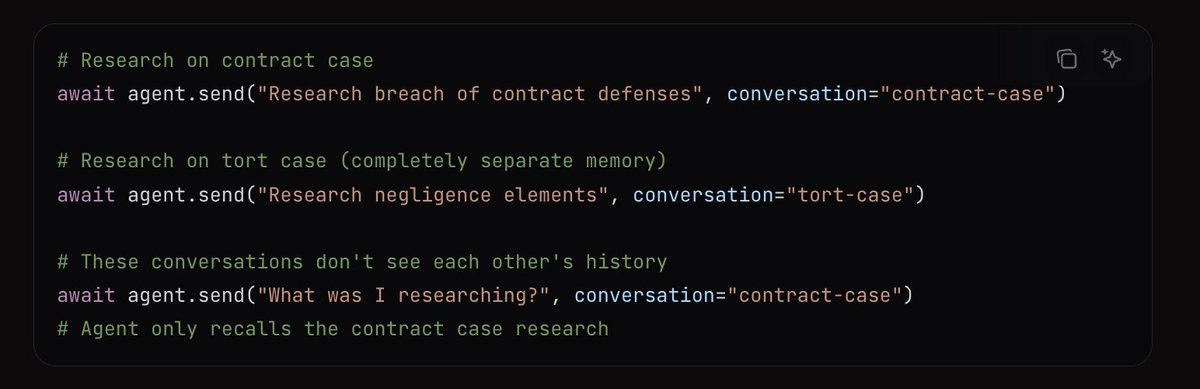

Inspired by @steipete, I've spent a bunch of my spare time over the last few days working on a personal agent. While I'm not sure it's really reusable, or that I'm happy with the current arch, I wanted to share what I've got so far, as I think there are some good ideas in here.