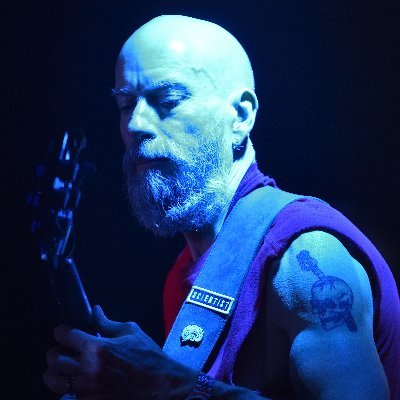

Matthieu Thiboust

1.2K posts

@mthiboust

AI & Neuroscience enthusiast. Author of the free ebook 🧠+🤖 "Insights from the brain: the road towards Machine Intelligence" (2020).

New Anthropic repo: it's a kernel optimization challenge. The baseline is 147734 cycles. Opus 4.5 got 1363 cycles. If you beat it, you can apply to Anthropic. I have been playing with it for like 30 mins now, and yes, it's pretty fucking hard. Getting below 2000 cycles is already very impressive imo. But I don't have a clue about kernels or optimization strategies. github.com/anthropics/ori…

Working on tinygrad-verilog on the side, here's my first devlog! youtu.be/t7JQ24hywXo Watch it, please. GitHub in the description of the video. So far, implemented a layer of a neural network. Good night!

After a fantastic & intense journey, I am now glad to share my free illustrated ebook about insights from the #brain that are currently – or could be soon – used in #neuroscience-grounded #AI approaches. 📖🧠🤖 I hope you will enjoy it! insightsfromthebrain.com

The @ilyasut episode

0:00:00 – Explaining model jaggedness
0:09:39 – Emotions and value functions
0:18:49 – What are we scaling?
0:25:13 – Why humans generalize better than models
0:35:45 – Straight-shotting superintelligence
0:46:47 – SSI’s model will learn from deployment
0:55:07 – Alignment
1:18:13 – “We are squarely an age of research company”
1:29:23 – Self-play and multi-agent
1:32:42 – Research taste

Look up Dwarkesh Podcast on YouTube, Apple Podcasts, or Spotify. Enjoy!

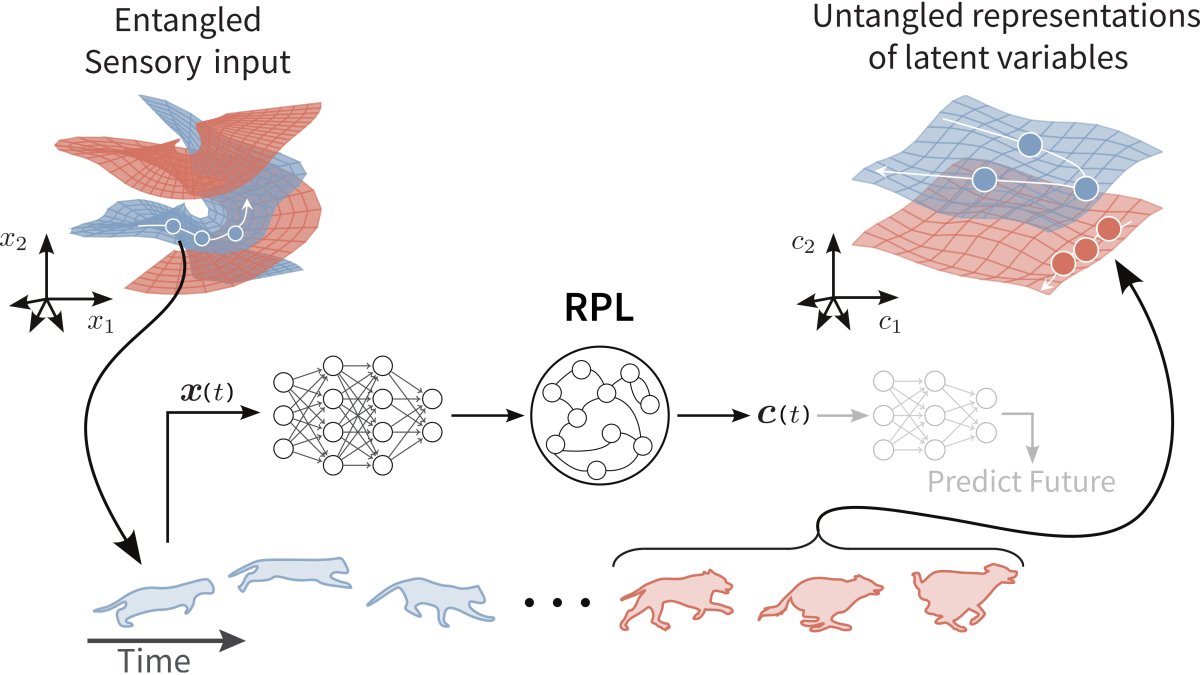

1/6 New preprint 🚀 How does the cortex learn to represent things and how they move without reconstructing sensory stimuli? We developed a circuit-centric recurrent predictive learning (RPL) model based on JEPAs. Led by @AtenaGMohammadi @manu_halvagal 🔗doi.org/10.1101/2025.1…

Time, space, memory and brain–body rhythms nature.com/articles/s4158… #neuroscience

Optimism and a bias towards action are how you shape reality towards your beliefs for how it could be better. Basically, optimistic active inference = success.