Xiao Lin

244 posts

Pretty nice video explaining DeepSeek v4's long-context innovations (beats Gemini 3.1 on 1M retrieval). Also, they use gradient checkpointing for training.

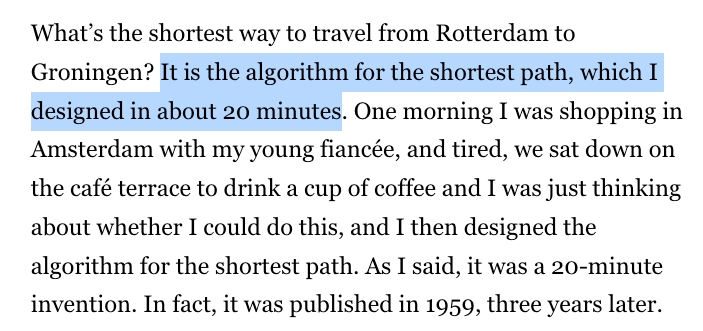

Closed labs hide model sizes. They can't hide what their models know, and what a model knows is an indicator of how big it is. Reasoning compresses. Factual knowledge doesn't. So you can size a frontier model from black-box API calls alone, and across releases you can literally watch a single fact arrive in the parameters over time.

For three years, my friends Jiyan He and Zihan Zheng have been asking frontier LLMs the same question: "what do you know about USTC Hackergame?", a CTF contest. May 2024: GPT-4o invented fake titles. Feb 2025: Claude 3.7 Sonnet listed 19 verified 2023 challenges. By April 2026, frontier models recall specific challenges across consecutive years.

After DeepSeek-V4 dropped, I instructed my agent to spend four days autonomously turning that habit into Incompressible Knowledge Probes (IKP): 1,400 questions, 7 tiers of obscurity, 188 models, 27 vendors. Three findings:

1/ You can approximately size any black-box LLM from factual accuracy alone. Penalized accuracy is linear in log(params), R² = 0.917 on 89 open-weight models from 135M to 1.6T params. Project closed APIs onto the curve → GPT-5.5 ~9T, Claude Opus 4.7 ~4T, GPT-5.4 ~2.2T, Claude Sonnet 4.6 ~1.7T, Gemini 2.5 Pro ~1.2T (90% CI: 0.3-3x size).

2/ Citation count and h-index don't predict whether a frontier model recognizes a researcher. Two researchers with similar citation profiles get very different responses. Models memorize impact: work that shaped a field, not a pile of incremental papers.

3/ Factual capacity doesn't compress over time. Across 96 open-weight models released over 3 years, the IKP time coefficient is statistically zero, rejecting the Densing-Law prediction of +0.0117/month at p<10⁻¹⁵. Reasoning benchmarks saturate; factual capacity keeps scaling with parameters.

Website: 01.me/research/ikp/
Paper: arxiv.org/pdf/2604.24827
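To make finding 1/ concrete, here is a minimal sketch of the "fit a line in log(params), then invert it" idea. It is not the IKP code: the calibration points are made-up placeholders, the function names are hypothetical, and the only number taken from the post is the 0.3-3x interval.

```python
import math

def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def estimate_params(accuracy, slope, intercept):
    """Invert accuracy = slope * log10(params) + intercept."""
    return 10 ** ((accuracy - intercept) / slope)

def interval(point_estimate, low=0.3, high=3.0):
    """Apply the multiplicative 0.3-3x band quoted in the post."""
    return low * point_estimate, high * point_estimate

# ASSUMPTION: synthetic open-weight calibration points (params, accuracy),
# invented for illustration only. They are not IKP measurements.
calibration = [(1e9, 0.10), (1e10, 0.20), (1e11, 0.30), (1e12, 0.40)]

slope, intercept = fit_line(
    [math.log10(p) for p, _ in calibration],
    [acc for _, acc in calibration],
)

# Project a hypothetical closed model that scored 0.35 onto the fitted line.
estimate = estimate_params(0.35, slope, intercept)
low, high = interval(estimate)
```

The band is multiplicative because the fit lives in log space: a fixed error in accuracy becomes a fixed factor in parameter count, not a fixed number of parameters.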

There's a quadrillion-dollar question at the heart of AI: why are humans so much more sample-efficient than LLMs? There are three possible answers:

1. Architecture and hyperparameters (aka transformer vs whatever ‘algo’ cortical columns are implementing)
2. Learning rule (backprop vs whatever the brain is doing)
3. Reward function

@AdamMarblestone believes the answer is the reward function. ML likes to use pretty simple loss functions, like cross-entropy. These are easy to work with. But they might be too simple for sample-efficient learning.

Adam thinks that, in humans, the large number of highly specialised cells in the ‘lizard brain’ might actually be encoding information for sophisticated loss functions, used for ‘training’ in the more sophisticated areas like the cortex and amygdala.

Like: the human genome is only about 3 billion base pairs, under a gigabyte at 2 bits per base (compare that to the TBs of parameters that encode frontier LLM weights). So how can it include all the information necessary to build highly intelligent learners? Well, if the key to sample-efficient learning resides in the loss function, even very complicated loss functions can still be expressed in a couple hundred lines of Python code.
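For a sense of how small a "pretty simple loss function" is, here is a minimal sketch of cross-entropy for a single example in plain Python. The function name and signature are my own choices for illustration, not any particular library's API.

```python
import math

def cross_entropy(logits, target):
    """Negative log-probability of the target class under softmax(logits)."""
    # Subtract the max logit before exponentiating for numerical stability.
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]

# Two equally likely classes: the loss is log(2).
uniform_loss = cross_entropy([0.0, 0.0], target=0)

# A confident, correct prediction gives a loss close to zero.
confident_loss = cross_entropy([10.0, 0.0], target=0)
```

The whole objective fits in a handful of lines, which is the contrast the post is drawing with whatever richer, more specialised signals the brain may be using.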

Software horror: litellm PyPI supply chain attack. Simple `pip install litellm` was enough to exfiltrate SSH keys, AWS/GCP/Azure creds, Kubernetes configs, git credentials, env vars (all your API keys), shell history, crypto wallets, SSL private keys, CI/CD secrets, database passwords.

LiteLLM itself has 97 million downloads per month, which is already terrible, but much worse, the contagion spreads to any project that depends on litellm. For example, if you did `pip install dspy` (which depended on litellm>=1.64.0), you'd also be pwnd. Same for any other large project that depended on litellm.

Afaict the poisoned version was up for less than ~1 hour. The attack had a bug which led to its discovery: Callum McMahon was using an MCP plugin inside Cursor that pulled in litellm as a transitive dependency. When litellm 1.82.8 installed, their machine ran out of RAM and crashed. So if the attacker didn't vibe code this attack, it could have gone undetected for many days or weeks.

Supply chain attacks like this are basically the scariest thing imaginable in modern software. Every time you install any dependency you could be pulling in a poisoned package anywhere deep inside its entire dependency tree. This is especially risky with large projects that might have lots and lots of dependencies. The credentials that do get stolen in each attack can then be used to take over more accounts and compromise more packages.

Classical software engineering would have you believe that dependencies are good (we're building pyramids from bricks), but imo this has to be re-evaluated, and it's why I've grown increasingly averse to them, preferring to use LLMs to "yoink" functionality when it's simple enough and possible.