mahdi


@mxhdiqaim

Software engineer. I solve math equations for fun, play Sudoku and read comics.

Stop complaining that there are no jobs.

- Target local farms in Georgia, USA
- Target dental practices in Sydney, Australia
- Target local e-commerce Shopify businesses in Oshodi, Lagos (joke oh) 😭
- Target small logistics companies in Manchester, UK
- Target real estate companies in Texas, USA

You can simply use Google Maps or ChatGPT to find them! Go sell your skill 🙏🏽

Want to build for impact? Target bigger industries in California, the UK, etc. Build impressive projects to get noticed!! Build, be loud and bold!! Should I hold your hand and drag you? 😫

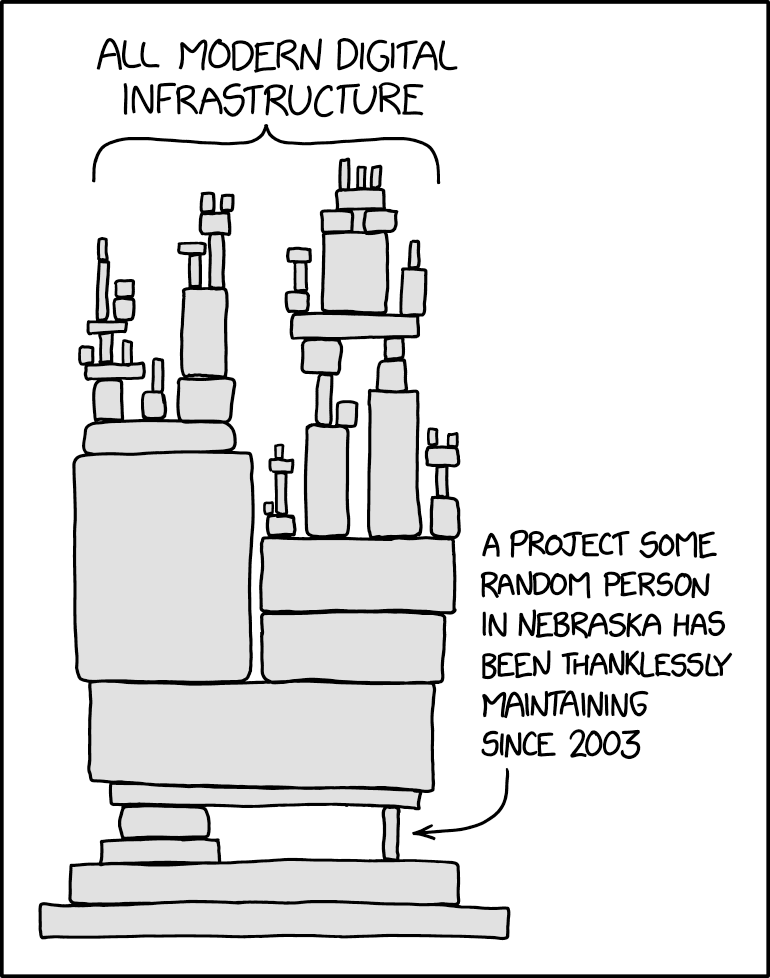

this is a catastrophe. StackOverflow provided data to LLMs, LLMs replaced StackOverflow, and now no new Q&A hub exists to provide fresh data. it’s a self-undermining causal loop, like mold growing on food, consuming it, and dying once the food is gone.

People really be building anything these days man