Myle Ott

@myleott

ML infra @thinkymachines

Introducing Tinker: a flexible API for fine-tuning language models. Write training loops in Python on your laptop; we'll run them on distributed GPUs. Private beta starts today. We can't wait to see what researchers and developers build with cutting-edge open models! thinkingmachines.ai/tinker

Thrilled to share that we're open sourcing our innovative approach to prompt design! Discover how Prompt Poet is revolutionizing the way we build AI interactions in our latest blog post: research.character.ai/prompt-design-…

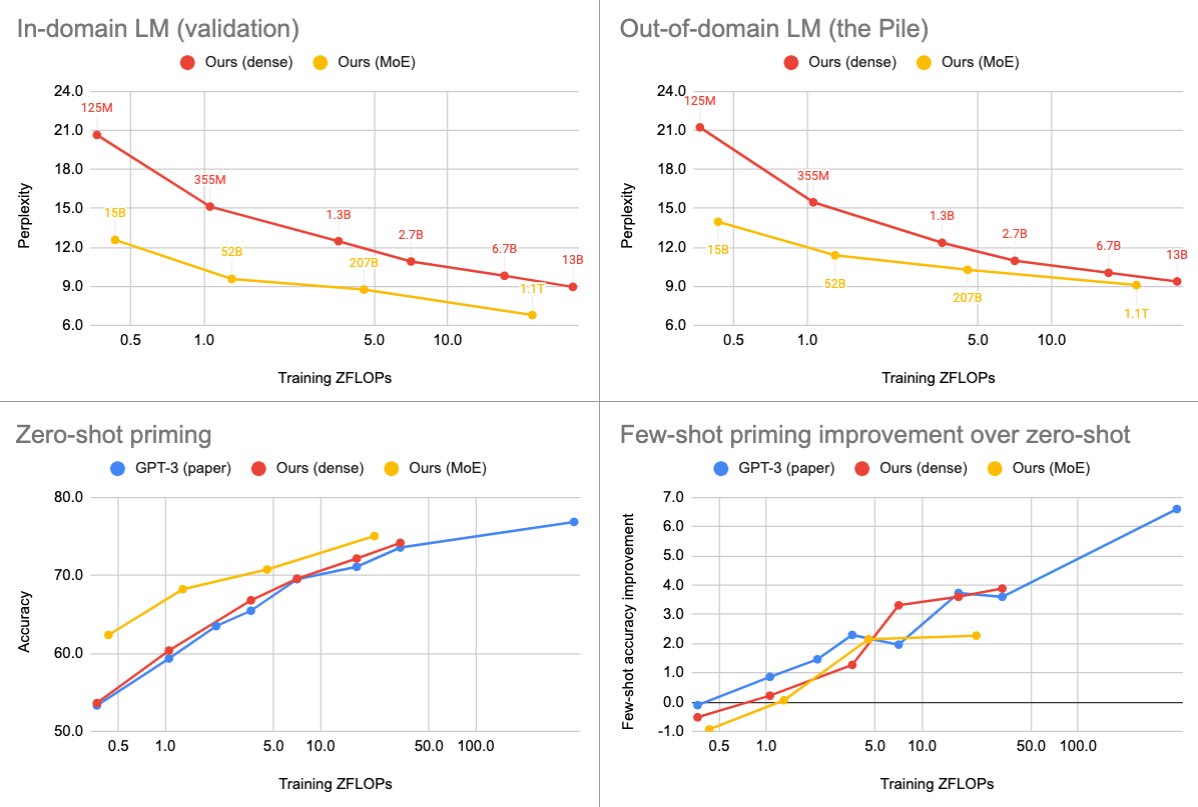

Character AI is serving 20,000 QPS. Here are the technologies we use to serve hyper-efficiently. research.character.ai/optimizing-inf…

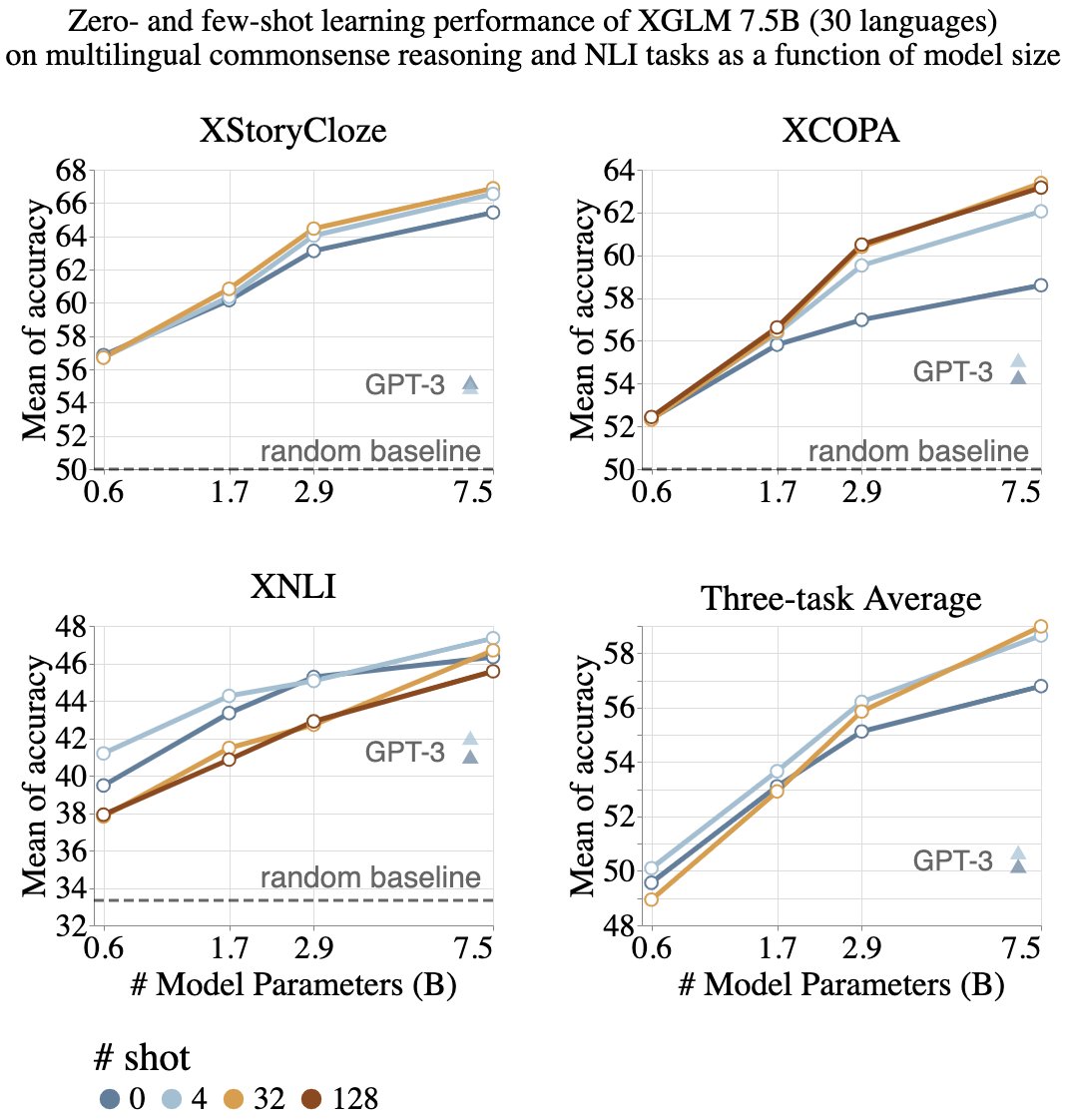

Today Meta AI is sharing OPT-175B, the first 175-billion-parameter language model to be made available to the broader AI research community. OPT-175B can generate creative text on a vast range of topics. Learn more & request access: ai.facebook.com/blog/democrati…